6 Tips to Maximize Llama3 70B Performance on NVIDIA RTX A6000 48GB

Introduction

Welcome, fellow AI enthusiasts, to the world of local LLMs. Imagine the power to run your own language models right on your machine, without relying on cloud services. This opens up possibilities for developers and researchers, from building intelligent applications to exploring the inner workings of these neural networks.

In this guide, we'll dive deep into the performance of Llama3 70B on the NVIDIA RTX A6000 48GB. With 48 GB of memory on a single card, this workstation GPU is a solid choice for running demanding AI models like Llama3. We'll analyze key metrics like token generation speed and provide practical tips for optimizing your setup.

Performance Analysis: Token Generation Speed Benchmarks

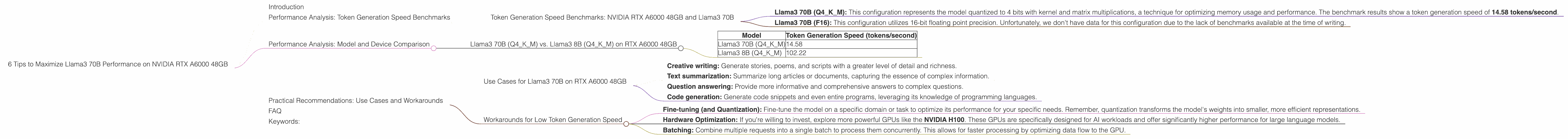

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 70B

Token generation speed is a crucial metric for evaluating the performance of LLMs. It measures how many new tokens the model produces per second once it starts answering, which directly impacts responsiveness and user experience.
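To make the metric concrete, here is a minimal sketch of how tokens per second can be measured around any streaming generator. The `fake_generate` function is a hypothetical stand-in for a real model call (it is not part of any library) and simply sleeps between tokens, so the number it prints says nothing about Llama3 itself.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_tokens_per_second(
    generate: Callable[[str], Iterable[str]], prompt: str
) -> float:
    """Consume a streaming generator and return generated tokens per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate(prompt))  # count tokens as they arrive
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields 20 tokens, 5 ms apart."""
    for i in range(20):
        time.sleep(0.005)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate, "Hello")
print(f"{rate:.1f} tokens/second")
```

Swapping `fake_generate` for a function that streams tokens from your own runtime gives a number you can compare against the benchmarks in this article.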

Our performance analysis covers two configurations of Llama3 70B on the RTX A6000 48GB:

- Llama3 70B (Q4KM): This configuration uses Q4_K_M, a 4-bit "k-quant" format from llama.cpp (the M marks the medium variant). Storing the weights in about 4 bits each cuts memory usage and speeds up generation. The benchmark results show a token generation speed of 14.58 tokens/second.

- Llama3 70B (F16): This configuration uses 16-bit floating point precision. We don't have data for it, as no benchmarks were available at the time of writing. That is not surprising: 70 billion parameters at 2 bytes each come to about 140 GB of weights, far more than the card's 48 GB.
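A rough back-of-the-envelope calculation shows why the two configurations behave so differently on a 48 GB card. This sketch assumes a nominal 70 billion parameters and about 4.8 bits per weight for the 4-bit build (an approximation, since k-quant formats store scales alongside the weights); it counts weights only and ignores the KV cache and activations.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone, in GB."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 70e9      # nominal parameter count (assumption)
VRAM_GB = 48         # RTX A6000 memory
BITS_F16 = 16
BITS_Q4 = 4.8        # assumed average bits per weight for the 4-bit build

f16_gb = weight_memory_gb(N_PARAMS, BITS_F16)
q4_gb = weight_memory_gb(N_PARAMS, BITS_Q4)

for name, size in [("F16", f16_gb), ("4-bit", q4_gb)]:
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the 4-bit weights leave only a few gigabytes of headroom for context, so long prompts can still push the card to its limit.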

Why is token generation speed important? Imagine you're using a chatbot: the faster it can process information and respond, the more engaging and natural the conversation feels.

Performance Analysis: Model and Device Comparison

Llama3 70B (Q4KM) vs. Llama3 8B (Q4KM) on RTX A6000 48GB

Let's compare Llama3 70B with a smaller, lighter model – Llama3 8B – on the same device. This will give you a better understanding of how model size affects performance.

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B (Q4KM) | 14.58 |
| Llama3 8B (Q4KM) | 102.22 |

As you can see, Llama3 8B generates tokens roughly seven times faster. This is expected: every generated token requires reading the model's weights from GPU memory, and a smaller model has far fewer weights to read and far less arithmetic to do per token.
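To put the two figures in perspective, the short sketch below converts them into a speed ratio and into waiting time for a single reply. The 500-token reply length is an arbitrary assumption chosen for illustration; the speeds are the benchmark values from the table.

```python
# Benchmark speeds from the table, in tokens/second (both 4-bit builds).
SPEEDS = {"Llama3 70B": 14.58, "Llama3 8B": 102.22}

REPLY_TOKENS = 500  # hypothetical reply length, for illustration only


def seconds_for_reply(tokens_per_second: float, n_tokens: int) -> float:
    """Time to generate n_tokens at a steady generation speed."""
    return n_tokens / tokens_per_second


ratio = SPEEDS["Llama3 8B"] / SPEEDS["Llama3 70B"]
print(f"8B is {ratio:.1f}x faster than 70B")

for model, tps in SPEEDS.items():
    wait = seconds_for_reply(tps, REPLY_TOKENS)
    print(f"{model}: {wait:.1f} s for a {REPLY_TOKENS}-token reply")
```

For a reply of that length, the 70B model takes about 34 seconds while the 8B model finishes in about 5.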

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on RTX A6000 48GB

Now, you might be wondering, "If Llama3 70B is slower, why bother with it?" Great question! Although it has a lower token generation speed than its smaller sibling, Llama3 70B has far more parameters (70 billion versus 8 billion), which generally lets it follow instructions more reliably and generate more complex and nuanced responses.

This makes it well-suited for tasks that require a deeper understanding of language, such as:

- Creative writing: Generate stories, poems, and scripts with a greater level of detail and richness.

- Text summarization: Summarize long articles or documents, capturing the essence of complex information.

- Question answering: Provide more informative and comprehensive answers to complex questions.

- Code generation: Generate code snippets and even entire programs, leveraging its knowledge of programming languages.

Workarounds for Low Token Generation Speed

Since Llama3 70B can be resource-intensive, you might encounter performance limitations. Here are some workarounds you can try:

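- Fine-tuning (and Quantization): Fine-tune the model on a specific domain or task to improve output quality for your needs. Keep in mind that fine-tuning alone does not raise tokens per second. Quantization is what helps with speed and memory: it transforms the model's weights into smaller, more efficient representations, as in the Q4KM build benchmarked above.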

- Hardware Optimization: If you're willing to invest, explore more powerful GPUs like the NVIDIA H100. These GPUs are specifically designed for AI workloads and offer significantly higher performance for large language models.

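- Batching: Combine multiple requests into a single batch so the GPU processes them together. This raises total throughput across all requests, because the weights are read once per step for the whole batch, although each individual response does not arrive any faster.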
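The batching idea can be sketched in a few lines. The helper below only groups prompts into fixed-size batches; `make_batches` is a hypothetical name, and how a batch is actually submitted depends on your inference runtime, so that step is left as a placeholder print.

```python
from typing import Iterator, List, Sequence


def make_batches(prompts: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive groups of at most batch_size prompts."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(prompts), batch_size):
        yield list(prompts[start:start + batch_size])


prompts = [f"Summarize document {i}" for i in range(5)]

for batch in make_batches(prompts, batch_size=2):
    # Placeholder: a real setup would hand the whole batch to the runtime here.
    print(f"Submitting {len(batch)} prompts together: {batch}")
```

A larger batch size improves throughput but also needs more memory for context, which is already tight with a 70B model on a 48 GB card.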

FAQ

Q: What is quantization?

A: Quantization is a technique used to optimize models for performance and memory usage. It converts the model's weights (the numbers that represent its knowledge) from high-precision floating-point numbers to lower-precision integers (such as 4-bit values). This reduces the amount of memory required and speeds up processing, at the cost of a small loss in accuracy.
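As an illustration of the idea, here is a deliberately simplified sketch of symmetric 4-bit quantization for one small block of weights. It is not the actual Q4KM format, which is more elaborate; the function names and the sample weights are made up for this example.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1                    # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44]  # made-up sample values
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)

print("integers:", quantized)
print("largest error:", max(abs(w - r) for w, r in zip(weights, restored)))
```

Each weight now needs only 4 bits plus a share of the single scale value, and the rounding error per weight stays within half a quantization step.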

Q: How does the RTX A6000 perform in general for AI workloads?

A: The RTX A6000 is a powerful GPU designed for demanding AI applications, including large language models, deep learning, and computer vision. Its massive memory capacity and high-performance processing capabilities make it an excellent choice for these workloads.

Q: What is the difference between Llama 3 8B and Llama 3 70B, and which one is better?

A: Llama 3 8B and Llama 3 70B belong to the same family but differ greatly in size. Llama 3 8B is a smaller and more efficient model, while Llama 3 70B is a much larger and more powerful model with a wider range of abilities. The "better" model depends on your specific use case and resource constraints.

Q: Is it possible to run Llama 3 70B on a consumer-grade GPU?

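A: It might be possible, but it is not recommended for optimal performance. Even at 4 bits the weights take roughly 40 GB, more than the 24 GB found on top consumer cards, so part of the model has to be offloaded to system RAM or quantized more aggressively. Expect slower token generation and other performance limitations.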

Q: Is it better to run Llama 3 70B locally or use a cloud service?

A: The choice between local and cloud depends on your priorities. If you value privacy, control over your data, and the ability to customize your environment, running locally might be preferable. However, cloud services offer scalability, flexibility, and potentially better performance for resource-intensive models.
