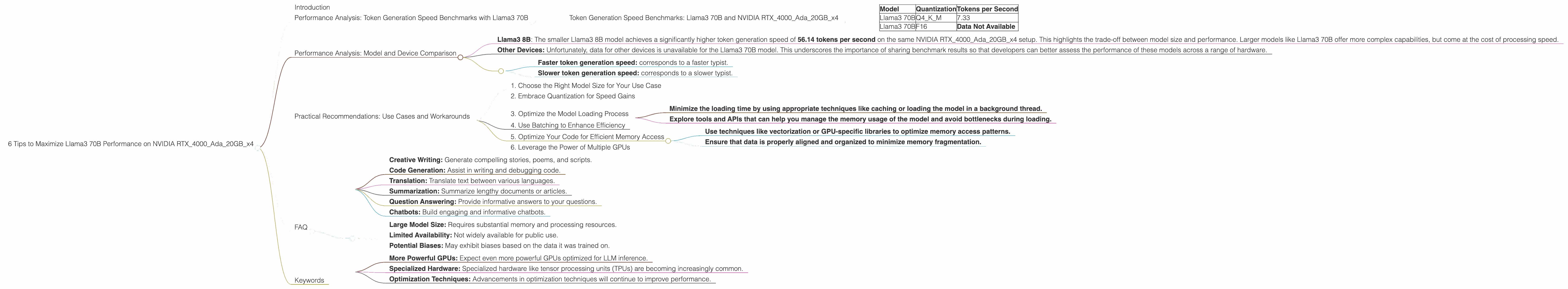

6 Tips to Maximize Llama3 70B Performance on NVIDIA RTX 4000 Ada 20GB x4

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and for good reason! These powerful AI systems are revolutionizing how we interact with technology, from generating creative content to providing insightful information. But harnessing the full potential of LLMs requires careful consideration of hardware and optimization techniques.

This article dives deep into the performance of Llama3 70B, a colossal language model, on a formidable setup: four NVIDIA RTX 4000 Ada GPUs with 20 GB of VRAM each, written RTX4000Ada20GBx4 in the benchmark data. We'll break down key performance metrics, explore practical use cases, and offer practical tips to get the most out of Llama3 70B on this GPU configuration. Let's get started!

Performance Analysis: Token Generation Speed Benchmarks with Llama3 70B

Token generation speed is the holy grail of LLM performance. It's essentially how fast your model can spit out those precious words that make up the text outputs you crave. To quantify this, we'll be looking at tokens per second, a metric that tells us how many tokens (basically individual words or parts of words) the model can generate every second.
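To make the metric concrete, here is a minimal sketch of how tokens per second could be measured. The `fake_generate` function is a hypothetical stand-in for a real model call and is not part of any library; swap in whatever your inference stack actually provides.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str], List[str]], prompt: str) -> float:
    """Time one call to `generate` and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str) -> List[str]:
    # Hypothetical stand-in for a model: emits 20 "tokens" with a small delay each.
    out = []
    for i in range(20):
        time.sleep(0.001)
        out.append(f"tok{i}")
    return out


rate = tokens_per_second(fake_generate, "Hello")
print(f"{rate:.1f} tokens/s")
```

Real benchmarks usually report prompt processing and token generation separately; this sketch lumps everything into a single timing for simplicity.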

Token Generation Speed Benchmarks: Llama3 70B and NVIDIA RTX4000Ada20GBx4

| Model | Quantization | Tokens per Second |
|---|---|---|
| Llama3 70B | Q4KM | 7.33 |
| Llama3 70B | F16 | Data Not Available |

As you can see, we have a performance figure for Llama3 70B with Q4KM quantization, but none for F16. The most likely reason is memory: at 16 bits (2 bytes) per weight, 70 billion parameters need roughly 140 GB for the weights alone, well beyond the 80 GB of combined VRAM on four 20 GB cards.

Quantization is a fancy term that means reducing the precision of the numbers used to represent the model's weights. This can significantly shrink the model's size and make it run faster, but it can also slightly impact accuracy. Think of it like measuring with a ruler that has coarser markings: you won't get quite as precise a measurement, but it's quicker to read and perfectly suitable for many tasks.
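As a rough illustration of the idea, the sketch below rounds a small block of weights to 4-bit integers with one shared scale and then converts them back. This is a deliberately simplified scheme chosen for readability, not the algorithm used by Q4KM, and the weight values are made up.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-8, 7] using one shared scale for the block."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the 4-bit integers."""
    return [v * scale for v in q]


# Made-up example weights, for illustration only.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.70, -0.04, 0.46]
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(f"max round-trip error: {max_error:.4f}")
```

Each weight now needs 4 bits instead of 16 or 32, at the cost of a rounding error of at most half the scale.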

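Q4KM (written Q4_K_M in llama.cpp and GGUF model files) is a 4-bit quantization format. The "4" is the nominal number of bits per weight, "K" refers to the k-quant family, which quantizes weights in small blocks that each carry their own scale, and "M" marks the medium variant, which keeps some tensors at higher precision than the small ("S") variant. It is a popular choice for reducing memory footprint and improving speed.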

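F16 refers to 16-bit half-precision floating point. In benchmark tables like this one it usually serves as the unquantized baseline: each weight takes 2 bytes, so it is the most accurate option listed but also the largest and most memory-hungry.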
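A quick back-of-the-envelope calculation shows how much the choice of format matters on this hardware. The sketch assumes a nominal 70 billion parameters and an average of about 4.85 bits per weight for Q4KM; that average is an assumption for illustration, not a figure from the benchmark, and the estimate ignores the KV cache and other runtime overhead.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


TOTAL_VRAM_GB = 4 * 20  # four cards with 20 GB each

# 4.85 bits per weight for Q4KM is an assumed average, not a measured value.
for name, bits in [("F16", 16.0), ("Q4KM", 4.85)]:
    size = weights_gb(70, bits)
    verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the F16 weights come to about 140 GB and the Q4KM weights to about 42 GB, so only the quantized model fits in the 80 GB available.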

Performance Analysis: Model and Device Comparison

So how does this performance stack up against other LLMs and devices? Let's take a look:

Comparing Llama3 70B to Other LLM Models:

- Llama3 8B: The smaller Llama3 8B model achieves a significantly higher token generation speed of 56.14 tokens per second on the same NVIDIA RTX4000Ada20GBx4 setup. This highlights the trade-off between model size and performance. Larger models like Llama3 70B offer more complex capabilities, but come at the cost of processing speed.
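To put the two figures side by side, this small sketch converts them into waiting time for a response. The 500-token response length is an arbitrary assumption chosen for illustration.

```python
# Token generation speeds quoted in this article (tokens per second).
BENCHMARKS = {"Llama3 70B": 7.33, "Llama3 8B": 56.14}

RESPONSE_TOKENS = 500  # assumed response length, for illustration only


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate `tokens` at a steady generation speed."""
    return tokens / tokens_per_second


for model, tps in BENCHMARKS.items():
    print(f"{model}: {seconds_for(RESPONSE_TOKENS, tps):.1f} s for {RESPONSE_TOKENS} tokens")

speedup = BENCHMARKS["Llama3 8B"] / BENCHMARKS["Llama3 70B"]
print(f"8B is about {speedup:.1f}x faster than 70B")
```

At these speeds the 70B model needs a little over a minute for 500 tokens, while the 8B model finishes in under ten seconds.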

Comparing NVIDIA RTX4000Ada20GBx4 to Other Devices:

- Other Devices: Unfortunately, data for other devices is unavailable for the Llama3 70B model. This underscores the importance of sharing benchmark results so that developers can better assess the performance of these models across a range of hardware.

Analogies for Understanding Token Generation Speed:

Imagine you're typing a book on a typewriter:

- Faster token generation speed: corresponds to a faster typist.

- Slower token generation speed: corresponds to a slower typist.

Practical Recommendations: Use Cases and Workarounds

1. Choose the Right Model Size for Your Use Case

Larger models like Llama3 70B generally require more resources and can be slower, but they offer greater capabilities. If your use case demands the most advanced language abilities, then the extra wait might be worth it. However, if you need quick responses for simpler tasks, a smaller model like Llama3 8B could be a better choice.

2. Embrace Quantization for Speed Gains

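Quantization is your secret weapon for boosting performance. On the RTX4000Ada20GBx4 setup it is also what makes Llama3 70B usable at all: the Q4KM result of 7.33 tokens per second is the only 70B figure in the table, and a 4-bit model needs roughly a quarter to a third of the memory of its F16 counterpart.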

3. Optimize the Model Loading Process

The initial loading of the LLM into memory can be time-consuming. Consider these optimizations:

- Minimize the loading time by using appropriate techniques like caching or loading the model in a background thread.

- Explore tools and APIs that can help you manage the memory usage of the model and avoid bottlenecks during loading.
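The caching and background-loading ideas above can be sketched as follows. `load_model` and the file name are hypothetical placeholders that simulate a slow load; a real application would call its inference library's loader here instead.

```python
import threading
import time
from functools import lru_cache


@lru_cache(maxsize=1)
def load_model(path: str) -> dict:
    """Hypothetical loader: stands in for an expensive read of model weights."""
    time.sleep(0.05)  # simulate slow disk I/O
    return {"path": path, "loaded_at": time.time()}


MODEL_PATH = "llama3-70b-q4km.gguf"  # hypothetical file name

# Start loading in the background so the application can finish other setup.
loader = threading.Thread(target=load_model, args=(MODEL_PATH,))
loader.start()
# ... other startup work would run here ...
loader.join()

# Later calls hit the cache and return the already-loaded object immediately.
model_a = load_model(MODEL_PATH)
model_b = load_model(MODEL_PATH)
print(model_a is model_b)
```

Joining the thread before the first real use keeps the sketch simple; a server would more likely wait on the load only when the first request arrives.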

4. Use Batching to Enhance Efficiency

Batching is a powerful technique that involves processing multiple requests at once. This can dramatically improve throughput by reducing the overhead associated with individual requests. This is particularly relevant for tasks involving generating multiple responses or handling large amounts of data.
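Here is a minimal sketch of the grouping step, assuming a hypothetical `generate_batch` function that accepts several prompts at once. Real inference servers expose batching in their own ways, so treat the names as placeholders.

```python
from typing import Iterator, List


def batched(items: List[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of `items` with at most `batch_size` entries."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def generate_batch(prompts: List[str]) -> List[str]:
    # Hypothetical batched model call; a real backend would process
    # the whole batch together on the GPU.
    return [f"response to: {p}" for p in prompts]


prompts = [f"question {i}" for i in range(10)]
responses: List[str] = []
calls = 0
for batch in batched(prompts, batch_size=4):
    responses.extend(generate_batch(batch))
    calls += 1

print(calls, len(responses))
```

Ten prompts are served with three model calls instead of ten. The right batch size depends on available memory, since each request in a batch needs its own working memory.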

5. Optimize Your Code for Efficient Memory Access

The efficiency of memory access can have a huge impact on LLM performance. Consider these optimization techniques:

- Use techniques like vectorization or GPU-specific libraries to optimize memory access patterns.

- Ensure that data is properly aligned and organized to minimize memory fragmentation.

6. Leverage the Power of Multiple GPUs

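For Llama3 70B, multiple GPUs are less an optimization than a requirement: even at around 4 bits per weight the model needs roughly 35 to 45 GB, more than a single 20 GB card can hold. Spreading it across the four cards of the RTX4000Ada20GBx4 setup provides 80 GB of combined VRAM. The main gain is memory capacity; how much speed you gain depends on how your inference software divides the work between the cards.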
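One common way to use several cards is to place different layers of the model on different GPUs. The sketch below shows a simple proportional split. It assumes 80 transformer layers for Llama3 70B and is an illustration of the idea, not the placement logic of any particular inference library.

```python
from typing import List


def split_layers(n_layers: int, vram_gb: List[float]) -> List[int]:
    """Assign layers to GPUs in proportion to each GPU's memory."""
    total = sum(vram_gb)
    counts = [int(n_layers * v / total) for v in vram_gb]
    # Hand out layers lost to rounding down, one per GPU, starting from the first.
    for i in range(n_layers - sum(counts)):
        counts[i % len(counts)] += 1
    return counts


# Assumed layer count of 80; four identical 20 GB cards.
print(split_layers(80, [20, 20, 20, 20]))
```

With four identical cards the split is even, at 20 layers per GPU. Mixed setups would get uneven shares under the same rule.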

FAQ

Q: Why is Llama3 70B performing so well on the NVIDIA RTX4000Ada20GBx4?

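A: RTX4000Ada20GBx4 refers to four NVIDIA RTX 4000 Ada GPUs with 20 GB of VRAM each, not a single card. Their combined 80 GB of VRAM is enough to hold the Q4KM version of Llama3 70B entirely in GPU memory, which is what makes the measured 7.33 tokens per second possible. Whether that counts as fast depends on your use case: it is workable for tasks where you can wait for the answer, but far slower than the 56.14 tokens per second of Llama3 8B on the same hardware.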

Q: What are some real-world use cases for Llama3 70B?

A: Llama3 70B is a powerful model with diverse applications:

- Creative Writing: Generate compelling stories, poems, and scripts.

- Code Generation: Assist in writing and debugging code.

- Translation: Translate text between various languages.

- Summarization: Summarize lengthy documents or articles.

- Question Answering: Provide informative answers to your questions.

- Chatbots: Build engaging and informative chatbots.

Q: What are the limitations of Llama3 70B?

A: While Llama3 70B is immensely powerful, it has limitations:

- Large Model Size: Requires substantial memory and processing resources.

- License Terms: The weights can be downloaded, but their use is governed by Meta's Llama 3 Community License rather than an unrestricted open-source license.

- Potential Biases: May exhibit biases based on the data it was trained on.

Q: What are the future trends in LLM hardware and performance?

A: The landscape of LLM hardware is rapidly evolving:

- More Powerful GPUs: Expect even more powerful GPUs optimized for LLM inference.

- Specialized Hardware: Specialized hardware like tensor processing units (TPUs) is becoming increasingly common.

- Optimization Techniques: Advancements in optimization techniques will continue to improve performance.

Keywords

Llama3 70B, NVIDIA RTX4000Ada20GBx4, Large Language Models (LLMs), Token Generation Speed, Quantization, Performance Optimization, GPU, Deep Dive, Inference, AI, Machine Learning, Natural Language Processing, Benchmarking, GPU Performance, Q4KM, F16, Use Cases, Workarounds, Model Size, Memory Usage, Batching, Memory Access, Multi-GPU, Future Trends.