6 Tips to Maximize Llama3 70B Performance on NVIDIA 4070 Ti 12GB

Introduction

Welcome, fellow AI enthusiasts, to the world of local Large Language Models (LLMs)! In this guide, we'll look at how to get the most out of the NVIDIA 4070 Ti 12GB GPU when running the powerful Llama3 70B model. Instead of waiting on cloud-based services, we're bringing the AI power directly to your machine!

But before we get too technical, let's talk about why this matters. LLMs are the models behind AI applications like ChatGPT and Bard. Running them locally gives you more control over your data, no network round trips, and the freedom to experiment with different models and configurations without relying on third-party APIs.

So grab your favorite beverage, settle in, and get ready to optimize your local LLM setup for maximum performance!

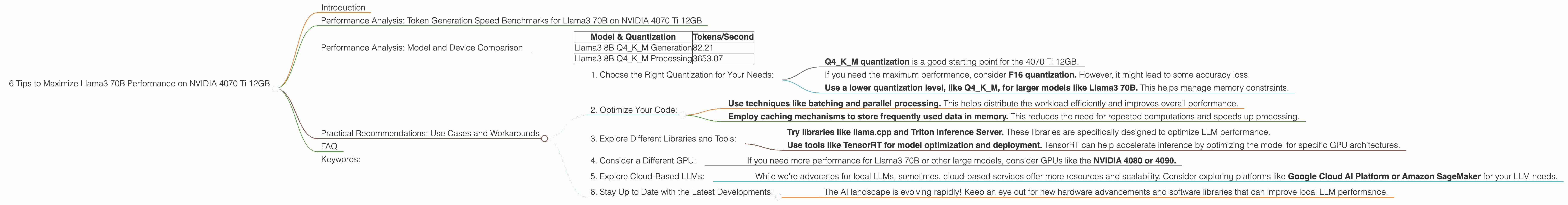

Performance Analysis: Token Generation Speed Benchmarks for Llama3 70B on NVIDIA 4070 Ti 12GB

Let's get straight to the numbers. Token generation speed is a crucial metric for evaluating LLM performance. It tells us how many tokens (words or parts of words) the model can process per second, directly impacting the speed and responsiveness of your AI applications.
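To make the metric concrete, here is a minimal sketch of how tokens per second could be measured. It times any function that yields tokens one at a time; `fake_stream` is a hypothetical stand-in for a real model's output stream, not part of any library.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(stream_tokens: Callable[[], Iterable[str]]) -> float:
    """Run one generation and return tokens produced per second of wall time."""
    start = time.perf_counter()
    count = sum(1 for _ in stream_tokens())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_stream():
    # Hypothetical stand-in for a real model: emits one token every 10 ms.
    for word in "local models stream one token at a time".split():
        time.sleep(0.01)
        yield word


if __name__ == "__main__":
    print(f"{measure_tokens_per_second(fake_stream):.1f} tokens/s")
```

With a real model, you would pass in a function that streams its generated tokens and average the result over several runs.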

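We don't yet have benchmark data for Llama3 70B on the 4070 Ti 12GB, and we'll update this section as soon as we do. One likely reason for the gap: even at 4-bit quantization, a 70B-parameter model needs roughly 40 GB for its weights alone, far more than 12 GB of VRAM, so it cannot run entirely on this GPU.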

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B with other models is tricky because its performance depends so heavily on the hardware. Think of it like comparing a Formula 1 race car (Llama3 70B) on a dirt track with a regular car (other models) on a highway.

However, we can still make some general observations about the NVIDIA 4070 Ti 12GB based on the data we have for Llama3 8B:

| Model, Quantization, and Task | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M, generation | 82.21 |
| Llama3 8B Q4_K_M, prompt processing | 3653.07 |

Highlights:

- Llama3 8B on the 4070 Ti 12GB is fast! Prompt processing runs at about 44 times the generation speed, because the GPU can process all prompt tokens in parallel while generation produces one token at a time.
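These two speeds combine into a rough response-time estimate. The sketch below uses the benchmark figures for Llama3 8B from the table; the function name and the example token counts are assumptions chosen for illustration, and real latency also includes model loading and sampling overhead.

```python
PROMPT_TOKENS_PER_S = 3653.07     # prompt processing speed from the table
GENERATION_TOKENS_PER_S = 82.21   # generation speed from the table


def estimate_response_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Time to read the prompt plus time to generate the reply, in seconds."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_S
    generation_time = output_tokens / GENERATION_TOKENS_PER_S
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical request: a 1000-token prompt and a 300-token reply.
    seconds = estimate_response_seconds(1000, 300)
    print(f"Estimated response time: {seconds:.2f} s")
```

For this hypothetical request, almost all of the time goes to generation, which is why generation speed is the number that matters most for chat-style use.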

Important Notes:

- These numbers apply to Q4_K_M quantization only. Other quantization levels will give different speeds.

- Quantization reduces the model's size and memory footprint but can affect the model's accuracy.

- The 4070 Ti 12GB is a great GPU, but results will differ on other hardware, and models too large for 12 GB of VRAM will run much slower here.

Practical Recommendations: Use Cases and Workarounds

1. Choose the Right Quantization for Your Needs:

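- Q4_K_M quantization is a good starting point for the 4070 Ti 12GB. Llama3 8B at this level fits comfortably in 12 GB of VRAM.

- If you need the maximum accuracy, consider F16. It stores weights at 16-bit precision with essentially no accuracy loss, but it takes about 2 bytes per parameter (roughly 16 GB for Llama3 8B), which exceeds 12 GB and is typically slower than Q4_K_M.

- Use a low-bit quantization level, like Q4_K_M, for larger models like Llama3 70B. This helps manage memory constraints, although even at 4 bits the 70B weights will not fit in 12 GB, so some layers have to run from system RAM.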
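A back-of-the-envelope check shows how quantization level and model size interact with 12 GB of VRAM. This is a rough sketch: the bits-per-weight figure for Q4_K_M is an approximation, the parameter counts are nominal, and the function names are made up for illustration.

```python
# Approximate bits stored per weight. These values are assumptions for
# illustration; real model files vary slightly.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def estimate_weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the model weights in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(weights_gb: float, vram_gb: float = 12.0) -> bool:
    """True if the weights alone fit; KV cache and buffers need extra room."""
    return weights_gb <= vram_gb


if __name__ == "__main__":
    # Nominal parameter counts (8 billion and 70 billion).
    for name, n_params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
        for quant, bits in BITS_PER_WEIGHT.items():
            size = estimate_weights_gb(n_params, bits)
            print(f"{name} {quant}: ~{size:.1f} GB, "
                  f"fits in 12 GB: {fits_in_vram(size)}")
```

Under these assumptions, only Llama3 8B at Q4_K_M fits on the card. The context cache needs additional memory on top of the weights, so leave some headroom.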

2. Optimize Your Code:

- Use techniques like batching and parallel processing. Handling several prompts together keeps the GPU busy and raises total throughput.

- Employ caching mechanisms to store frequently used data in memory, such as responses to repeated prompts. This reduces the need for repeated computations and speeds up processing.
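The two ideas above can be sketched at the application level as follows. `run_model` is a hypothetical placeholder for a real inference call, and `batched` and `cached_generate` are illustrative names; inference libraries usually handle GPU-level batching internally.

```python
from functools import lru_cache
from typing import Iterator, List, Sequence


def batched(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Split a sequence of prompts into lists of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def run_model(prompt: str) -> str:
    """Hypothetical placeholder for a real (slow) inference call."""
    return prompt.upper()


@lru_cache(maxsize=256)
def cached_generate(prompt: str) -> str:
    """Return a stored answer for repeated prompts instead of recomputing."""
    return run_model(prompt)


if __name__ == "__main__":
    prompts = ["hello", "what is a gpu?", "hello", "define vram", "hello"]
    for batch in batched(prompts, batch_size=2):
        print([cached_generate(p) for p in batch])
    print(cached_generate.cache_info())
```

Caching whole responses only makes sense when identical prompts should give identical answers, for example with deterministic sampling settings.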

3. Explore Different Libraries and Tools:

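- Try tools like llama.cpp and Triton Inference Server. llama.cpp is built for running quantized LLMs on consumer hardware and can split a model between GPU and CPU, while Triton Inference Server is NVIDIA's general-purpose platform for serving models.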

- Use tools like TensorRT for model optimization and deployment. TensorRT can help accelerate inference by optimizing the model for specific GPU architectures.
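When a model is too large for VRAM, llama.cpp can keep only some of its layers on the GPU. The sketch below estimates a starting value for that setting. It assumes all layers are the same size, a 70B model file of about 42.4 GB at 4 bits, and 2 GB reserved for the context cache and buffers; all three are assumptions, and `estimate_gpu_layers` is an illustrative helper, not a library function.

```python
def estimate_gpu_layers(model_gb: float, n_layers: int,
                        vram_gb: float = 12.0, reserve_gb: float = 2.0) -> int:
    """Estimate how many equally sized layers fit in the usable VRAM."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(0.0, vram_gb - reserve_gb)
    # Never report more layers than the model actually has.
    return min(n_layers, int(usable_gb / per_layer_gb))


if __name__ == "__main__":
    # Llama3 70B has 80 transformer layers; 42.4 GB is an assumed file size.
    print("70B layers on GPU:", estimate_gpu_layers(42.4, 80))
    # Llama3 8B has 32 layers; 4.85 GB is an assumed file size.
    print("8B layers on GPU:", estimate_gpu_layers(4.85, 32))
```

The result can be passed to llama.cpp's `--n-gpu-layers` option. Treat it as a starting point and lower it if you run out of memory.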

4. Consider a Different GPU:

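- If you need more performance for Llama3 70B or other large models, consider GPUs with more VRAM, like the NVIDIA 4080 (16 GB) or 4090 (24 GB). Keep in mind that neither can hold a 4-bit 70B model entirely in VRAM, so some layers will still run on the CPU.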

5. Explore Cloud-Based LLMs:

- While we're advocates for local LLMs, cloud-based services sometimes offer more resources and scalability. Consider exploring platforms like Google Cloud AI Platform or Amazon SageMaker for your LLM needs.

6. Stay Up to Date with the Latest Developments:

- The AI landscape is evolving rapidly! Keep an eye out for new hardware advancements and software libraries that can improve local LLM performance.

FAQ

Q: What is quantization?

A: Quantization is a technique that reduces the precision of the numbers (weights) in an LLM, for example from 16 bits each down to about 4 bits. Imagine you're working with a recipe where you need to use 1/4 teaspoon of salt. Quantization might simplify this to just "a pinch" of salt. It sacrifices some accuracy but makes the model smaller and usually faster!
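Here is a toy illustration of the idea. It is a simplified symmetric 4-bit scheme written for this FAQ, not the actual Q4_K_M algorithm: each weight is mapped to an integer from -7 to 7 plus one shared scale, then mapped back to see how much precision was lost.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-7, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights) or 1.0  # avoid a zero scale
    scale = max_abs / 7
    quantized = [max(-7, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.12, -0.50, 0.33, 0.07]  # made-up example values
    quantized, scale = quantize_4bit(weights)
    restored = dequantize(quantized, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f} (error {abs(original - approx):.3f})")
```

The restored values are close to the originals but not identical. That small rounding error is the accuracy cost of quantization.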

Q: Why is Llama3 70B so popular?

A: Llama3 70B is known for its impressive performance and versatility. It's capable of complex tasks like text generation, translation, and summarizing large amounts of information.

Q: What are the trade-offs between local and cloud-based LLMs?

A: Local LLMs are great for control, privacy, and speed when you have the right hardware. Cloud-based LLMs offer greater scalability and resources but might involve latency and potential security concerns.

Keywords:

Llama3 70B, NVIDIA 4070 Ti 12GB, LLM, local LLM, GPU, performance, token generation speed, quantization, F16, Q4_K_M, llama.cpp, Triton Inference Server, TensorRT, cloud-based LLM, Google Cloud AI Platform, Amazon SageMaker.