6 Tips to Maximize Llama3 70B Performance on Apple M3 Max

Introduction

Large language models (LLMs) are evolving quickly, with new models and optimizations appearing constantly. Llama3 70B is among the most capable of them, but running a model of this size locally is challenging because of hardware limits. This article examines Llama3 70B performance on the Apple M3 Max chip. We'll look at token generation speeds, compare model and quantization configurations, and offer practical recommendations for getting the most out of your M3 Max.

Understanding Local LLMs

Imagine an AI assistant capable of generating creative text, translating languages, and answering complex questions. That's the power of LLMs, and running them locally, on your own machine, opens up further possibilities. A local model keeps your data on your own hardware, avoids network round trips, and works without an internet connection.

Performance Analysis: Token Generation Speed Benchmarks

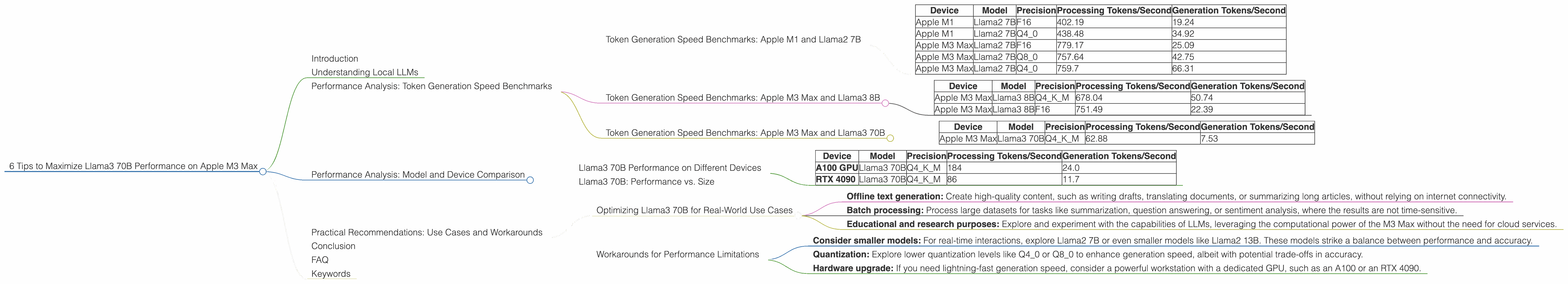

Token Generation Speed Benchmarks: Apple M1, Apple M3 Max, and Llama2 7B

Token generation speed is a crucial measure of LLM performance. It represents how quickly the model can generate new tokens (words or parts of words) in response to a prompt. The table below reports two numbers per configuration: processing speed (how fast the prompt is read) and generation speed (how fast new tokens are produced). It compares the M3 Max with the earlier Apple M1 chip, both running Llama2 7B:

| Device | Model | Precision | Processing Tokens/Second | Generation Tokens/Second |
|---|---|---|---|---|
| Apple M1 | Llama2 7B | F16 | 402.19 | 19.24 |
| Apple M1 | Llama2 7B | Q4_0 | 438.48 | 34.92 |
| Apple M3 Max | Llama2 7B | F16 | 779.17 | 25.09 |
| Apple M3 Max | Llama2 7B | Q8_0 | 757.64 | 42.75 |
| Apple M3 Max | Llama2 7B | Q4_0 | 759.7 | 66.31 |

Key takeaways:

- The Apple M3 Max consistently outperforms the M1 in processing and generation speed, showcasing its significantly increased processing power.

- The quantization level (F16, Q8_0, Q4_0) strongly affects generation speed, while prompt processing speed changes far less. Quantization essentially compresses the model, making it smaller and faster to generate with, but at the potential cost of slightly reduced accuracy. F16 uses 16 bits per number, Q8_0 uses about 8 bits, and Q4_0 uses about 4 bits.

- Lower-precision formats (Q8_0 and Q4_0) generate tokens faster than F16. On the M3 Max, Q4_0 reaches 66.31 tokens/second versus 25.09 for F16.
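To make these takeaways concrete, the short sketch below computes speedup ratios directly from the generation column of the table above. The dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Generation speeds (tokens/second) copied from the Llama2 7B table above.
GENERATION_TPS = {
    ("Apple M1", "F16"): 19.24,
    ("Apple M1", "Q4_0"): 34.92,
    ("Apple M3 Max", "F16"): 25.09,
    ("Apple M3 Max", "Q8_0"): 42.75,
    ("Apple M3 Max", "Q4_0"): 66.31,
}


def speedup(baseline: float, candidate: float) -> float:
    """Return how many times faster `candidate` is than `baseline`."""
    return candidate / baseline


if __name__ == "__main__":
    # Effect of quantization on the M3 Max, relative to F16.
    f16 = GENERATION_TPS[("Apple M3 Max", "F16")]
    for precision in ("Q8_0", "Q4_0"):
        ratio = speedup(f16, GENERATION_TPS[("Apple M3 Max", precision)])
        print(f"M3 Max {precision} vs F16: {ratio:.2f}x")

    # Effect of the chip, at matching precision.
    for precision in ("F16", "Q4_0"):
        ratio = speedup(
            GENERATION_TPS[("Apple M1", precision)],
            GENERATION_TPS[("Apple M3 Max", precision)],
        )
        print(f"{precision}: M3 Max vs M1: {ratio:.2f}x")
```

Comparing at matching precision matters here: mixing an F16 number on one device with a Q4_0 number on another would credit the chip with a gain that comes from quantization.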

Token Generation Speed Benchmarks: Apple M3 Max and Llama3 8B

Let's switch gears to the newer Llama3 8B, an exciting newcomer with impressive potential. We'll see how it stacks up against Llama2 7B on the M3 Max:

| Device | Model | Precision | Processing Tokens/Second | Generation Tokens/Second |
|---|---|---|---|---|
| Apple M3 Max | Llama3 8B | Q4_K_M | 678.04 | 50.74 |
| Apple M3 Max | Llama3 8B | F16 | 751.49 | 22.39 |

Key takeaways:

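- Llama3 8B at 4-bit K-quant precision (Q4_K_M) generates about twice as fast as Llama2 7B at F16 (50.74 vs. 25.09 tokens/second), but that gap comes from quantization rather than from the model itself.

- At matching F16 precision, Llama3 8B is slightly slower than Llama2 7B (22.39 vs. 25.09 tokens/second), which is consistent with its larger parameter count.

- Higher precision means slower generation: F16 generates at less than half the speed of the 4-bit variant, even though it processes prompts slightly faster (751.49 vs. 678.04 tokens/second). This is the trade-off between speed and accuracy.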

Token Generation Speed Benchmarks: Apple M3 Max and Llama3 70B

Now, let's dive into the main event – Llama3 70B, a truly massive model on the Apple M3 Max.

| Device | Model | Precision | Processing Tokens/Second | Generation Tokens/Second |
|---|---|---|---|---|
| Apple M3 Max | Llama3 70B | Q4_K_M | 62.88 | 7.53 |

Key takeaways:

- Llama3 70B shows a significant drop in generation speed compared to Llama3 8B at the same 4-bit precision: 7.53 vs. 50.74 tokens/second, roughly 6.7 times slower. This is expected given the massive increase in model size.

- The M3 Max can still handle this behemoth, but it pushes the limits. Prompt processing also falls sharply, from 678.04 to 62.88 tokens/second.
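To see what these rates mean in practice, here is a minimal sketch that turns the two 70B figures from the table above into an estimated response time. The formula (prompt tokens divided by processing speed, plus output tokens divided by generation speed) is a simplification that ignores model load time and other overhead, and the 500-token prompt and 300-token reply are hypothetical workload sizes chosen only for illustration.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate wall-clock time: read the prompt, then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama3 70B on the M3 Max, taken from the table above.
PROCESSING_TPS = 62.88
GENERATION_TPS = 7.53

if __name__ == "__main__":
    # Hypothetical workload: 500-token prompt, 300-token reply.
    total = response_time_seconds(500, 300, PROCESSING_TPS, GENERATION_TPS)
    print(f"Estimated response time: {total:.1f} s")
```

Under these assumptions most of the wait comes from generation rather than prompt processing, which is why the generation column is the number to watch for interactive use.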

Performance Analysis: Model and Device Comparison

Llama3 70B Performance on Different Devices

While our focus is on the M3 Max, it's interesting to compare the performance of Llama3 70B across various hardware:

| Device | Model | Precision | Processing Tokens/Second | Generation Tokens/Second |
|---|---|---|---|---|
| A100 GPU | Llama3 70B | Q4_K_M | 184 | 24.0 |
| RTX 4090 | Llama3 70B | Q4_K_M | 86 | 11.7 |

Key takeaways:

- The A100, a data center-grade GPU, generates about three times faster than the Apple M3 Max (24.0 vs. 7.53 tokens/second) and also processes prompts about three times faster (184 vs. 62.88 tokens/second).

- The RTX 4090, a high-end gaming GPU, is also faster than the M3 Max, but by a smaller margin: 86 vs. 62.88 tokens/second for processing and 11.7 vs. 7.53 tokens/second for generation.

Llama3 70B: Performance vs. Size

It's essential to recognize that Llama3 70B, with its 70 billion parameters, presents a challenge for even the most powerful devices. Think of a small town versus a massive city: a small car can easily zip around the town, but crossing the city takes much more power, fuel, and time.
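One rough way to connect size and speed is to assume that producing each token requires reading approximately all of the model's weights from memory once. That is a simplifying assumption, not something the benchmarks state. Under it, model size multiplied by generation speed gives an implied memory throughput. The sketch below applies this to the M3 Max generation figures quoted in this article, using nominal bit widths (16, 8, and 4 bits per weight); real quantized files carry extra scaling data, so actual sizes are somewhat larger.

```python
def nominal_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Nominal weight size in GB: parameters times bits, divided by 8 bits per byte."""
    return params_billions * bits_per_weight / 8


def implied_throughput_gb_s(
    params_billions: float, bits_per_weight: float, generation_tps: float
) -> float:
    """GB of weights read per second if every token reads all weights once."""
    return nominal_size_gb(params_billions, bits_per_weight) * generation_tps


# (label, parameters in billions, nominal bits per weight, generation tokens/second)
# Speeds are the M3 Max figures from the tables in this article.
M3_MAX_RUNS = [
    ("Llama2 7B F16", 7, 16, 25.09),
    ("Llama2 7B 8-bit", 7, 8, 42.75),
    ("Llama2 7B 4-bit", 7, 4, 66.31),
    ("Llama3 8B F16", 8, 16, 22.39),
    ("Llama3 8B 4-bit", 8, 4, 50.74),
    ("Llama3 70B 4-bit", 70, 4, 7.53),
]

if __name__ == "__main__":
    for label, params, bits, tps in M3_MAX_RUNS:
        size = nominal_size_gb(params, bits)
        rate = implied_throughput_gb_s(params, bits, tps)
        print(f"{label:18s} ~{size:5.1f} GB  ~{rate:5.0f} GB/s implied")
```

Although the models differ in size by a factor of ten, the implied throughput stays within the same few hundred GB/s. That pattern suggests generation speed on this hardware is largely set by how quickly the weights can be streamed from memory, which is why a 70B model is so much slower than a 7B or 8B one.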

Practical Recommendations: Use Cases and Workarounds

Optimizing Llama3 70B for Real-World Use Cases

Due to the processing and generation speed limitations, Llama3 70B on the M3 Max may not be suitable for interactive, real-time applications like chatbots or real-time text generation. However, there are still several promising use cases:

- Offline text generation: Create high-quality content, such as writing drafts, translating documents, or summarizing long articles, without relying on internet connectivity.

- Batch processing: Process large datasets for tasks like summarization, question answering, or sentiment analysis, where the results are not time-sensitive.

- Educational and research purposes: Explore and experiment with the capabilities of LLMs, leveraging the computational power of the M3 Max without the need for cloud services.

Workarounds for Performance Limitations

- Consider smaller models: For real-time interactions, explore smaller models such as Llama3 8B, Llama2 7B, or Llama2 13B. These models trade some output quality for much faster generation.

- Quantization: Prefer 4-bit formats such as Q4_K_M or Q4_0 over Q8_0 or F16 to improve generation speed, albeit with potential trade-offs in accuracy. The Llama3 70B benchmark above already uses 4-bit quantization, so most of this gain is already reflected in its numbers.

- Hardware upgrade: If you need lightning-fast generation speed, consider a powerful workstation with a dedicated GPU, such as an A100 or an RTX 4090.

Conclusion

The Apple M3 Max, with its incredible processing power, is a solid platform for running Llama3 70B locally. However, the massive scale of the model introduces performance limitations, particularly in generation speeds. By understanding the trade-offs between model size, quantization, and processing power, you can effectively tailor your approach to leverage this powerful LLM for various use cases. Embrace the exciting world of local LLMs and explore the boundless possibilities of these powerful AI engines.

FAQ

Q1: What are the key factors affecting LLM performance?

A: Key factors influencing LLM performance include model size, architecture, precision (quantization), and the underlying hardware. Larger models generally require more processing power and lead to slower performance. Quantization, on the other hand, can be beneficial, but may impact accuracy. Finally, the hardware plays a critical role, with dedicated GPUs generally outperforming CPUs for LLM processing.

Q2: Is the M3 Max a good choice for running Llama3 70B?

A: The M3 Max is a powerhouse, but for real-time applications requiring very fast generation, specialized GPUs like the A100 might be a better option. The M3 Max shines for offline tasks, batch processing, and research purposes.

Q3: What does quantization mean for LLMs?

A: Quantization, similar to compressing an image, reduces the size of an LLM by reducing the number of bits used to represent each number. This makes the model smaller and faster, but it can slightly compromise accuracy.
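The idea can be illustrated with a toy example. The sketch below applies a simple symmetric "absmax" scheme to a handful of made-up weights: each value is stored as a small integer plus one shared scale factor. This is a deliberately simplified illustration, not the exact storage format used by any particular quantized model file, and the sample weights are invented for the demonstration.

```python
def quantize_block(values: list[float], bits: int) -> tuple[list[int], float]:
    """Map floats to signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the stored integers and scale."""
    return [q * scale for q in quantized]


def max_abs_error(values: list[float], bits: int) -> float:
    """Largest difference between an original value and its round trip."""
    quantized, scale = quantize_block(values, bits)
    restored = dequantize_block(quantized, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    # Made-up sample weights, for illustration only.
    weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
    for bits in (8, 4):
        print(f"{bits}-bit max round-trip error: {max_abs_error(weights, bits):.4f}")
```

Fewer bits mean fewer distinct integer levels, so the round-trip error grows as the bit width shrinks. That is the accuracy cost paid for the smaller size.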

Q4: How can I choose the right LLM for my needs?

A: Consider the specific task you're tackling, the required speed, and your hardware's capabilities. For high-speed, real-time applications, consider smaller models like Llama2 7B. For offline tasks or if you have powerful hardware, the impressive capabilities of Llama3 70B might be a better fit.
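As a rough sketch of this decision, the snippet below picks the largest benchmarked configuration that still meets a minimum generation speed, using the M3 Max figures from this article. Treating parameter count as a stand-in for capability is an assumption, the speed thresholds in the example are hypothetical, and `largest_model_meeting` is an illustrative helper rather than part of any library.

```python
from typing import NamedTuple, Optional


class Benchmark(NamedTuple):
    model: str
    params_billions: float
    precision: str
    generation_tps: float


# M3 Max generation speeds from the tables in this article.
M3_MAX_BENCHMARKS = [
    Benchmark("Llama2 7B", 7, "F16", 25.09),
    Benchmark("Llama2 7B", 7, "Q8_0", 42.75),
    Benchmark("Llama2 7B", 7, "Q4_0", 66.31),
    Benchmark("Llama3 8B", 8, "Q4_K_M", 50.74),
    Benchmark("Llama3 8B", 8, "F16", 22.39),
    Benchmark("Llama3 70B", 70, "Q4_K_M", 7.53),
]


def largest_model_meeting(
    min_tps: float, benchmarks: list[Benchmark] = M3_MAX_BENCHMARKS
) -> Optional[Benchmark]:
    """Return the largest configuration generating at least `min_tps` tokens/second.

    Ties on size are broken in favor of the faster configuration.
    Returns None when nothing is fast enough.
    """
    candidates = [b for b in benchmarks if b.generation_tps >= min_tps]
    if not candidates:
        return None
    return max(candidates, key=lambda b: (b.params_billions, b.generation_tps))


if __name__ == "__main__":
    # Hypothetical thresholds: 20 tokens/second for chat, 5 for offline work.
    for label, min_tps in (("interactive chat", 20.0), ("offline batch", 5.0)):
        choice = largest_model_meeting(min_tps)
        print(f"{label}: {choice}")
```

With these example thresholds, the interactive case lands on a quantized 8B model and the offline case on the 70B model, matching the guidance in the answer above.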
