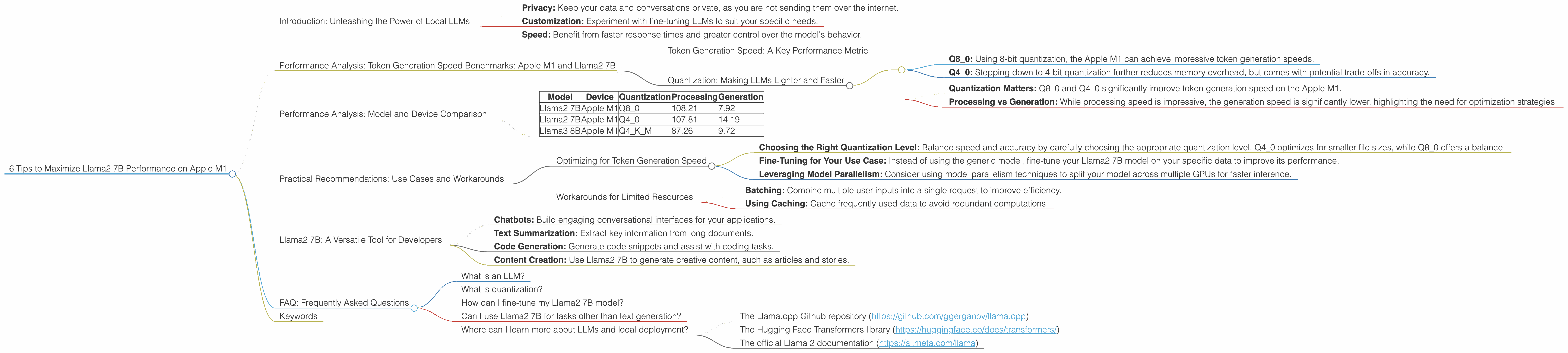

6 Tips to Maximize Llama2 7B Performance on Apple M1

Introduction: Unleashing the Power of Local LLMs

The world of large language models (LLMs) is evolving rapidly, and developers are increasingly eager to run these systems locally. Running an LLM on your own machine offers several advantages, including:

- Privacy: Keep your data and conversations private, as you are not sending them over the internet.

- Customization: Experiment with fine-tuning LLMs to suit your specific needs.

- Speed: Avoid network round trips and rate limits, and keep full control over the model's behavior.

The Apple M1 chip, with its strong performance and energy efficiency, has become a popular choice for running LLMs locally. In this article, we'll dive deep into the performance of Llama2 7B on the Apple M1, exploring key parameters and providing actionable tips to maximize your model's speed and efficiency.

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Let's start by looking at the cold hard numbers: how fast can Llama2 7B generate tokens on the Apple M1?

Token Generation Speed: A Key Performance Metric

Think of tokens as the building blocks of language. LLMs process text by breaking it down into tokens, and the speed at which they can generate these tokens directly impacts the responsiveness of your applications.
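To make the metric concrete, here is a minimal sketch of how tokens per second can be measured around any token stream. The `fake_stream` generator is a hypothetical stand-in for a real model, and its delay is an arbitrary value chosen for illustration, not a measurement.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(stream: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return the observed tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in stream())  # count tokens as they arrive
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_stream(n_tokens: int = 20, delay_s: float = 0.005):
    """Hypothetical stand-in for a model: yields one token every delay_s seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    rate = tokens_per_second(fake_stream)
    print(f"{rate:.1f} tokens/second")
```

Swapping `fake_stream` for a function that streams tokens from your own runtime gives the same kind of figure reported in the benchmarks.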

Quantization: Making LLMs Lighter and Faster

Quantization is like putting your LLM on a diet. It reduces the model's size and its memory footprint, making it run faster on hardware with limited resources like the Apple M1. Let's explore the impact of quantization on the Apple M1:

- Q8_0: With 8-bit quantization, the Apple M1 generates roughly 7.9 tokens/second (see Table 1).

- Q4_0: Stepping down to 4-bit quantization shrinks the memory footprint further and raises generation to roughly 14.2 tokens/second, with a potential trade-off in accuracy.
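The idea behind both levels can be sketched in a few lines. This is a simplified symmetric scheme in which a block of weights shares one scale factor. It illustrates the principle only and is not llama.cpp's exact Q8_0 or Q4_0 layout; the block size and the sample weights are assumptions made for the example.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # assumed block length for this illustration


def quantize_block(weights: List[float], bits: int = 8) -> Tuple[float, List[int]]:
    """Map floats to signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * q for q in quants]


def max_error(weights: List[float], bits: int) -> float:
    """Largest absolute difference between original and restored weights."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(weights, restored))


# Deterministic sample weights in [-1, 1), made up for the demonstration.
SAMPLE = [((i * 37) % 64 - 32) / 32 for i in range(BLOCK_SIZE)]

if __name__ == "__main__":
    for bits in (8, 4):
        print(f"{bits}-bit max error: {max_error(SAMPLE, bits):.4f}")
```

Fewer bits mean fewer representable levels per block, so the rounding error grows as the storage shrinks. That is the accuracy trade-off mentioned above.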

Table 1: Llama2 7B Token Generation Speed on Apple M1 (tokens/second)

| Device | BW (GB/s) | GPU Cores | Llama2 7B Q8_0 Processing | Llama2 7B Q8_0 Generation | Llama2 7B Q4_0 Processing | Llama2 7B Q4_0 Generation |
|---|---|---|---|---|---|---|
| Apple M1 | 68 | 7 | 108.21 | 7.92 | 107.81 | 14.19 |
| Apple M1 | 68 | 8 | 117.25 | 7.91 | 117.96 | 14.15 |

Key Takeaways:

- Quantization Matters: Q4_0 generates about 14.2 tokens/second versus about 7.9 for Q8_0, roughly 1.8x faster, while prompt processing speed is nearly identical for the two formats.

- Processing vs Generation: Prompt processing runs at over 100 tokens/second, but generation is roughly 8 to 15 times slower, so generation is where optimization effort pays off.
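To see what these rates mean for a single request, the sketch below estimates end-to-end response time from the Table 1 figures for the 7-core M1. The 200-token prompt and 100-token reply are an assumed workload chosen for illustration, and the model ignores load time and other overhead.

```python
# Throughput figures copied from Table 1 (Apple M1, 7 GPU cores), in tokens/second.
TABLE_1 = {
    "Q8_0": {"processing": 108.21, "generation": 7.92},
    "Q4_0": {"processing": 107.81, "generation": 14.19},
}


def estimate_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Time to read the prompt plus time to generate the reply."""
    rates = TABLE_1[quant]
    prompt_time = prompt_tokens / rates["processing"]
    generation_time = output_tokens / rates["generation"]
    return prompt_time + generation_time


if __name__ == "__main__":
    # Assumed workload: 200 prompt tokens in, 100 tokens out.
    for quant in TABLE_1:
        print(f"{quant}: {estimate_seconds(quant, 200, 100):.1f} s")
```

For this workload the estimate comes to roughly 14.5 seconds with Q8_0 and 8.9 seconds with Q4_0, and in both cases almost all of the time is spent generating rather than reading the prompt.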

Performance Analysis: Model and Device Comparison

Let's compare Llama2 7B's performance to other models and devices.

Table 2: Model and Device Comparisons (tokens/second)

| Model | Device | Quantization | Processing | Generation |
|---|---|---|---|---|
| Llama2 7B | Apple M1 | Q8_0 | 108.21 | 7.92 |
| Llama2 7B | Apple M1 | Q4_0 | 107.81 | 14.19 |
| Llama3 8B | Apple M1 | Q4_K_M | 87.26 | 9.72 |

Key Takeaways:

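- Llama2 7B vs Llama3 8B: In our case, Llama2 7B at Q4_0 beats Llama3 8B at Q4_K_M in both processing and generation. At Q8_0, Llama2 7B still processes prompts faster (108.21 vs 87.26 tokens/second) but generates more slowly (7.92 vs 9.72). The quantization formats differ, so this is not a like-for-like comparison.

- Device Variation: Performance can vary between similar devices due to factors like GPU cores and memory bandwidth. In Table 1, the 8-core GPU processes prompts about 8 to 9 percent faster than the 7-core GPU, while generation speed is essentially unchanged.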
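One way to reason about why generation speed tracks memory bandwidth rather than GPU core count is a back-of-the-envelope ceiling: if every generated token requires reading all the weights once, throughput cannot exceed bandwidth divided by model size. The sketch below applies that rule to the 68 GB/s figure from Table 1. The parameter count and the bits-per-weight values are assumptions not stated in this article, and real model files keep some tensors at other precisions, so treat the result as approximate.

```python
# Assumptions (not from the benchmark tables): parameter count and bits per weight.
PARAMS = 6.74e9                             # approximate Llama2 7B parameter count
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}  # quantized values plus per-block scale

BANDWIDTH_GB_S = 68.0                       # Apple M1 memory bandwidth, from Table 1
MEASURED = {"Q8_0": 7.92, "Q4_0": 14.19}    # generation tokens/second, from Table 1


def model_gigabytes(quant: str) -> float:
    """Approximate size of the quantized weights in GB."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def generation_ceiling(quant: str) -> float:
    """Upper bound on tokens/second if each token reads every weight once."""
    return BANDWIDTH_GB_S / model_gigabytes(quant)


if __name__ == "__main__":
    for quant, measured in MEASURED.items():
        ceiling = generation_ceiling(quant)
        print(f"{quant}: ceiling {ceiling:.1f} tok/s, "
              f"measured {measured} ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 9.5 and 17.9 tokens/second, and the measured figures sit at roughly 80 percent of them. That is consistent with generation being limited by memory bandwidth, which is the same on both M1 variants.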

Practical Recommendations: Use Cases and Workarounds

Optimizing for Token Generation Speed

- Choosing the Right Quantization Level: Balance speed and accuracy by choosing the quantization level deliberately. Q4_0 gives the smallest files and the fastest generation, while Q8_0 stays closer to the original weights at roughly half the generation speed.

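- Fine-Tuning for Your Use Case: Instead of using the generic model, fine-tune your Llama2 7B model on your specific data to improve the quality of its output on your tasks. Fine-tuning does not by itself change tokens/second, but a model that answers well with shorter prompts and replies spends less time per request.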

- Leveraging Model Parallelism: Consider model parallelism techniques that split the model across multiple GPUs for faster inference. A single Apple M1 has one integrated GPU, so this applies only if you move to a multi-GPU setup.

Workarounds for Limited Resources

- Batching: Combine multiple user inputs into a single request to improve efficiency.

- Using Caching: Cache frequently used data to avoid redundant computations.
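As one possible shape for the caching idea, here is a minimal sketch of a least-recently-used cache keyed by the exact prompt text. The `slow_model` function is a hypothetical stand-in for a real call into the model, and the approach only makes sense when identical prompts should return identical answers, for example with deterministic sampling.

```python
from collections import OrderedDict
from typing import Callable


class PromptCache:
    """Small least-recently-used cache keyed by the exact prompt text."""

    def __init__(self, generate: Callable[[str], str], max_entries: int = 128):
        self._generate = generate
        self._max_entries = max_entries
        self._entries: "OrderedDict[str, str]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def __call__(self, prompt: str) -> str:
        if prompt in self._entries:
            self.hits += 1
            self._entries.move_to_end(prompt)  # mark as most recently used
            return self._entries[prompt]
        self.misses += 1
        result = self._generate(prompt)
        self._entries[prompt] = result
        if len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)  # evict the least recently used entry
        return result


def slow_model(prompt: str) -> str:
    """Hypothetical stand-in for a real call into the model."""
    return prompt.upper()


if __name__ == "__main__":
    cached = PromptCache(slow_model, max_entries=2)
    for prompt in ["hello", "hello", "world"]:
        cached(prompt)
    print(f"hits={cached.hits} misses={cached.misses}")
```

At 8 to 14 generated tokens per second, every repeated prompt served from the cache saves several seconds of generation.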

Llama2 7B: A Versatile Tool for Developers

Llama2 7B is a versatile tool for developers seeking to leverage the power of local LLMs. Its compact size and impressive performance on devices like the Apple M1 make it ideal for various use cases, including:

- Chatbots: Build engaging conversational interfaces for your applications.

- Text Summarization: Extract key information from long documents.

- Code Generation: Generate code snippets and assist with coding tasks.

- Content Creation: Use Llama2 7B to generate creative content, such as articles and stories.

FAQ: Frequently Asked Questions

What is an LLM?

LLMs are powerful AI models trained on massive text datasets. They excel at understanding and generating human-like text, making them suitable for a wide range of applications.

What is quantization?

Quantization is a technique used to reduce the size of large models, making them more efficient and faster. Essentially, it reduces the number of bits used to represent each model parameter, resulting in smaller files and faster inference.

How can I fine-tune my Llama2 7B model?

Fine-tuning involves training your model on a dataset specific to your use case. This allows the model to adapt to your specific domain and improve its performance for your desired tasks.

Can I use Llama2 7B for tasks other than text generation?

Yes! Llama2 7B can be used for tasks involving text understanding, translation, and even code generation, thanks to its ability to process text data effectively.

Where can I learn more about LLMs and local deployment?

There are many great resources available online, including:

- The llama.cpp GitHub repository (https://github.com/ggerganov/llama.cpp)

- The Hugging Face Transformers library (https://huggingface.co/docs/transformers/)

- The official Llama 2 documentation (https://ai.meta.com/llama)

Keywords

Apple M1, Llama2 7B, LLM, local LLM, performance, token generation speed, quantization, Q8_0, Q4_0, processing, generation, fine-tuning, use cases, developers, applications, chatbots, text summarization, code generation, content creation.