6 Surprising Facts About Running Llama3 8B on NVIDIA 4070 Ti 12GB

Are you a developer or a tech enthusiast eager to explore the world of local Large Language Models (LLMs)? Have you been eyeing the powerful NVIDIA 4070 Ti 12GB graphics card and wondering how it performs with Llama3 8B, the 8-billion-parameter member of Meta AI's Llama 3 family?

This article dives deep into the performance of running Llama3 8B on this specific hardware, revealing six surprising facts that will help you understand the model's capabilities and limitations. We'll break down the numbers, examine the results, and offer practical recommendations for your projects. Let's get started!

NVIDIA 4070 Ti 12GB is a powerhouse for Llama3 8B in Q4KM quantization. Forget about waiting ages for your model to generate text: on the 4070 Ti, Llama3 8B with Q4KM (written Q4_K_M in llama.cpp) generates text at a blistering 82.21 tokens per second. A token is usually a word fragment rather than a whole word, so with the common rule of thumb of about 0.75 English words per token, that is roughly 60 words per second, far faster than any human could type or read!

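Llama3 8B in F16 is a mystery. The data we have doesn't include F16 results for Llama3 8B on the 4070 Ti. Strictly speaking, F16 is not a quantization at all but 16-bit half precision, the full-quality baseline that quantized formats are measured against. The gap is a bit of a bummer, but there is a likely explanation: 8 billion parameters at 2 bytes each come to about 16 GB of weights alone, more than the card's 12 GB of VRAM, so the model cannot run entirely on the GPU in F16.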

Llama3 70B on the 4070 Ti is completely off the menu. We don't have any data for Llama3 70B, in either Q4KM or F16, on the 4070 Ti. That is hardly surprising: even at roughly 4 to 5 bits per weight, 70 billion parameters need more than 40 GB, several times the card's 12 GB of VRAM.

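The 4070 Ti excels at processing input text. Although the data is limited, we know that the 4070 Ti processes prompts for Llama3 8B in Q4KM at an impressive 3653.07 tokens per second. Prompt processing is the step where the model reads your input before it starts writing. It handles all prompt tokens in parallel, which is why it is so much faster than generation, where tokens must be produced one at a time. In practice, a 2,000-token prompt is read in roughly half a second.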
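To see what the two measured speeds mean for a single request, here is a minimal Python sketch that adds prompt-processing time to generation time. It assumes both rates stay constant regardless of prompt length, which is optimistic (speeds typically drop as the context grows), and the 2,000-token prompt and 500-token answer are hypothetical.

```python
# Measured throughput for Llama3 8B Q4KM on the NVIDIA 4070 Ti 12GB.
PROMPT_TOKENS_PER_S = 3653.07  # prompt processing: reading the input
GEN_TOKENS_PER_S = 82.21       # token generation: writing the output


def estimate_response_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Rough wall-clock time: read the whole prompt, then generate token by token.

    Assumes both speeds stay constant, which is optimistic for long contexts.
    """
    prefill = prompt_tokens / PROMPT_TOKENS_PER_S
    decode = output_tokens / GEN_TOKENS_PER_S
    return prefill + decode


if __name__ == "__main__":
    # Hypothetical request: a 2,000-token document summarized into 500 tokens.
    total = estimate_response_seconds(2000, 500)
    print(f"~{total:.1f} s total")  # prints "~6.6 s total"
```

In this example, reading the prompt takes about 0.55 s while generating the answer takes about 6.1 s, so for chat-style workloads the generation speed dominates the wait.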

Quantization is key to unlocking the full potential of Llama3 8B on the 4070 Ti. Notice that the performance data covers Q4KM only? This is where things get interesting. Quantization is like squeezing a humongous LLM into a smaller space while preserving its essence. Q4KM stores most weights as 4-bit integers plus shared scale factors, averaging a little under 5 bits per weight, compared with the 16 bits per weight of the BF16 weights Meta released. That shrinks Llama3 8B to roughly 5 GB, so it fits comfortably in 12 GB of VRAM. And because generating each token requires reading the weights from memory, smaller weights also mean faster generation.
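The Python sketch below shows the basic idea with a simplified symmetric 4-bit scheme: each block of 32 weights shares one scale (counted as 16 bits), and every weight is stored as a small integer. This is in the spirit of llama.cpp's simpler Q4_0 format, not the actual Q4_K_M algorithm, which uses a more elaborate "k-quant" layout and keeps some tensors at higher precision. The block size and the [-7, 7] integer range are illustrative choices.

```python
import random

BLOCK_SIZE = 32  # number of weights that share one scale factor
Q_MAX = 7        # each weight becomes an integer in [-7, 7], which fits in 4 bits


def quantize_block_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map a block of floats to small integers plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / Q_MAX if max_abs > 0 else 1.0
    q = [max(-Q_MAX, min(Q_MAX, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q: list[int], scale: float) -> list[float]:
    """Recover approximate weights by multiplying back by the scale."""
    return [v * scale for v in q]


def bits_per_weight(bits_per_value: int, scale_bits: int = 16,
                    block_size: int = BLOCK_SIZE) -> float:
    """Average storage per weight once the shared (16-bit) scale is counted."""
    return (block_size * bits_per_value + scale_bits) / block_size


if __name__ == "__main__":
    random.seed(0)
    block = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # weight-like values
    q, scale = quantize_block_4bit(block)
    restored = dequantize_block(q, scale)
    max_err = max(abs(a - b) for a, b in zip(block, restored))
    print(f"max abs error {max_err:.5f}, scale {scale:.5f}")
    print(bits_per_weight(4))  # prints 4.5
```

The shared scale is why 4-bit formats cost slightly more than 4 bits per weight: 32 × 4 bits plus one 16-bit scale is 4.5 bits per weight, and each restored weight is off by at most half a scale step.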

You can enjoy the power of Llama3 8B on a relatively affordable GPU. The NVIDIA 4070 Ti 12GB costs considerably less than top-of-the-line GPUs like the RTX 4090, making it a more accessible option for developers and enthusiasts who want to explore local LLMs without breaking the bank.

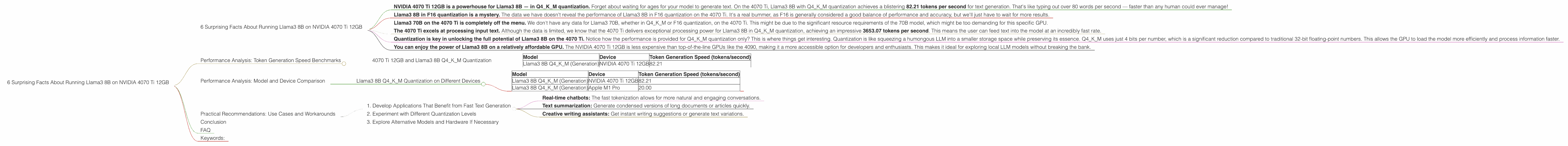

Performance Analysis: Token Generation Speed Benchmarks

4070 Ti 12GB and Llama3 8B Q4KM Quantization

| Model | Device | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B Q4KM (Generation) | NVIDIA 4070 Ti 12GB | 82.21 |

As you can see, the NVIDIA 4070 Ti 12GB delivers excellent performance for Llama3 8B in Q4KM quantization: at 82.21 tokens per second, text streams out much faster than anyone can read. That makes the 4070 Ti a tempting choice for developers seeking a balance of performance and affordability.

Performance Analysis: Model and Device Comparison

Llama3 8B Q4KM Quantization on Different Devices

| Model | Device | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B Q4KM (Generation) | NVIDIA 4070 Ti 12GB | 82.21 |
| Llama3 8B Q4KM (Generation) | Apple M1 Pro | 20.00 |

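As you can see, the 4070 Ti 12GB is roughly four times faster than the Apple M1 Pro for Llama3 8B in Q4KM quantization (82.21 vs. 20.00 tokens per second). This illustrates the advantage of a dedicated GPU with its own fast memory, even in the mid-range category, over an integrated GPU like the M1 Pro's, which shares memory with the CPU.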
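One way to read these numbers: during generation, each new token requires streaming essentially all of the model's weights from memory, so memory bandwidth sets an upper bound of roughly bandwidth ÷ model size. The Python sketch below applies that rule of thumb. It assumes a 4.92 GB Q4_K_M file and the published memory bandwidth of each device (504 GB/s for the 4070 Ti, 200 GB/s for the M1 Pro), and it ignores KV-cache reads and compute limits, so treat the result as a ceiling rather than a prediction.

```python
# Bandwidth-bound ceiling for token generation: each token reads ~all weights once.
MODEL_GB = 4.92  # assumed size of a Llama3 8B Q4_K_M GGUF file

DEVICES = {
    # name: (memory bandwidth in GB/s, measured generation speed in tokens/s)
    "NVIDIA 4070 Ti 12GB": (504.0, 82.21),
    "Apple M1 Pro": (200.0, 20.00),
}


def bandwidth_ceiling(bandwidth_gb_s: float, model_gb: float = MODEL_GB) -> float:
    """Upper bound on tokens/s if every token must stream the whole model from memory."""
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    for name, (bandwidth, measured) in DEVICES.items():
        ceiling = bandwidth_ceiling(bandwidth)
        print(f"{name}: ceiling ~{ceiling:.0f} tok/s, "
              f"measured {measured:.2f} ({measured / ceiling:.0%} of ceiling)")
```

By this estimate the 4070 Ti reaches about 80% of its bandwidth ceiling and the M1 Pro about half of its own, so bandwidth alone accounts for roughly 2.5× of the observed 4.1× gap; the remainder presumably comes from other factors such as compute throughput and software optimization.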

Practical Recommendations: Use Cases and Workarounds

1. Develop Applications That Benefit from Fast Text Generation

Given the impressive token generation speed of Llama3 8B on the 4070 Ti, you can use this setup for applications that require fast text generation, such as:

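- Real-time chatbots: Fast token generation lets replies stream in faster than users read, making conversations feel more natural and engaging.

- Text summarization: Generate condensed versions of long documents or articles quickly. Fast prompt processing helps here, since the whole document must be read before the summary begins.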

- Creative writing assistants: Get instant writing suggestions or generate text variations.

2. Experiment with Different Quantization Levels

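Consider experimenting with different quantization levels for Llama3 8B on the 4070 Ti. While Q4KM provides excellent speed, there might be trade-offs in terms of accuracy. Higher-precision formats such as Q5_K_M, Q6_K, or Q8_0 should still fit in 12 GB, so try them to see how they affect speed and output quality for your specific use case. F16 weights alone exceed the card's 12 GB, so running F16 would mean offloading part of the model to system RAM, at a large cost in speed.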
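Before downloading a larger file, a quick budget check helps. The Python sketch below adds the approximate weight size for several llama.cpp quantization types to the KV cache for a given context length, plus a fixed allowance for runtime overhead. The bits-per-weight values are approximations derived from typical Llama3 8B GGUF file sizes, the 1 GB overhead is a hypothetical placeholder, and the KV-cache defaults (32 layers, 8 key/value heads, head dimension 128, 16-bit values) describe Llama3 8B's architecture; real usage varies with the runtime and its settings.

```python
# Rough VRAM budget for Llama3 8B on a 12 GB card (all figures approximate).
VRAM_GB = 12.0
LLAMA3_8B_PARAMS_B = 8.03   # billions of parameters (approximate)
LLAMA3_70B_PARAMS_B = 70.6  # billions of parameters (approximate)

# Approximate average bits per weight, inferred from typical GGUF file sizes.
BITS_PER_WEIGHT = {
    "Q4_K_M": 4.9,
    "Q5_K_M": 5.7,
    "Q6_K": 6.56,
    "Q8_0": 8.5,
    "F16": 16.0,
}


def weights_gb(params_b: float, bpw: float) -> float:
    """Weight storage in GB: billions of parameters * bits per weight / 8 bits per byte."""
    return params_b * bpw / 8


def kv_cache_gb(context_tokens: int, layers: int = 32, kv_heads: int = 8,
                head_dim: int = 128, bytes_per_value: int = 2) -> float:
    """Keys and values for every layer and token; defaults match Llama3 8B at 16-bit."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * context_tokens / 1e9


def llama3_8b_budget(quant: str, context_tokens: int = 8192,
                     overhead_gb: float = 1.0) -> tuple[float, bool]:
    """Return (estimated GB needed, whether it fits in VRAM_GB)."""
    need = (weights_gb(LLAMA3_8B_PARAMS_B, BITS_PER_WEIGHT[quant])
            + kv_cache_gb(context_tokens) + overhead_gb)
    return need, need <= VRAM_GB


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        need, ok = llama3_8b_budget(quant)
        print(f"Llama3 8B {quant:7s} ~{need:5.1f} GB  {'fits' if ok else 'does not fit'}")
    print(f"Llama3 70B Q4_K_M weights alone: ~{weights_gb(LLAMA3_70B_PARAMS_B, 4.9):.0f} GB")
```

Under these assumptions Q8_0 still fits with an 8,192-token context, F16 needs roughly 18 GB and does not, and Llama3 70B needs over 40 GB for its Q4_K_M weights alone.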

3. Explore Alternative Models and Hardware If Necessary

If you need to run larger models, like Llama3 70B, you will need more memory than a single 12 GB card offers: consider GPUs with more VRAM, multiple GPUs, or cloud-based platforms.

Conclusion

The NVIDIA 4070 Ti 12GB proves to be a solid choice for running Llama3 8B in Q4KM quantization, delivering impressive speed for both text generation (82.21 tokens per second) and prompt processing (3653.07 tokens per second). Keep in mind that the lack of data for F16 and for larger models leaves some questions unanswered, and more comprehensive benchmarks are needed to fill those gaps.

However, the undeniable power of the 4070 Ti for Llama3 8B makes it a compelling option for developers who want to explore local LLMs without breaking the bank. Its performance, combined with the ability to choose among quantization levels, gives you a solid foundation for many exciting applications.

FAQ

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a machine learning model without sacrificing too much accuracy. It involves representing the model's weights with fewer bits, such as about 4 bits instead of the usual 16. This makes the model smaller and faster to load and run, but you might see a slight drop in accuracy. Imagine shrinking a giant book into a smaller, condensed version while keeping most of the information.

Q: Why is the performance for Llama3 8B in F16 missing?

A: The available benchmark data simply doesn't include it, and the most likely reason is memory: F16 stores each weight in 2 bytes, so Llama3 8B needs about 16 GB for its weights alone, more than the 4070 Ti's 12 GB of VRAM. Running it would require offloading part of the model to system RAM, which would make it much slower.

Q: What are the limitations of running Llama3 8B on the 4070 Ti?

A: The main hardware limitation is the 12 GB of VRAM. It comfortably holds Llama3 8B in Q4KM, but not in F16 (about 16 GB of weights), and not Llama3 70B at any common quantization level. The benchmark data also lacks F16 and 70B results, so we don't know exactly how the 4070 Ti would handle these configurations with part of the model offloaded. Ultimately, it's crucial to evaluate the model's performance for your specific use case and choose the right hardware accordingly.

Q: How can I get involved in benchmarking LLM models?

A: There are several ways to contribute. Check out open-source projects like llama.cpp (whose llama-bench tool reports both prompt processing and generation speed) and GPU-Benchmarks-on-LLM-Inference, and try running your own benchmarks on different devices. Share your results with the community to help build a comprehensive understanding of LLM performance.
