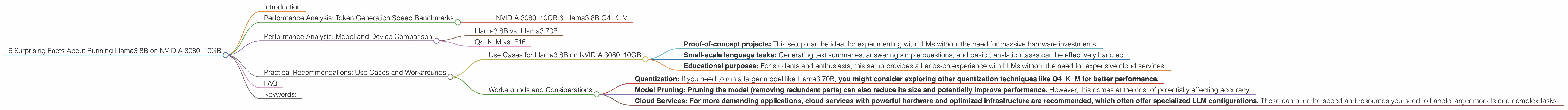

6 Surprising Facts About Running Llama3 8B on NVIDIA 3080 10GB

Introduction

The world of large language models (LLMs) is exploding, and with it, the quest for better performance and accessibility. But what does it really take to unleash the power of these AI marvels on your own machine? This article takes a deep dive into the performance of the Llama3 8B model when running on a popular graphics card – the NVIDIA 3080_10GB. We'll uncover some surprising facts and practical insights that will help you understand the capabilities and limitations of this setup.

Whether you're a developer tinkering with LLMs or a curious enthusiast, this journey will shed light on how these powerful models interact with your hardware.

Performance Analysis: Token Generation Speed Benchmarks

NVIDIA 3080_10GB & Llama3 8B Q4_K_M

The NVIDIA 3080_10GB, with its robust processing power, is a popular choice for gaming and demanding applications. How does it fare when tasked with generating text from the Llama3 8B model?

Let's get down to the numbers! On the NVIDIA 3080_10GB, Llama3 8B quantized with Q4_K_M (a 4-bit "k-quant" format from llama.cpp, in its medium variant) achieved a token generation speed of 106.4 tokens/second.

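What does this mean? Each token takes a little under 10 milliseconds to produce, which is comfortably faster than most people read and a solid result for an 8-billion-parameter model on a consumer card.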

Think of it this way: Imagine your LLM is like a chef whipping up a gourmet meal. The NVIDIA 3080_10GB is like the kitchen appliances, and the Q4_K_M quantization is like using a smaller, more efficient set of ingredients.

But it's important to understand that token generation speed is only one metric to consider. How quickly the model reads your prompt before it starts generating (prompt processing speed) will also affect your overall experience.
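To make the two metrics concrete, here is a minimal sketch that turns throughput into waiting time. The 106.4 tokens/second figure is the generation speed reported above; the prompt processing speed is not measured in this article, so the value used in the example is a hypothetical placeholder, and the function names are ours.

```python
# Generation speed reported in this article for Llama3 8B Q4_K_M on the 3080_10GB.
GENERATION_TOKENS_PER_SECOND = 106.4


def generation_seconds(output_tokens, tokens_per_second=GENERATION_TOKENS_PER_SECOND):
    """Time spent producing the reply, ignoring prompt processing."""
    return output_tokens / tokens_per_second


def total_seconds(prompt_tokens, output_tokens, prompt_tokens_per_second,
                  generation_tokens_per_second=GENERATION_TOKENS_PER_SECOND):
    """Rough end-to-end latency: read the prompt first, then generate the reply."""
    prompt_time = prompt_tokens / prompt_tokens_per_second
    return prompt_time + generation_seconds(output_tokens, generation_tokens_per_second)


if __name__ == "__main__":
    # HYPOTHETICAL placeholder, not a measured value: swap in your own benchmark.
    assumed_prompt_speed = 2000.0

    print(f"500-token reply: {generation_seconds(500):.2f} s of generation")
    print(f"1000-token prompt + 500-token reply: "
          f"{total_seconds(1000, 500, assumed_prompt_speed):.2f} s in total")
```

At the reported speed, a 500-token reply takes about 4.7 seconds to generate. The longer your prompts are, the more the prompt processing term matters.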

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B

The Llama3 8B model is significantly smaller than its larger sibling, the Llama3 70B. This size difference naturally affects performance. However, we don't have any performance data for Llama3 70B on this device.

This is because a model of that size does not fit in 10 GB of VRAM. Even at roughly 4 bits per weight, 70 billion parameters need on the order of 35 to 40 GB for the weights alone. It's a common scenario for developers and enthusiasts: the desire for bigger, better models often collides with the reality of hardware constraints.

Q4_K_M vs. F16

The Llama3 8B model can be stored at different precisions, including Q4_K_M and F16 (16-bit floating point, which is unquantized half precision). We only have data for Q4_K_M. The likely reason is memory: at 16 bits per weight, 8 billion parameters take about 16 GB, which exceeds the card's 10 GB of VRAM.

Think of it like this: If your LLM is a giant recipe book, Q4_K_M is like using a concise ingredient list, while F16 is like the full-detail version, which is more precise but larger and potentially slower.
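The sizing argument in this section comes down to simple arithmetic: parameters times bits per weight, divided by 8, gives bytes. The sketch below applies it to the three configurations discussed here. It assumes nominal parameter counts (8 and 70 billion) and roughly 4.8 bits per weight for a Q4_K_M-style format, which is an approximation; it also counts weights only, so the real footprint is higher once the context cache and runtime overhead are added.

```python
GIB = 1024 ** 3
VRAM_BYTES = 10 * GIB  # the 3080_10GB's memory, treated as 10 GiB


def weight_bytes(num_params, bits_per_weight):
    """Bytes needed to store the weights alone."""
    return num_params * bits_per_weight / 8


def fits_in_vram(num_params, bits_per_weight, vram_bytes=VRAM_BYTES):
    """True if the weights alone fit; a necessary condition, not a sufficient one."""
    return weight_bytes(num_params, bits_per_weight) <= vram_bytes


if __name__ == "__main__":
    # ASSUMPTIONS: nominal parameter counts and an approximate 4.8 bits/weight.
    configs = [
        ("Llama3 8B, ~4.8 bits/weight", 8e9, 4.8),
        ("Llama3 8B, F16", 8e9, 16),
        ("Llama3 70B, ~4.8 bits/weight", 70e9, 4.8),
    ]
    for name, params, bits in configs:
        size_gib = weight_bytes(params, bits) / GIB
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{name}: {size_gib:.1f} GiB of weights, {verdict} in 10 GiB")
```

Under these assumptions, only the 4-bit 8B model fits, which matches the data available for this card.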

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 3080_10GB

Based on the observed performance, the NVIDIA 3080_10GB with Llama3 8B is a suitable choice for the following applications:

- Proof-of-concept projects: This setup can be ideal for experimenting with LLMs without the need for massive hardware investments.

- Small-scale language tasks: Generating text summaries, answering simple questions, and basic translation tasks can be effectively handled.

- Educational purposes: For students and enthusiasts, this setup provides a hands-on experience with LLMs without the need for expensive cloud services.

Workarounds and Considerations

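- Quantization: Q4_K_M is what lets Llama3 8B fit comfortably on this card. A larger model like Llama3 70B will not fit in 10 GB even at 4 bits, so running it means offloading most of its layers to system RAM (at a large speed cost) or moving to hardware with more memory.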

- Model Pruning: Pruning the model (removing redundant parts) can also reduce its size and potentially improve performance. However, this comes at the cost of potentially affecting accuracy.

- Cloud Services: For more demanding applications, consider cloud services, which pair more powerful hardware with infrastructure tuned for LLM workloads. These can provide the speed and memory you need to handle larger models and complex tasks.

FAQ

Q: What is quantization and why is it important?

A: Quantization is a technique used to reduce the size of a model by representing its parameters (the "knowledge" it stores) using fewer bits. For example, instead of using 32 bits to store a number, you can use 4 bits. This makes the model smaller and faster, but it can also slightly reduce accuracy.
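As a toy illustration of the idea, the sketch below squeezes a handful of floating-point weights into 4-bit integer codes that share one scale factor, then restores them. This is a simplified scheme chosen for clarity, not the actual Q4_K_M format, and the sample weights are made up.

```python
def quantize_4bit(values):
    """Map floats to integer codes in [-7, 7] that share a single scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_4bit(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.64]
codes, scale = quantize_4bit(weights)
restored = dequantize_4bit(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

if __name__ == "__main__":
    print("codes:   ", codes)
    print("restored:", [f"{r:.3f}" for r in restored])
    print(f"largest rounding error: {max_error:.4f} (at most half a step, {scale / 2:.4f})")
```

Each weight now needs 4 bits plus a share of the scale, and the rounding error is the "slight reduction in accuracy" mentioned above.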

Q: What are some other devices that can run Llama3 8B?

A: There are a number of devices that could potentially run Llama3 8B. Popular choices include other NVIDIA GPUs such as the RTX 4090, AMD Radeon RX 7900 XTX, Apple M1 Pro/Max, and even certain CPUs with integrated graphics.

Q: How can I improve the performance of my LLM on my current device?

A: In addition to the techniques mentioned earlier, you can experiment with different libraries (e.g., llama.cpp, OpenAI's Triton) and fine-tune your model for your specific use case.
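If you do compare libraries, measure them the same way each time. Below is a minimal timing sketch; `generate` stands for whatever function your chosen library exposes, and `fake_generate` is a hypothetical stand-in so the example runs without a model or GPU.

```python
import time


def measure_tokens_per_second(generate, prompt, max_tokens, clock=time.perf_counter):
    """Time one call to generate(prompt, max_tokens) and return tokens per second.

    `generate` must return the sequence of generated tokens.
    """
    start = clock()
    tokens = generate(prompt, max_tokens)
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return len(tokens) / elapsed


def fake_generate(prompt, max_tokens):
    """HYPOTHETICAL backend: sleeps briefly, then returns dummy tokens."""
    time.sleep(0.05)
    return ["tok"] * max_tokens


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate, "Hello", 32)
    print(f"{rate:.1f} tokens/second")
```

For a fair comparison, use the same prompt, the same output length, and several runs per library, since the first call often includes one-time loading costs.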

Keywords:

Llama3, NVIDIA 3080_10GB, LLM, Large Language Model, Token Generation Speed, Performance, Quantization, Q4_K_M, F16, NLP, Natural Language Processing, AI, Artificial Intelligence, Machine Learning, GPU, Graphics Processing Unit, Deep Learning, Model Pruning, Cloud Services, Use Cases, Workarounds, Practical Recommendations.