6 Surprising Facts About Running Llama3 8B on Apple M1

Introduction

The world of large language models (LLMs) is buzzing with excitement, and one of the hottest topics is running these models locally on your own devices. While cloud-based LLMs offer convenience, running them locally opens up possibilities for privacy, customization, and offline use. But can the Apple M1 chip, known for its power and efficiency, handle the demands of an LLM like Llama3 8B? Let's dive in to discover some surprising facts.

Performance Analysis: Token Generation Speed Benchmarks

The real-world performance of an LLM depends heavily on how quickly it handles tokens, the basic units of text the model reads and writes. Let's examine how Llama3 8B fares on the Apple M1 chip using two key metrics: processing speed (how fast the model reads the prompt) and generation speed (how fast it produces new text), both measured in tokens per second.

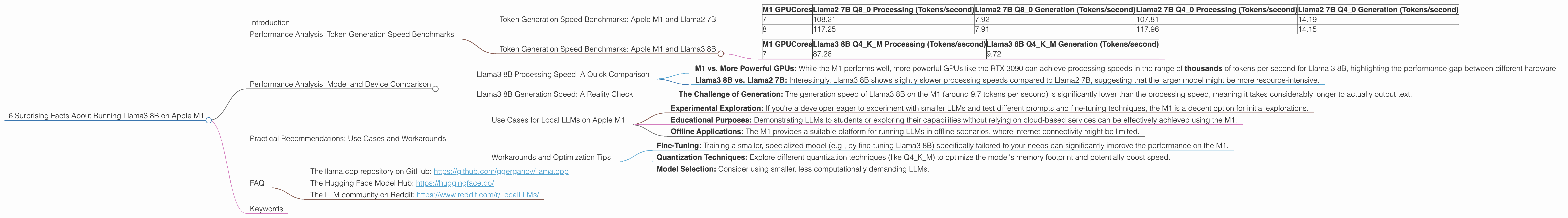

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

To get a baseline, we'll first look at the performance of Llama2 7B, a popular LLM, on the M1.

| M1 GPU Cores | Llama2 7B Q8_0 Processing (tokens/second) | Llama2 7B Q8_0 Generation (tokens/second) | Llama2 7B Q4_0 Processing (tokens/second) | Llama2 7B Q4_0 Generation (tokens/second) |
|---|---|---|---|---|
| 7 | 108.21 | 7.92 | 107.81 | 14.19 |
| 8 | 117.25 | 7.91 | 117.96 | 14.15 |

Key Observations:

- Q8_0 Quantization: Llama2 7B with Q8_0 quantization (roughly 8 bits per weight) processes prompts at about 108 to 117 tokens per second, but generates only about 7.9 tokens per second.

- Q4_0 Quantization: Switching to Q4_0 quantization (roughly 4 bits per weight) raises generation speed to about 14 tokens per second, roughly 1.8 times the Q8_0 figure, while processing speed stays essentially unchanged.
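To make the comparison concrete, here is a minimal Python sketch that computes the Q4_0-to-Q8_0 speed ratio from the table above. The dictionary layout and the helper name `q4_over_q8` are illustrative choices, not part of any benchmark tool.

```python
# Benchmark figures copied from the Llama2 7B table above (tokens/second).
LLAMA2_7B = {
    7: {
        "Q8_0": {"processing": 108.21, "generation": 7.92},
        "Q4_0": {"processing": 107.81, "generation": 14.19},
    },
    8: {
        "Q8_0": {"processing": 117.25, "generation": 7.91},
        "Q4_0": {"processing": 117.96, "generation": 14.15},
    },
}


def q4_over_q8(cores, metric):
    """Return the Q4_0 speed divided by the Q8_0 speed for one table row."""
    row = LLAMA2_7B[cores]
    return row["Q4_0"][metric] / row["Q8_0"][metric]


for cores in sorted(LLAMA2_7B):
    for metric in ("processing", "generation"):
        ratio = q4_over_q8(cores, metric)
        print(f"{cores} GPU cores, {metric}: Q4_0 runs at {ratio:.2f}x the Q8_0 speed")
```

The generation ratio comes out near 1.8 on both core counts, while the processing ratio stays close to 1.0, which is why quantization mostly shows up in how fast text appears rather than in how fast the prompt is read.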

Token Generation Speed Benchmarks: Apple M1 and Llama3 8B

Now, let's get to the heart of the matter - Llama3 8B running on the Apple M1. We'll focus on Q4_K_M quantization, a technique that helps to optimize performance on lower-end devices like the M1.

| M1 GPU Cores | Llama3 8B Q4_K_M Processing (tokens/second) | Llama3 8B Q4_K_M Generation (tokens/second) |
|---|---|---|
| 7 | 87.26 | 9.72 |

Key Observations:

- Q4_K_M Quantization: Llama3 8B with Q4_K_M quantization processes prompts at about 87 tokens per second, roughly 19% slower than Llama2 7B on the same 7-core M1. Generation reaches about 9.7 tokens per second, which falls between Llama2 7B's Q8_0 (7.9) and Q4_0 (14.2) results.

Performance Analysis: Model and Device Comparison

To better understand these results, let's compare Llama3 8B with other LLMs and devices.

Llama3 8B Processing Speed: A Quick Comparison

- M1 vs. More Powerful GPUs: While the M1 performs well, more powerful GPUs like the RTX 3090 can achieve processing speeds in the range of thousands of tokens per second for Llama3 8B, highlighting the performance gap between different hardware.

- Llama3 8B vs. Llama2 7B: Llama3 8B processes prompts about 19% slower than Llama2 7B on the 7-core M1 (87.26 vs. 107.81 tokens per second), which suggests that the larger model is more resource-intensive. Keep in mind that the two models were measured with different quantization formats (Q4_K_M vs. Q4_0), so this is not a strictly like-for-like comparison.
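If you want to check the gap between the two models yourself, the following small sketch computes the relative slowdown from the 7-core figures in the tables above. The function name `percent_slower` is just an illustrative helper.

```python
def percent_slower(baseline_tps, candidate_tps):
    """Return how much slower the candidate is than the baseline, in percent."""
    return (baseline_tps - candidate_tps) / baseline_tps * 100.0


# 7-GPU-core figures from the tables above (tokens/second).
# Note: Llama2 7B was measured with Q4_0 and Llama3 8B with Q4_K_M,
# so the comparison mixes model size and quantization format.
llama2_q4_0 = {"processing": 107.81, "generation": 14.19}
llama3_q4_k_m = {"processing": 87.26, "generation": 9.72}

for metric in ("processing", "generation"):
    gap = percent_slower(llama2_q4_0[metric], llama3_q4_k_m[metric])
    print(f"{metric}: Llama3 8B is {gap:.1f}% slower than Llama2 7B")
```

On these numbers the gap is larger for generation than for processing, so the newer model costs you more in output speed than in prompt reading.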

Llama3 8B Generation Speed: A Reality Check

- The Challenge of Generation: Llama3 8B generates about 9.7 tokens per second on the M1, roughly one ninth of its processing speed (about 87 tokens per second), so producing a response takes far longer than reading the prompt.
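To get a feel for what these rates mean in practice, here is a rough latency estimate in Python. It assumes the total time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed, and it ignores model load time and any other overhead. The 500-token prompt and 200-token reply are hypothetical sizes chosen for illustration.

```python
def estimate_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate (prompt-reading time, text-generation time) in seconds."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time, generation_time


# Llama3 8B Q4_K_M on a 7-core M1, from the benchmark table (tokens/second).
PROCESSING_TPS = 87.26
GENERATION_TPS = 9.72

# Hypothetical request size (an assumption, not a measured workload).
prompt_time, generation_time = estimate_seconds(500, 200, PROCESSING_TPS, GENERATION_TPS)

print(f"Reading the prompt:  {prompt_time:.1f} s")
print(f"Generating the reply: {generation_time:.1f} s")
print(f"Total:                {prompt_time + generation_time:.1f} s")
```

Even though the reply is less than half the length of the prompt in this example, generation accounts for most of the wait.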

Practical Recommendations: Use Cases and Workarounds

While the Apple M1 might not be the ideal platform for running LLMs like Llama3 8B at lightning speed, it still has practical applications and offers some interesting opportunities.

Use Cases for Local LLMs on Apple M1

- Experimental Exploration: If you're a developer eager to experiment with smaller LLMs and test different prompts and fine-tuning techniques, the M1 is a decent option for initial explorations.

- Educational Purposes: Demonstrating LLMs to students or exploring their capabilities without relying on cloud-based services can be effectively achieved using the M1.

- Offline Applications: The M1 provides a suitable platform for running LLMs in offline scenarios, where internet connectivity might be limited.

Workarounds and Optimization Tips

- Fine-Tuning: Fine-tuning does not make a model faster by itself, but a smaller model fine-tuned for your specific task may reach acceptable quality while running considerably faster on the M1 than Llama3 8B.

- Quantization Techniques: Explore different quantization formats (like Q4_K_M) to reduce the model's memory footprint and potentially boost generation speed.

- Model Selection: Consider using smaller, less computationally demanding LLMs.
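When weighing these options, a back-of-the-envelope memory estimate can help. The sketch below multiplies a parameter count by a nominal number of bits per weight. Both the round 8 billion parameter figure and the nominal bit widths are assumptions: real quantized model files are somewhat larger because the formats also store scaling factors and may keep some tensors at higher precision.

```python
def approx_weight_gb(num_params, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes), weights only."""
    return num_params * bits_per_weight / 8 / 1e9


# Assumption: a nominal 8 billion parameters for an "8B" model.
NUM_PARAMS = 8e9

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    size_gb = approx_weight_gb(NUM_PARAMS, bits)
    print(f"{label} weights: about {size_gb:.1f} GB")
```

Treat the results as lower bounds, and compare them against the memory your machine actually has free, since the operating system and the model's working buffers need room as well.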

FAQ

Q: What is an LLM?

A: An LLM, or large language model, is a type of artificial intelligence that can understand and generate human-like text. It's trained on massive amounts of data to learn the nuances of language.

Q: What is quantization?

A: Quantization is a technique used to reduce the size of an LLM by using fewer bits to represent its weights. This can make the model faster and more efficient to run on devices with limited memory.
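For the curious, here is a toy illustration of the idea in Python. It uses simple symmetric round-to-nearest quantization with one scale per block of values. This is a simplified sketch for intuition only, not the exact scheme behind formats like Q8_0, Q4_0, or Q4_K_M, and the sample weights are made up.

```python
def quantize_block(values, bits):
    """Map floats to signed integer codes that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Made-up example weights (not taken from any real model).
weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.45, 0.02]

for bits in (8, 4):
    scale, codes = quantize_block(weights, bits)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit codes: {codes}")
    print(f"{bits}-bit worst-case error: {worst:.4f}")
```

Fewer bits means fewer distinct codes, so the restored values drift further from the originals. That loss of precision is the trade-off you accept in exchange for a smaller, faster model.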

Q: Is the Apple M1 suitable for running LLMs?

A: It depends on your expectations. In the benchmarks above, a 7-core M1 generates about 9.7 tokens per second with Llama3 8B at Q4_K_M, which is workable for experimentation but slow for long outputs. For smaller models and experimental purposes, the M1 remains a suitable choice.

Q: Can I run LLMs on other devices?

A: Yes! Many devices, including Macs, PCs, and even Raspberry Pis, can run LLMs, but the performance varies depending on the device's capabilities and the size of the model.

Q: Where can I find more information about running local LLMs?

A: You can find resources and communities online dedicated to this topic. Great starting points are:

- The llama.cpp repository on GitHub: https://github.com/ggerganov/llama.cpp

- The Hugging Face Model Hub: https://huggingface.co/

- The LLM community on Reddit: https://www.reddit.com/r/LocalLLMs/
