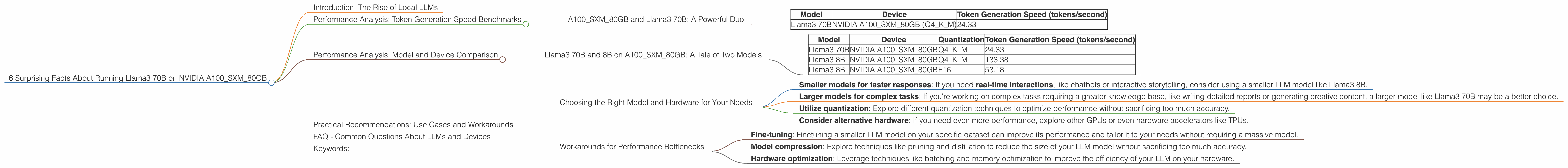

6 Surprising Facts About Running Llama3 70B on NVIDIA A100 SXM 80GB

Introduction: The Rise of Local LLMs

The world of Large Language Models (LLMs) is exploding, with models like ChatGPT and Bard capturing headlines and changing the way we interact with technology. However, these LLMs usually run on cloud-based infrastructure, which raises concerns about latency, privacy, and cost. This is where local LLMs come in: they let you run these powerful AI models on your own hardware, offering a compelling alternative.

To truly understand the potential of local LLMs, you need to look at their performance characteristics. This article dives deep into the performance of the Llama3 70B model on a powerful NVIDIA A100 SXM 80GB GPU. Get ready to discover some surprising facts about this combination that might change your perception of running powerful LLMs locally.

Performance Analysis: Token Generation Speed Benchmarks

A100 SXM 80GB and Llama3 70B: A Powerful Duo

Let's start with the heart of our investigation: token generation speed. This metric measures how many new tokens (words or sub-words) the model produces per second while it generates a response. Faster token generation means smoother and more responsive interactions with your LLM.

| Model | Device | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|---|
| Llama3 70B | NVIDIA A100 SXM 80GB | Q4_K_M | 24.33 |
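To put 24.33 tokens/second in practical terms, here is a minimal sketch that converts a decode speed into the time needed to produce a response. It assumes a constant generation speed and ignores prompt processing, so it gives a lower bound. The 500-token answer length is an illustrative assumption.

```python
# Decode speed from the benchmark table (tokens/second).
LLAMA3_70B_Q4_TOK_PER_S = 24.33


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a constant decode speed.

    Ignores prompt processing and startup overhead, so real responses take longer.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# A ~500-token answer is an assumed, illustrative length.
print(f"{generation_seconds(500, LLAMA3_70B_Q4_TOK_PER_S):.1f} s")  # prints "20.6 s"
```

At this speed, a long answer takes around 20 seconds to stream out in full. That is usable for interactive chat, though far from instant.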

A100 SXM 80GB: This powerhouse GPU packs 80 GB of HBM2e memory and about 2 TB/s (2,039 GB/s) of peak memory bandwidth. That high bandwidth is what makes it well suited to running large neural networks.

Llama3 70B: A major player in the LLM world, Meta's Llama3 70B has 70 billion parameters, making it one of the most capable openly available language models.
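A quick way to see why 70 billion parameters is demanding, even for an 80 GB GPU, is to estimate how much memory the weights alone occupy at different precisions. This is a rough sketch. The 4.85 bits/weight average for Q4_K_M is an assumed approximation, and the estimate leaves out the KV cache, activations, and runtime overhead.

```python
GPU_MEMORY_GB = 80  # A100 SXM 80GB


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Excludes the KV cache, activations, and runtime overhead, which also need room.
    """
    return num_params * bits_per_weight / 8 / 1e9


NUM_PARAMS = 70e9  # nominal parameter count of Llama3 70B

# 4.85 bits/weight is an assumed average for Q4_K_M, not an exact figure.
for label, bits in [("F16", 16.0), ("Q4_K_M", 4.85)]:
    gb = weight_memory_gb(NUM_PARAMS, bits)
    verdict = "fits" if gb <= GPU_MEMORY_GB else "does not fit"
    print(f"{label}: ~{gb:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

In 16-bit precision the weights alone come to roughly 140 GB, which is too large for a single A100 80GB. The 4-bit quantized version needs only about 42 GB.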

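Q4_K_M Quantization: Q4_K_M is a 4-bit quantization format from llama.cpp. Quantization stores the model's weights at lower precision (here roughly 4 to 5 bits per weight instead of 16), which shrinks the model substantially at the cost of a small loss in accuracy. Quantization is like compressing a file, but for neural networks, and the compression is slightly lossy. The payoff is faster processing and lower memory usage.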
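To make the idea concrete, here is a simplified sketch of block-wise 4-bit quantization. It is not the actual Q4_K_M algorithm, which is more elaborate: it uses nested blocks and per-block offsets, and keeps some tensors at higher precision. It does show the core mechanism: each block of weights shares one scale, and every weight is stored as a small integer. The block size of 32 and the sine-wave "weights" are illustrative assumptions.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # assumed block size; each block of weights gets its own scale


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Symmetric 4-bit quantization of one block: floats -> small ints plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round each weight to the nearest level; clamp to the signed 4-bit range [-8, 7].
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def quantize(weights: List[float]) -> List[Tuple[float, List[int]]]:
    """Split weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: List[Tuple[float, List[int]]]) -> List[float]:
    """Rebuild approximate float weights: value = scale * integer level."""
    return [scale * v for scale, q in blocks for v in q]


weights = [0.1 * math.sin(i) for i in range(64)]  # stand-in for real model weights
blocks = quantize(weights)
restored = dequantize(blocks)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"{len(blocks)} blocks, max abs error {max_err:.4f}")
```

With 4 bits per weight plus one 16-bit scale per 32 weights, this toy scheme averages 4.5 bits per weight, versus 16 for F16. Rounding error per weight is at most half of its block's scale, which is where the small accuracy loss comes from.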

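The Results: At 24.33 tokens per second, the Llama3 70B model generates text faster than most people read, but even on a high-end GPU like the A100 SXM 80GB it doesn't reach blazing-fast speeds. Generating each token means reading essentially all of the model's weights (over 40 GB for the 4-bit quantized model) from GPU memory, so speed is limited largely by memory bandwidth. This highlights the demands of large LLMs, even on powerful hardware.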

Analogy: Think of it like trying to fit a giant elephant into a small car. Even with a powerful car, you still need to make adjustments to accommodate the elephant's size.
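A back-of-envelope estimate helps explain the result. Assume that single-stream generation is bound by memory bandwidth, so each new token requires reading all of the weights once. Under that assumption, bandwidth divided by weight size gives a ceiling on tokens per second. The 2,039 GB/s figure is NVIDIA's published peak for the A100 SXM 80GB. The 4.85 bits/weight average for Q4_K_M is an assumption.

```python
A100_SXM_80GB_BANDWIDTH_GBPS = 2039  # peak HBM2e bandwidth, GB/s


def max_decode_tokens_per_s(weight_gb: float, bandwidth_gbps: float) -> float:
    """Rough ceiling on single-stream decode speed.

    Assumes every generated token streams all weights from GPU memory once and
    that nothing else costs time, so real speeds will be lower.
    """
    return bandwidth_gbps / weight_gb


weights_gb = 70e9 * 4.85 / 8 / 1e9  # Llama3 70B at an assumed ~4.85 bits/weight
ceiling = max_decode_tokens_per_s(weights_gb, A100_SXM_80GB_BANDWIDTH_GBPS)
measured = 24.33  # from the benchmark table
print(f"ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
      f"({measured / ceiling:.0%} of ceiling)")
```

The ceiling comes out at roughly 48 tokens/s, about twice the measured 24.33. Real kernels rarely reach peak bandwidth, and other overheads eat into the rest, so the measured number is plausible for a model this size.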

Performance Analysis: Model and Device Comparison

Llama3 70B and 8B on A100 SXM 80GB: A Tale of Two Models

To better understand the performance landscape, let's compare Llama3 70B with a smaller model, Llama3 8B, both running on the A100 SXM 80GB.

| Model | Device | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|---|
| Llama3 70B | NVIDIA A100 SXM 80GB | Q4_K_M | 24.33 |
| Llama3 8B | NVIDIA A100 SXM 80GB | Q4_K_M | 133.38 |
| Llama3 8B | NVIDIA A100 SXM 80GB | F16 | 53.18 |

Key Takeaways:

- Smaller models, faster speeds: On the same GPU and with the same quantization, the Llama3 8B model generates tokens about 5.5 times faster than the 70B model (133.38 vs. 24.33 tokens/second). This underscores the trade-off between model size and speed.

- Quantization matters: Running the Llama3 8B model with Q4_K_M quantization gives about 2.5 times the token generation speed of running it unquantized in F16 (16-bit floating point). Quantization is a powerful tool for boosting performance without sacrificing much accuracy!
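The speed ratios behind these takeaways can be recomputed directly from the measurements in the table. This small sketch does only that arithmetic.

```python
# Measured decode speeds from the comparison table (tokens/second).
results = {
    ("Llama3 70B", "Q4_K_M"): 24.33,
    ("Llama3 8B", "Q4_K_M"): 133.38,
    ("Llama3 8B", "F16"): 53.18,
}


def speedup(fast: tuple, slow: tuple) -> float:
    """How many times faster the first configuration is than the second."""
    return results[fast] / results[slow]


size_ratio = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 70B", "Q4_K_M"))
quant_ratio = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 8B", "F16"))
print(f"8B vs 70B (both Q4_K_M): {size_ratio:.2f}x")  # 5.48x
print(f"8B Q4_K_M vs 8B F16:     {quant_ratio:.2f}x")  # 2.51x
```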

Analogy: Think of it like comparing a race car with a semi-truck. The race car might be more agile and faster on a track, while the semi-truck can carry a lot more cargo. It's all about choosing the right tool for the job.

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model and Hardware for Your Needs

The performance data we've explored highlights the importance of considering your specific use case and requirements when choosing an LLM and hardware configuration.

Here are some practical recommendations:

- Smaller models for faster responses: If you need real-time interactions, like chatbots or interactive storytelling, consider using a smaller model like Llama3 8B.

- Larger models for complex tasks: If you're working on complex tasks requiring a greater knowledge base, like writing detailed reports or generating creative content, a larger model like Llama3 70B may be a better choice.

- Utilize quantization: Explore different quantization techniques to optimize performance without sacrificing too much accuracy.

- Consider alternative hardware: If you need even more performance, explore other GPUs or even hardware accelerators like TPUs.

Workarounds for Performance Bottlenecks

Sometimes, even with the best hardware, you might encounter performance bottlenecks. Here are some workarounds:

- Fine-tuning: Fine-tuning a smaller model on your specific dataset can improve its performance on your task and tailor it to your needs without requiring a massive model.

- Model compression: Explore techniques like pruning and distillation to reduce the size of your model without sacrificing too much accuracy.

- Hardware optimization: Leverage techniques like batching (processing several requests together) and memory optimization to use your hardware more efficiently.
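Pruning, one of the compression techniques mentioned above, can be illustrated with unstructured magnitude pruning, one of its simplest variants: zero out the weights with the smallest absolute values. This is a toy sketch on a plain Python list. In practice the zeros only save memory or time when the runtime uses sparse storage or sparse kernels, and pruned models are usually fine-tuned afterward to recover accuracy. The function name and example weights are hypothetical.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest absolute values."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest magnitude.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


w = [0.5, -0.01, 0.2, -0.8, 0.03, 0.1]
print(magnitude_prune(w, 0.5))  # [0.5, 0.0, 0.2, -0.8, 0.0, 0.0]
```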

FAQ - Common Questions About LLMs and Devices

Q: What are local LLMs? A: Local LLMs are Large Language Models that run directly on your own hardware, like your computer or server, instead of relying on cloud services.

Q: What are the benefits of running LLMs locally? A: Running LLMs locally offers benefits like lower latency (no network round-trips), improved privacy because your data stays on your own hardware, and potentially lower costs.

Q: What are the challenges of running LLMs locally? A: Running LLMs locally can require powerful hardware, specialized knowledge, and potentially more resources for maintenance.

Q: What is quantization? A: Quantization is a technique that reduces the size of an LLM by storing its weights at lower precision, while largely preserving its accuracy. Think of it like compressing a file, but for a neural network. This enables faster processing and less memory usage.

Q: How do I choose the right hardware for my LLM? A: Factors to consider include how much memory your model needs at your chosen precision (it must fit in GPU memory), the tasks you want to perform, and your budget. Powerful GPUs like those from NVIDIA are popular choices for LLMs.
