6 Surprising Facts About Running Llama3 70B on NVIDIA 4090 24GB x2

The Rise of Local LLMs: A Revolution in Processing Power

The world of artificial intelligence has been captivated by Large Language Models (LLMs). These models, capable of generating human-like text, translating languages, and writing many kinds of creative content, have transformed whole industries. But running them usually requires servers with large amounts of GPU memory and compute. That's where local LLMs come in. Running an LLM on your own hardware opens up a world of possibilities, from personalized AI assistants to powerful research tools. This article dives deep into the performance of Llama3 70B on a dual NVIDIA 4090 24GB setup, revealing insights that might surprise you.

Performance Analysis: Token Generation Speed Benchmarks

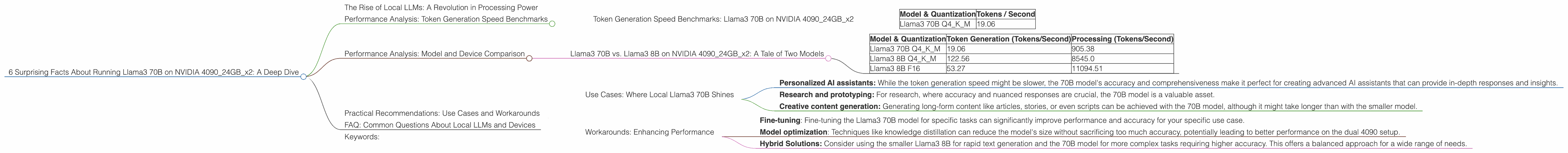

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 4090 24GB x2

Let's cut to the chase. How fast can the Llama3 70B model generate text on a dual NVIDIA 4090 24GB setup? Here are the token generation speed benchmarks for Llama3 70B:

| Model & Quantization | Token Generation (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 19.06 |

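Q4_K_M: This is one of llama.cpp's "k-quant" formats (used in GGUF model files). Weights are stored in small blocks at roughly 4 bits each with per-block scale factors, and the "M" (medium) variant keeps some of the more sensitive tensors at higher precision, for an average of a little under 5 bits per weight. Compared with 16-bit weights, this cuts the memory footprint by more than a factor of three and speeds up token generation, at the cost of a small loss in accuracy.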

Important Notes:

- No Llama3 70B F16 (16-bit half-precision floating point) result is available for this setup, and the reason is simple arithmetic: at 2 bytes per weight, 70 billion parameters need about 140 GB for the weights alone, nearly three times the 48 GB of combined VRAM on two 24 GB cards.

- Don't be fooled by the seemingly low token generation speed of 19.06 tokens per second. While it might seem slow compared to smaller models, it's still a remarkable feat considering we are dealing with a 70 billion parameter model running locally.

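- Think of it this way: a token is a word or a fragment of a word. Using the common rule of thumb of about 0.75 English words per token, 19 tokens per second is roughly 14 words every second, well above typical human reading speed. Over time, that adds up to a flood of text!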
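The F16 argument above is easy to check. The sketch below estimates weight memory only (no KV cache, activations, or runtime overhead) from a parameter count and a bits-per-weight figure. The 70e9 parameter count is the nominal model size, and the ~4.85 bits per weight used for Q4_K_M is an approximate average, an assumption rather than an exact property of the format.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 2 * 24  # two 24 GB cards
N_PARAMS = 70e9   # nominal Llama3 70B parameter count

# Bits per weight: F16 is exact; the Q4_K_M figure is an approximate average (assumption).
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}

for name, bits in FORMATS.items():
    need = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if need < VRAM_GB else "does not fit"
    print(f"{name}: ~{need:.1f} GB of weights, {verdict} in {VRAM_GB} GB of VRAM")
```

F16 comes out at about 140 GB and Q4_K_M at about 42 GB, which leaves only a few GB of the 48 GB for the KV cache and runtime buffers.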

Performance Analysis: Model and Device Comparison

Llama3 70B vs. Llama3 8B on NVIDIA 4090 24GB x2: A Tale of Two Models

Here's a comparison between the performance of Llama3 70B and Llama3 8B on our dual NVIDIA 4090 24GB setup:

| Model & Quantization | Token Generation (tokens/second) | Prompt Processing (tokens/second) |
|---|---|---|
| Llama3 70B Q4_K_M | 19.06 | 905.38 |
| Llama3 8B Q4_K_M | 122.56 | 8545.0 |
| Llama3 8B F16 | 53.27 | 11094.51 |

Key Takeaways:

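- The smaller Llama3 8B model significantly outperforms the Llama3 70B model: at the same Q4_K_M quantization it generates tokens about 6.4 times faster and processes prompts about 9.4 times faster.

- Precision affects the two phases differently. With F16 weights, the 8B model generates tokens less than half as fast as with Q4_K_M (53.27 vs 122.56 tokens/second), because it has about three times as many bytes to read per token, but it processes prompts faster (11094.51 vs 8545.0 tokens/second), plausibly because compute-bound prompt processing avoids the overhead of dequantizing 4-bit weights.

- The 70B model's prompt processing speed (905.38 tokens/second) is far higher than its generation speed. This is expected rather than a sign of special efficiency: during prompt processing all prompt tokens are evaluated together as large matrix multiplications, so the work is limited mainly by compute, while during generation tokens are produced one at a time and every weight must be read from VRAM for each one, so speed is limited by memory bandwidth.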
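A back-of-envelope model makes the generation/processing gap concrete. If each generated token requires reading (roughly) every weight from VRAM once, memory bandwidth divided by model size gives an upper bound on tokens per second. The sketch assumes the published ~1008 GB/s peak bandwidth of one RTX 4090, approximate GGUF file sizes (not stated in the benchmark), and a llama.cpp-style layer split in which the two cards take turns on each token, so the effective bandwidth is about that of a single card.

```python
# Assumed inputs, not part of the benchmark data:
BANDWIDTH_GB_S = 1008.0  # peak memory bandwidth of one RTX 4090, GB/s
MODEL_SIZE_GB = {        # approximate GGUF file sizes, GB
    "Llama3 70B Q4_K_M": 42.5,
    "Llama3 8B Q4_K_M": 4.9,
    "Llama3 8B F16": 16.1,
}

# Measured token generation speeds from the table above, tokens/second.
MEASURED_TG = {
    "Llama3 70B Q4_K_M": 19.06,
    "Llama3 8B Q4_K_M": 122.56,
    "Llama3 8B F16": 53.27,
}


def generation_ceiling(model_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s if every weight is read once per generated token.

    With a layer split, the GPUs process their layers one after the other,
    so the effective bandwidth is roughly that of a single card.
    """
    return bandwidth_gb_s / model_gb


for model, size in MODEL_SIZE_GB.items():
    ceiling = generation_ceiling(size)
    measured = MEASURED_TG[model]
    print(f"{model}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the 70B model reaches about 80% of its bandwidth ceiling, which suggests its generation speed is set by how fast weights can be streamed from memory rather than by arithmetic. Prompt processing reuses each weight read across many tokens in one batch, so it is not bound in the same way.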

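Why the performance difference? For a dense model like Llama 3, generating each token means reading every weight and doing roughly two floating-point operations per parameter, so the cost grows with parameter count. The 70B model has almost nine times as many parameters as the 8B model, and in this benchmark it generates tokens about 6.4 times more slowly.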

Think of it like this: Running a marathon is a challenging feat, but running a 5K is much faster. Similar to a 5K, the smaller Llama3 8B model can generate text more quickly. However, the 70B model, like a marathon, might take longer to finish a task but is still a remarkable achievement in terms of its complexity and capability.

Practical Recommendations: Use Cases and Workarounds

Use Cases: Where Local Llama3 70B Shines

- Personalized AI assistants: Token generation is slower, but the 70B model's accuracy and depth make it well suited to advanced AI assistants that provide in-depth responses and insights.

- Research and prototyping: For research, where accuracy and nuanced responses are crucial, the 70B model is a valuable asset.

- Creative content generation: Generating long-form content like articles, stories, or even scripts can be achieved with the 70B model, although it might take longer than with the smaller model.
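To put "it might take longer" in numbers, the sketch below converts a target word count into an approximate token count and then into wall-clock time at the measured generation speeds. The 0.75 words-per-token ratio is a rough rule of thumb for English text, an assumption rather than a measured property of the Llama 3 tokenizer, and prompt processing time is ignored.

```python
WORDS_PER_TOKEN = 0.75  # rough rule of thumb for English (assumption)

# Measured token generation speeds on the dual 4090 setup, tokens/second.
SPEEDS = {"Llama3 70B Q4_K_M": 19.06, "Llama3 8B Q4_K_M": 122.56}


def generation_seconds(words: int, tokens_per_second: float) -> float:
    """Approximate time to generate `words` words of English output."""
    tokens = words / WORDS_PER_TOKEN
    return tokens / tokens_per_second


article_words = 1500  # e.g. a medium-length article
for model, speed in SPEEDS.items():
    secs = generation_seconds(article_words, speed)
    print(f"{model}: ~{secs:.0f} s for {article_words} words")
```

At these rates, a 1,500-word article takes roughly 105 seconds (about 1 min 45 s) on the 70B model and roughly 16 seconds on the 8B model.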

Workarounds: Enhancing Performance

- Fine-tuning: Fine-tuning the Llama3 70B model for specific tasks can significantly improve its accuracy on your use case. It does not make generation faster, since the model stays the same size.

- Model optimization: Knowledge distillation, which trains a smaller "student" model to imitate the 70B model, can produce a model that runs much faster on the dual 4090 setup while giving up only part of the accuracy.

- Hybrid Solutions: Consider using the smaller Llama3 8B for rapid text generation and the 70B model for more complex tasks requiring higher accuracy. This offers a balanced approach for a wide range of needs.
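A hybrid setup needs some rule for deciding which model gets a request. The sketch below is a deliberately simple, hypothetical router: the `Request` fields, the model identifiers, and the keyword list are illustrative assumptions, not a recommended policy, and a real system would plug in its own heuristics or a classifier.

```python
from dataclasses import dataclass

FAST_MODEL = "llama3-8b"    # hypothetical model identifiers
STRONG_MODEL = "llama3-70b"

# Illustrative substrings hinting that a request needs deeper reasoning (assumption).
COMPLEX_HINTS = ("analyze", "explain why", "compare", "step by step")


@dataclass
class Request:
    prompt: str
    needs_high_accuracy: bool = False  # caller can force the larger model


def choose_model(req: Request) -> str:
    """Route quick, simple requests to the 8B model and harder ones to the 70B model."""
    if req.needs_high_accuracy:
        return STRONG_MODEL
    text = req.prompt.lower()
    if any(hint in text for hint in COMPLEX_HINTS):
        return STRONG_MODEL
    return FAST_MODEL


print(choose_model(Request("Summarize this email in one line.")))
print(choose_model(Request("Compare these two contracts clause by clause.")))
```

Keeping the 8B model as the default means most short requests get fast replies, while the explicit `needs_high_accuracy` flag gives callers a simple way to opt into the slower, stronger model.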

FAQ: Common Questions About Local LLMs and Devices

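Q: Can I run Llama3 70B on a gaming PC? A: Not on a typical single-GPU gaming PC at a usable speed: even the Q4_K_M weights take roughly 42 GB, well over the 24 GB of a single 4090. A high-end desktop with two 24 GB GPUs, like the setup benchmarked here, can run it; with a single GPU, part of the model has to be offloaded to system RAM, which is much slower.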

Q: Is running LLMs locally cheaper than using cloud services? A: It can be cheaper for certain use cases, but the initial hardware investment can be substantial.

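Q: What is quantization and how does it help? A: Quantization is the process of reducing the precision of model weights (the numbers that determine the model's behavior) to a smaller data type, such as 4-bit integers instead of 16-bit floats. This cuts the memory footprint by roughly three to four times, which is what lets a 70B model fit in 48 GB of VRAM, and it speeds up token generation because fewer bytes have to be read for each token. The trade-off is a small loss in accuracy. Essentially, it's like crossing on smaller, lighter stepping stones: you reach almost the same destination, faster.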
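To make the idea concrete, the sketch below implements the simplest form of block-wise 4-bit quantization: each block of 32 weights shares one scale, and every weight is rounded to an integer in [-8, 7]. It illustrates the principle only; it is not llama.cpp's actual Q4_K_M format, which adds super-blocks, quantized scales and minimums, and mixes precisions across tensors.

```python
import random

BLOCK_SIZE = 32  # weights per block that share one scale


def quantize_block(block: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric absmax quantization of one block to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    amax = max(abs(w) for w in block)
    scale = amax / qmax if amax > 0 else 1.0
    # After scaling, every value lies in [-qmax, qmax]; the clamp guards the range.
    q = [max(-qmax - 1, min(qmax, round(w / scale))) for w in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    return [scale * v for v in q]


def quantize(weights: list[float], bits: int = 4) -> list[tuple[float, list[int]]]:
    return [quantize_block(weights[i:i + BLOCK_SIZE], bits)
            for i in range(0, len(weights), BLOCK_SIZE)]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(128)]
blocks = quantize(weights)
restored = [w for scale, q in blocks for w in dequantize_block(scale, q)]
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"{len(blocks)} blocks, max abs error {max_err:.5f}")

# Storage: 4 bits per weight plus one 16-bit scale per block of 32 weights.
bits_per_weight = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE
print(f"~{bits_per_weight} bits per weight vs 16 for F16")
```

Each restored weight is within half a scale step of the original, and storage drops to 4.5 bits per weight in this simple scheme, which is where most of the memory savings come from.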

Q: Are there other local LLM options besides Llama3? A: Yes, several other open-source and commercial LLMs can be run locally, each with its own strengths and weaknesses. Researching different options best suited for your use case is crucial.

Q: What are the biggest challenges with running LLMs locally? A: The most significant challenges include:

- Hardware requirements: High-end GPUs with ample memory are crucial for training or running large LLMs.

- Model size: Larger models can be computationally expensive and require specialized hardware.

- Software and libraries: Compatibility and installation of necessary software can be complex.
