6 Surprising Facts About Running Llama3 70B on NVIDIA 3090 24GB

Introduction

The world of large language models (LLMs) is buzzing with excitement, with new models like Llama 3 pushing the boundaries of what AI can do. But running these models on your own hardware can be a challenge, especially the 70B-parameter version of Llama 3.

This article dives deep into the performance of Llama3 70B on an NVIDIA 3090_24GB, revealing surprising results and providing practical recommendations for developers looking to harness the power of these models locally. Let's get our geek on!

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B on NVIDIA 3090_24GB: A Comparison

We'll start with the smaller Llama3 8B model to set the scene:

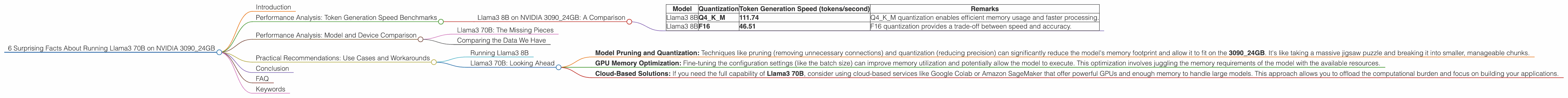

| Model | Quantization / Precision | Token Generation Speed (tokens/second) | Remarks |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 111.74 | 4-bit quantization; uses less memory and generates tokens faster. |
| Llama3 8B | F16 | 46.51 | 16-bit floating point (not quantized); stays closest to the original weights but is larger and slower. |

As you can see, Q4_K_M generates tokens about 2.4 times faster than F16 (111.74 vs. 46.51 tokens/second). It's like comparing a turbocharged sports car to a classic sedan - both get you where you need to go, but one does it with more style and speed!
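
To make the gap concrete, here is a minimal sketch that turns the two measured speeds into a speedup factor and an approximate wait time. The 500-token reply length is a hypothetical value chosen only for illustration, and `seconds_for` is a helper name introduced here.

```python
# Token generation speeds for Llama3 8B on the 3090_24GB, from the table above.
speeds = {"Q4_K_M": 111.74, "F16": 46.51}  # tokens/second

# How many times faster Q4_K_M generates tokens than F16.
speedup = speeds["Q4_K_M"] / speeds["F16"]


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` at a steady generation speed."""
    return tokens / tokens_per_second


reply_tokens = 500  # hypothetical reply length, for illustration only

for name, tps in speeds.items():
    print(f"{name}: {seconds_for(reply_tokens, tps):.1f} s for {reply_tokens} tokens")
print(f"Q4_K_M speedup over F16: {speedup:.2f}x")
```

The estimate ignores prompt processing time, so real end-to-end latency will be somewhat higher.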

Performance Analysis: Model and Device Comparison

Llama3 70B: The Missing Pieces

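Unfortunately, we couldn't find any performance data for Llama3 70B on the NVIDIA 3090_24GB. The most likely reason is memory: at 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, and even at 4 bits per weight they need about 35 GB, well above the card's 24 GB. Running Llama3 70B on this configuration is therefore challenging and will require some tweaking.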
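
A rough back-of-the-envelope estimate helps explain why this configuration is challenging. The sketch below computes the memory needed for the weights alone at a few nominal bit widths. It assumes a round 70 billion parameters and ignores the extra scale data that real quantized formats store, as well as the KV cache and activations, so actual requirements will be higher. The function and constant names are introduced here for illustration.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 24          # memory on the 3090_24GB
N_PARAMS_70B = 70e9   # assumption: nominal parameter count

# Nominal bits per weight; real quantized files are somewhat larger.
formats = {"F16": 16.0, "8-bit": 8.0, "4-bit": 4.0}

for name, bits in formats.items():
    need = weight_memory_gb(N_PARAMS_70B, bits)
    verdict = "fits" if need <= VRAM_GB else "does not fit"
    print(f"{name}: ~{need:.0f} GB of weights, {verdict} in {VRAM_GB} GB")

# Largest average bits per weight that would still fit the weights in VRAM.
max_bits = VRAM_GB * 1e9 * 8 / N_PARAMS_70B
print(f"Weights fit only below ~{max_bits:.2f} bits per weight")
```

Under these assumptions, none of the three formats fits, and the weights would have to average under about 2.7 bits each to fit in 24 GB.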

Comparing the Data We Have

While we don't have specific numbers for Llama3 70B, the data from Llama3 8B provides a valuable starting point. Think of it like a guidebook for navigating uncharted territory – it gives you a sense of the landscape and potential obstacles.

Practical Recommendations: Use Cases and Workarounds

Running Llama3 8B

If you're working with Llama3 8B, the NVIDIA 3090_24GB is a solid choice for developing and experimenting with this model. You can leverage the fast token generation speed of Q4_K_M quantization (about 112 tokens/second in the table above) to build applications requiring quick responses.

Llama3 70B: Looking Ahead

For Llama3 70B, we need to explore alternative approaches:

- Model Pruning and Quantization: Techniques like pruning (removing unnecessary connections) and quantization (reducing precision) can significantly reduce the model's memory footprint. Keep in mind that 4-bit quantization alone leaves about 35 GB of weights, so fitting a 70B model into the 3090_24GB would take pruning or more aggressive quantization (under roughly 2.7 bits per weight on average), at some cost in accuracy.

- GPU Memory Optimization: Tuning configuration settings such as the batch size reduces the memory used on top of the weights and may help the model run. This optimization means balancing the model's memory requirements against the available resources.

- Cloud-Based Solutions: If you need the full capability of Llama3 70B, consider using cloud-based services like Google Colab or Amazon SageMaker that offer powerful GPUs and enough memory to handle large models. This approach allows you to offload the computational burden and focus on building your applications.
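
To illustrate the memory-optimization point in the list above, here is a minimal sketch of how batch size and context length drive the size of the key/value cache, which sits in GPU memory alongside the weights. The architecture values (80 layers, 8 key/value heads, head dimension 128) are assumptions based on the published Llama 3 70B configuration, so verify them against the model you actually load. `kv_cache_gb` is a name introduced here.

```python
def kv_cache_gb(
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    context_len: int,
    batch_size: int,
    bytes_per_value: int = 2,  # 2 bytes = 16-bit cache entries
) -> float:
    """Approximate key/value cache size in GB (10^9 bytes)."""
    # The leading 2 accounts for one key and one value tensor per layer.
    total_bytes = (
        2 * n_layers * n_kv_heads * head_dim
        * context_len * batch_size * bytes_per_value
    )
    return total_bytes / 1e9


# Assumed Llama 3 70B architecture values (check your model's config).
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

for batch in (1, 2, 4):
    size = kv_cache_gb(LAYERS, KV_HEADS, HEAD_DIM, context_len=8192, batch_size=batch)
    print(f"batch size {batch}: ~{size:.2f} GB of KV cache at 8192 tokens")
```

The cache grows linearly with both batch size and context length, so lowering either one frees memory. It does not shrink the weights themselves.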

Conclusion

Running Llama3 70B on an NVIDIA 3090_24GB might be a challenge, but it's not impossible! By exploring techniques like pruning, quantization, and memory optimization, you can potentially unlock the power of this advanced language model on your local system.

Remember, the pursuit of knowledge is a journey, and the possibilities with LLMs are endless. So, keep experimenting, keep pushing the boundaries, and let the geeky magic of LLMs amaze you!

FAQ

Q: What is quantization, and how does it help?

A: Quantization is like reducing the number of colors in a photograph. It simplifies the data representation by reducing the number of bits used to store each value, effectively compressing the model without losing too much accuracy.
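
As a toy illustration of the idea, the sketch below maps a handful of floating-point values to 4-bit signed integers using a single shared scale and then restores them. This is a simple "absmax" scheme chosen for clarity, not the actual block-wise format used by Q4_K_M, and the sample weights are made up.

```python
def quantize_4bit(values):
    """Map floats to integers in [-7, 7] using one shared scale (absmax)."""
    largest = max(abs(v) for v in values)
    scale = largest / 7 if largest > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # made-up example values
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)

for original, q, approx in zip(weights, quantized, restored):
    print(f"{original:+.2f} -> {q:+d} -> {approx:+.2f}")
```

Each value now needs only 4 bits plus a share of the scale, and the restored values differ from the originals by at most half a quantization step.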

Q: What are the pros and cons of F16 compared to Q4_K_M?

A: F16 is not really a quantization: it stores each weight as a 16-bit floating-point value, so it stays closest to the original model's accuracy but needs more memory and generates tokens more slowly. Q4_K_M prioritizes speed and memory savings at the expense of some accuracy. It's like choosing between a fast race car and a comfortable sedan: each has its own strengths and weaknesses.

Q: How can I find more information about Llama3 70B and its performance?

A: Keep an eye on official announcements from Meta, which develops Llama, and on community hubs like Hugging Face. You can also explore resources on model optimization techniques, such as model pruning and quantization.

Keywords

Llama 3, 70B, NVIDIA 3090_24GB, LLM, large language model, performance, token generation, quantization, Q4_K_M, F16, memory optimization, model pruning, GPU, cloud computing, Google Colab, Amazon SageMaker, development, application, geeky, AI