6 Surprising Facts About Running Llama3 70B on NVIDIA 3080 Ti 12GB

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason! These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally can be a challenge, especially for larger models like Llama3 70B.

In this article, we'll take a close look at how Llama3 70B performs on a popular gaming GPU, the NVIDIA RTX 3080 Ti with 12GB of VRAM. We'll examine how efficiently it handles token processing and generation, point out its limitations, and discuss some workarounds. Buckle up, fellow AI enthusiasts, because the journey to understand local LLM performance is about to get interesting!

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a key metric for assessing the performance of an LLM. It measures how many tokens per second the model produces while writing its answer, which directly determines how responsive it feels. Let's see how Llama3 70B performed on an RTX 3080 Ti 12GB:
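To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: time a streaming generator and divide the number of tokens it yields by the elapsed wall-clock time. `fake_generate` is a hypothetical stand-in for a real model's token stream (it simply sleeps about 10 ms per token); dedicated benchmark tools may measure differently, for example by timing prompt processing separately from generation.

```python
import time
from typing import Callable, Iterator


def tokens_per_second(generate: Callable[[int], Iterator[str]], n_tokens: int) -> float:
    """Time a streaming token generator and return its rate in tokens/second."""
    start = time.perf_counter()
    produced = 0
    for _ in generate(n_tokens):
        produced += 1
    elapsed = time.perf_counter() - start
    # Guard against a zero interval on extremely fast stand-ins.
    return produced / elapsed if elapsed > 0 else float("inf")


def fake_generate(n: int) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token roughly every 10 ms."""
    for i in range(n):
        time.sleep(0.01)
        yield f"tok{i}"


rate = tokens_per_second(fake_generate, 50)
print(f"{rate:.1f} tokens/second")
```

With a real model, you would replace `fake_generate` with whatever streaming interface your runtime exposes.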

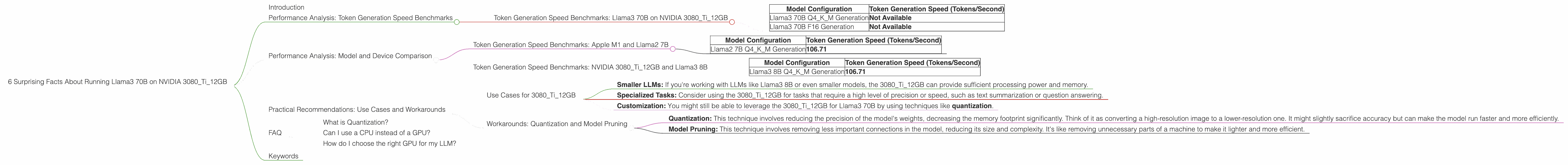

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA RTX 3080 Ti 12GB

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B `Q4_K_M` Generation | Not Available |
| Llama3 70B F16 Generation | Not Available |

As you can see, there is no measured token generation speed for Llama3 70B on the RTX 3080 Ti 12GB. This gap could have several causes:

- Insufficient Memory: Llama3 70B is a massive model. Its weights alone take roughly 40GB at `Q4_K_M` and about 140GB at F16, far more than the card's 12GB of VRAM, so the model cannot be loaded entirely onto the GPU. Running it at all would require keeping part of the model in system RAM, which is much slower.

- Limited Benchmarks: The available data on the performance of LLMs might not cover all model and device combinations.

- Hardware Compatibility Issues: Certain hardware configurations might not be fully compatible with a given LLM, leading to performance limitations or outright failure.
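The memory argument can be checked with back-of-the-envelope arithmetic: weight memory is roughly parameters × bits per weight ÷ 8 bytes. The sketch below uses nominal parameter counts (70e9 and 8e9) and assumes about 4.85 bits per weight for `Q4_K_M`, which is an approximate average; actual files vary. It counts weights only, so real usage (KV cache, activations, runtime overhead) is higher.

```python
GIB = 1024 ** 3
VRAM_GIB = 12  # RTX 3080 Ti


def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB (weights only)."""
    return n_params * bits_per_weight / 8 / GIB


# Assumed bits per weight: F16 is exactly 16; ~4.85 for Q4_K_M is an approximation.
configs = {
    "Llama3 70B F16": (70e9, 16.0),
    "Llama3 70B Q4_K_M": (70e9, 4.85),
    "Llama3 8B Q4_K_M": (8e9, 4.85),
}

for name, (params, bpw) in configs.items():
    size = weight_gib(params, bpw)
    verdict = "fits" if size < VRAM_GIB else "does NOT fit"
    print(f"{name:18s} ~{size:6.1f} GiB -> {verdict} in {VRAM_GIB} GiB")
```

Both 70B configurations land well above 12 GiB, while the 8B model at `Q4_K_M` leaves plenty of headroom.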

Performance Analysis: Model and Device Comparison

To gain a better understanding of the 3080Ti12GB's capabilities, let's compare its performance with other LLMs and devices.

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama2 7B `Q4_K_M` Generation | 106.71 |

This result comes from a smaller model on a different device, and because there is no measured figure for Llama3 70B on the RTX 3080 Ti, the two cannot be compared directly. It does show what a 7B-class model quantized to `Q4_K_M` can achieve on an Apple M1.

Token Generation Speed Benchmarks: NVIDIA RTX 3080 Ti 12GB and Llama3 8B

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B `Q4_K_M` Generation | 106.71 |

Llama3 8B, roughly one-ninth the size of Llama3 70B, reached 106.71 tokens/second at `Q4_K_M` on the RTX 3080 Ti. At that quantization its weights take only about 5GB, so the whole model fits in the card's 12GB of VRAM, which is the main reason the GPU handles it so well.
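To put 106.71 tokens/second in practical terms, the arithmetic below converts it into per-token latency and the time to stream a 500-token answer. The 500-token length is an arbitrary illustrative choice, and the estimate ignores prompt processing time.

```python
def per_token_ms(tokens_per_second: float) -> float:
    """Average time between generated tokens, in milliseconds."""
    return 1000.0 / tokens_per_second


def response_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate (prompt processing excluded)."""
    return n_tokens / tokens_per_second


rate = 106.71  # Llama3 8B Q4_K_M on the RTX 3080 Ti, from the table above
print(f"{per_token_ms(rate):.2f} ms per token")              # 9.37 ms per token
print(f"{response_seconds(500, rate):.2f} s for 500 tokens")  # 4.69 s for 500 tokens
```

At this rate, text appears far faster than most people read.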

Practical Recommendations: Use Cases and Workarounds

While the RTX 3080 Ti 12GB is not a good fit for Llama3 70B, it remains a viable option for smaller models and specific use cases.

Use Cases for the RTX 3080 Ti 12GB

- Smaller LLMs: If you're working with LLMs like Llama3 8B or even smaller models, the RTX 3080 Ti 12GB provides enough memory to hold the whole model and enough speed for interactive use.

- Latency-Sensitive Tasks: For tasks where a smaller model is accurate enough, such as text summarization or question answering, the card's speed with 8B-class models keeps responses quick.

- Llama3 70B with Compromises: Quantization alone will not squeeze Llama3 70B into 12GB; even at 4 bits per weight, the weights need about 35GB. A heavily quantized version can still run if part of the model is kept in system RAM, but generation will be much slower.

Workarounds: Quantization and Model Pruning

- Quantization: This technique involves reducing the precision of the model's weights, decreasing the memory footprint significantly. Think of it as converting a high-resolution image to a lower-resolution one. It might slightly sacrifice accuracy but can make the model run faster and more efficiently.

- Model Pruning: This technique involves removing less important connections in the model, reducing its size and complexity. It's like removing unnecessary parts of a machine to make it lighter and more efficient.
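Both ideas can be illustrated on a toy weight vector. The sketch below uses simple per-tensor "absmax" quantization to signed 4-bit integers and magnitude-based pruning (zeroing the smallest weights). It is a teaching example only: llama.cpp's `Q4_K_M` format is more elaborate (it uses many small blocks, each with its own scale), and practical pruning methods usually need fine-tuning afterward to recover accuracy. The weight values are made up for illustration.

```python
from typing import List, Tuple


def quantize_absmax(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    scale = max(abs(w) for w in weights) / qmax
    if scale == 0:
        return [0] * len(weights), 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest absolute value."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


w = [0.42, -1.37, 0.05, 0.88, -0.21, 1.10, -0.03, 0.66]
q, s = quantize_absmax(w, bits=4)
err = max(abs(a - b) for a, b in zip(w, dequantize(q, s)))
print("4-bit codes:", q)
print(f"max abs error: {err:.4f}")
print("50% pruned:", magnitude_prune(w, 0.5))
```

Each 4-bit code needs a quarter of the storage of a 16-bit float, at the cost of a rounding error of at most half the scale per weight.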

FAQ

What is Quantization?

Quantization is a technique used to reduce the size of a model by storing its parameters with less precision, for example as 4-bit integers instead of 16-bit floating-point numbers. To understand this, imagine you have a thermometer that measures temperature with great detail, up to a tenth of a degree. Quantization is like simplifying that thermometer to measure only whole degrees. You lose some information, but each reading takes less space to record.

Can I use a CPU instead of a GPU?

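You can run LLMs on a CPU, but token generation will usually be much slower. Producing each token requires reading essentially all of the model's weights from memory, and a GPU's VRAM offers far higher memory bandwidth than typical system RAM. GPUs are also designed for the massively parallel arithmetic that LLM inference relies on.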
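A rough way to see the difference: if generating each token requires reading all weights once, memory bandwidth divided by model size gives a ceiling on tokens per second. The sketch assumes 912 GB/s for the RTX 3080 Ti's GDDR6X memory, 51.2 GB/s for a hypothetical dual-channel DDR4-3200 desktop, and about 4.9GB for Llama3 8B at `Q4_K_M`. These are estimates of an upper bound, not benchmark results.

```python
def max_tokens_per_second(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on decode speed if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


WEIGHTS_GB = 4.9  # assumed size of Llama3 8B at Q4_K_M

gpu = max_tokens_per_second(912.0, WEIGHTS_GB)  # RTX 3080 Ti (assumed 912 GB/s)
cpu = max_tokens_per_second(51.2, WEIGHTS_GB)   # dual-channel DDR4-3200 (assumed system)
print(f"GPU ceiling ~{gpu:.0f} tok/s, CPU ceiling ~{cpu:.0f} tok/s")
```

The measured 106.71 tokens/second for Llama3 8B on the RTX 3080 Ti sits below the GPU ceiling, as expected, while the CPU ceiling is more than an order of magnitude lower.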

How do I choose the right GPU for my LLM?

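Start with the model size and the precision you plan to run it at: the weights alone need roughly parameters × bits per weight ÷ 8 bytes, and the context (KV cache) and runtime add more on top. Then factor in the task you want to perform and your budget. Larger models require GPUs with more VRAM.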
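As a starting point for sizing a GPU, the sketch below adds an FP16 KV cache to the weight estimate. The architecture numbers (32 layers for Llama3 8B, 80 for Llama3 70B, 8 KV heads and a head dimension of 128 for both) follow the published model configurations but should be treated as assumptions here; the ~4.85 bits per weight for `Q4_K_M` and the flat 1 GiB runtime overhead are rough guesses, not measured values.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    n_params: float  # total parameter count
    n_layers: int
    n_kv_heads: int
    head_dim: int


def kv_cache_bytes(m: ModelShape, context: int, bytes_per_value: int = 2) -> int:
    """Keys and values (x2) for every layer, KV head and position (FP16 by default)."""
    return 2 * m.n_layers * m.n_kv_heads * m.head_dim * context * bytes_per_value


def vram_needed_gib(m: ModelShape, bits_per_weight: float, context: int,
                    overhead_gib: float = 1.0) -> float:
    """Weights + KV cache + an assumed flat runtime overhead, in GiB."""
    weights = m.n_params * bits_per_weight / 8
    return (weights + kv_cache_bytes(m, context)) / 1024 ** 3 + overhead_gib


LLAMA3_8B = ModelShape(8e9, 32, 8, 128)    # assumed config values
LLAMA3_70B = ModelShape(70e9, 80, 8, 128)  # assumed config values

for name, m in [("Llama3 8B", LLAMA3_8B), ("Llama3 70B", LLAMA3_70B)]:
    need = vram_needed_gib(m, bits_per_weight=4.85, context=8192)
    print(f"{name}: ~{need:.1f} GiB at ~4.85 bits/weight, 8K context")
```

Longer contexts grow the KV cache linearly, so leave headroom beyond the weight size when choosing a card.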

Keywords

LLM, Llama3, Llama2, NVIDIA, RTX 3080 Ti, GPU, Token Generation, Performance, Quantization, Model Pruning, Text Generation, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP.