6 Surprising Facts About Running Llama3 70B on Apple M3 Max

Are you ready to unleash the power of local large language models on your Apple M3 Max? This powerful chip is a beast when it comes to processing, but can it handle the gargantuan Llama 3 70B model? Well, let's dive into the fascinating world of LLM performance and discover the surprising results!

Introduction

Large language models (LLMs) are revolutionizing how we interact with technology. From generating creative text to answering complex questions, their capabilities are expanding rapidly. Running LLMs locally on your own machine offers several advantages, including no network round trip, enhanced privacy (your prompts never leave the machine), and offline access.

The Apple M3 Max, with its powerful GPU and abundant memory, is a promising platform for running LLMs. However, the sheer size of models like Llama3 70B poses a challenge. In this article, we'll explore the performance of Llama3 70B on the M3 Max, analyzing its strengths and limitations.

Performance Analysis: Token Generation Speed Benchmarks - Apple M1 and Llama2 7B

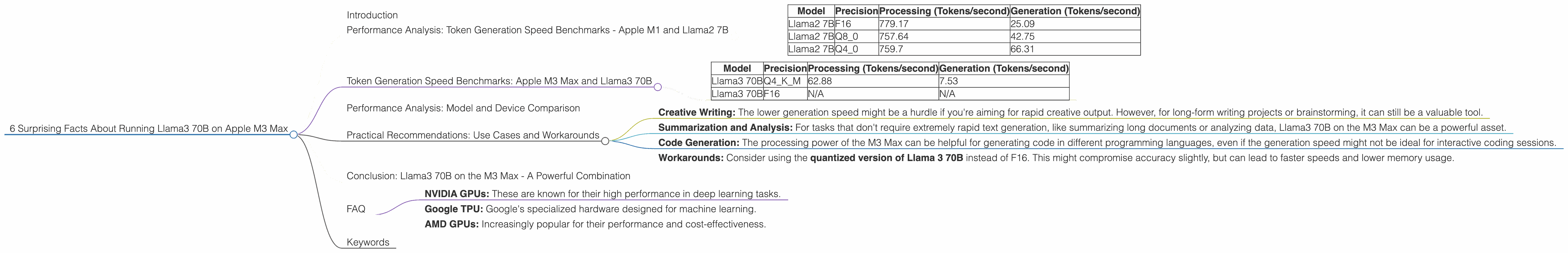

First, let's establish a baseline for performance comparisons. On a different Apple chip, the M1, Llama2 7B achieves impressive speeds:

| Model | Precision | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|---|
| Llama2 7B | F16 | 779.17 | 25.09 |
| Llama2 7B | Q8_0 | 757.64 | 42.75 |
| Llama2 7B | Q4_0 | 759.7 | 66.31 |

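As you can see, Llama2 7B on the M1 performs quite well, pushing through nearly 800 tokens per second in its processing stage! Generation is much slower because the two stages work differently: prompt processing handles all input tokens in parallel, while generation produces one token at a time and has to read the model's weights from memory for every new token. That likely also explains why generation speeds up as precision drops (from 25.09 tokens per second at F16 to 66.31 at Q4_0) while processing speed barely changes: smaller weights mean less data to move per token.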
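To put numbers on how precision affects each stage, here is a small sketch that computes the speedup of each precision relative to F16, using only the figures from the table above. The helper name `speedup_vs` is made up for this illustration.

```python
# Figures copied from the Llama2 7B on M1 table above (tokens/second).
PROCESSING_TPS = {"F16": 779.17, "Q8_0": 757.64, "Q4_0": 759.7}
GENERATION_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_vs(baseline: str, table: dict) -> dict:
    """Return each entry's speed divided by the baseline entry's speed."""
    base = table[baseline]
    return {name: tps / base for name, tps in table.items()}


processing = speedup_vs("F16", PROCESSING_TPS)
generation = speedup_vs("F16", GENERATION_TPS)

for name in GENERATION_TPS:
    print(
        f"{name:5s} processing x{processing[name]:.2f}   "
        f"generation x{generation[name]:.2f}"
    )
```

Processing stays within a few percent of the F16 figure, while generation at Q4_0 runs at more than two and a half times the F16 speed.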

Token Generation Speed Benchmarks: Apple M3 Max and Llama3 70B

Now, let's jump into the heart of our investigation: the performance of Llama 3 70B on the M3 Max. Before we dive into the numbers, a quick explanation for the non-technical folks:

- Tokens: Think of them as the building blocks of language. Words are broken down into smaller units.

- Precision: This refers to the number of bits used to store each of the model's weights. A lower precision (Q4_K_M, roughly 4 to 5 bits per weight) uses less memory, while a higher precision (F16, 16 bits per weight) uses more memory but can potentially improve accuracy.

Here are the benchmarks for Llama3 70B on the M3 Max:

| Model | Precision | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|---|
| Llama3 70B | Q4_K_M | 62.88 | 7.53 |
| Llama3 70B | F16 | N/A | N/A |

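Wow! The M3 Max can actually run Llama3 70B. But there's a catch: no F16 result is available. The most likely reason is memory: 70 billion parameters at 16 bits each come to roughly 140 GB of weights, more than the 128 GB of unified memory the M3 Max tops out at. This means you'll have to use the less precise Q4_K_M setting, which could impact accuracy.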
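A back-of-the-envelope way to check whether a given precision can fit is to multiply the parameter count by the bits stored per weight. The sketch below does that for a 70B model. The bits-per-weight values for the quantized formats are approximations assumed for illustration, and the estimate covers the weights only, not the KV cache or runtime overhead.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Bits-per-weight values for quantized formats are assumed approximations.

PARAMS_70B = 70e9  # nominal parameter count

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,     # assumed: 8-bit values plus a per-block scale
    "Q4_K_M": 4.85,  # assumed average for this mixed 4-bit scheme
}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name:7s} ~{weight_memory_gb(PARAMS_70B, bits):.0f} GB")
```

Under these assumptions, F16 lands at about 140 GB, while Q4_K_M comes in at a bit over 40 GB, which is why the quantized build is the one that runs.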

Performance Analysis: Model and Device Comparison

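When comparing the two tables, keep in mind that they pair different chips with different models, so this is not a like-for-like chip comparison. Llama3 70B has ten times as many parameters as Llama2 7B, and on the M3 Max at Q4_K_M it processes prompts at about 63 tokens per second and generates about 7.5. That is far slower than Llama2 7B on the M1 (about 760 and 66 tokens per second at Q4_0), but it's still a remarkable feat considering the massive size of the Llama3 70B model.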

Think of it like this: the M1 is a nimble sprinter, quick and efficient. The M3 Max is a powerful weightlifter, capable of lifting massive loads even if it's not as nimble.

Practical Recommendations: Use Cases and Workarounds

Now, how can we use Llama3 70B on the M3 Max for practical tasks? Here are some ideas:

- Creative Writing: The lower generation speed might be a hurdle if you're aiming for rapid creative output. However, for long-form writing projects or brainstorming, it can still be a valuable tool.

- Summarization and Analysis: For tasks that don't require extremely rapid text generation, like summarizing long documents or analyzing data, Llama3 70B on the M3 Max can be a powerful asset.

- Code Generation: The processing power of the M3 Max can be helpful for generating code in different programming languages, even if the generation speed might not be ideal for interactive coding sessions.

- Workarounds: F16 results are unavailable for Llama3 70B on the M3 Max, so a quantized build such as Q4_K_M is the practical choice. It might compromise accuracy slightly, but it needs far less memory and generates faster.
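To judge whether a use case is practical, it helps to turn the benchmark figures into wait times. The sketch below estimates response time as prompt tokens divided by processing speed plus output tokens divided by generation speed. The workload sizes are hypothetical examples, and real runs will vary with context length and system load.

```python
# Llama3 70B on M3 Max figures from the benchmark table (tokens/second).
PROCESSING_TPS = 62.88
GENERATION_TPS = 7.53


def response_time_s(prompt_tokens, output_tokens,
                    processing_tps=PROCESSING_TPS,
                    generation_tps=GENERATION_TPS):
    """Estimate seconds to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical workloads: (prompt tokens, output tokens).
workloads = {
    "short chat reply": (200, 150),
    "document summary": (4000, 300),
    "code snippet": (500, 400),
}

for name, (prompt, output) in workloads.items():
    print(f"{name:17s} ~{response_time_s(prompt, output):.0f} s")
```

A short chat reply comes out at a bit over 20 seconds under these assumptions, which is why batch-style tasks such as summarization suit this setup better than rapid back-and-forth.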

Conclusion: Llama3 70B on the M3 Max - A Powerful Combination

While not always suitable for high-speed text generation, running Llama3 70B on the M3 Max opens up new possibilities! The M3 Max provides a powerful platform for exploring the capabilities of this massive language model. At about 7.5 tokens per second it won't win any speed contests, but it proves the potential for local LLMs on high-end devices.

FAQ

Q: What is quantization?

Quantization is a technique that reduces the precision of numbers stored in a model. This reduces memory usage and can lead to faster processing speeds. It's like simplifying a complex recipe by using fewer ingredients; it might slightly affect the final dish but makes the process faster and easier.
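To make the idea concrete, here is a minimal sketch of symmetric 4-bit quantization on a small block of weights: one floating-point scale is stored for the block, and each weight becomes a small integer. This is a simplified illustration, not the actual Q4_K_M scheme, and the weight values are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers in [-qmax, qmax] with one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


# Made-up example weights for one block.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.0]

scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized integers:", quants)
print(f"max round-trip error: {max_error:.3f}")
```

The restored values are close to the originals but not identical, and that rounding error is the accuracy cost paid for storing each weight in 4 bits instead of 16.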

Q: What are the limitations of running Llama3 70B on the M3 Max?

The main limitation is the lower generation speed (about 7.5 tokens per second), especially when compared to smaller models. This can impact tasks that require rapid text generation. Also, using the less precise Q4_K_M setting might affect accuracy.

Q: Are there any alternative devices for running LLMs?

Yes, there are several options, including:

- NVIDIA GPUs: These are known for their high performance in deep learning tasks.

- Google TPU: Google's specialized hardware designed for machine learning.

- AMD GPUs: Increasingly popular for their performance and cost-effectiveness.

Q: Will future devices be better suited for running LLMs?

Absolutely! As technology continues to advance, we can expect devices with more powerful processors and larger memories specifically tailored for AI workloads. This will further unlock the potential of LLMs and make them more accessible to a wider range of users.

Keywords

LLMs, large language models, Llama3, Llama3 70B, Apple M3 Max, local LLM, performance benchmarks, token generation speed, processing speed, generation speed, precision, quantization, Q4_K_M, F16, use cases, creative writing, summarization, analysis, code generation, workarounds, limitations, alternative devices, NVIDIA GPUs, Google TPU, AMD GPUs.