6 Power Saving Tips for 24/7 AI Operations on NVIDIA RTX A6000 48GB

Introduction

The world of large language models (LLMs) is buzzing with excitement! These AI systems can generate human-like text, translate languages, write creative content, and answer questions informatively. But running these models, especially 24/7, can be a power-hungry endeavor. Enter the NVIDIA RTX A6000 48GB, a workstation GPU with 48 GB of memory and a 300 W maximum board power that is well suited to AI workloads. This article explores six power-saving tips for running LLMs smoothly and efficiently on the RTX A6000, helping you keep your AI applications humming without breaking the bank.

Imagine your LLM is a high-performance race car. It's super fast, but to get the most out of its power, you need to use the right fuel and driving strategies. Our tips are like optimizing the fuel and driving techniques, saving you money on your electricity bill and ensuring you can keep your AI engine running for longer.

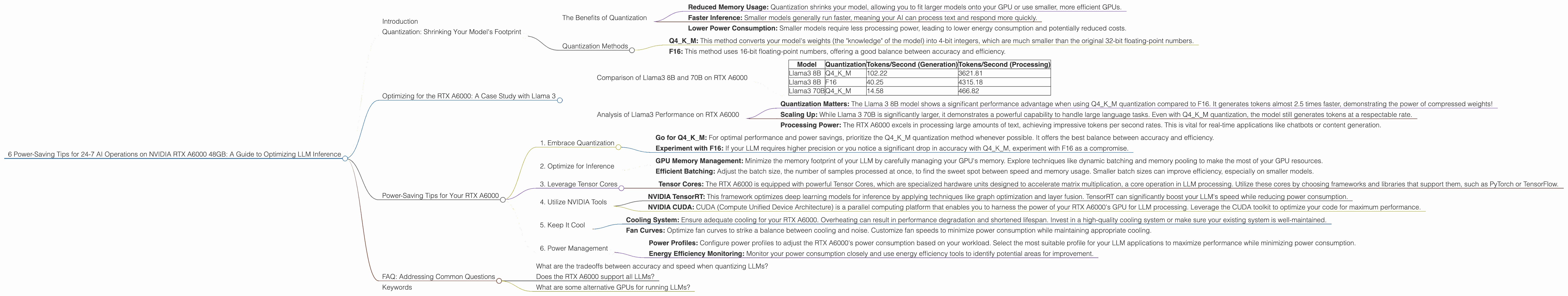

Quantization: Shrinking Your Model's Footprint

Quantization stores your model's weights with fewer bits each, which shrinks the model and usually speeds it up. Think of it like compressing a large image file: you lose some detail, but the file becomes much smaller.
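To make the "lose some detail" idea concrete, here is a minimal sketch of 4-bit quantization with a single shared scale factor. It is a simplified illustration, not the actual Q4KM scheme, and the weight values and function names are made up for the example.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to signed 4-bit codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Choose the scale so the largest weight lands on code +/-7.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.99, -1.40, 0.07, 0.31]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

for w, c, r in zip(weights, codes, restored):
    print(f"{w:+.2f} -> code {c:+d} -> {r:+.2f} (error {abs(w - r):.3f})")
```

Each restored value differs from the original by at most half a scale step, which is the "detail" that quantization gives up in exchange for storing 4 bits per weight instead of 16 or 32.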

The Benefits of Quantization

- Reduced Memory Usage: Quantization shrinks your model, allowing you to fit larger models onto your GPU or use smaller, more efficient GPUs.

- Faster Inference: Text generation is largely limited by how quickly weights can be read from GPU memory, so a smaller model usually produces tokens faster and your AI responds more quickly.

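- Lower Power Consumption: Less data moved per token generally means less energy per token. The card's instantaneous draw may not fall much, but each request finishes sooner, which can lower your electricity cost for the same amount of work.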

Quantization Methods

There are a few common quantization methods, the most popular being:

- Q4KM: This format (written Q4_K_M in llama.cpp) stores most of your model's weights (the "knowledge" of the model) as blocks of roughly 4-bit integers with shared scale factors, which is far smaller than 16-bit or 32-bit floating-point storage.

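- F16: This format keeps weights as 16-bit floating-point numbers. Strictly speaking it is half precision rather than quantization, and it serves as the high-accuracy baseline: half the size of 32-bit weights, typically with negligible quality loss.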
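A rough way to see what these formats mean for a 48 GB card is to estimate the size of the weights alone. The sketch below assumes nominal parameter counts (8 billion and 70 billion) and an average of about 4.8 bits per weight for Q4KM; that figure is an approximation, and real files also carry metadata, while inference needs extra memory for the KV cache and activations.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


A6000_VRAM_GB = 48  # memory capacity of the RTX A6000

# Bits per weight: 16 for F16; ~4.8 for Q4KM is an assumed average.
FORMATS = {"F16": 16.0, "Q4KM": 4.8}

# Nominal parameter counts (assumption: exact counts differ slightly).
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

for model, n_params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_memory_gb(n_params, bits)
        verdict = "fits" if size < A6000_VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {A6000_VRAM_GB} GB")
```

Under these assumptions, a 70B model at 16 bits needs far more than 48 GB for its weights, while the 4-bit version leaves only a few gigabytes of headroom.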

Optimizing for the RTX A6000: A Case Study with Llama 3

Let's dive into some real-world data. Using the RTX A6000 48GB, we'll analyze the performance of Llama 3, a powerful open-weight LLM. For this analysis, we'll focus on two popular Llama 3 models: 8B and 70B. (Note: Data for Llama 3 70B F16 is not available at this time. At 16 bits per weight, a 70B model needs roughly 140 GB for its weights alone, which exceeds the 48 GB on a single card.)

Comparison of Llama3 8B and 70B on RTX A6000

| Model | Quantization | Generation (tokens/second) | Prompt processing (tokens/second) |
|---|---|---|---|
| Llama3 8B | Q4KM | 102.22 | 3621.81 |
| Llama3 8B | F16 | 40.25 | 4315.18 |
| Llama3 70B | Q4KM | 14.58 | 466.82 |

Analysis of Llama3 Performance on RTX A6000

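- Quantization Matters: The Llama 3 8B model generates tokens about 2.5 times faster with Q4KM than with F16 (102.22 vs. 40.25 tokens/second), demonstrating the power of compressed weights! Prompt processing tells a different story: F16 is about 19% faster there (4315.18 vs. 3621.81 tokens/second), so the gain is specific to generation.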

- Scaling Up: Llama 3 70B is far larger, and it shows. With Q4KM quantization it generates 14.58 tokens/second, about 7 times slower than the 8B model at the same quantization, yet still a respectable rate for a model of that size on a single card.

- Processing Power: The RTX A6000 processes prompts quickly: more than 3,600 tokens/second for the 8B model and 466.82 tokens/second for the 70B model. This is vital for real-time applications like chatbots or content generation.
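The ratios discussed above can be recomputed directly from the table. This short sketch uses only the throughput figures listed there; the dictionary layout and the `speedup` helper are just one convenient way to organize them.

```python
# Throughput figures copied from the comparison table (tokens/second).
results = {
    ("Llama3 8B", "Q4KM"): {"generation": 102.22, "processing": 3621.81},
    ("Llama3 8B", "F16"): {"generation": 40.25, "processing": 4315.18},
    ("Llama3 70B", "Q4KM"): {"generation": 14.58, "processing": 466.82},
}


def speedup(fast: tuple, slow: tuple, metric: str) -> float:
    """Ratio of two configurations' throughput for the given metric."""
    return results[fast][metric] / results[slow][metric]


gen_q4_vs_f16 = speedup(("Llama3 8B", "Q4KM"), ("Llama3 8B", "F16"), "generation")
proc_f16_vs_q4 = speedup(("Llama3 8B", "F16"), ("Llama3 8B", "Q4KM"), "processing")
gen_8b_vs_70b = speedup(("Llama3 8B", "Q4KM"), ("Llama3 70B", "Q4KM"), "generation")

print(f"8B generation, Q4KM vs F16: {gen_q4_vs_f16:.2f}x")
print(f"8B prompt processing, F16 vs Q4KM: {proc_f16_vs_q4:.2f}x")
print(f"Q4KM generation, 8B vs 70B: {gen_8b_vs_70b:.2f}x")
```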

Power-Saving Tips for Your RTX A6000

Now that we've established the importance of quantization, let's delve into specific tips to optimize your RTX A6000 for running LLMs 24/7:

1. Embrace Quantization

- Go for Q4KM: For generation speed and power savings, prioritize the Q4KM quantization method whenever possible. In the table above it generates tokens about 2.5 times faster than F16 on the 8B model, and it is what lets the 70B model fit on the card at all.

- Experiment with F16: If your LLM requires higher precision or you notice a significant drop in accuracy with Q4KM, try F16, accepting slower generation and a much larger memory footprint in return.

2. Optimize for Inference

- GPU Memory Management: Minimize the memory footprint of your LLM by carefully managing your GPU's memory. Explore techniques like dynamic batching and memory pooling to make the most of your GPU resources.

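- Efficient Batching: Adjust the batch size, the number of requests processed at once, to find the sweet spot between speed and memory usage. Larger batches usually raise total throughput, and with it tokens per watt, because each weight read serves several requests; they also use more memory and can add latency. Measure a few sizes on your own workload rather than assuming one is best.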
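One way to look for that sweet spot is to time the same set of prompts at several batch sizes. The sketch below is framework-agnostic: `run_batch` is a hypothetical callable that you would replace with your own inference call, and the helper names are illustrative rather than part of any library.

```python
import time
from typing import Callable, Dict, Iterator, List


def make_batches(prompts: List[str], max_batch_size: int) -> Iterator[List[str]]:
    """Split prompts into consecutive batches of at most max_batch_size."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    for start in range(0, len(prompts), max_batch_size):
        yield prompts[start:start + max_batch_size]


def sweep_batch_sizes(
    prompts: List[str],
    run_batch: Callable[[List[str]], None],  # hypothetical: your inference call
    sizes: List[int],
) -> Dict[int, float]:
    """Return prompts processed per second for each candidate batch size."""
    throughput = {}
    for size in sizes:
        start = time.perf_counter()
        for batch in make_batches(prompts, size):
            run_batch(batch)
        # Guard against a zero reading on coarse timers.
        elapsed = max(time.perf_counter() - start, 1e-9)
        throughput[size] = len(prompts) / elapsed
    return throughput
```

Calling `sweep_batch_sizes(prompts, run_batch, [1, 2, 4, 8])` with your own `run_batch` gives a quick comparison; pairing each run with a power reading turns it into a tokens-per-watt comparison.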

3. Leverage Tensor Cores

- Tensor Cores: The RTX A6000 is equipped with powerful Tensor Cores, which are specialized hardware units designed to accelerate matrix multiplication, a core operation in LLM processing. They operate on reduced-precision formats such as FP16, BF16, TF32, and INT8, so run your model in one of those precisions and choose frameworks and libraries that support them, such as PyTorch or TensorFlow.

4. Utilize NVIDIA Tools

- NVIDIA TensorRT: This framework optimizes deep learning models for inference by applying techniques like graph optimization and layer fusion. TensorRT can significantly boost your LLM's speed while reducing power consumption.

- NVIDIA CUDA: CUDA (Compute Unified Device Architecture) is a parallel computing platform that enables you to harness the power of your RTX A6000's GPU for LLM processing. Leverage the CUDA toolkit to optimize your code for maximum performance.

5. Keep It Cool

- Cooling System: Ensure adequate cooling for your RTX A6000. Overheating can result in performance degradation and shortened lifespan. Invest in a high-quality cooling system or make sure your existing system is well-maintained.

- Fan Curves: Optimize fan curves to strike a balance between cooling and noise. Customize fan speeds to minimize power consumption while maintaining appropriate cooling.

6. Power Management

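- Power Profiles: Adjust the RTX A6000's power consumption to your workload by capping its board power limit, for example with `nvidia-smi -pl <watts>` (administrator rights required). Generation speed often falls less than proportionally as the cap is lowered, so try a few limits below the 300 W default and keep the one that gives the best tokens per watt at an acceptable speed.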

- Energy Efficiency Monitoring: Monitor your power consumption closely. `nvidia-smi` reports the card's current power draw, power limit, temperature, and utilization; logging these alongside tokens per second shows where the improvements are.
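As a starting point for monitoring, the sketch below reads a few fields through `nvidia-smi`'s CSV query interface and turns them into efficiency numbers. The helper names are illustrative, and the parser assumes every field comes back as a plain number (some systems report `[N/A]` for unsupported fields, which this sketch does not handle).

```python
import subprocess
from typing import Dict, List

# Fields requested from nvidia-smi's --query-gpu interface.
QUERY_FIELDS = ["power.draw", "power.limit", "temperature.gpu", "utilization.gpu"]


def query_command(gpu_index: int = 0) -> List[str]:
    """Build the nvidia-smi command that prints the fields as one CSV line."""
    return [
        "nvidia-smi", "-i", str(gpu_index),
        "--query-gpu=" + ",".join(QUERY_FIELDS),
        "--format=csv,noheader,nounits",
    ]


def parse_query_line(line: str) -> Dict[str, float]:
    """Parse one CSV line such as '150.25, 300.00, 62, 97' into a dict."""
    values = [float(v.strip()) for v in line.strip().split(",")]
    return dict(zip(QUERY_FIELDS, values))


def read_gpu_stats(gpu_index: int = 0) -> Dict[str, float]:
    """Run nvidia-smi and return the parsed readings (needs an NVIDIA driver)."""
    result = subprocess.run(
        query_command(gpu_index), capture_output=True, text=True, check=True
    )
    return parse_query_line(result.stdout.splitlines()[0])


def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Energy efficiency: tokens produced per joule (one watt-second)."""
    return tokens_per_second / watts


def daily_kwh(watts: float) -> float:
    """Energy used in 24 hours at a constant power draw."""
    return watts * 24 / 1000
```

For example, `daily_kwh(300)` shows that a card held at its 300 W limit around the clock uses 7.2 kWh per day, and `tokens_per_joule(102.22, read_gpu_stats()["power.draw"])` combines the 8B Q4KM generation rate from the table with a live power reading taken on your own machine.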

FAQ: Addressing Common Questions

What are the tradeoffs between accuracy and speed when quantizing LLMs?

Quantization can reduce the accuracy of your LLM slightly, and the loss tends to grow as the bit width shrinks. Post-training formats such as Q4KM are designed to keep that loss small while still delivering smaller model sizes and faster inference times. Experiment with different quantization levels and methods, and evaluate them on your own prompts, to find the best balance for your specific application.

Does the RTX A6000 support all LLMs?

The RTX A6000 excels at running a wide range of LLMs, including those based on transformer architectures like GPT-style models, BERT, and Llama. However, the model must fit in the card's 48 GB of memory, and your LLM and framework must be compatible with the CUDA environment on the RTX A6000. Some models may require specific libraries or configurations.

What are some alternative GPUs for running LLMs?

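The RTX A6000 is a powerful choice for LLM inference, but other options exist. The NVIDIA A100 (40 GB or 80 GB) offers higher memory bandwidth and, in its 80 GB version, more memory. The NVIDIA A40 is a passively cooled data-center card with the same 48 GB capacity, so consider it for server chassis rather than for extra performance. The best GPU for your needs will depend on the size and complexity of your LLM and your specific performance requirements.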

Keywords

RTX A6000, NVIDIA, LLM, large language model, inference, power saving, quantization, Q4KM, F16, GPU, tokens/second, generation, processing, Llama 3, 8B, 70B, performance, CUDA, TensorRT, Tensor Cores, power management, energy efficiency, cooling, fan curves, memory management, batching, accuracy, speed, tradeoffs, alternatives.