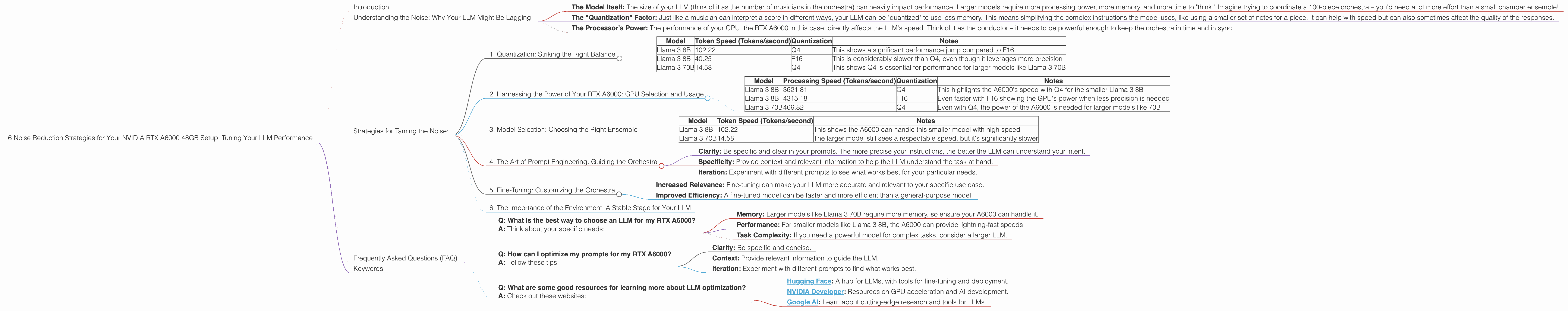

6 Noise Reduction Strategies for Your NVIDIA RTX A6000 48GB Setup

Introduction

You've got the beast, the NVIDIA RTX A6000 48GB, a titan among GPUs. But you're still hearing the whispers, the background hum of latency and sluggishness when you're trying to unleash your locally-run LLM. Ever feel like your model's performance is stuck in a loop, unable to truly break free and generate those insightful responses you crave?

Don't worry, you're not alone. This journey through the world of Large Language Model (LLM) optimization can be as exciting as it is challenging. This guide is your roadmap to smoother, faster, and more efficient LLM runs on your RTX A6000. Think of it like fine-tuning a vintage synthesizer – with every tweak, you'll discover a new sonic landscape of potential.

Understanding the Noise: Why Your LLM Might Be Lagging

Think of an LLM as a symphony orchestra – thousands of instruments working in concert to create a beautiful and complex output. But just like a real orchestra, the LLM can be affected by its environment, its setup, and its inner workings. Here are some common reasons you might be experiencing "noise" or performance issues:

- The Model Itself: The size of your LLM (think of it as the number of musicians in the orchestra) can heavily impact performance. Larger models require more processing power, more memory, and more time to "think." Coordinating a 100-piece orchestra takes a lot more effort than coordinating a small chamber ensemble!

- The "Quantization" Factor: Your LLM can be "quantized" to use less memory. This means storing the model's weights at lower numerical precision, like writing a score with a coarser set of note values. It usually helps with speed but can sometimes affect the quality of the responses.

- The Processor's Power: The performance of your GPU, the RTX A6000 in this case, directly affects the LLM's speed. Think of it as the conductor – it needs to be powerful enough to keep the orchestra in time and in sync.

Strategies for Taming the Noise:

Now that we understand the potential sources of "noise," let's explore some practical strategies to optimize your LLM setup and get those tokens flowing smoothly.

1. Quantization: Striking the Right Balance

What is Quantization?

Quantization is a process that reduces the memory footprint of your LLM by storing the model's parameters at lower precision (for example, roughly 4 bits per weight instead of 16). Imagine each "note" in the music score being replaced by a simpler symbol: it uses less space but might not be as accurate.

How It Impacts Performance:

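- Reduced Memory Footprint: This means your model can run on devices with less memory, and it often runs faster because less data has to be read from GPU memory for each generated token. For example, Q4 quantization stores each weight in about 4 bits, so a weight can take only 16 distinct values, like limiting a piece of music to 16 different notes. That makes the model smaller and faster, but it might lose some complexity.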

- Potential Accuracy Tradeoffs: Quantizing too aggressively can sacrifice some accuracy, potentially resulting in less nuanced or less accurate responses.
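
To make the tradeoff concrete, here is a minimal sketch of uniform quantization in plain Python. It is a simplified illustration, not the scheme any particular Q4 format uses: real formats typically keep a separate scale per small block of weights and are more elaborate. The function names and the sample weights are made up for this example.

```python
from typing import List, Tuple


def quantize(values: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Symmetric uniform quantization: floats -> signed integer codes plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # size of one quantization step
    codes = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Map integer codes back to approximate float values."""
    return [c * scale for c in codes]


def max_abs_error(original: List[float], restored: List[float]) -> float:
    """Largest difference between an original weight and its restored value."""
    return max(abs(a - b) for a, b in zip(original, restored))


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.91, -0.07, 0.33]  # hypothetical sample weights
    for bits in (8, 4):
        codes, scale = quantize(weights, bits)
        error = max_abs_error(weights, dequantize(codes, scale))
        print(f"{bits}-bit codes: {codes}, max error: {error:.4f}")
```

Fewer bits mean larger steps between representable values, so the restored weights drift further from the originals. That drift is the source of the accuracy tradeoff described above.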

The Sweet Spot for RTX A6000 48GB:

You're lucky here – with 48GB of memory, your RTX A6000 can handle larger models with less need to quantize aggressively. However, you can still get a performance boost with smart quantization.
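
A quick back-of-the-envelope check shows which combinations fit. The sketch below estimates the memory for the weights alone as parameters times bits per weight; the 4GB of headroom for the KV cache and activations is an assumed margin, not a measured value, and real Q4 formats use slightly more than 4 bits per weight once scales are included.

```python
def estimate_weight_memory_gb(num_params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = num_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_vram(weight_gb: float, vram_gb: float = 48.0, headroom_gb: float = 4.0) -> bool:
    """True if the weights fit with room left over for the KV cache and activations.

    headroom_gb is an assumed safety margin; tune it for your context length.
    """
    return weight_gb + headroom_gb <= vram_gb


if __name__ == "__main__":
    for name, params_b in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
        for label, bits in [("F16", 16), ("Q4", 4)]:
            gb = estimate_weight_memory_gb(params_b, bits)
            print(f"{name} {label}: ~{gb:.0f} GB of weights, fits in 48GB: {fits_in_vram(gb)}")
```

By this estimate, Llama 3 8B fits at either precision, while Llama 3 70B needs roughly 140 GB at F16 and about 35 GB at Q4, so only the quantized version fits on a single card.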

Data:

| Model | Token Speed (tokens/second) | Quantization | Notes |
|---|---|---|---|
| Llama 3 8B | 102.22 | Q4 | About 2.5x faster than F16 |
| Llama 3 8B | 40.25 | F16 | Considerably slower than Q4, even though it keeps more precision |
| Llama 3 70B | 14.58 | Q4 | Q4 is what makes the 70B model practical on one card: at F16 its weights alone would need about 140 GB |

Conclusion: For Llama 3 8B, Q4 quantization runs about 2.5x faster than F16 on your RTX A6000 (102.22 vs. 40.25 tokens/second), and Q4 is what lets Llama 3 70B fit in the card's 48GB. Experiment with different models and tasks to see what works best for your needs.

2. Harnessing the Power of Your RTX A6000: GPU Selection and Usage

The GPU's Role:

The RTX A6000, with its massive 48GB of memory and impressive processing power, is designed for demanding workloads like LLM inference. It's like having a top-of-the-line conductor leading the orchestra, capable of managing a large ensemble with precision.

How to Optimize GPU Usage:

- Multi-GPU Inference: While your A6000 is powerful, consider using multiple GPUs if you need to run even larger models or handle complex tasks. It's like adding more musicians to the orchestra, amplifying the overall sound.

- GPU Memory Management: Make sure your model fits comfortably within your A6000's memory. Depending on your inference software, you can usually adjust settings such as the context length, the batch size, or how many layers are offloaded to the GPU to control how much memory your LLM uses. It's like managing the size of the orchestra's stage: too small, and you'll have musicians bumping into each other!
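
One way to see how much memory is actually free is to ask `nvidia-smi`. The sketch below runs its CSV query and parses the result; the helper names and the 2048 MiB reserve are assumptions made for this example, and the parsing is kept separate so it can be tried on a sample string without a GPU.

```python
import subprocess
from typing import List, Tuple

# Ask nvidia-smi for used and total memory per GPU, in MiB, as bare CSV.
NVIDIA_SMI_QUERY = [
    "nvidia-smi",
    "--query-gpu=memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_memory_csv(output: str) -> List[Tuple[int, int]]:
    """Parse lines like '1024, 49140' into (used_mib, total_mib), one tuple per GPU."""
    rows = []
    for line in output.strip().splitlines():
        used_text, total_text = line.split(",")
        rows.append((int(used_text.strip()), int(total_text.strip())))
    return rows


def fits_on_gpu(model_gib: float, used_mib: int, total_mib: int, reserve_mib: int = 2048) -> bool:
    """True if a model of model_gib GiB fits in the free memory minus an assumed reserve."""
    free_mib = total_mib - used_mib - reserve_mib
    return model_gib * 1024 <= free_mib


def query_gpu_memory() -> List[Tuple[int, int]]:
    """Run nvidia-smi and return (used_mib, total_mib) for each GPU."""
    result = subprocess.run(NVIDIA_SMI_QUERY, capture_output=True, text=True, check=True)
    return parse_memory_csv(result.stdout)


if __name__ == "__main__":
    try:
        for index, (used, total) in enumerate(query_gpu_memory()):
            print(f"GPU {index}: {used} MiB used of {total} MiB")
    except (FileNotFoundError, subprocess.CalledProcessError):
        print("nvidia-smi is not available on this machine")
```

On a multi-GPU system the query returns one line per card, so the same parser covers that case too.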

Data:

| Model | Processing Speed (tokens/second) | Quantization | Notes |
|---|---|---|---|
| Llama 3 8B | 3621.81 | Q4 | The A6000 processes the smaller Llama 3 8B very quickly at Q4 |
| Llama 3 8B | 4315.18 | F16 | Faster than Q4 here, even though F16 is the higher-precision format |
| Llama 3 70B | 466.82 | Q4 | Even with Q4, the 70B model is roughly 8x slower to process than the 8B model |

Conclusion: Your RTX A6000 is a workhorse, but make sure it's not bogged down by memory constraints. Consider multi-GPU setups for demanding tasks.
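
The "processing speed" figures here are far higher than the "token speed" figures in the quantization section. A rough way to combine them is sketched below, under an assumption this guide does not state explicitly: that processing speed is the rate at which the prompt is read and token speed is the rate at which the reply is generated. The prompt and reply lengths are made-up example values.

```python
def estimate_latency_seconds(
    prompt_tokens: int,
    output_tokens: int,
    prompt_tps: float,
    generation_tps: float,
) -> float:
    """Estimated response time: time to read the prompt plus time to generate the reply."""
    return prompt_tokens / prompt_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # (processing speed, token speed) in tokens/second, taken from this guide's tables.
    measured = {
        "Llama 3 8B Q4": (3621.81, 102.22),
        "Llama 3 8B F16": (4315.18, 40.25),
        "Llama 3 70B Q4": (466.82, 14.58),
    }
    for name, (prompt_tps, generation_tps) in measured.items():
        seconds = estimate_latency_seconds(2000, 300, prompt_tps, generation_tps)
        print(f"{name}: ~{seconds:.1f} s for a 2000-token prompt and a 300-token reply")
```

Under that assumption, generation dominates the total for typical chat-sized requests, which is why the Q4 8B model still finishes first even though F16 reads the prompt faster.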

3. Model Selection: Choosing the Right Ensemble

Understanding Model Size:

The size of your LLM is a key factor in performance. Larger models are more powerful, but they also require more processing power and memory. It's like choosing the right orchestra for your concert – a small chamber ensemble might be perfect for an intimate performance, while a full symphony is needed for a grand event.

Choosing the Right Model:

- For Efficiency: Consider smaller, optimized models like Llama 3 8B. These are great for tasks that don't require the full power of a 70B model.

- For Power: For extremely complex tasks, larger models like Llama 3 70B are your best bet. It's like having the entire orchestra at your disposal!

Data:

| Model | Token Speed (tokens/second) | Quantization | Notes |
|---|---|---|---|
| Llama 3 8B | 102.22 | Q4 | The A6000 handles this smaller model at high speed |
| Llama 3 70B | 14.58 | Q4 | The larger model still runs at a usable speed, but it is about 7x slower |

Conclusion: Choose a model that fits your needs. Don't over-engineer your setup with a massive model if a smaller one will suffice.

4. The Art of Prompt Engineering: Guiding the Orchestra

The Power of the Prompt:

Your prompt is the conductor's score – it guides and directs the LLM's output. A well-crafted prompt can make a huge difference in the quality and speed of your results. Think of it as giving the orchestra the right sheet music for the performance you want.

Key Techniques:

- Clarity: Be specific and clear in your prompts. The more precise your instructions, the better the LLM can understand your intent.

- Context: Provide background and relevant information to help the LLM understand the task at hand.

- Iteration: Experiment with different prompts to see what works best for your particular needs.
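
These techniques can be sketched as a small helper that assembles a prompt from labeled parts. The section labels ("Context", "Task", "Constraints") and the function name are choices made for this illustration, not a format any particular model requires.

```python
from typing import Optional, Sequence


def build_prompt(task: str, context: str = "", constraints: Optional[Sequence[str]] = None) -> str:
    """Assemble a prompt with optional context, a clear task, and explicit constraints."""
    parts = []
    if context.strip():
        parts.append("Context:\n" + context.strip())
    parts.append("Task:\n" + task.strip())
    if constraints:
        parts.append("Constraints:\n" + "\n".join(f"- {item}" for item in constraints))
    return "\n\n".join(parts)


if __name__ == "__main__":
    # Iteration: keep several variants of the same task and compare the outputs.
    variants = [
        build_prompt("Summarize the release notes."),
        build_prompt(
            "Summarize the release notes for a non-technical audience.",
            context="The notes describe a GPU driver update.",
            constraints=["Use at most three sentences", "Avoid jargon"],
        ),
    ]
    for number, prompt in enumerate(variants, start=1):
        print(f"--- Variant {number} ---\n{prompt}\n")
```

Keeping the variants in one place makes it easier to run each through your model and compare the results side by side.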

Data:

This section doesn't contain specific data, but the effectiveness of a good prompt can be measured indirectly by observing the quality and relevance of the output.

Conclusion: Spend time crafting your prompts – it's one of the most powerful ways to improve your LLM's performance.

5. Fine-Tuning: Customizing the Orchestra

What is Fine-Tuning?

Fine-tuning is the process of training a pre-trained LLM on a specific dataset to improve its performance on your particular task. Think of it as customizing the orchestra's performance for a specific audience – by practicing and tailoring their performance, they can create a more refined sound for your particular concert.

How It Benefits Performance:

- Increased Relevance: Fine-tuning can make your LLM more accurate and relevant to your specific use case.

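- Improved Efficiency: Fine-tuning does not by itself make a model generate tokens faster, but a smaller fine-tuned model can often match a larger general-purpose model on a narrow task, which lets you run the smaller, faster model instead.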

Data:

This section doesn't contain specific data, but it's important to understand that fine-tuning can significantly improve output quality on the task it targets.

Conclusion: If you need a highly specialized LLM, fine-tuning is a powerful tool that can significantly enhance its performance.

6. The Importance of the Environment: A Stable Stage for Your LLM

System Resources:

Your system's overall performance can impact your LLM's speed. Make sure you have enough RAM, disk space, and CPU power to support your LLM's operations. It's like ensuring your orchestra has enough space to perform and a steady power supply for their instruments.
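
A quick pre-flight check of CPU count and disk space can be done with the standard library alone, as in the sketch below. The function names and the 40 GB model size are hypothetical; checking installed RAM portably needs a third-party package or platform-specific calls, so it is left out here.

```python
import os
import shutil
from typing import Dict, Tuple


def system_summary(path: str = ".") -> Dict[str, float]:
    """Report logical CPU count and disk space (in GB) for the drive holding `path`."""
    usage = shutil.disk_usage(path)
    return {
        "cpu_count": os.cpu_count() or 0,
        "disk_free_gb": usage.free / 1e9,
        "disk_total_gb": usage.total / 1e9,
    }


def has_disk_space(path: str, required_gb: float) -> Tuple[bool, float]:
    """Return (enough_space, free_gb) for storing a model file of required_gb GB."""
    free_gb = shutil.disk_usage(path).free / 1e9
    return free_gb >= required_gb, free_gb


if __name__ == "__main__":
    print(system_summary("."))
    ok, free_gb = has_disk_space(".", required_gb=40.0)  # hypothetical model file size
    print(f"Room for a 40 GB model file: {ok} ({free_gb:.0f} GB free)")
```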

Software Optimizations:

Choose the right libraries and tools for your LLM. Look for inference software built on NVIDIA's GPU libraries (e.g., CUDA, cuDNN), and prefer efficient code paths that minimize processing overhead.

Data:

This section doesn't contain specific data, but the impact of system resources and software optimizations can be measured indirectly by monitoring the overall speed and stability of the LLM.
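
Monitoring speed can be as simple as timing a few generations. The sketch below wraps any function that takes a prompt and returns a list of tokens; `fake_generate` is a stand-in invented for this example, to be replaced by a call into whatever inference library you actually use.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(
    generate: Callable[[str], List[str]], prompt: str, runs: int = 3
) -> float:
    """Average generation speed over several runs, in tokens per second."""
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        tokens = generate(prompt)
        total_seconds += time.perf_counter() - start
        total_tokens += len(tokens)
    if total_seconds == 0:
        return float("inf")  # too fast to measure with this timer
    return total_tokens / total_seconds


def fake_generate(prompt: str) -> List[str]:
    """Placeholder model: sleeps briefly and echoes the prompt's words as 'tokens'."""
    time.sleep(0.01)
    return prompt.split()


if __name__ == "__main__":
    speed = measure_tokens_per_second(fake_generate, "the quick brown fox jumps", runs=5)
    print(f"Measured speed: {speed:.1f} tokens/second")
```

Running the same measurement before and after a driver update, a library change, or a settings tweak gives a like-for-like comparison of its effect.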

Conclusion: Ensure your environment is stable and optimized to support your LLM's needs.

Frequently Asked Questions (FAQ)

Q: What is the best way to choose an LLM for my RTX A6000?

A: Think about your specific needs:

- Memory: Larger models like Llama 3 70B require more memory, so check that the model, at the quantization level you plan to use, fits within the A6000's 48GB.

- Performance: For smaller models like Llama 3 8B, the A6000 can provide lightning-fast speeds.

- Task Complexity: If you need a powerful model for complex tasks, consider a larger LLM.

Q: How can I optimize my prompts for my RTX A6000?

A: Follow these tips:

- Clarity: Be specific and concise.

- Context: Provide relevant information to guide the LLM.

- Iteration: Experiment with different prompts to find what works best.

Q: What are some good resources for learning more about LLM optimization?

A: Check out these websites:

- Hugging Face: A hub for LLMs, with tools for fine-tuning and deployment.

- NVIDIA Developer: Resources on GPU acceleration and AI development.

- Google AI: Learn about cutting-edge research and tools for LLMs.

Keywords

Large Language Model (LLM), NVIDIA RTX A6000, GPU, performance optimization, speed, tokens per second, quantization, fine-tuning, prompt engineering, Llama 3, memory, processing power, inference, multi-GPU, CUDA, cuDNN, Hugging Face, NVIDIA Developer, Google AI.