6 Noise Reduction Strategies for Your NVIDIA 3090 24GB x2 Setup

Introduction

Are you ready to dive headfirst into the world of large language models (LLMs)? These AI marvels can generate and translate text, write many kinds of creative content, and answer your questions. But before you unleash them on your NVIDIA 3090_24GB_x2 setup, there's one crucial aspect to consider: noise.

Just like the static in a radio signal can drown out your favorite song, noise can keep your LLM from performing at its peak. We're not talking about literal noise, but about computational “static”: wasted memory, unnecessary precision, and idle hardware that hold back your LLM's processing efficiency.

In this guide, we'll explore six practical noise reduction strategies specifically designed for your NVIDIA 3090_24GB_x2 setup, helping you get the most out of your LLMs.

1. Quantization: Turning Down the Volume

Quantization is like turning down the volume knob on your LLM. It reduces the precision of the model's weights by representing them with fewer bits, for example 4 bits per weight instead of the 16 bits used by F16. Think of it this way: instead of using thousands of shades of grey to paint a picture, you might use just sixteen. The picture might be a little less detailed, but it still conveys the essential information.
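To make "fewer bits" concrete, here is a minimal Python sketch of symmetric 4-bit block quantization: each block of 32 weights shares one scale, and every weight is rounded to an integer in the signed 4-bit range. This simplified scheme, the block size of 32, and the helper names are illustrative assumptions; llama.cpp's Q4_K_M format is more elaborate.

```python
import numpy as np

BLOCK = 32  # weights per block (illustrative choice)


def quantize_4bit(w: np.ndarray):
    """Quantize a 1-D float array (length divisible by BLOCK) to 4-bit ints plus one scale per block."""
    blocks = w.reshape(-1, BLOCK)
    # Scale each block so that its largest magnitude maps to the integer 7.
    scales = np.abs(blocks).max(axis=1, keepdims=True) / 7.0
    scales[scales == 0] = 1.0  # guard against all-zero blocks
    # Signed 4-bit range is [-8, 7]; held in int8 here, a real format packs two values per byte.
    q = np.clip(np.round(blocks / scales), -8, 7).astype(np.int8)
    return q, scales.astype(np.float16)  # store scales in 16 bits


def dequantize_4bit(q: np.ndarray, scales: np.ndarray) -> np.ndarray:
    """Reconstruct approximate float weights from 4-bit ints and block scales."""
    return (q.astype(np.float32) * scales.astype(np.float32)).reshape(-1)


rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.02, size=4096).astype(np.float32)  # stand-in weights
q, scales = quantize_4bit(w)
w_hat = dequantize_4bit(q, scales)

bits_per_weight = (BLOCK * 4 + 16) / BLOCK  # 4-bit values plus one 16-bit scale per block
print("bits per weight:", bits_per_weight)  # 4.5
print("distinct levels used:", len(np.unique(q)))
print("mean absolute error:", float(np.abs(w - w_hat).mean()))
```

The per-block scale is the design choice that keeps the error small: a block of tiny weights gets a tiny scale, so its 16 levels are spaced finely.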

How it helps:

- Reduced Memory Footprint: Smaller weights mean smaller memory requirements, allowing you to fit larger models on your GPU.

- Faster Inference: Generating each token requires reading the model's weights from GPU memory, so smaller weights mean less data to move and significantly faster inference (using the model to generate output).
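A back-of-the-envelope way to see why smaller weights speed up generation: when a single request is generating text, each new token requires streaming essentially all of the model's weights from GPU memory, so memory bandwidth divided by weight size gives a rough ceiling on tokens per second. The sketch below assumes the RTX 3090's 936 GB/s memory bandwidth, an approximate parameter count, and roughly 4.85 bits per weight for Q4_K_M (an estimate, not an exact figure). It ignores the KV cache and compute time, so real throughput lands below the ceiling.

```python
BANDWIDTH_GB_S = 936.0  # RTX 3090 memory bandwidth


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def decode_ceiling(n_params: float, bits_per_weight: float) -> float:
    """Upper bound on single-stream tokens/s if every token reads all weights once."""
    return BANDWIDTH_GB_S / weight_gb(n_params, bits_per_weight)


LLAMA3_8B = 8.03e9  # approximate parameter count
ceiling_f16 = decode_ceiling(LLAMA3_8B, 16.0)
ceiling_q4 = decode_ceiling(LLAMA3_8B, 4.85)  # assumed average bits/weight for Q4_K_M

print(f"F16 ceiling:    {ceiling_f16:.0f} tokens/s (measured in this guide: 47.15)")
print(f"Q4_K_M ceiling: {ceiling_q4:.0f} tokens/s (measured in this guide: 108.07)")
```

The ceiling scales inversely with bits per weight, which is why the 4-bit version can generate so much faster than F16 even though both run on the same hardware.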

Example:

- The Llama 3 8B model, quantized to Q4_K_M (llama.cpp's 4-bit "k-quant" format, medium variant), achieves a throughput of 108.07 tokens per second for text generation on your NVIDIA 3090_24GB_x2. That's about 2.3 times the 47.15 tokens per second of the F16 (half-precision floating point) version.

For your NVIDIA 3090_24GB_x2 setup:

- Llama 3 8B: Q4_K_M is the way to go for the fastest text generation.

- Llama 3 70B: You'll need quantization to fit this beast on your dual-GPU setup: at F16, its weights alone take roughly 140 GB, far more than the 48 GB across both cards. The Q4_K_M version fits and generates 16.29 tokens per second.
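The memory arithmetic behind these recommendations is simple: parameter count times bits per weight. The sketch below uses approximate parameter counts and an assumed average of about 4.85 bits per weight for Q4_K_M, and it counts weights only; the KV cache, activations, and CUDA context need additional room.

```python
TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards

MODELS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}  # approximate parameter counts
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}  # bits per weight; the Q4_K_M value is an estimate


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Weights-only memory in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


results = {}
for model, n_params in MODELS.items():
    for fmt, bpw in FORMATS.items():
        gb = weight_gb(n_params, bpw)
        results[(model, fmt)] = gb
        verdict = "fits" if gb < TOTAL_VRAM_GB else "does not fit"
        print(f"{model:12} {fmt:7} {gb:6.1f} GB  {verdict} in {TOTAL_VRAM_GB} GB")
```

By this estimate, the 70B model at Q4_K_M leaves only about 5 GB of headroom across both cards, which is why long contexts can still push it out of memory.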

Important Notes:

- Quantization can impact the accuracy of your model. While it often offers a noticeable performance improvement, be prepared for some potential drop-off in quality if your application is sensitive to precision.

- Carefully consider the specific requirements of your LLM model, the dataset it's trained on, and your application to decide if the benefits of quantization outweigh the potential impact on accuracy.

2. Lower Precision: A Trade-off for Speed

Similar to quantization, storing model weights in a lower-precision floating-point format, such as F16 (2 bytes per weight) instead of F32 (4 bytes), boosts performance by halving the amount of data processed. In essence, you're simplifying the calculations, like saving a digital image with fewer bits per color.
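A quick NumPy experiment shows both sides of the trade-off: converting weights from F32 to F16 halves their size, and for values in F16's normal range the rounding error is at most 2^-11 (about 0.05%) relative to the original, because F16 keeps 10 explicit mantissa bits. The randomly generated values are a stand-in for real model weights.

```python
import numpy as np

rng = np.random.default_rng(0)
w32 = rng.normal(0.0, 0.02, size=1_000_000).astype(np.float32)  # stand-in weights
w16 = w32.astype(np.float16)

print("F32 size:", w32.nbytes, "bytes")  # 4 bytes per weight
print("F16 size:", w16.nbytes, "bytes")  # 2 bytes per weight

# Measure relative rounding error only where F16 has full precision
# (values below F16's smallest normal number lose extra bits).
normal = np.abs(w32) >= np.finfo(np.float16).tiny
exact = w32[normal].astype(np.float64)
rounded = w16[normal].astype(np.float64)
rel_err = np.abs(rounded - exact) / np.abs(exact)
print("max relative error:", rel_err.max())
print("bound 2**-11:      ", 2.0**-11)
```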

How it helps:

- Reduced Computational Load: Lower precision calculations require less processing power, leading to faster inference.

Example:

- For the Llama 3 8B model on your NVIDIA 3090_24GB_x2 setup, utilizing F16 (half-precision floating point) can yield a respectable 47.15 tokens/second for text generation.

For your NVIDIA 3090_24GB_x2 setup:

- Llama 3 8B: F16 is the choice when quality matters more than speed. It preserves nearly all of the model's accuracy, but it generates less than half as many tokens per second as Q4_K_M (47.15 vs. 108.07).

- Llama 3 70B: F16 is not an option on your current setup, because the weights alone need roughly 140 GB, nearly three times the 48 GB available.

Important Notes:

- While F16 offers a good balance between speed and quality, it might not be suitable for all applications. If your model requires maximum precision, F32 (full precision) is an option, but it doubles memory use again and slows inference further.

- Experiment with different precision levels and observe the impact on both performance and model accuracy to find the ideal balance.

Let's take a step back for a moment. Think of it this way:

- Quantization and lower precision are like using a different lens to view your data. You lose some fine detail, but the overall scene stays recognizable, and it is much cheaper to process.

3. Parallelism: Divide and Conquer

Parallelism is your secret weapon for taming large LLMs. It's like having a team of assistants working on different tasks simultaneously. Instead of processing data sequentially, thousands of GPU cores work in parallel, and with two cards you can also spread a model, or many requests, across both GPUs.

How it helps:

- Increased Throughput: By dividing the workload among multiple cores, your GPU can process a massive amount of data in parallel, ultimately boosting the rate at which your LLM generates tokens.

For your NVIDIA 3090_24GB_x2 setup:

- Your dual-GPU setup is perfect for harnessing parallelism!

Why this matters:

- Each NVIDIA 3090_24GB has 10,496 CUDA cores, so it is built for massively parallel work, and two of them give you 48 GB of combined memory for splitting larger models.
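One common way to use both cards is to split a model's layers between them, roughly in proportion to each GPU's memory; llama.cpp's layer split mode, for example, works along these lines. The `split_layers` helper below is a hypothetical sketch of that assignment, not any library's code. Note the consequence: for a single request, each token passes through GPU 0's layers and then GPU 1's, so the cards largely take turns. The split mainly buys memory capacity, while throughput gains come from processing several requests or sequences at once.

```python
def split_layers(n_layers: int, vram_gb: list) -> list:
    """Assign contiguous layer ranges to GPUs in proportion to their memory.

    Hypothetical helper for illustration; returns one range per GPU."""
    total = sum(vram_gb)
    assignments, start = [], 0
    for i, mem in enumerate(vram_gb):
        if i == len(vram_gb) - 1:
            end = n_layers  # the last GPU takes whatever remains
        else:
            end = min(n_layers, start + round(n_layers * mem / total))
        assignments.append(range(start, end))
        start = end
    return assignments


# Llama 3 70B has 80 transformer layers; two equal 24 GB cards.
for gpu, layers in enumerate(split_layers(80, [24, 24])):
    print(f"GPU {gpu}: layers {layers.start}-{layers.stop - 1}")
# GPU 0: layers 0-39
# GPU 1: layers 40-79
```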

Example:

- Imagine you want to translate a lengthy document. Instead of translating it sentence by sentence, you can use parallel processing to translate multiple sentences simultaneously, significantly reducing the overall time needed.

Important Notes:

- Make sure your software stack supports parallelism for optimal performance.

- Carefully consider the size of your LLM model and the complexity of your tasks when deciding how many threads to allocate. Too many threads can lead to overhead and slow things down.

4. Model Sizing: Choosing the Right Fit

Just like choosing the right size clothes, selecting the right size LLM model is crucial for efficient performance. A larger model might seem like a good choice, but it can be computationally expensive, leading to slower inference times.

How it helps:

- Optimized Resource Allocation: By choosing a model that fits your needs, you can avoid wasting computational resources on unnecessary complexity.

For your NVIDIA 3090_24GB_x2 setup:

- Llama 3 8B: This model offers a good balance between performance and size, allowing you to leverage the power of your dual-GPU setup without straining your resources.

- Llama 3 70B: While this model is powerful, it may be too large for optimal performance on your setup even with quantization: at Q4_K_M it generates 16.29 tokens per second, roughly a sixth of the 8B model's speed. Carefully weigh the benefits of its size against the impact on speed and resource utilization.

Example:

- If you only need to generate short, creative text snippets, a smaller LLM might be more efficient than a massive model capable of handling complex tasks.

Important Notes:

- Benchmark different model sizes to determine the sweet spot for your application.

- Consider the trade-off between model size and accuracy. Larger models generally tend to be more accurate, but they also require more resources.
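Benchmarking can be as simple as timing generation. The sketch below assumes a hypothetical `generate(prompt, n_tokens)` callable that wraps whatever inference library you use and returns the generated tokens. It runs an untimed warm-up pass first, so one-time costs don't skew the result, and averages several timed runs. Because it times the whole call, prompt processing is mixed into the result, so use a short prompt when you care about generation speed.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str, int], List], prompt: str,
                      n_tokens: int = 128, warmup: int = 1, runs: int = 3) -> float:
    """Return mean generated tokens/second over several timed runs.

    `generate(prompt, n_tokens)` is a hypothetical wrapper around your
    inference library that returns the list of generated tokens."""
    for _ in range(warmup):
        generate(prompt, n_tokens)  # untimed: absorbs one-time setup costs
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        tokens = generate(prompt, n_tokens)
        elapsed = time.perf_counter() - start
        rates.append(len(tokens) / elapsed)
    return sum(rates) / len(rates)


def fake_generate(prompt: str, n_tokens: int) -> List[int]:
    """Dummy stand-in that 'generates' one token per millisecond."""
    tokens = []
    for i in range(n_tokens):
        time.sleep(0.001)
        tokens.append(i)
    return tokens


print(f"{tokens_per_second(fake_generate, 'Hello', n_tokens=50):.0f} tokens/s")
```

Swap `fake_generate` for a wrapper around your real model and run it once per model size and format to compare them on your own workload.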

5. Optimization Techniques: Fine-Tuning the Engine

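Imagine your LLM as a high-performance car. To get the best mileage and speed, you need to tune its engine. For inference, that means tuning your LLM's runtime settings rather than its trained parameters.

How it helps:

- Optimized Inference Speed: Settings such as the quantization format, context length, batch size, and how many layers run on the GPU determine how fast a trained model generates text. Techniques such as gradient clipping, layer normalization, and dropout are sometimes mentioned here, but they belong to training and model architecture and do not speed up inference.

For your NVIDIA 3090_24GB_x2 setup:

- With 48 GB of combined memory, you can keep every layer of a quantized Llama 3 8B or 70B on the GPUs instead of offloading part of the model to the much slower CPU.

Example:

- Gradient clipping prevents exploding gradients during training. No gradients are computed at inference time, so it has no effect on inference speed.

- Layer normalization stabilizes training and is built into the model (Llama models use a variant called RMSNorm). It runs in every forward pass, so it is part of the model's cost rather than a speed-up. Dropout, likewise, is switched off at inference.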
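To see why dropout can't speed up a trained model, here is a minimal NumPy sketch of inverted dropout, the form commonly used in modern frameworks. In training mode it zeroes a random fraction `p` of activations and rescales the rest; in inference mode it returns its input untouched, so there is nothing to optimize. The function name and signature are illustrative, not a library API.

```python
import numpy as np


def dropout(x: np.ndarray, p: float, training: bool, rng=None) -> np.ndarray:
    """Inverted dropout: randomly zero a fraction p of values during training only."""
    if not training or p == 0.0:
        return x  # inference: identity, no extra computation
    if rng is None:
        rng = np.random.default_rng()
    keep = rng.random(x.shape) >= p  # keep each value with probability 1 - p
    return x * keep / (1.0 - p)  # rescale so the expected value is unchanged


acts = np.ones(1_000_000)
print(dropout(acts, 0.5, training=False) is acts)  # True: inference skips dropout entirely
print(round(float(dropout(acts, 0.5, training=True, rng=np.random.default_rng(0)).mean()), 2))
```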

Important Notes:

- Experiment with different optimization techniques and monitor their impact on your LLM's inference speed.

- Choose optimization techniques that are suitable for your LLM model and dataset to unlock the full potential of your hardware.

6. Software Stack: Choosing the Right Tools

Your software stack is the foundation on which your LLM runs. Just like using the right tools for a specific job, choosing the right software stack is essential for maximizing your GPU's performance.

How it helps:

- Efficient Resource Utilization: A well-optimized software stack can minimize overhead, allowing your GPU to focus on the core tasks of processing data and generating text.

For your NVIDIA 3090_24GB_x2 setup:

- llama.cpp: Popular library for running LLMs on CPUs and GPUs.

- Hugging Face Transformers: Supports various NLP models and tasks, including LLM inference.

Example:

- The llama.cpp library is designed for efficient LLM inference on CPUs and GPUs, including the NVIDIA 3090_24GB.

- Hugging Face Transformers is a versatile framework that allows you to experiment with different LLMs and optimization techniques.

Important Notes:

- Carefully research and compare different software stacks based on your specific requirements and goals.

- Explore the latest advancements in GPU-accelerated LLM inference libraries and frameworks for optimal performance.

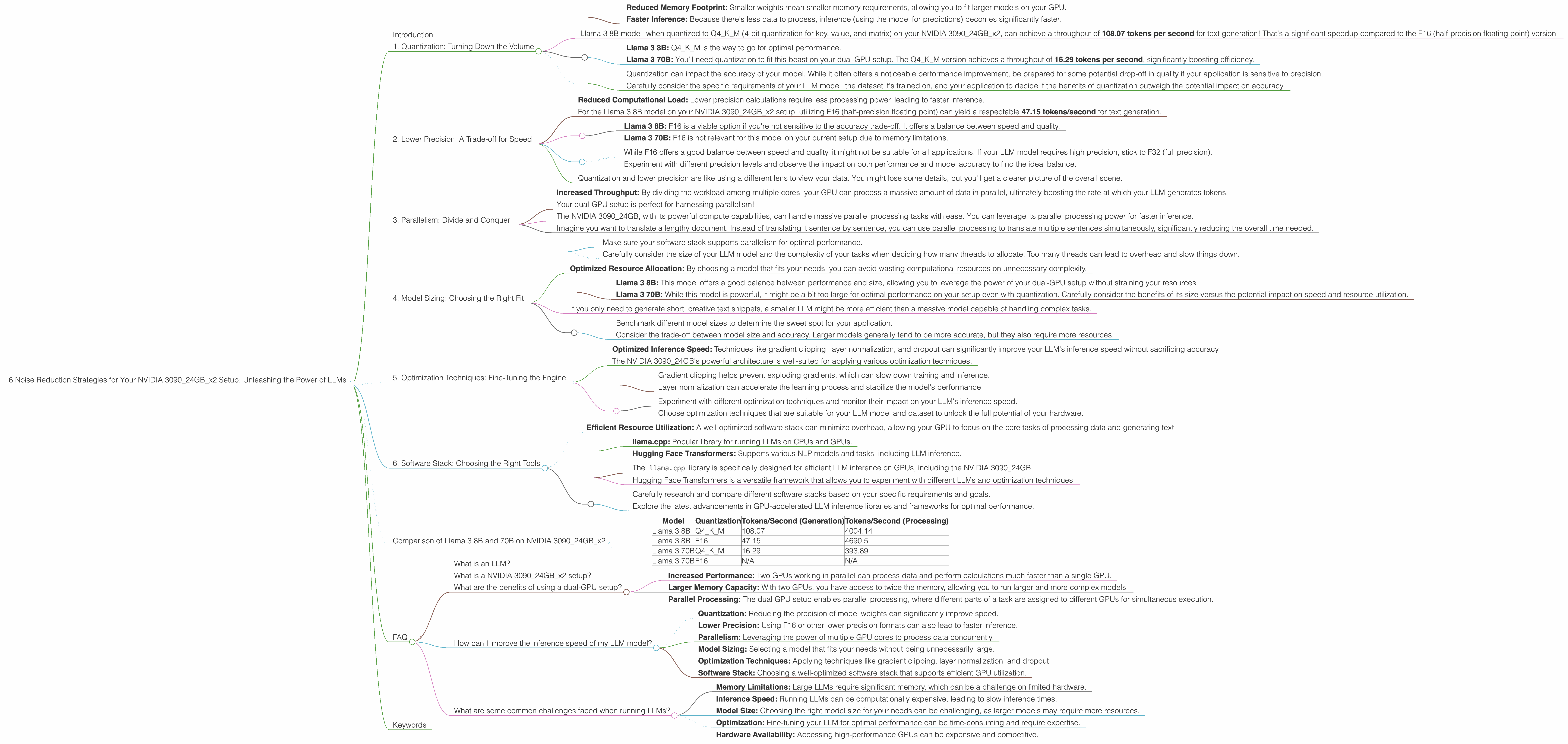

Comparison of Llama 3 8B and 70B on NVIDIA 3090_24GB_x2

| Model | Format | Generation (tokens/s) | Prompt processing (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 108.07 | 4004.14 |
| Llama 3 8B | F16 | 47.15 | 4690.5 |
| Llama 3 70B | Q4_K_M | 16.29 | 393.89 |
| Llama 3 70B | F16 | N/A (does not fit in memory) | N/A |

Numbers speak for themselves:

- Quantization more than doubles text generation speed for Llama 3 8B (108.07 vs. 47.15 tokens/s), and it is what makes Llama 3 70B runnable at all on this setup.

- Q4_K_M is not faster across the board: for prompt processing, the 8B F16 version is quicker (4690.5 vs. 4004.14 tokens/s).

- The 70B model, while more capable, is limited by memory: at F16 its weights do not fit in 48 GB.

- With Q4_K_M quantization the 70B model runs, but it generates 16.29 tokens/s, roughly a sixth of the 8B model's 108.07.
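The comparisons above can be checked directly from the table. This short script recomputes the speed ratios and converts generation throughput into per-token latency (1000 ms divided by tokens per second); the numbers are the table's, and the script only does the arithmetic.

```python
# (generation tokens/s, prompt-processing tokens/s) from the table above
BENCH = {
    ("Llama 3 8B", "Q4_K_M"): (108.07, 4004.14),
    ("Llama 3 8B", "F16"): (47.15, 4690.5),
    ("Llama 3 70B", "Q4_K_M"): (16.29, 393.89),
}

q4_gen, q4_pp = BENCH[("Llama 3 8B", "Q4_K_M")]
f16_gen, f16_pp = BENCH[("Llama 3 8B", "F16")]
big_gen, _ = BENCH[("Llama 3 70B", "Q4_K_M")]

print(f"8B generation, Q4_K_M vs F16:        {q4_gen / f16_gen:.2f}x")  # 2.29x
print(f"8B prompt processing, Q4_K_M vs F16: {q4_pp / f16_pp:.2f}x")    # 0.85x
print(f"Q4_K_M generation, 8B vs 70B:        {q4_gen / big_gen:.1f}x")  # 6.6x

for (model, fmt), (gen, _) in BENCH.items():
    print(f"{model} {fmt}: {1000 / gen:.1f} ms per generated token")
```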

FAQ

What is an LLM?

An LLM (Large Language Model) is a type of artificial intelligence trained on massive amounts of text data. It can generate and translate text, write many kinds of creative content, and answer your questions.

What is an NVIDIA 3090_24GB_x2 setup?

This refers to a computer system equipped with two NVIDIA GeForce RTX 3090 graphics cards, each with 24 GB of memory. The RTX 3090 is a consumer card, but its large memory makes it a popular choice for demanding workloads like machine learning and AI applications.

What are the benefits of using a dual-GPU setup?

A dual-GPU setup offers significant benefits, including:

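- Increased Performance: Two GPUs can share the work, which can raise total throughput, especially when handling several requests at once. How much a single request speeds up depends on how the model is split between the cards.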

- Larger Memory Capacity: With two GPUs, you have 48 GB of memory in total, allowing you to run larger models, such as a quantized Llama 3 70B, by splitting them across both cards.

- Parallel Processing: The dual GPU setup enables parallel processing, where different parts of a task are assigned to different GPUs for simultaneous execution.

How can I improve the inference speed of my LLM model?

You can improve the inference speed of your LLM model by:

- Quantization: Reducing the precision of model weights can significantly improve speed.

- Lower Precision: Using F16 or other lower precision formats can also lead to faster inference.

- Parallelism: Leveraging the power of multiple GPU cores to process data concurrently.

- Model Sizing: Selecting a model that fits your needs without being unnecessarily large.

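- Optimization Techniques: Tuning inference settings such as the quantization format, context length, and batch size. (Gradient clipping, layer normalization, and dropout are training and architecture concepts, not inference speed-ups.)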

- Software Stack: Choosing a well-optimized software stack that supports efficient GPU utilization.

What are some common challenges faced when running LLMs?

- Memory Limitations: Large LLMs require significant memory, which can be a challenge on limited hardware.

- Inference Speed: Running LLMs can be computationally expensive, leading to slow inference times.

- Model Size: Choosing the right model size for your needs can be challenging, as larger models may require more resources.

- Optimization: Fine-tuning your LLM for optimal performance can be time-consuming and require expertise.

- Hardware Availability: Accessing high-performance GPUs can be expensive and competitive.
