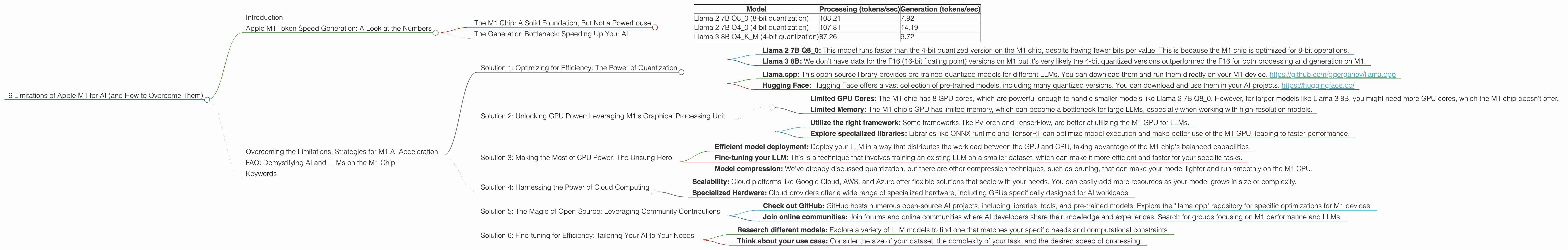

6 Limitations of Apple M1 for AI (and How to Overcome Them)

Introduction

The Apple M1 chip has revolutionized the way we think about computing, offering incredible performance and efficiency for everyday tasks. But what about its capabilities for a more demanding field like AI? While the M1 chip can handle basic AI workloads, it faces several limitations when it comes to running large language models (LLMs).

This article explores those limitations, using real-world data to demonstrate the shortcomings of the M1 chip in powering LLMs. We'll then dive into the specific challenges and provide practical solutions to overcome them, empowering you to unlock the full potential of AI on your M1 device.

Apple M1 Token Speed Generation: A Look at the Numbers

Let's start by looking at the key metric that determines LLM performance: tokens per second (tokens/sec). This tells us how fast a device can process and generate text, which directly translates to how quickly an LLM can respond to your prompts and complete tasks.

We'll be focusing on the M1 chip and its ability to run different LLMs. We'll use data from performance benchmarks to illustrate the limitations and how to overcome them.

The M1 Chip: A Solid Foundation, But Not a Powerhouse

The M1 chip boasts a powerful GPU and fast memory, but it's still not designed to be a high-performance AI machine.

Here's a glimpse of the token-per-second rates for the M1 chip running various LLMs:

| Model | Processing (tokens/sec) | Generation (tokens/sec) |
|---|---|---|
| Llama 2 7B Q8_0 (8-bit quantization) | 108.21 | 7.92 |
| Llama 2 7B Q4_0 (4-bit quantization) | 107.81 | 14.19 |
| Llama 3 8B Q4_K_M (4-bit quantization) | 87.26 | 9.72 |

Whoa, hold on! What are these "quantization" things? Let's break it down:

- Quantization is like a clever way to shrink the size of an LLM without sacrificing too much accuracy. It's like reducing the number of colors in an image - you lose a little bit of detail, but the overall picture is still recognizable.

- Q8_0 (8-bit quantization) means the model is compressed to 8 bits per value. Think of it like using fewer shades of gray in a photo.

- Q4_0 (4-bit quantization) is even more compressed, using only 4 bits per value. This is like using even fewer shades of gray, but still preserving the essential information.
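To make the idea concrete, here is a minimal sketch of quantizing a small block of weights to 8 and 4 bits and then restoring them. It is a simplified illustration, not the exact Q8_0 or Q4_0 layout: the `quantize` and `dequantize` helpers and the sample weights are made up for this example, and the real formats differ in detail.

```python
def quantize(values, bits):
    """Symmetric quantization of one block of floats to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1                      # 127 for 8-bit, 7 for 4-bit
    scale = max(abs(v) for v in values) / qmax or 1.0  # one shared scale per block
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Turn the integer codes back into approximate floats."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

for bits in (8, 4):
    codes, scale = quantize(weights, bits)
    restored = dequantize(codes, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"{bits}-bit codes: {codes}  max error: {worst:.4f}")
```

The 4-bit version has only 15 usable levels, so its rounding error is visibly larger than the 8-bit version's. That is the "fewer shades of gray" trade-off in numbers.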

The Generation Bottleneck: Speeding Up Your AI

The data shows a clear bottleneck: the M1 chip struggles to generate text quickly. It processes the input prompt at roughly 87 to 108 tokens/sec, but it generates output at only about 8 to 14 tokens/sec, around ten times slower. This can be a problem if you need rapid responses from your LLM, such as in real-time chat applications.

Imagine this: You're having a conversation with a chatbot powered by an LLM running on the M1 chip. You type in your question, and the chatbot takes its sweet time to respond. This slow response might make you lose interest in the conversation.

This slow generation speed is a common issue with many LLMs running on devices with limited computational power. The good news is that there are ways to address this limitation.
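To put the bottleneck in concrete terms, the table's rates can be turned into a rough response time. The sketch below assumes a 100-token prompt and a 200-token reply (both made-up sizes) and ignores model loading and other overhead.

```python
# Rates taken from the benchmark table: (processing, generation) in tokens/sec.
RATES = {
    "Llama 2 7B Q8_0": (108.21, 7.92),
    "Llama 2 7B Q4_0": (107.81, 14.19),
    "Llama 3 8B Q4_K_M": (87.26, 9.72),
}


def response_seconds(prompt_tokens, output_tokens, processing_rate, generation_rate):
    """Rough latency: time to read the prompt plus time to write the reply."""
    return prompt_tokens / processing_rate + output_tokens / generation_rate


# Assumed workload: 100-token prompt, 200-token reply.
for name, (processing, generation) in RATES.items():
    seconds = response_seconds(100, 200, processing, generation)
    print(f"{name}: about {seconds:.1f} s")
```

Under these assumptions the Q8_0 model needs about 26 seconds, Q4_0 about 15 seconds, and Llama 3 8B about 22 seconds. In each case roughly one second goes to reading the prompt and the rest to generation.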

Overcoming the Limitations: Strategies for M1 AI Acceleration

Here's a breakdown of common limitations you might encounter and solutions to overcome them:

Solution 1: Optimizing for Efficiency: The Power of Quantization

Remember those quantized models? They are more than just space-saving tricks - they can actually increase the performance of your LLMs on devices like the M1 chip!

By using more aggressively quantized models, you can achieve faster generation speeds and a smaller memory footprint, which matters most on devices with limited memory and computational power.

Look at these examples:

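- Llama 2 7B Q4_0: This model generates text almost twice as fast as the 8-bit Q8_0 version on the M1 chip (14.19 vs. 7.92 tokens/sec), while prompt processing is essentially the same (107.81 vs. 108.21 tokens/sec). Generation speed depends heavily on how much data must be read from memory for each token, and the 4-bit model is roughly half the size.

- Llama 3 8B: We don't have data for the F16 (16-bit floating point) version on M1, but the 4-bit quantized version would very likely outperform it for both processing and generation. At 16 bits per value, an 8B model needs about 16 GB for its weights alone, which is as much memory as the largest M1 configuration offers.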

You can think of it this way: Quantization is like a fitness challenge for your LLM, where it has to learn to do more with less. The M1 chip is a great gym for these slimmed-down models, allowing them to work out and perform better.
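One rough way to see why smaller models generate faster is to assume that every weight must be read from memory once per generated token. Memory bandwidth divided by model size then gives an upper bound on generation speed. The figures below are assumptions rather than numbers from the benchmarks: an approximate parameter count for Llama 2 7B, approximate effective bits per weight for each format, and the base M1's peak memory bandwidth.

```python
# Assumptions (not from the benchmark data):
PARAMS = 6.74e9                                # Llama 2 7B parameter count, approximate
BANDWIDTH_GB_S = 68.25                         # base M1 peak memory bandwidth
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}   # includes per-block scale overhead

# Measured generation rates from the table (tokens/sec).
MEASURED = {"Q8_0": 7.92, "Q4_0": 14.19}


def model_gb(params, bits_per_weight):
    """Approximate size of the weights in gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def max_tokens_per_sec(params, bits_per_weight, bandwidth_gb_s):
    """Upper bound if all weights are read from memory once per token."""
    return bandwidth_gb_s / model_gb(params, bits_per_weight)


for fmt, bits in BITS_PER_WEIGHT.items():
    size = model_gb(PARAMS, bits)
    bound = max_tokens_per_sec(PARAMS, bits, BANDWIDTH_GB_S)
    print(f"{fmt}: ~{size:.2f} GB, bound ~{bound:.1f} tok/s, measured {MEASURED[fmt]} tok/s")
```

With these assumptions the bound comes out near 9.5 tokens/sec for Q8_0 and 18 tokens/sec for Q4_0. The measured 7.92 and 14.19 sit just below those ceilings, which suggests generation on the M1 is limited mainly by memory bandwidth rather than raw compute.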

How to Get Your Hands on Quantized Models:

- Llama.cpp: This open-source inference engine runs quantized models (in its GGUF file format) directly on your M1 device, with GPU acceleration through Metal. It also includes tools for quantizing models yourself. https://github.com/ggerganov/llama.cpp

- Hugging Face: Hugging Face offers a vast collection of pre-trained models, including many quantized versions. You can download and use them in your AI projects. https://huggingface.co/
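Once a quantized model file is on disk, loading it from Python might look like the sketch below. It assumes the `llama-cpp-python` bindings are installed and that a GGUF file exists at the hypothetical path shown. Neither the package nor the file name comes from the benchmarks above, and `load_kwargs` and `generate` are illustrative helpers.

```python
from pathlib import Path

# Hypothetical local path; point this at whichever GGUF file you downloaded.
MODEL_PATH = Path("models/llama-2-7b.Q4_0.gguf")


def load_kwargs(model_path, use_gpu=True, context_tokens=2048):
    """Build constructor arguments for llama_cpp.Llama."""
    return {
        "model_path": str(model_path),
        "n_ctx": context_tokens,
        # -1 offloads all layers to the GPU (Metal on M1); 0 keeps them on the CPU.
        "n_gpu_layers": -1 if use_gpu else 0,
    }


def generate(prompt, max_tokens=64):
    """Load the model and return the generated continuation of `prompt`."""
    # Imported lazily so the helper above can be used without the package installed.
    from llama_cpp import Llama

    llm = Llama(**load_kwargs(MODEL_PATH))
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]


# Only runs when the model file is actually present.
if __name__ == "__main__" and MODEL_PATH.exists():
    print(generate("Q: What is quantization? A:"))
```

Keeping the constructor arguments in one helper makes it easy to compare GPU and CPU-only runs by changing only `use_gpu`.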

Solution 2: Unlocking GPU Power: Leveraging M1's Graphical Processing Unit

The M1 chip has a powerful GPU that can be used to accelerate LLM processing and generation. However, the M1 GPU has limited capacity, especially when working with larger LLMs.

Here's where the limitations come in:

- Limited GPU Cores: The base M1 chip has 7 or 8 GPU cores, which are powerful enough to handle smaller quantized models like Llama 2 7B. However, larger or higher-precision models demand more compute than those cores can deliver quickly, and the core count cannot be upgraded.

- Limited Memory: The M1's GPU has no dedicated memory. It shares the chip's 8 GB or 16 GB of unified memory with the CPU and the rest of the system, which can become a bottleneck for large LLMs, especially in higher-precision formats such as F16.

The solution?

- Utilize the right framework: Frameworks like PyTorch (through its MPS backend) and TensorFlow (through the tensorflow-metal plugin) can run models on the M1 GPU.

- Explore specialized libraries: ONNX Runtime (through its Core ML execution provider) and Apple's own Core ML and MLX frameworks can optimize model execution on Apple silicon. TensorRT, by contrast, targets NVIDIA GPUs only and does not run on the M1.
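With PyTorch, a quick way to check whether the M1 GPU is actually being used is to ask for the MPS (Metal Performance Shaders) device. This is a minimal sketch: `pick_device` is an illustrative helper, and it falls back to the CPU when PyTorch is missing or too old to include the MPS backend.

```python
def pick_device():
    """Return 'mps' when PyTorch can use the Apple GPU, otherwise 'cpu'."""
    try:
        import torch
    except ImportError:
        return "cpu"  # PyTorch is not installed, so there is nothing to accelerate

    # Older PyTorch builds have no MPS backend at all.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"
    return "cpu"


device = pick_device()
print(f"Running on: {device}")
```

A model or tensor is then moved to the chosen device with `.to(device)`. If the printed device is `cpu` on an M1 Mac, the installed PyTorch build is the first thing to check.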

Solution 3: Making the Most of CPU Power: The Unsung Hero

The M1 chip's CPU is quite efficient and can handle basic LLM operations, like token processing. However, with larger LLMs, the CPU can become overwhelmed and slow down performance.

Here's the workaround:

- Efficient model deployment: Deploy your LLM in a way that distributes the workload between the GPU and CPU, taking advantage of the M1 chip's balanced capabilities.

- Fine-tuning a smaller LLM: Fine-tuning trains an existing LLM further on a smaller, task-specific dataset. It does not make a model faster by itself, but it can let a smaller, faster model reach the quality you need for your specific tasks.

- Model compression: We've already discussed quantization, but there are other compression techniques, such as pruning, that can make your model lighter and run smoothly on the M1 CPU.
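The idea of splitting the workload between GPU and CPU can be sketched as a simple budget calculation: given a model's size, its layer count, and the memory you are willing to give the GPU, how many layers fit? The helper `layers_on_gpu`, the 1 GB overhead reserve, and the 5 GB budget are all assumptions for illustration. The model sizes are approximate, and real layers are not exactly equal in size.

```python
def layers_on_gpu(model_gb, n_layers, gpu_budget_gb, overhead_gb=1.0):
    """Estimate how many equally sized layers fit in the GPU memory budget.

    overhead_gb reserves room for the KV cache and scratch buffers
    (an assumed figure, not a measured one).
    """
    per_layer_gb = model_gb / n_layers
    usable_gb = max(0.0, gpu_budget_gb - overhead_gb)
    return min(n_layers, int(usable_gb / per_layer_gb))


# Llama 2 7B has 32 transformer layers; sizes below are approximate.
for name, size_gb in (("Q8_0", 7.16), ("Q4_0", 3.79)):
    n = layers_on_gpu(size_gb, n_layers=32, gpu_budget_gb=5.0)
    print(f"{name}: offload {n} of 32 layers to the GPU")
```

With a 5 GB budget this puts 17 of 32 layers on the GPU for Q8_0 and all 32 for Q4_0. In llama.cpp, a number like this is what you would pass to the `--n-gpu-layers` option, with the remaining layers running on the CPU.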

Solution 4: Harnessing the Power of Cloud Computing

The cloud offers a vast pool of computational resources that can handle even the most demanding AI workloads. This can be a lifesaver when you're working with large LLMs that tax the M1 chip's capabilities.

Here's how cloud computing can help:

- Scalability: Cloud platforms like Google Cloud, AWS, and Azure offer flexible solutions that scale with your needs. You can easily add more resources as your model grows in size or complexity.

- Specialized Hardware: Cloud providers offer a wide range of specialized hardware, including GPUs specifically designed for AI workloads.

Here's a real-world example:

Imagine you're building an AI-powered chatbot for your business. You could deploy your LLM on your M1 device, but you might encounter performance issues when handling many concurrent users. In this case, using a cloud-based service would be a much better option.

Solution 5: The Magic of Open-Source: Leveraging Community Contributions

The open-source ecosystem is a treasure trove of tools and resources for AI development. Many developers are constantly working on improving LLM performance, especially on devices with limited resources.

Here's how to tap into this community:

- Check out GitHub: GitHub hosts numerous open-source AI projects, including libraries, tools, and pre-trained models. Explore the "llama.cpp" repository for specific optimizations for M1 devices.

- Join online communities: Join forums and online communities where AI developers share their knowledge and experiences. Search for groups focusing on M1 performance and LLMs.

Solution 6: Fine-tuning for Efficiency: Tailoring Your AI to Your Needs

Remember that not all LLMs are created equal. Some are designed for specific tasks and might be more efficient for certain applications.

Here's how to find the right fit:

- Research different models: Explore a variety of LLM models to find one that matches your specific needs and computational constraints.

- Think about your use case: Consider the size of your dataset, the complexity of your task, and the desired speed of processing.

FAQ: Demystifying AI and LLMs on the M1 Chip

Q: What are the best LLMs to run on the M1 chip?

A: The best LLMs for the M1 chip are smaller quantized models like Llama 2 7B Q8_0, Llama 2 7B Q4_0, or Llama 3 8B Q4_K_M. Of the models benchmarked above, Llama 2 7B Q4_0 generates text the fastest at 14.19 tokens/sec. Larger models like Llama 3 70B are too demanding for the M1: even at 4 bits per value, the weights alone need roughly 35 GB, well beyond the M1's 16 GB maximum.

Q: Can I run LLMs on my M1 Mac without coding?

A: Yes. Desktop applications such as LM Studio and GPT4All provide a graphical interface for downloading quantized models and chatting with them locally. These tools offer a more accessible way to experience the power of LLMs without needing to write code.

Q: How much RAM do I need to run LLMs on the M1 chip?

A: The amount of RAM you need depends on the size of the LLM and how it is quantized. For 7B to 8B models at 4-bit quantization (roughly 4 to 5 GB of weights), 8GB of RAM might be sufficient. For the same models at 8-bit quantization (roughly 7 to 8.5 GB of weights), it's generally recommended to have at least 16GB of RAM. You can still attempt to run a model that doesn't fit comfortably in 8GB using memory mapping, which loads parts of the model file from disk on demand, but expect a substantial slowdown whenever data has to be re-read from disk.

Q: What are the future prospects for AI on the M1 chip?

A: Apple continues to build on the M1 with its successors. We can expect future generations of Apple silicon to offer increased computational power, memory capacity, and memory bandwidth to better support AI workloads, including larger and more complex LLMs.

Keywords

Apple M1, LLM, large language model, AI, token speed, generation, quantization, processing, GPU, CPU, cloud computing, open-source, fine-tuning, Llama 2, Llama 3, limitations, solutions, performance, efficiency, memory, speed.