6 Key Factors to Consider When Choosing Between NVIDIA RTX 5000 Ada 32GB and NVIDIA A100 SXM 80GB for AI

Introduction

The world of Large Language Models (LLMs) is rapidly evolving, and with it, the need for powerful hardware to run them locally. This article explores the key factors to consider when choosing between the NVIDIA RTX 5000 Ada 32GB and the NVIDIA A100 SXM 80GB for AI, focusing on their measured performance when running LLMs. Both GPUs are capable, but they cater to different needs and budgets, so it pays to understand their strengths and weaknesses before making a decision.

Understanding the Battlefield: NVIDIA RTX 5000 Ada 32GB vs. NVIDIA A100 SXM 80GB

Imagine two knights, each wielding powerful weapons, ready to duel in the realm of AI: The RTX 5000 Ada 32GB, a nimble and agile warrior, and the A100 SXM 80GB, a colossal behemoth of power.

The RTX 5000 Ada 32GB is the more affordable option: a professional workstation card with 32GB of GDDR6 memory, aimed at creators, engineers, and developers rather than gamers. The A100 SXM 80GB, on the other hand, is a data center accelerator with a massive 80GB of HBM2e memory, built for large-scale AI workloads.

Comparing Performance: Token Generation Speed Showdown

Let's dive into the heart of the matter: the performance of these GPUs when running LLM models.

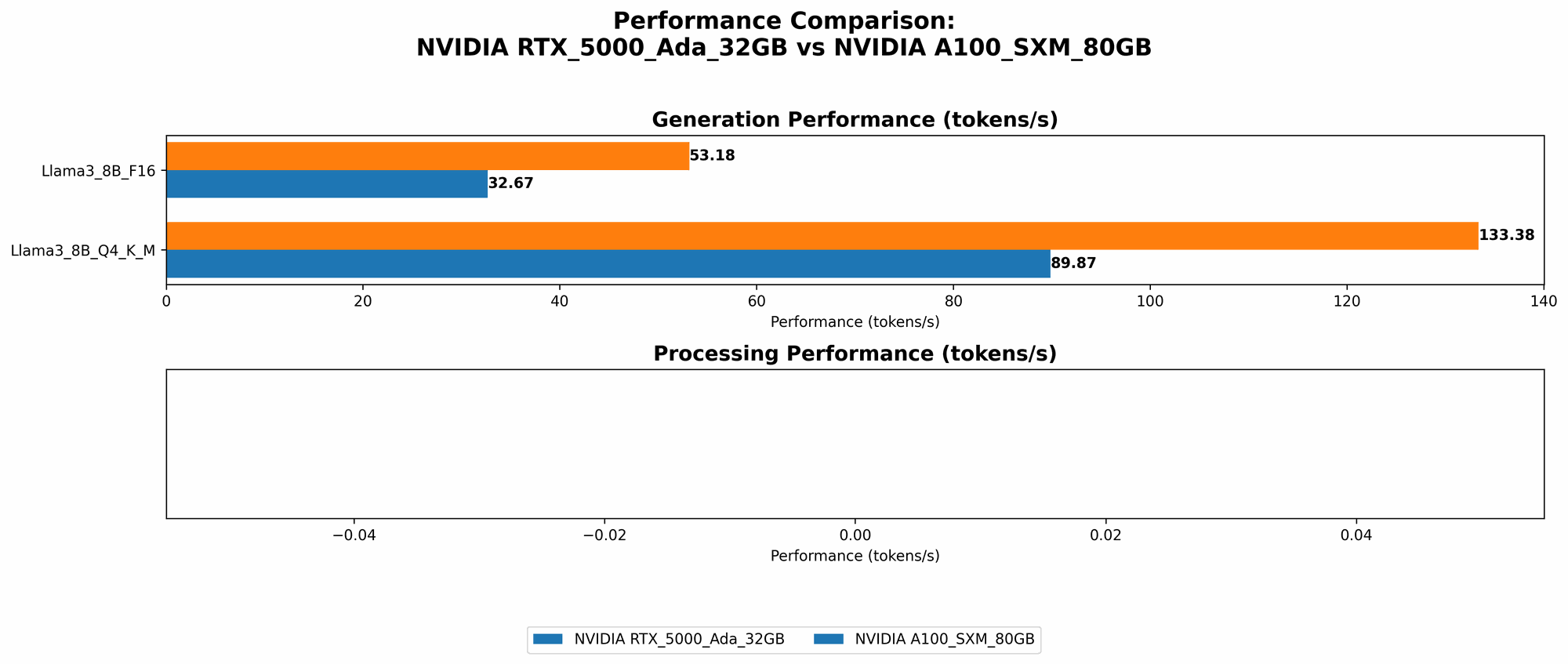

1. Llama 3 8B Token Generation Speed Comparison

| GPU | Quantization | Token Speed (Tokens/second) |
|---|---|---|
| NVIDIA RTX 5000 Ada 32GB | Q4_K_M | 89.87 |
| NVIDIA A100 SXM 80GB | Q4_K_M | 133.38 |
| NVIDIA RTX 5000 Ada 32GB | F16 | 32.67 |
| NVIDIA A100 SXM 80GB | F16 | 53.18 |

The Verdict: The A100 SXM 80GB emerges as the champion, outperforming the RTX 5000 Ada 32GB in both formats: it generates tokens roughly 48% faster at Q4_K_M and roughly 63% faster at F16.
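The size of the gap can be checked directly from the table. The snippet below is a minimal sketch that uses only the four measured values above; the dictionary keys and the helper name `speedup_percent` are illustrative choices, not part of any benchmark tool.

```python
# Token generation rates (tokens/second) copied from the table above.
rates = {
    "Q4_K_M": {"rtx_5000_ada": 89.87, "a100_sxm": 133.38},
    "F16": {"rtx_5000_ada": 32.67, "a100_sxm": 53.18},
}


def speedup_percent(baseline: float, contender: float) -> float:
    """Return how much faster `contender` is than `baseline`, in percent."""
    return (contender / baseline - 1.0) * 100.0


# Speedup of the A100 over the RTX 5000 Ada for each format.
speedups = {
    fmt: speedup_percent(r["rtx_5000_ada"], r["a100_sxm"])
    for fmt, r in rates.items()
}

for fmt, pct in speedups.items():
    print(f"{fmt}: A100 SXM 80GB is {pct:.1f}% faster")
```

The A100's lead is noticeably larger at F16 than at Q4_K_M, so a single headline percentage hides a real difference between the two formats.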

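Explanation: Token generation is largely limited by how fast the GPU can stream model weights from memory, and the A100's HBM2e delivers several times the bandwidth of the RTX 5000 Ada's GDDR6. That bandwidth advantage, more than raw compute, is what makes it a powerhouse for LLM inference.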
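A rough way to sanity-check the role of memory bandwidth is to compute a ceiling: if every generated token requires reading all model weights once, tokens per second cannot exceed bandwidth divided by weight size. The sketch below rests on assumptions that are not part of the benchmark data: peak bandwidths of 576 GB/s and 2,039 GB/s taken from NVIDIA's published specifications, and about 8.03 billion parameters stored at 2 bytes each for F16.

```python
# Assumed peak memory bandwidth in GB/s (vendor spec sheet values,
# not measured in this article).
PEAK_BANDWIDTH_GBPS = {"rtx_5000_ada": 576.0, "a100_sxm_80gb": 2039.0}

# Assumed weight size: ~8.03 billion parameters at 2 bytes each (F16).
WEIGHTS_GB = 8.03e9 * 2 / 1e9

# Measured F16 token generation speeds from the table above.
MEASURED_TPS = {"rtx_5000_ada": 32.67, "a100_sxm_80gb": 53.18}


def bandwidth_ceiling_tps(bandwidth_gbps: float, weights_gb: float) -> float:
    """Upper bound on tokens/s if each token reads all weights once."""
    return bandwidth_gbps / weights_gb


ceilings = {
    gpu: bandwidth_ceiling_tps(bw, WEIGHTS_GB)
    for gpu, bw in PEAK_BANDWIDTH_GBPS.items()
}

for gpu, ceiling in ceilings.items():
    share = MEASURED_TPS[gpu] / ceiling
    print(f"{gpu}: ceiling {ceiling:.1f} tok/s, "
          f"measured {MEASURED_TPS[gpu]} tok/s ({share:.0%} of ceiling)")
```

Under these assumptions the RTX 5000 Ada runs close to its ceiling while the A100 reaches well under half of its own, which suggests that software and kernel overheads, not just hardware limits, shape the measured results.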

Practical Recommendations: For developers who work with smaller models like Llama 3 8B and prioritize speed, the A100 SXM 80GB is the clear winner. If budget is a concern, though, the RTX 5000 Ada 32GB still delivers usable performance, at roughly two-thirds of the A100's Q4_K_M speed.

2. Llama 3 70B Token Generation Speed Comparison

| GPU | Quantization | Token Speed (Tokens/second) |
|---|---|---|
| NVIDIA A100 SXM 80GB | Q4_K_M | 24.33 |

The Verdict: This is where things get interesting. No RTX 5000 Ada 32GB result exists for Llama 3 70B, most likely because the model does not fit: even at Q4_K_M, 70 billion parameters need roughly 40GB for the weights alone, more than the card's 32GB. The A100 SXM 80GB can handle the workload, but at 24.33 tokens/second it runs about 5.5 times slower than it does with the 8B model.

Explanation: The A100's 80GB of memory holds the larger model comfortably, but every generated token requires reading nearly nine times as many weights, which slows generation accordingly.

Practical Recommendations: For developers working with larger models like Llama 3 70B, the A100 SXM 80GB is the only viable option of the two on a single card. However, be prepared for much slower speeds than with smaller models.
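Whether a model fits on a card can be estimated before downloading anything. The sketch below multiplies parameter count by bits per weight; the parameter counts (8.03 and 70.6 billion) and the figure of about 4.85 bits per weight for Q4_K_M are assumptions for illustration, and real files vary slightly. It also counts weights only, so the KV cache and activations need extra headroom on top.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


def fits(params_billion: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone fit in VRAM (no KV cache or activations)."""
    return weights_gb(params_billion, bits_per_weight) < vram_gb


# Assumed values, labeled here because they are not from the benchmark tables.
MODELS = {"Llama 3 8B": 8.03, "Llama 3 70B": 70.6}   # billions of parameters
FORMATS = {"Q4_K_M": 4.85, "F16": 16.0}              # average bits per weight
GPUS_GB = {"RTX 5000 Ada 32GB": 32, "A100 SXM 80GB": 80}

for model, params in MODELS.items():
    for fmt, bpw in FORMATS.items():
        size = weights_gb(params, bpw)
        verdicts = ", ".join(
            f"{gpu}: {'fits' if fits(params, bpw, vram) else 'too large'}"
            for gpu, vram in GPUS_GB.items()
        )
        print(f"{model} {fmt}: ~{size:.1f} GB ({verdicts})")
```

With these assumptions, Llama 3 70B at Q4_K_M lands above 32GB but well below 80GB, which matches the pattern of available and missing results in the tables.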

Beyond Token Generation: Processing Power for AI Workloads

While token generation speed is crucial for LLM inference, it's not the only metric to consider. Processing speed, meaning how quickly the GPU works through input tokens before it starts generating, also shapes how responsive a model feels.

3. Processing Speed Comparison: Llama 3 8B

| GPU | Quantization | Processing Speed (Tokens/second) |
|---|---|---|
| NVIDIA RTX 5000 Ada 32GB | Q4_K_M | 4467.46 |
| NVIDIA RTX 5000 Ada 32GB | F16 | 5835.41 |

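The Verdict: The RTX 5000 Ada 32GB processes Llama 3 8B input at several thousand tokens per second, roughly 50 times faster than it generates output at Q4_K_M (4467.46 vs. 89.87 tokens/second). Unlike in token generation, F16 is the faster format here. Because no A100 figures are available for this metric, no head-to-head verdict is possible in this category.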

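Explanation: Processing many tokens at once is limited mainly by compute throughput rather than memory bandwidth, and the Ada architecture's Tensor Cores handle F16 matrix math efficiently. Quantized weights also have to be converted back to a higher precision before use, which likely explains why Q4_K_M trails F16 in this test.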

Practical Recommendations: For workloads dominated by long inputs to smaller LLMs, such as summarization or retrieval-augmented generation, the RTX 5000 Ada 32GB offers a compelling price-performance ratio. Keep in mind that these figures measure inference, so they say little about training or fine-tuning speed.

Note: Processing speed data for the A100 SXM 80GB is missing for both Llama 3 70B and Llama 3 8B, in Q4_K_M as well as F16, so the two GPUs cannot be compared directly on this metric.
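The two metrics combine into a rough end-to-end estimate for a single request. The sketch below assumes that the processing speed figures describe how fast the prompt is ingested (an interpretation, not something the tables state) and uses a hypothetical request of 2,000 prompt tokens and 500 generated tokens. The function name `request_seconds` is illustrative.

```python
def request_seconds(prompt_tokens: int, output_tokens: int,
                    prompt_tps: float, generation_tps: float) -> float:
    """Estimate total time: ingest the prompt, then generate the output."""
    return prompt_tokens / prompt_tps + output_tokens / generation_tps


# RTX 5000 Ada 32GB, Llama 3 8B: values copied from the tables in this article.
RTX_5000_ADA = {
    "Q4_K_M": {"prompt_tps": 4467.46, "generation_tps": 89.87},
    "F16": {"prompt_tps": 5835.41, "generation_tps": 32.67},
}

# Hypothetical request shape, chosen only for illustration.
PROMPT_TOKENS = 2000
OUTPUT_TOKENS = 500

estimates = {
    fmt: request_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, **speeds)
    for fmt, speeds in RTX_5000_ADA.items()
}

for fmt, seconds in estimates.items():
    print(f"{fmt}: about {seconds:.1f} s per request")
```

Even though F16 ingests the prompt faster, generation dominates the total for this request shape, so Q4_K_M finishes well ahead overall.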

Practical Considerations: Weighing Cost, Power, and Memory

The performance showdown is only one piece of the puzzle. Other factors influence the choice:

4. Cost: The Price Tag for AI Performance

The A100 SXM 80GB is a hefty investment, significantly more expensive than the RTX 5000 Ada 32GB. This makes the latter a more attractive choice for developers working with limited budgets or looking for a more affordable entry point into local LLM experimentation.

5. Power Consumption: The Energy Appetite of AI

The A100 SXM 80GB is a power-hungry beast, rated for up to 400W of board power against 250W for the RTX 5000 Ada 32GB. That difference adds up in the long run, especially for users running their AI workloads continuously.

Energy efficiency has become a crucial consideration for developers seeking to optimize their AI infrastructure.
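One way to make energy efficiency concrete is energy per generated token: power in watts divided by tokens per second gives joules per token. The sketch below assumes each card draws its rated maximum board power during generation (400W for the A100 SXM 80GB and 250W for the RTX 5000 Ada 32GB, taken from NVIDIA's specifications). Real draw is usually lower, so treat the results as upper bounds rather than measurements.

```python
# Assumed maximum board power in watts (vendor ratings, not measured draw).
BOARD_POWER_W = {"rtx_5000_ada": 250.0, "a100_sxm_80gb": 400.0}

# Llama 3 8B Q4_K_M token generation speeds from this article's table.
GENERATION_TPS = {"rtx_5000_ada": 89.87, "a100_sxm_80gb": 133.38}


def joules_per_token(power_w: float, tokens_per_second: float) -> float:
    """Energy per token in joules: watts are joules per second."""
    return power_w / tokens_per_second


energy = {
    gpu: joules_per_token(BOARD_POWER_W[gpu], GENERATION_TPS[gpu])
    for gpu in BOARD_POWER_W
}

for gpu, joules in energy.items():
    print(f"{gpu}: up to {joules:.2f} J per generated token")
```

Under this worst-case assumption the two cards come out within about ten percent of each other per token, so the A100's higher draw is largely offset by its higher throughput for this model.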

6. Memory Capacity: The Landscape of LLM Models

The A100 SXM 80GB's 80GB of HBM2e memory is a game-changer for handling massive datasets and running complex AI models, making it a powerhouse for large-scale AI projects. The RTX 5000 Ada 32GB's 32GB of GDDR6 memory is sufficient for smaller LLMs and other AI tasks but might fall short when dealing with larger models.

Conclusion: Choosing the Right Weapon for Your AI Battle

The choice between the NVIDIA RTX 5000 Ada 32GB and the NVIDIA A100 SXM 80GB ultimately depends on your specific needs, budget, and workload demands.

For developers prioritizing cost-effectiveness and working with smaller LLM models, the RTX 5000 Ada 32GB provides a compelling blend of performance and affordability.

If raw performance and memory capacity are your primary concerns, and you're pushing the boundaries with massive LLMs, the A100 SXM 80GB is the weapon of choice, even if it comes with a hefty price tag.

FAQ

1. What is quantization, and why is it important in LLM models?

Quantization is a technique used to compress large language models. It converts the model's floating-point weights (numbers with decimal points) into lower-precision representations, often 8-bit or 4-bit integers, which reduces the model's size and memory footprint. This can significantly speed up token generation and reduce the resources needed to run the model, usually at the cost of a small loss in output quality. Think of it like compressing a large image file to make it lighter and faster to load.
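The idea can be shown in a few lines. The sketch below uses simple absmax rounding to 4-bit signed integers on a made-up list of weights. It is a teaching example only: formats such as Q4_K_M quantize weights in blocks with a more elaborate scheme, and the function names here are hypothetical.

```python
def quantize_absmax(weights, bits=4):
    """Map floats to signed integers using one shared scale factor."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit signed values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


# Made-up example weights, not taken from any real model.
weights = [0.12, -0.53, 0.98, -1.40, 0.07, 0.66]

quantized, scale = quantize_absmax(weights, bits=4)
restored = dequantize(quantized, scale)
max_error = max(abs(w, ) if False else abs(w - r) for w, r in zip(weights, restored))

print("integers:", quantized)
print("scale:", scale)
print("largest rounding error:", max_error)
```

Each weight now needs only 4 bits plus a share of the scale factor instead of 16 or 32 bits, and the rounding error per weight stays within half of the scale.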

2. What are the advantages and disadvantages of using a dedicated GPU for LLM models?

Advantages:

* Faster inference speeds: GPUs provide significantly faster processing power compared to CPUs, especially when dealing with the complex calculations involved in LLM inference.
* Higher memory bandwidth: GPUs often have higher bandwidth, allowing them to access large amounts of data quickly, essential for handling the massive datasets associated with LLMs.
* Specialized hardware: Modern GPUs are specifically designed for matrix operations, which are crucial for efficient LLM operations.

Disadvantages:

* Cost: GPUs can be significantly more expensive than CPUs, impacting the total system cost.
* Power consumption: High-end GPUs can consume a lot of power, influencing energy costs and requiring efficient cooling solutions.
* Setup and maintenance: Setting up and maintaining a GPU-based system might require additional technical expertise compared to CPU-only setups.

Keywords

NVIDIA RTX 5000 Ada 32GB, NVIDIA A100 SXM 80GB, LLM, Large Language Models, AI, Machine Learning, Deep Learning, GPU, Token Generation, Processing Speed, Quantization, Inference, Cost, Power Consumption, Memory Capacity, Llama 3, 8B, 70B