6 Key Factors to Consider When Choosing Between NVIDIA 4090 24GB and NVIDIA RTX A6000 48GB for AI

Introduction

The world of large language models (LLMs) is buzzing with excitement! From drafting poems, scripts, emails, and letters to writing code and translating languages, LLMs are changing the way we interact with technology. One of the key considerations for running these models is choosing the right hardware, and that's where the NVIDIA GeForce RTX 4090 with 24GB of GDDR6X memory and the NVIDIA RTX A6000 with 48GB of GDDR6 memory come into play.

But with so many diverse choices, deciding which GPU is the best fit for your AI endeavors might feel like navigating a maze. In this article, we'll dive deep into a comprehensive comparison between the NVIDIA 4090 24GB and the NVIDIA RTX A6000 48GB, focusing on six key factors that are crucial to making an informed decision.

Comparison of NVIDIA 4090 24GB and NVIDIA RTX A6000 48GB for Llama 3 Performance

Let's get down to the nitty-gritty! We'll be analyzing the performance of these two powerhouses with the Llama 3 family of LLMs, specifically focusing on the 8B and 70B variants. We'll be using the tokens/second metric, which measures how many tokens a model can process per second.

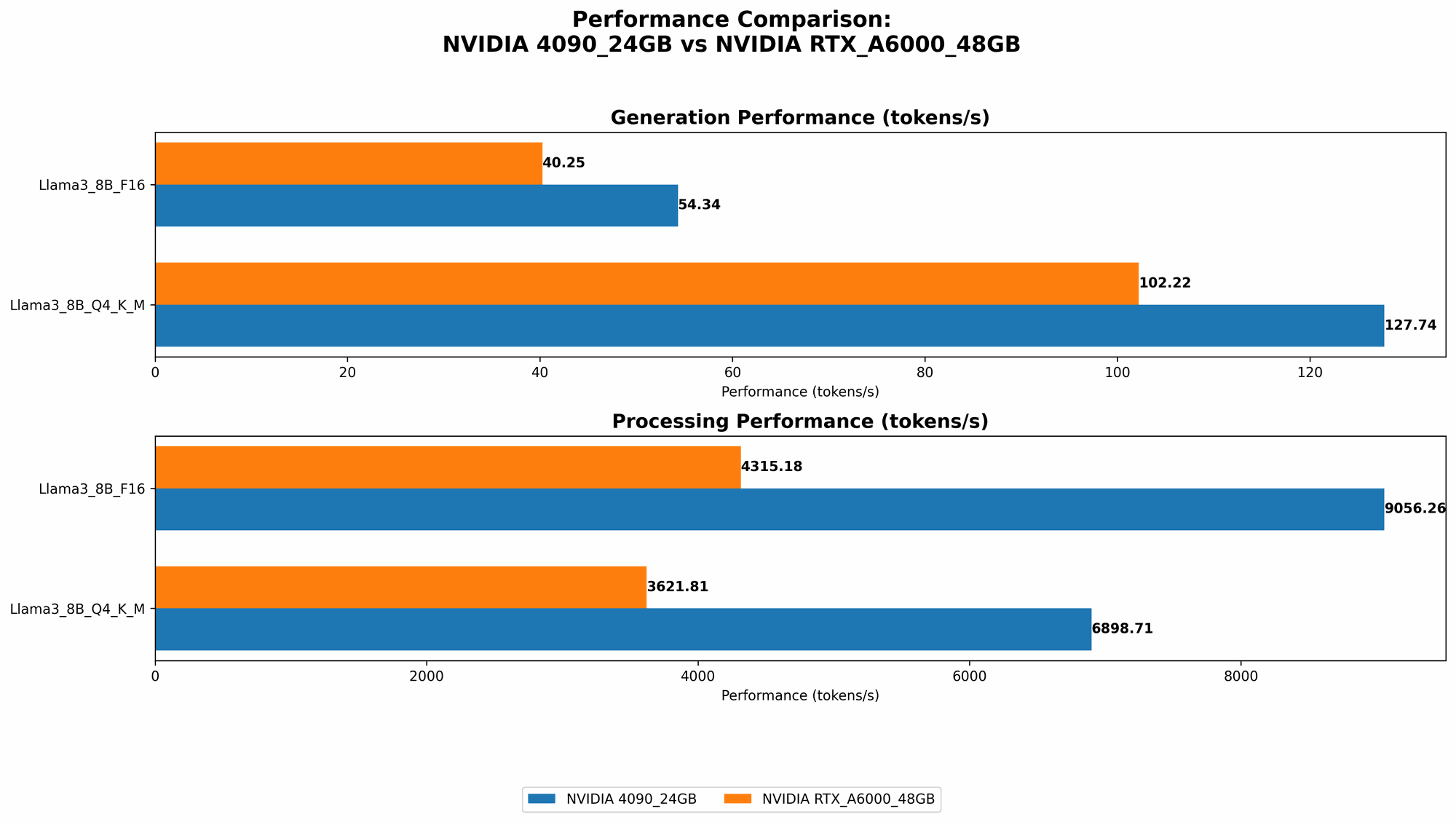

Performance of NVIDIA 4090 24GB vs NVIDIA RTX A6000 48GB for Llama 3 8B Model

In this section, we'll examine the performance of each GPU when running Llama 3 8B in two formats: Q4_K_M and F16.

Q4_K_M is a 4-bit quantization that trades a small amount of accuracy for a much smaller memory footprint and faster generation, while F16 keeps the weights unquantized as half-precision floating-point numbers, preserving accuracy at roughly three times the memory.

Token Generation Speed

| GPU | Llama 3 8B Q4_K_M Generation (tokens/sec) | Llama 3 8B F16 Generation (tokens/sec) |
|---|---|---|
| NVIDIA GeForce RTX 4090 24GB | 127.74 | 54.34 |
| NVIDIA RTX A6000 48GB | 102.22 | 40.25 |

As you can see, the NVIDIA 4090 24GB is the clear winner in token generation speed for both formats: about 25% faster than the RTX A6000 with 4-bit quantization and about 35% faster at F16. That means you get results sooner, especially for tasks that involve generating a lot of text, like writing creative content or translating languages.

Token Processing Speed

| GPU | Llama 3 8B Q4_K_M Processing (tokens/sec) | Llama 3 8B F16 Processing (tokens/sec) |
|---|---|---|
| NVIDIA GeForce RTX 4090 24GB | 6898.71 | 9056.26 |
| NVIDIA RTX A6000 48GB | 3621.81 | 4315.18 |

When it comes to token processing, the NVIDIA 4090 24GB beats the RTX A6000 again, at roughly 1.9x the speed with 4-bit quantization and 2.1x at F16. This difference matters for workloads with long inputs, such as summarizing documents or analyzing data, where the model has to read a lot of text before it can respond.
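To put the gaps in the two tables above into a single number per workload, here is a minimal Python sketch that divides the 4090's throughput by the A6000's. The figures are copied from the tables; the `speedup` helper and the workload labels are just illustrative names.

```python
# Throughput figures copied from the Llama 3 8B tables above (tokens/sec).
# Each entry is (RTX 4090, RTX A6000).
BENCHMARKS = {
    "4-bit generation": (127.74, 102.22),
    "F16 generation": (54.34, 40.25),
    "4-bit processing": (6898.71, 3621.81),
    "F16 processing": (9056.26, 4315.18),
}


def speedup(rtx_4090_tps: float, rtx_a6000_tps: float) -> float:
    """Return how many times faster the 4090 is than the A6000."""
    return rtx_4090_tps / rtx_a6000_tps


for workload, (fast, slow) in BENCHMARKS.items():
    print(f"{workload}: {speedup(fast, slow):.2f}x")
```

The generation gap is modest, while the processing gap is close to 2x, which is worth keeping in mind if your prompts are long.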

Performance of NVIDIA 4090 24GB vs NVIDIA RTX A6000 48GB for Llama 3 70B Model

Moving on to the more demanding Llama 3 70B model, we'll investigate the performance of each GPU using Q4_K_M quantization.

Token Generation Speed

| GPU | Llama 3 70B Q4_K_M Generation (tokens/sec) |
|---|---|
| NVIDIA GeForce RTX 4090 24GB | Data not available |
| NVIDIA RTX A6000 48GB | 14.58 |

Unfortunately, we don't have data for the NVIDIA 4090 24GB running the Llama 3 70B model with Q4_K_M quantization. This is most likely a memory limit: at a little over 4 bits per weight, the weights of a 70B model alone take around 40GB, well beyond the 4090's 24GB. The A6000, with its larger 48GB of GDDR6 memory, can hold the whole model on a single card.
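A quick back-of-the-envelope check makes the memory argument concrete. The sketch below estimates weight size as parameters times bits per weight. The 4.8 bits per weight used for the 4-bit format is an assumed average (real files vary), the parameter counts are the nominal 8B and 70B, and the 2GB `overhead_gb` for the KV cache and runtime buffers is a hypothetical placeholder, not a measured value.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_vram(params_billions: float, bits_per_weight: float,
                 vram_gb: float, overhead_gb: float = 2.0) -> bool:
    """Rough check: weights plus an assumed fixed overhead must fit in VRAM."""
    return weight_memory_gb(params_billions, bits_per_weight) + overhead_gb <= vram_gb


# Assumed values: 4.8 bits/weight for the 4-bit format, 16 for F16.
for name, params, bits in [("8B 4-bit", 8, 4.8), ("8B F16", 8, 16), ("70B 4-bit", 70, 4.8)]:
    size = weight_memory_gb(params, bits)
    print(f"{name}: ~{size:.1f} GB | fits 24GB: {fits_in_vram(params, bits, 24)}"
          f" | fits 48GB: {fits_in_vram(params, bits, 48)}")
```

Under these assumptions both 8B variants fit on either card, while the 70B model at 4 bits only fits on the 48GB card.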

Token Processing Speed

| GPU | Llama 3 70B Q4_K_M Processing (tokens/sec) |
|---|---|
| NVIDIA GeForce RTX 4090 24GB | Data not available |
| NVIDIA RTX A6000 48GB | 466.82 |

As with generation speed, we lack data for the NVIDIA 4090 24GB when processing the Llama 3 70B Q4_K_M model. The RTX A6000 shines in this scenario, handling the larger model and processing input at 466.82 tokens per second.

Key Factors to Consider When Choosing Between NVIDIA 4090 24GB and NVIDIA RTX A6000 48GB

So, which GPU wins the ultimate showdown? It's not a straightforward answer. Here are six key factors to consider when making a decision:

1. Memory Capacity

The NVIDIA RTX A6000 takes the lead in memory capacity with a whopping 48GB of GDDR6 memory, making it the better fit for larger LLMs, like the Llama 3 70B model. The NVIDIA 4090 offers a respectable 24GB of GDDR6X memory, sufficient for smaller models, but it will struggle with models whose weights don't fit in that space.

Think of memory capacity like the size of your kitchen counter. The A6000 has a huge counter, able to accommodate a massive feast, while the 4090 has a smaller space, perfect for a smaller gathering.

2. Memory Bandwidth

Despite its larger capacity, the RTX A6000 does not have the faster memory. Its GDDR6 delivers about 768 GB/s, while the 4090's GDDR6X reaches about 1,008 GB/s. Bandwidth matters most during token generation, where the GPU has to read the model weights for every new token, and that helps explain the 4090's lead in the generation benchmarks. The A6000's bandwidth is still sufficient for most use cases.

Imagine memory bandwidth as the speed of your internet connection. The 4090 has the super-fast fiber optic connection, while the A6000 has a good broadband connection: still great for everyday tasks, but you might notice a difference when downloading massive files.
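One rough way to see why bandwidth matters for generation: each new token requires reading the full set of weights from GPU memory, so bandwidth divided by weight size gives a ceiling on tokens per second. The sketch below assumes the spec-sheet bandwidths (about 1,008 GB/s for the RTX 4090 and 768 GB/s for the RTX A6000) and about 16GB of weights for Llama 3 8B in F16 (8 billion parameters at 2 bytes each). It ignores the KV cache and compute time, so treat it as an estimate, not a prediction.

```python
def max_generation_tps(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on tokens/sec if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


# Assumed inputs: spec-sheet bandwidth (GB/s) and measured F16 generation
# speed from the Llama 3 8B benchmark table (tokens/sec).
CARDS = {
    "RTX 4090": {"bandwidth": 1008, "measured": 54.34},
    "RTX A6000": {"bandwidth": 768, "measured": 40.25},
}
WEIGHTS_GB = 16  # nominal 8B parameters x 2 bytes (F16)

for name, card in CARDS.items():
    ceiling = max_generation_tps(card["bandwidth"], WEIGHTS_GB)
    share = card["measured"] / ceiling
    print(f"{name}: ceiling {ceiling:.1f} tok/s, measured {card['measured']} ({share:.0%})")
```

Under these assumptions the measured F16 generation speeds land at roughly 85% of each card's ceiling, which is consistent with generation being limited mainly by memory bandwidth.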

3. GPU Compute Performance

The NVIDIA 4090 24GB packs a punch with 16,384 CUDA cores on the newer Ada Lovelace architecture, providing superior compute performance for tasks that involve a lot of parallel processing, like training models or processing long prompts. The RTX A6000, with 10,752 CUDA cores on the older Ampere architecture, lags behind in raw compute, which matches the roughly 2x gap in token processing speed in the benchmarks.

Think of compute performance as the horsepower of your car. The 4090 has a powerful engine that can accelerate quickly and handle heavy loads, while the A6000 has a strong engine, but not as powerful, still capable of getting you to your destination but maybe not as quickly.

4. Price

The NVIDIA RTX A6000 comes with a hefty price tag, reflecting its 48GB memory capacity and its positioning as a professional workstation card. The NVIDIA 4090 24GB, while still a premium GPU, is more budget-friendly compared to the A6000.

Your budget is like the amount of money you have for a car. The A6000 is akin to a luxury sports car, while the 4090 is a well-equipped performance car.

5. Power Consumption

The NVIDIA RTX A6000 is the lower-power card here: it is rated at 300W of board power, against 450W for the NVIDIA 4090 24GB. The 4090 draws more, but it also does more work per second, so which card is more efficient depends on the workload and on how hard you actually push it.

Imagine power consumption as the fuel efficiency of your car. The 4090 is the thirstier engine, burning more fuel to go faster, while the A6000 sips less fuel and covers the same distance more slowly.
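To compare efficiency instead of raw draw, you can divide throughput by power. The sketch below uses the Llama 3 8B 4-bit benchmark figures from this article and assumes each card runs at its rated board power (450W for the RTX 4090, 300W for the RTX A6000). Real draw during inference is usually lower and was not measured here, so the results are only indicative.

```python
# Assumed rated board power in watts (spec-sheet values, not measured draw).
RATED_POWER_W = {"RTX 4090": 450, "RTX A6000": 300}

# Llama 3 8B 4-bit throughput from the benchmark tables (tokens/sec).
GENERATION_TPS = {"RTX 4090": 127.74, "RTX A6000": 102.22}
PROCESSING_TPS = {"RTX 4090": 6898.71, "RTX A6000": 3621.81}


def tokens_per_joule(tokens_per_sec: float, watts: float) -> float:
    """Tokens per second divided by watts (joules per second)."""
    return tokens_per_sec / watts


for card, watts in RATED_POWER_W.items():
    gen = tokens_per_joule(GENERATION_TPS[card], watts)
    proc = tokens_per_joule(PROCESSING_TPS[card], watts)
    print(f"{card}: {gen:.3f} generated tokens/J, {proc:.2f} processed tokens/J")
```

Under these assumptions the A6000 comes out ahead per watt for generation, while the 4090 comes out ahead for prompt processing.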

6. Availability

The NVIDIA RTX A6000, being geared towards professional workstations, might be easier to find compared to the NVIDIA 4090 24GB, which has been in high demand for gaming and other high-performance tasks.

Think of availability like the number of car models available at a dealership. The A6000 might be readily available on the lot, while the 4090 might be harder to find and you might have to wait for the next shipment.

Practical Recommendations for Use Cases

Now that we've explored the key factors, let's look at practical recommendations based on your needs:

- If you're working with large LLMs, like Llama 3 70B, and memory is a key concern, the NVIDIA RTX A6000 is your best bet. Its 48GB of GDDR6 memory can handle the memory-intensive demands of these massive models.

- If you're running smaller LLMs, like Llama 3 8B, and prioritize raw compute performance, the NVIDIA 4090 24GB is an excellent choice. It offers impressive speed in both token generation and processing for these models while remaining budget-friendly.

- If you need a single GPU for both smaller and larger models, the RTX A6000 is the more flexible option. It is slower than the 4090 on the 8B model and costs more, but it is the only one of the two with benchmark results for the 70B model. If budget is your main constraint and 24GB is enough, the 4090 is the better value.

FAQs:

What are quantized models, and why are they important?

Quantized models are like a simplified version of your original LLM. Think of it as using less precise numbers to represent the weights (and sometimes the activations) of the model. This results in smaller file sizes, faster generation, and less memory consumption. The catch? It can slightly reduce the accuracy of the model's output, but it's a worthwhile compromise for many use cases.
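To make "less precise numbers" concrete, here is a deliberately simplified Python sketch of 4-bit quantization: a block of weights shares one scale, and each weight is stored as a small integer. This is an illustration only, not the scheme real 4-bit model formats use (those are more elaborate), and `quantize_block` and `dequantize_block` are hypothetical helper names.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integers that share one scale for the block."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits, so values fall in [-7, 7]
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the stored integers and scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.50, 0.33, 0.00, 0.49]
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)

print("stored integers:", quantized)
print("largest error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each restored weight is off by at most half the scale, which is the small accuracy cost you pay for storing a few bits per weight in place of 16.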

What’s the difference between token generation and token processing?

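Token generation is akin to writing a story. The model produces new tokens one at a time, and those tokens form the output text. Token processing (often called prompt processing) is more like reading a book. You feed your input tokens to the model, and it reads them all in parallel before it starts to write. That parallelism is why processing speeds in the benchmarks are so much higher than generation speeds.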
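The two speeds combine into a simple estimate of how long a response takes: time to read the prompt plus time to write the reply. The sketch below uses the Llama 3 8B 4-bit figures from the benchmark tables. The 2,000-token prompt and 500-token reply are hypothetical example sizes, and the estimate ignores model loading and other overhead.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to process the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 8B 4-bit figures from the tables: (processing, generation) tokens/sec.
CARDS = {
    "RTX 4090": (6898.71, 127.74),
    "RTX A6000": (3621.81, 102.22),
}

# Hypothetical request: a 2,000-token prompt and a 500-token reply.
for card, (processing, generation) in CARDS.items():
    seconds = response_time_s(2000, 500, processing, generation)
    print(f"{card}: about {seconds:.1f} s")
```

For a request shaped like this, generation dominates the total, so the generation figures matter more than the much larger processing figures.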

Can I use these GPUs for other tasks besides running LLMs?

Absolutely! Both GPUs are capable of handling a wide range of tasks, including:

- Machine learning and deep learning: Training and inference for various ML models

- Scientific computing: Simulations, data analysis, and more

- Game development: Creating visually stunning and demanding games

- Video editing and rendering: Producing high-quality videos and graphics
