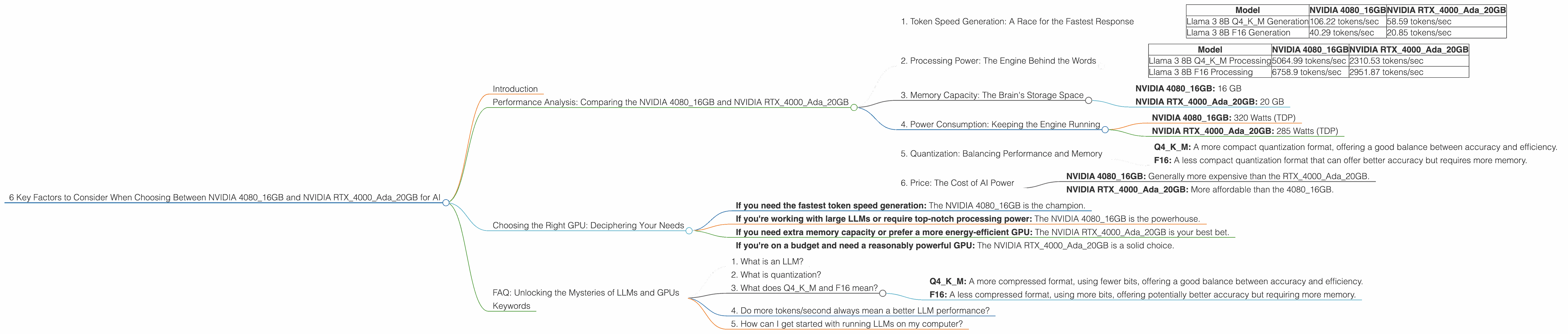

6 Key Factors to Consider When Choosing Between NVIDIA RTX 4080 16GB and NVIDIA RTX 4000 Ada 20GB for AI

Introduction

The world of AI is moving at lightning speed, and large language models (LLMs) are at the forefront of this revolution. But running these powerful models locally requires serious hardware muscle. Two popular choices for AI enthusiasts and developers looking to harness the raw power of LLMs are the NVIDIA GeForce RTX 4080 16GB and the NVIDIA RTX 4000 Ada 20GB.

This article explores LLM inference by examining how the NVIDIA RTX 4080 16GB and the NVIDIA RTX 4000 Ada 20GB perform when running Llama 3 models. We'll walk through six key factors that can help you decide which card is the right fit for your AI projects.

Note: We focus exclusively on these two GPUs running Llama 3 models, and all benchmark figures below are for Llama 3 8B. Other LLMs and GPUs exist, but we'll stick to the comparison outlined in the title.

Performance Analysis: Comparing the NVIDIA RTX 4080 16GB and NVIDIA RTX 4000 Ada 20GB

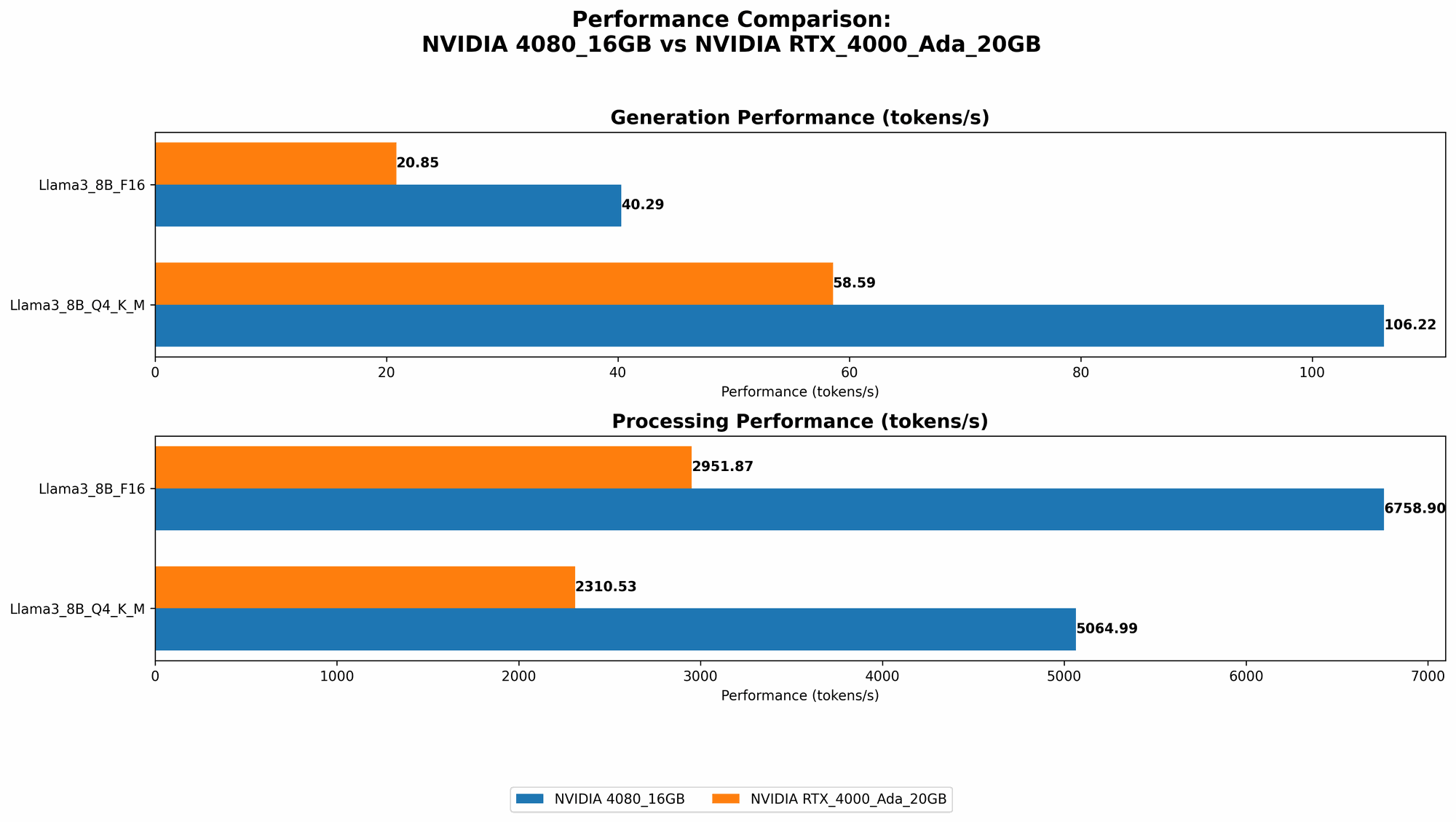

1. Token Generation Speed: A Race for the Fastest Response

Token generation speed, the number of new tokens your LLM produces per second, is crucial for real-time applications such as chatbots, interactive tools, and live content generation.

| Model and format | NVIDIA RTX 4080 16GB | NVIDIA RTX 4000 Ada 20GB |
|---|---|---|
| Llama 3 8B Q4_K_M, generation | 106.22 tokens/sec | 58.59 tokens/sec |
| Llama 3 8B F16, generation | 40.29 tokens/sec | 20.85 tokens/sec |

The NVIDIA RTX 4080 16GB leads clearly in token generation: it runs about 1.8 times as fast as the RTX 4000 Ada 20GB with Q4_K_M weights and about 1.9 times as fast with F16 weights.

Think of it this way: the RTX 4080 is a sprinter, getting each word of a response onto the screen quickly. The RTX 4000 Ada is more of a jogger: it gets there, but at a little over half the pace.

Practical Recommendation: If your AI application requires fast response times, the RTX 4080 16GB is the clear choice.
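Why is the gap close to two times? During generation the GPU has to read essentially all of the model's weights from VRAM for every new token, so generation speed is largely capped by memory bandwidth. The sketch below estimates that ceiling as bandwidth divided by weight size. Its inputs are assumptions that do not come from the tables: NVIDIA's published memory bandwidth (716.8 GB/s for the RTX 4080, 360 GB/s for the RTX 4000 Ada) and approximate Llama 3 8B weight sizes (about 4.9 GB at Q4_K_M, about 16.1 GB at F16). It ignores KV-cache reads and kernel overheads, so real speeds should land below the ceiling.

```python
# Rough upper bound on token-generation speed for a memory-bandwidth-bound decoder.
# Assumed figures (not from the benchmark tables): published memory bandwidth
# in GB/s and approximate Llama 3 8B weight sizes in GB.
BANDWIDTH_GBPS = {
    "RTX 4080 16GB": 716.8,
    "RTX 4000 Ada 20GB": 360.0,
}
WEIGHTS_GB = {
    "Q4_K_M": 4.9,  # approximate GGUF file size (assumption)
    "F16": 16.1,    # ~8.03e9 parameters x 2 bytes (assumption)
}
# Measured generation speeds from the table above, in tokens/sec.
MEASURED_TPS = {
    ("RTX 4080 16GB", "Q4_K_M"): 106.22,
    ("RTX 4080 16GB", "F16"): 40.29,
    ("RTX 4000 Ada 20GB", "Q4_K_M"): 58.59,
    ("RTX 4000 Ada 20GB", "F16"): 20.85,
}


def max_generation_tps(bandwidth_gbps: float, weights_gb: float) -> float:
    """Each generated token streams all weights once, so tokens/sec <= bandwidth / size."""
    return bandwidth_gbps / weights_gb


if __name__ == "__main__":
    for (gpu, fmt), measured in MEASURED_TPS.items():
        ceiling = max_generation_tps(BANDWIDTH_GBPS[gpu], WEIGHTS_GB[fmt])
        print(f"{gpu:18} {fmt:7} ceiling ~{ceiling:6.1f} tok/s, "
              f"measured {measured:6.2f} ({measured / ceiling:.0%} of ceiling)")
```

On these assumptions the measured speeds reach roughly 70 to 95 percent of their ceilings, and the roughly twofold bandwidth difference lines up with the gap in the table.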

2. Prompt Processing Speed: The Engine Behind the Words

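Prompt processing speed, also measured in tokens per second, is how quickly the GPU reads in your input prompt before it starts generating a reply. Because the whole prompt can be processed in parallel, this stage leans on the GPU's raw compute rather than on its memory bandwidth.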

| Model and format | NVIDIA RTX 4080 16GB | NVIDIA RTX 4000 Ada 20GB |
|---|---|---|
| Llama 3 8B Q4_K_M, processing | 5064.99 tokens/sec | 2310.53 tokens/sec |
| Llama 3 8B F16, processing | 6758.9 tokens/sec | 2951.87 tokens/sec |

Again, the NVIDIA RTX 4080 16GB comes out ahead, processing prompts about 2.2 times as fast as the RTX 4000 Ada 20GB with Q4_K_M and about 2.3 times as fast with F16.

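Practical Recommendation: For applications that feed the model long inputs, such as summarizing documents, answering questions over large files, or code completion with a lot of surrounding context, the RTX 4080 16GB's prompt-processing advantage shortens the wait before the first token appears.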
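To see how the two stages combine, the sketch below estimates the time for a single request as prompt tokens divided by prompt-processing speed, plus output tokens divided by generation speed, using the Q4_K_M figures from the two tables. The 2,000-token prompt and 300-token reply are hypothetical, and this simple model ignores batching, sampling overhead, and the fact that generation slows somewhat as the context grows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpeeds:
    """Llama 3 8B Q4_K_M speeds from the tables above, in tokens/sec."""
    name: str
    prompt_tps: float      # prompt processing (reading the input)
    generation_tps: float  # token generation (writing the reply)


def request_latency_s(gpu: GpuSpeeds, prompt_tokens: int, output_tokens: int) -> float:
    """Two-stage model: process the whole prompt, then emit tokens one at a time."""
    return prompt_tokens / gpu.prompt_tps + output_tokens / gpu.generation_tps


RTX_4080 = GpuSpeeds("RTX 4080 16GB", prompt_tps=5064.99, generation_tps=106.22)
RTX_4000_ADA = GpuSpeeds("RTX 4000 Ada 20GB", prompt_tps=2310.53, generation_tps=58.59)

if __name__ == "__main__":
    # Hypothetical request: a 2,000-token prompt and a 300-token answer.
    for gpu in (RTX_4080, RTX_4000_ADA):
        print(f"{gpu.name}: {request_latency_s(gpu, 2000, 300):.1f} s")
```

With these inputs the estimate comes to about 3.2 s on the RTX 4080 and about 6.0 s on the RTX 4000 Ada. Even with a 2,000-token prompt, most of the time goes to generation, which is why the generation gap matters most for chat-style use.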

3. Memory Capacity: The Brain's Storage Space

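Memory capacity determines how much data your GPU can hold at once. For LLM inference, VRAM has to hold the model weights plus the KV cache (the attention state for the current context, which grows with context length). More memory lets you run larger models, less aggressively quantized formats, or longer contexts without spilling into much slower system RAM.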

- NVIDIA RTX 4080 16GB: 16 GB

- NVIDIA RTX 4000 Ada 20GB: 20 GB

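Practical Recommendation: The RTX 4000 Ada 20GB wins on memory capacity. Its extra 4 GB gives headroom for longer contexts and higher-precision formats, such as Llama 3 8B at F16, whose weights alone take about 16 GB. It is not enough for Llama 3 70B, however: even at Q4_K_M that model's weights take more than 40 GB, so on either card most of it would have to run from system RAM, at a large cost in speed.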
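A back-of-the-envelope way to check whether a model fits is to add the weight memory (parameters x bits per weight / 8) to the KV cache (2 x layers x KV heads x head dimension x bytes per value x context length). The sketch below uses the published Llama 3 architecture (8B: 32 layers; 70B: 80 layers; both with 8 KV heads of dimension 128) and assumes about 4.85 bits per weight for Q4_K_M, an F16 KV cache, and an 8,192-token context. It ignores activation buffers and driver overhead, so treat the results as lower bounds.

```python
GIB = 1024 ** 3


def estimate_vram_bytes(
    n_params: float,
    bits_per_weight: float,
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    context_len: int,
    kv_bytes_per_value: int = 2,  # F16 KV cache (assumption)
) -> float:
    """Lower-bound VRAM estimate: model weights plus the K and V caches."""
    weights = n_params * bits_per_weight / 8
    # K and V (factor 2) for every layer, KV head, head dimension and context token.
    kv_cache = 2 * n_layers * n_kv_heads * head_dim * kv_bytes_per_value * context_len
    return weights + kv_cache


Q4_K_M_BITS = 4.85  # approximate average bits per weight (assumption)

llama3_8b = estimate_vram_bytes(8.03e9, Q4_K_M_BITS, 32, 8, 128, context_len=8192)
llama3_70b = estimate_vram_bytes(70.6e9, Q4_K_M_BITS, 80, 8, 128, context_len=8192)

if __name__ == "__main__":
    for name, need in (("Llama 3 8B Q4_K_M", llama3_8b), ("Llama 3 70B Q4_K_M", llama3_70b)):
        print(f"{name}: ~{need / GIB:.1f} GiB, "
              f"fits 16 GiB: {need <= 16 * GIB}, fits 20 GiB: {need <= 20 * GIB}")
```

Under these assumptions Llama 3 8B at Q4_K_M needs roughly 5.5 GiB, comfortably within either card, while Llama 3 70B needs about 42 GiB.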

4. Power Consumption: Keeping the Engine Running

Power consumption is a critical factor, particularly in the context of energy costs and thermal considerations.

- NVIDIA RTX 4080 16GB: 320 W (TDP)

- NVIDIA RTX 4000 Ada 20GB: 130 W (maximum board power)

Practical Recommendation: The RTX 4000 Ada 20GB draws far less power, and because its speed drops by less than its power rating, it also uses less energy per generated token. This translates to potentially lower electricity bills and a cooler-running system.
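Lower power draw only means better efficiency if the card is not proportionally slower, so a fairer comparison is energy per generated token: power in watts (joules per second) divided by tokens per second. The sketch below assumes NVIDIA's rated maximum board power, 320 W for the RTX 4080 and 130 W for the RTX 4000 Ada, as a stand-in for actual draw, which during inference is usually lower and is better measured directly (for example with nvidia-smi).

```python
def joules_per_token(power_watts: float, tokens_per_sec: float) -> float:
    """Energy per generated token: watts are joules per second, divided by tokens per second."""
    return power_watts / tokens_per_sec


# Rated board power (assumption: used as a proxy for draw during inference),
# paired with the Llama 3 8B Q4_K_M generation speeds from section 1.
CARDS = {
    "RTX 4080 16GB": (320.0, 106.22),
    "RTX 4000 Ada 20GB": (130.0, 58.59),
}

if __name__ == "__main__":
    for name, (watts, tps) in CARDS.items():
        print(f"{name}: {joules_per_token(watts, tps):.2f} J/token")
```

Under those assumptions the RTX 4080 spends about 3.01 J per token and the RTX 4000 Ada about 2.22 J per token.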

5. Quantization: Balancing Performance and Memory

Quantization reduces the size of an LLM by storing its weights with fewer bits. This significantly decreases memory usage and usually speeds up token generation (prompt processing does not necessarily benefit, as the F16 rows in the processing table show), at the cost of a slight loss in accuracy.

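- Q4_K_M: A 4-bit "k-quant" format from llama.cpp that averages roughly 4.5 to 5 bits per weight, offering a good balance between accuracy and efficiency.

- F16: 16-bit floating point (half precision). Strictly speaking this is not quantization but the unquantized baseline: it offers the best accuracy but needs 2 bytes per weight.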
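To make the idea concrete, here is a minimal sketch of symmetric 4-bit block quantization: each block of weights shares one floating-point scale, and each weight is stored as an integer in [-8, 7]. This is a simplified illustration, not llama.cpp's actual Q4_K_M scheme, which groups blocks into super-blocks with their own quantized scales and minimums.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization of one block: a shared float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    qmin = -(qmax + 1)          # -8 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest multiple of the scale, clamped to the integer range.
    codes = [max(qmin, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Reconstruct approximate weights from the scale and integer codes."""
    return [scale * q for q in codes]


if __name__ == "__main__":
    block = [0.12, -0.53, 0.07, 0.91, -0.88, 0.33, -0.02, 0.45]
    scale, codes = quantize_block(block)
    restored = dequantize_block(scale, codes)
    max_err = max(abs(a - b) for a, b in zip(block, restored))
    print("codes:", codes)
    print(f"scale={scale:.4f}, max abs error={max_err:.4f}")
```

In a real format each block holds more weights (32 in llama.cpp's basic 4-bit formats), and storing one 16-bit scale per 32 weights adds half a bit per weight, which is one reason 4-bit formats average somewhat more than 4 bits per weight.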

Practical Recommendation: The choice between Q4_K_M and F16 depends on your specific needs. F16 gives the highest accuracy, but it needs more than three times the memory of Q4_K_M and, in the generation benchmarks above, runs at well under half the speed on both cards. If you prioritize speed and memory efficiency, Q4_K_M is a good choice.

6. Price: The Cost of AI Power

The cost of a GPU is a significant factor in the decision-making process.

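- NVIDIA RTX 4080 16GB: consumer GeForce card; street prices vary by region, retailer, and time.

- NVIDIA RTX 4000 Ada 20GB: workstation-class card; it is not always the cheaper of the two, so compare current prices.

Practical Recommendation: Where the RTX 4000 Ada 20GB is the cheaper option, it is a viable choice for developers with limited resources. Because it is also considerably slower, weigh performance per dollar rather than sticker price alone.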
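One way to compare value without relying on any particular price is to normalize speed by cost. The sketch below computes generation tokens per second per US$1,000 and the break-even price at which the slower card matches the faster one per dollar, using the Q4_K_M generation speeds from section 1. The US$1,000 reference price is a hypothetical placeholder, not a quote; substitute what you would actually pay.

```python
def tokens_per_sec_per_1000_usd(tokens_per_sec: float, price_usd: float) -> float:
    """Generation throughput bought per US$1,000 spent on the card."""
    return tokens_per_sec / price_usd * 1000


def break_even_price(target_tps: float, reference_tps: float, reference_price_usd: float) -> float:
    """Price at which the target card matches the reference card's tokens/sec per dollar."""
    return reference_price_usd * target_tps / reference_tps


RTX_4080_TPS = 106.22     # Llama 3 8B Q4_K_M generation, section 1
RTX_4000_ADA_TPS = 58.59

if __name__ == "__main__":
    # Hypothetical price for illustration only; replace with a current quote.
    rtx_4080_price = 1000.0
    limit = break_even_price(RTX_4000_ADA_TPS, RTX_4080_TPS, rtx_4080_price)
    print(f"RTX 4000 Ada must cost under ${limit:,.0f} to beat the RTX 4080 "
          f"on generation tokens/sec per dollar")
```

Put differently, on generation speed per dollar the RTX 4000 Ada comes out ahead only when it costs less than about 55% of the RTX 4080's price; its extra memory and lower power draw are separate reasons you might still choose it.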

Choosing the Right GPU: Deciphering Your Needs

So, which GPU is the "winner"? The answer depends on your specific needs.

- If you need the fastest token generation: The NVIDIA RTX 4080 16GB is the champion.

- If you feed the model long prompts or need the highest prompt-processing throughput: The NVIDIA RTX 4080 16GB is the powerhouse.

- If you need extra memory capacity or prefer a more energy-efficient GPU: The NVIDIA RTX 4000 Ada 20GB is your best bet.

- If you're on a budget and can find it for less: The NVIDIA RTX 4000 Ada 20GB is a solid, reasonably powerful choice.

FAQ: Unlocking the Mysteries of LLMs and GPUs

1. What is an LLM?

An LLM (Large Language Model) is an advanced type of artificial intelligence capable of understanding and generating human-like text. Think of it as a massive library of language patterns and knowledge, enabling it to perform tasks like writing stories, translating languages, and answering your questions in a comprehensive and informative way.

2. What is quantization?

Quantization is a way to make LLMs smaller and more efficient by reducing the number of bits used to represent the model's weights. You can imagine this like compressing a large video file to reduce its size and make it faster to download or stream.

3. What do Q4_K_M and F16 mean?

These labels describe how the model's weights are stored, representing different levels of compression:

- Q4_K_M: A more compressed, 4-bit quantized format that uses fewer bits per weight and offers a good balance between accuracy and efficiency.

- F16: 16-bit floating point, the less compressed (effectively unquantized) format; it uses more bits, offering potentially better accuracy but requiring more memory.

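4. Do more tokens per second always mean better LLM performance?

Not necessarily. Tokens per second measures speed, not output quality (accuracy, creativity, and so on). Quality is determined by the model and its quantization format, so the same model file produces essentially the same quality of output on either GPU; the faster card simply delivers it sooner.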

5. How can I get started with running LLMs on my computer?

You can typically use tools like llama.cpp, which lets you run openly available LLMs such as Llama 3 on your own machine, including on NVIDIA GPUs through its CUDA backend.
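If you want to measure your own card, llama.cpp ships a benchmarking tool, llama-bench, that reports prompt-processing and token-generation speeds in tokens per second. The Python sketch below only assembles and runs the command; it assumes llama-bench is on your PATH and that you have a Llama 3 8B GGUF file, and the file name used here is a hypothetical placeholder.

```python
import shutil
import subprocess


def build_llama_bench_cmd(model_path: str, n_prompt: int = 512, n_gen: int = 128,
                          n_gpu_layers: int = 99) -> list[str]:
    """Assemble a llama-bench invocation.

    -p sets the prompt length for the prompt-processing test, -n the number of
    tokens for the generation test, and -ngl how many layers to offload to the
    GPU (99 is a common way to request all of them).
    """
    return [
        "llama-bench",
        "-m", model_path,
        "-p", str(n_prompt),
        "-n", str(n_gen),
        "-ngl", str(n_gpu_layers),
    ]


if __name__ == "__main__":
    # Hypothetical file name; point this at the GGUF file you downloaded.
    cmd = build_llama_bench_cmd("Meta-Llama-3-8B-Instruct.Q4_K_M.gguf")
    if shutil.which(cmd[0]) is None:
        print("llama-bench not found on PATH; build llama.cpp with CUDA support first.")
    else:
        subprocess.run(cmd, check=True)
```

Running it prints a table with a prompt-processing row and a token-generation row, the same two kinds of measurement as the tables earlier in this article.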
