6 Key Factors to Consider When Choosing Between NVIDIA 3090 24GB x2 and NVIDIA RTX 4000 Ada 20GB x4 for AI

Introduction

Large Language Models (LLMs) are advancing rapidly, and so is the demand for hardware that can handle their computational load. With so many options available, choosing the right hardware can be daunting. In this article, we compare two multi-GPU setups head to head, the NVIDIA 3090 24GB x2 and the NVIDIA RTX 4000 Ada 20GB x4, focusing on their performance running Llama 3 models.

Think of LLMs as the digital brains of the future. They can generate text, translate languages, write creative content, and answer questions informatively. But these digital brains need powerful hardware to run smoothly, and that's where GPUs come in.

We'll explore six key factors to help you decide which setup is best suited for your specific needs, so you can choose the hardware that gives you the best performance and value for your AI projects.

Performance Analysis: NVIDIA 3090 24GB x2 vs NVIDIA RTX 4000 Ada 20GB x4

To compare these setups properly, we need to look at several metrics. We focus on Llama 3 because it is a popular, well-tested LLM family available in a range of sizes (8B and 70B here). We also consider two weight formats: Q4_K_M, a 4-bit quantization that shrinks the model and usually speeds up inference, and F16, the unquantized 16-bit floating-point format.

Let's break down the comparison:

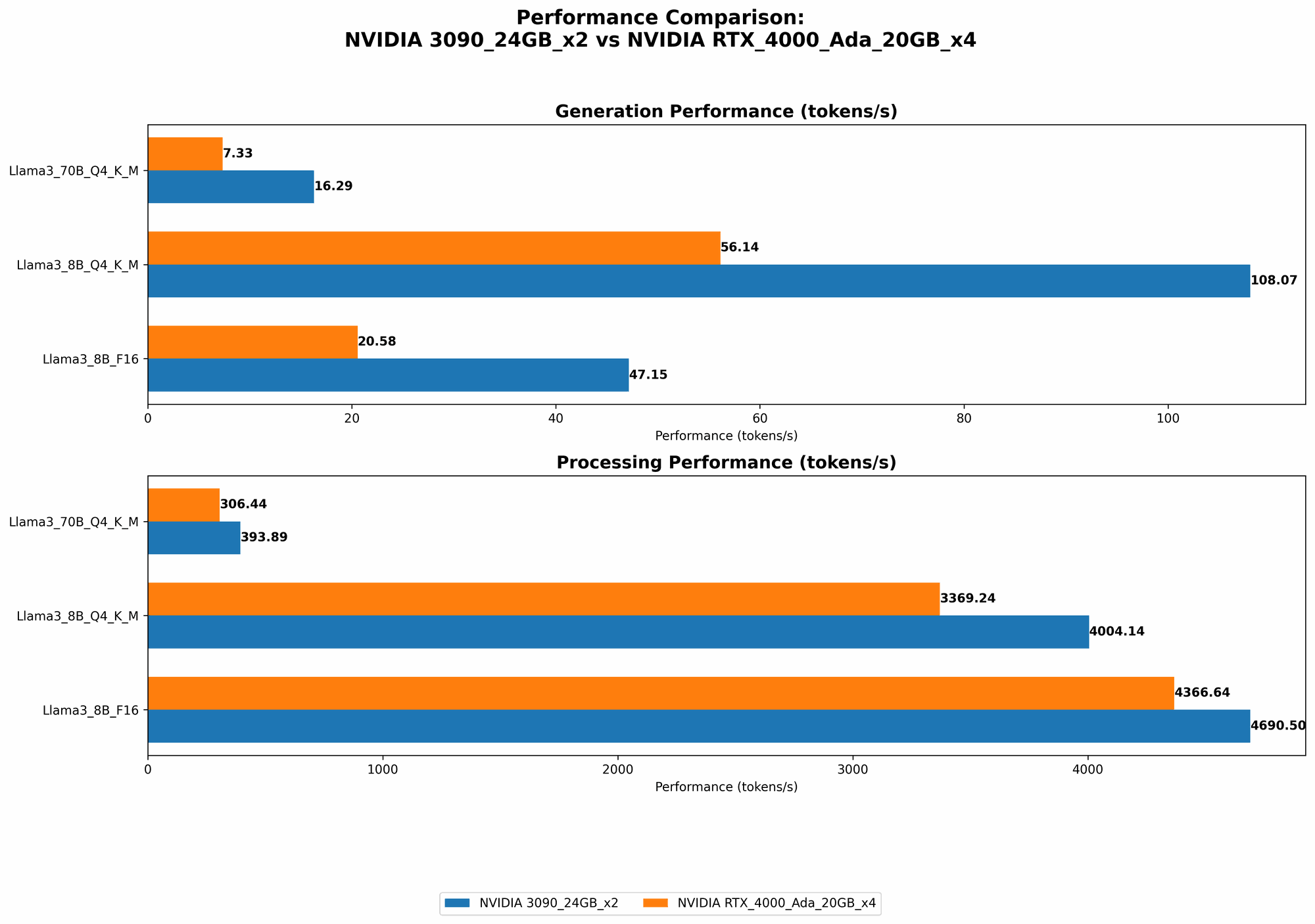

Token Generation Speed with Llama 3 Models

Token generation speed measures how many tokens (words or sub-words) a setup can produce per second. This metric is crucial for real-time applications like chatbots and interactive dialogue systems.

| Device | Llama 3 Model | Tokens/second (Q4_K_M) | Tokens/second (F16) |
|---|---|---|---|
| NVIDIA 3090 24GB x2 | Llama 3 8B | 108.07 | 47.15 |
| NVIDIA 3090 24GB x2 | Llama 3 70B | 16.29 | N/A |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B | 56.14 | 20.58 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 70B | 7.33 | N/A |

Analysis:

- The NVIDIA 3090 24GB x2 leads for both the Llama 3 8B and 70B models in Q4_K_M quantization. On Llama 3 8B it generates 108.07 tokens/second versus 56.14, roughly 1.9x the speed of the RTX 4000 Ada 20GB x4.

- For the Llama 3 70B model, the 3090 24GB x2 is also well ahead, at 16.29 tokens/second versus 7.33, or about 2.2x.

- The same holds for Llama 3 8B in F16: the 3090 24GB x2 reaches 47.15 tokens/second versus 20.58, about 2.3x. The newer Ada architecture of the RTX 4000 does not translate into faster generation here. No F16 result is listed for the 70B model on either setup.
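The ratios in this analysis can be reproduced with a few lines of Python. This is only a sketch: the dictionary layout and the `speedup` helper are illustrative names, and the numbers are copied from the generation table above.

```python
# Generation throughput in tokens/second, copied from the table above.
GENERATION_TPS = {
    "3090 24GB x2": {"8B Q4_K_M": 108.07, "8B F16": 47.15, "70B Q4_K_M": 16.29},
    "RTX 4000 Ada 20GB x4": {"8B Q4_K_M": 56.14, "8B F16": 20.58, "70B Q4_K_M": 7.33},
}


def speedup(results, fast, slow):
    """Return {workload: fast_tps / slow_tps} for workloads both setups ran."""
    return {
        workload: results[fast][workload] / results[slow][workload]
        for workload in results[fast]
        if workload in results[slow]
    }


ratios = speedup(GENERATION_TPS, "3090 24GB x2", "RTX 4000 Ada 20GB x4")
for workload, ratio in ratios.items():
    print(f"{workload}: {ratio:.2f}x")
```

All three ratios come out at roughly 2x, so the gap between the setups is fairly uniform across model size and precision.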

Token Processing Speed with Llama 3 Models

Token processing speed measures how many input tokens a setup can process per second, for example when reading a long prompt before it starts generating. This metric matters for batch workloads such as text completion or translation, where large amounts of input need to be processed quickly.

| Device | Llama 3 Model | Tokens/second (Q4_K_M) | Tokens/second (F16) |
|---|---|---|---|
| NVIDIA 3090 24GB x2 | Llama 3 8B | 4004.14 | 4690.5 |
| NVIDIA 3090 24GB x2 | Llama 3 70B | 393.89 | N/A |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B | 3369.24 | 4366.64 |
| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 70B | 306.44 | N/A |

Analysis:

- As with token generation, the 3090 24GB x2 is ahead for both Llama 3 models in Q4_K_M quantization: about 19% faster on Llama 3 8B (4004.14 versus 3369.24 tokens/second) and about 29% faster on Llama 3 70B (393.89 versus 306.44).

- In F16 on Llama 3 8B the gap narrows to about 7% (4690.5 versus 4366.64 tokens/second). The difference in processing speed is therefore much smaller than the roughly 2x difference in generation speed, but the 3090 24GB x2 still leads in every configuration tested.
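To see how processing and generation speed combine for a single request, the sketch below estimates end-to-end latency. It assumes a single request with no batching and that both throughput figures stay constant regardless of prompt or reply length, which is a simplification. The function name and the 2000-token prompt and 500-token reply are illustrative choices, not measurements from the article.

```python
def request_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate seconds to read a prompt and then generate a reply.

    Simplifying assumption: throughput is constant and the two phases
    run strictly one after the other.
    """
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Llama 3 8B Q4_K_M figures from the two tables in this article.
latency_3090 = request_latency_s(2000, 500, processing_tps=4004.14, generation_tps=108.07)
latency_ada = request_latency_s(2000, 500, processing_tps=3369.24, generation_tps=56.14)

print(f"3090 24GB x2:         {latency_3090:.1f} s")
print(f"RTX 4000 Ada 20GB x4: {latency_ada:.1f} s")
```

Under these assumptions the generation phase dominates the total, which is why the generation table matters more than the processing table for chat-style workloads.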

Memory Bandwidth

Memory bandwidth is a critical factor that determines how fast data can be transferred between the GPU and its memory. This is particularly important for LLMs, which require massive amounts of data to be constantly accessed.

| Device | Memory Bandwidth per Card (GB/s) |
|---|---|
| NVIDIA 3090 24GB x2 | 936 |
| NVIDIA RTX 4000 Ada 20GB x4 | 360 |

Analysis:

- According to NVIDIA's published specifications, each RTX 3090 offers 936 GB/s of memory bandwidth, while each RTX 4000 Ada offers 360 GB/s. Per card, the 3090 has about 2.6x the bandwidth.

- This matters most for token generation, where the model weights have to be read from VRAM for every generated token. The bandwidth gap is consistent with the 3090 24GB x2 generating tokens about twice as fast in the benchmarks above.

- Memory bandwidth has little effect on how long a model takes to load. Loading is limited mainly by storage speed and the PCIe link between the host and the GPU.
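A common back-of-the-envelope check is to treat token generation as bandwidth-bound: if every generated token requires reading all model weights once, throughput cannot exceed bandwidth divided by weight size. The sketch below applies that to the 3090's 936 GB/s. Two things are assumptions rather than figures from this article: the weight size of about 4.9 GB for Llama 3 8B in Q4_K_M, and the idea that only one card's bandwidth is in play at a time when layers are split across cards. The function name is illustrative.

```python
def max_tokens_per_s(bandwidth_gb_s, weights_gb):
    """Bandwidth-bound ceiling: each generated token streams all weights once."""
    return bandwidth_gb_s / weights_gb


# 936 GB/s is the 3090 figure from the table; 4.9 GB is an assumed
# approximate size for Llama 3 8B weights in Q4_K_M.
ceiling = max_tokens_per_s(bandwidth_gb_s=936, weights_gb=4.9)
measured = 108.07  # tokens/second from the generation table
efficiency = measured / ceiling

print(f"ceiling: {ceiling:.0f} tokens/s, measured: {measured} tokens/s")
print(f"fraction of ceiling reached: {efficiency:.0%}")
```

Real systems land below this ceiling because of compute, kernel launch overhead, and inter-GPU transfers, so the estimate is best read as an upper bound rather than a prediction.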

Power Consumption

Power consumption is an important consideration for both cost and environmental reasons.

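| Device | Rated Board Power (W) |
|---|---|
| NVIDIA 3090 24GB x2 | 350 per card (700 total) |
| NVIDIA RTX 4000 Ada 20GB x4 | 130 per card (520 total) |

Analysis:

- On rated board power, four RTX 4000 Ada cards draw less in total than two 3090s, and each card is far easier to power and cool.

- Rated power is a ceiling, not a measurement of what the cards draw during inference. Because the 3090 24GB x2 finishes the same generation work in roughly half the time, a lower rated power does not automatically mean less energy per token. The choice between the two setups will depend on your power supply, cooling, and electricity budget.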
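Power draw only becomes comparable across setups once it is divided by throughput. The sketch below converts watts and tokens/second into energy per token and energy per million tokens. The helper names are illustrative, and the 500 W and 100 tokens/second inputs are hypothetical round numbers chosen to show the arithmetic, not measurements of either setup.

```python
def joules_per_token(power_w, tokens_per_s):
    """Energy per generated token; 1 W sustained for 1 s is 1 J."""
    return power_w / tokens_per_s


def kwh_per_million_tokens(power_w, tokens_per_s):
    """Energy needed to generate one million tokens, in kilowatt-hours."""
    seconds = 1_000_000 / tokens_per_s
    return power_w * seconds / 3_600_000  # 1 kWh = 3.6 million joules


# Hypothetical setup: 500 W sustained draw at 100 tokens/second.
energy_j = joules_per_token(power_w=500, tokens_per_s=100)
energy_kwh = kwh_per_million_tokens(power_w=500, tokens_per_s=100)

print(f"{energy_j:.1f} J per token, {energy_kwh:.2f} kWh per million tokens")
```

Plugging in measured wall power rather than rated board power gives a more realistic comparison, since rated figures describe a maximum.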

Price Considerations

The cost of the hardware is a significant factor for many users.

| Device | Approximate Cost (USD) |
|---|---|
| NVIDIA 3090 24GB x2 | $2000 (estimated) |
| NVIDIA RTX 4000 Ada 20GB x4 | $3000 (estimated) |

Analysis:

- The NVIDIA RTX 4000 Ada 20GB x4 is estimated to cost about 50% more than the 3090 24GB x2, reflecting its newer architecture and the fact that it uses four cards instead of two. The benchmarks above show that the higher price does not buy higher Llama 3 throughput.

- The NVIDIA 3090 24GB x2 offers a more affordable option for users who are looking for good performance without breaking the bank.
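One way to put price and performance on the same scale is dollars per token/second of generation throughput. The sketch below uses the estimated prices from the table above and the Llama 3 8B Q4_K_M generation speeds from earlier in the article. The dictionary layout and function name are illustrative, and the result is only as good as the price estimates.

```python
# Estimated prices from the cost table; throughput is Llama 3 8B Q4_K_M
# generation speed from the generation table.
SETUPS = {
    "3090 24GB x2": {"price_usd": 2000, "tokens_per_s": 108.07},
    "RTX 4000 Ada 20GB x4": {"price_usd": 3000, "tokens_per_s": 56.14},
}


def usd_per_token_per_s(price_usd, tokens_per_s):
    """Purchase cost for each token/second of generation throughput."""
    return price_usd / tokens_per_s


cost_efficiency = {
    name: usd_per_token_per_s(spec["price_usd"], spec["tokens_per_s"])
    for name, spec in SETUPS.items()
}

for name, value in cost_efficiency.items():
    print(f"{name}: ${value:.2f} per token/s")
```

On these figures the 3090 24GB x2 costs roughly a third as much per unit of generation throughput, though the metric ignores total VRAM and running costs.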

Practical Use Cases

The choice between the NVIDIA 3090 24GB x2 and the NVIDIA RTX 4000 Ada 20GB x4 depends on specific use cases and requirements.

Here are some use case scenarios:

- Real-time applications (chatbots, interactive dialogue systems): The NVIDIA 3090 24GB x2 is the stronger choice here because of its higher token generation speed for both Llama 3 8B and 70B in Q4_K_M quantization, which means smoother and faster real-time interactions.

- Batch processing (text completion, translation): The NVIDIA 3090 24GB x2 also performs better on batch tasks, with higher token processing speed in every configuration tested here.

- Research and Development: The NVIDIA RTX 4000 Ada 20GB x4 offers 80 GB of total VRAM compared with 48 GB on the 3090 24GB x2, which leaves more room for larger models, longer contexts, or less aggressive quantization. This is useful for experimenting with new LLM architectures and testing various quantization levels, as long as the lower throughput is acceptable.

- Budget-constrained users: The NVIDIA 3090 24GB x2 provides a good balance of performance and affordability, making it a solid choice for users with a limited budget.

FAQ

Here are some frequently asked questions about LLMs and device choices:

What is quantization, and why is it important for LLMs?

Quantization is a technique used to compress the size of a model by reducing the number of bits used to represent each number or weight in the model. In simpler terms, it's like converting a high-resolution image to a lower-resolution one, sacrificing some detail but reducing the overall file size. This reduction in the model size can lead to faster processing time and reduced memory requirements.
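The idea can be illustrated with a deliberately simplified scheme. The sketch below maps a handful of floating-point weights to 4-bit signed integers with a single shared scale, then converts them back to show the detail that is lost. This is not how Q4_K_M works internally (that format uses block-wise scales and other refinements); the function names and sample weights are illustrative only.

```python
def quantize(values, bits=4):
    """Symmetric quantization: floats -> signed integer codes plus one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.50, 0.33, 0.07, -0.21]
codes, scale = quantize(weights, bits=4)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each weight now needs 4 bits instead of 16, at the cost of a rounding error of at most half the scale per weight.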

What's the difference between the 3090 24GB x2 and the RTX 4000 Ada 20GB x4 in terms of their architecture?

The RTX 4000 Ada is built on NVIDIA's Ada Lovelace architecture, which is newer than the Ampere architecture of the 3090. The newer architecture brings several improvements, although, as the benchmarks above show, they do not automatically translate into higher LLM throughput for this card:

- Enhanced Tensor Cores: Ada GPUs have a newer generation of Tensor Cores designed to accelerate matrix multiplications, which are essential for LLM computations.

- Improved Memory Subsystem: Ada GPUs have a much larger L2 cache, which reduces the number of trips to VRAM.

- Higher Clock Speeds: Ada GPUs run at higher clock speeds, which speeds up each individual calculation.

Which device is better for running other LLM models?

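The choice ultimately depends on the specific model and its size. Smaller open models such as GPT-Neo, or others with requirements similar to Llama 3 8B, fit comfortably on either setup, and the NVIDIA 3090 24GB x2 is likely the faster choice if the Llama 3 results carry over. For larger models, the deciding factor is often whether the weights fit in memory at all: the NVIDIA RTX 4000 Ada 20GB x4 offers 80 GB of total VRAM versus 48 GB, so it can hold models or precisions that the 3090 24GB x2 cannot. Hosted models such as GPT-3 or GPT-4 are not distributed for local use, so they are not an option on either setup.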
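A quick way to screen a model against either setup is to estimate its weight size from the parameter count and bits per weight, then compare that with total VRAM. The sketch below does this under stated assumptions: total VRAM is taken as 2 x 24 GB and 4 x 20 GB from the setup names, roughly 4.8 bits per weight is assumed for Q4_K_M, and a hypothetical 10% of VRAM is reserved for the KV cache and runtime overhead. The function names are illustrative.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, total_vram_gb, headroom=0.9):
    """True if the weights fit in the usable share of total VRAM.

    headroom is a hypothetical allowance for KV cache and runtime overhead.
    """
    return weights_gb(params_billion, bits_per_weight) <= total_vram_gb * headroom


VRAM_GB = {"3090 24GB x2": 2 * 24, "RTX 4000 Ada 20GB x4": 4 * 20}

for name, vram in VRAM_GB.items():
    print(name)
    print("  70B at 16 bits: ", fits(70, 16, vram))
    print("  70B at ~4.8 bits:", fits(70, 4.8, vram))
    print("  8B at 16 bits:  ", fits(8, 16, vram))
```

Under these assumptions a 70B model at 16 bits needs about 140 GB and fits on neither setup, which may be why the tables list N/A for Llama 3 70B in F16.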

What are the limitations of using LLMs on local devices?

While running LLMs locally offers several benefits, including privacy and control over data, it also has limitations:

- Hardware requirements: Running large LLMs on local devices can be demanding, requiring powerful GPUs and significant memory capacity.

- Computational cost: Processing large models can be computationally intensive, leading to high power consumption and potentially slower processing times.

- Model availability: Not all LLMs are available for local deployment, and some may be difficult to install and configure.

Keywords

LLM, Large Language Models, NVIDIA, 3090 24GB x2, RTX 4000 Ada 20GB x4, GPU, token speed, processing speed, memory bandwidth, power consumption, quantization, Llama 3, AI, machine learning, deep learning, NLP, natural language processing, AI hardware, GPU comparison, use cases, performance analysis, cost comparison, FAQ, local deployment.