6 Key Factors to Consider When Choosing Between NVIDIA 3070 8GB and NVIDIA L40S 48GB for AI

Introduction: The Quest for the Perfect AI Companion

The world of artificial intelligence is booming, and Large Language Models (LLMs) are at the forefront. These powerful tools can generate creative text, translate languages, and answer your questions in an informative way. But running these models on your own computer can be tricky. You need a GPU with enough memory to hold the model and enough compute to run it at a usable speed, and the right hardware can make a huge difference in performance.

This guide is your roadmap to choosing the best GPU for your AI adventures. We'll compare two very different options: the consumer-grade NVIDIA GeForce RTX 3070 8GB and the data-center-class NVIDIA L40S 48GB. We'll explore crucial aspects like memory, processing power, and cost, so you can figure out which GPU is the best fit for your needs.

Comparing NVIDIA 3070 8GB and NVIDIA L40S 48GB for Running LLMs: A Deep Dive

Let's break down the key factors that will help you make the right decision for your AI journey:

1. Memory Capacity: Bigger is Better, but How Much is Enough?

Think of GPU memory (VRAM) as the desk your GPU works on. A model's weights have to fit on that desk before the GPU can process them at full speed, so memory capacity mostly decides which models you can run at all, rather than how fast they run.

NVIDIA 3070 8GB: With 8GB of memory, this card suits smaller models like the 8-billion parameter Llama 3 model, provided you use a roughly 4-bit quantized version (quantization is covered in section 4). The same model at F16 precision needs about 16GB for the weights alone. The card is capable enough for everyday tasks, like writing scripts or generating creative content, but the 70-billion parameter Llama 3 model will not fit in 8GB, even when quantized to 4 bits.

NVIDIA L40S 48GB: This card has 48GB of memory, six times as much as the 3070. That is enough to hold the 70-billion parameter Llama 3 model once it is quantized to around 4 bits per weight, and to run the 8B model at full F16 precision. The 70B model at F16 needs roughly 140GB for its weights, which is beyond even this card. The extra memory also leaves room for longer contexts and larger batches, which helps with research workloads and large-scale text generation.
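As a rough sanity check on these memory figures, the weights of a model take about (number of parameters × bits per weight ÷ 8) bytes. The sketch below applies that rule of thumb. The 4.8 bits-per-weight figure for Q4_K_M and the 1.5GB allowance for the KV cache and runtime buffers are assumptions chosen for illustration, not measured values, and the function names are hypothetical.

```python
# Rough VRAM estimate: weight_bytes = parameters * bits_per_weight / 8.
# The bits-per-weight values and OVERHEAD_GB are illustrative assumptions.

BITS_PER_WEIGHT = {
    "F16": 16.0,     # half-precision floats, 2 bytes per weight
    "Q4_K_M": 4.8,   # assumed average for a roughly 4-bit format
}
OVERHEAD_GB = 1.5    # assumed allowance for KV cache and runtime buffers


def weights_gb(params_billion: float, fmt: str) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(params_billion: float, fmt: str, vram_gb: float) -> bool:
    """True if the weights plus the assumed overhead fit in the given VRAM."""
    return weights_gb(params_billion, fmt) + OVERHEAD_GB <= vram_gb


if __name__ == "__main__":
    for params in (8, 70):
        for fmt in ("Q4_K_M", "F16"):
            size = weights_gb(params, fmt)
            print(f"Llama 3 {params}B {fmt}: ~{size:.1f} GB of weights, "
                  f"fits 8GB: {fits(params, fmt, 8)}, "
                  f"fits 48GB: {fits(params, fmt, 48)}")
```

Under these assumptions, only the quantized 8B model fits on the 3070, the L40S additionally fits the 8B model at F16 and the 70B model at Q4_K_M, and the 70B model at F16 fits on neither. Real usage depends on context length, batch size, and the inference runtime, so treat the result as a first filter rather than a guarantee.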

2. Processing Power: The Muscle Behind AI Performance

GPUs are massively parallel calculators, and their processing power is a large part of what makes LLMs hum. More processing power generally means faster results, although for text generation the speed of the GPU's memory often matters just as much.

NVIDIA 3070 8GB: This card has 5,888 CUDA cores. While it's not as strong as the L40S, it delivers good speed for smaller models, making it a good choice for developers on a budget.

NVIDIA L40S 48GB: This card has 18,176 CUDA cores, roughly three times as many as the 3070, and is designed for sustained data-center workloads. That makes it well suited to large models and demanding tasks, like real-time translation or generating complex simulations.

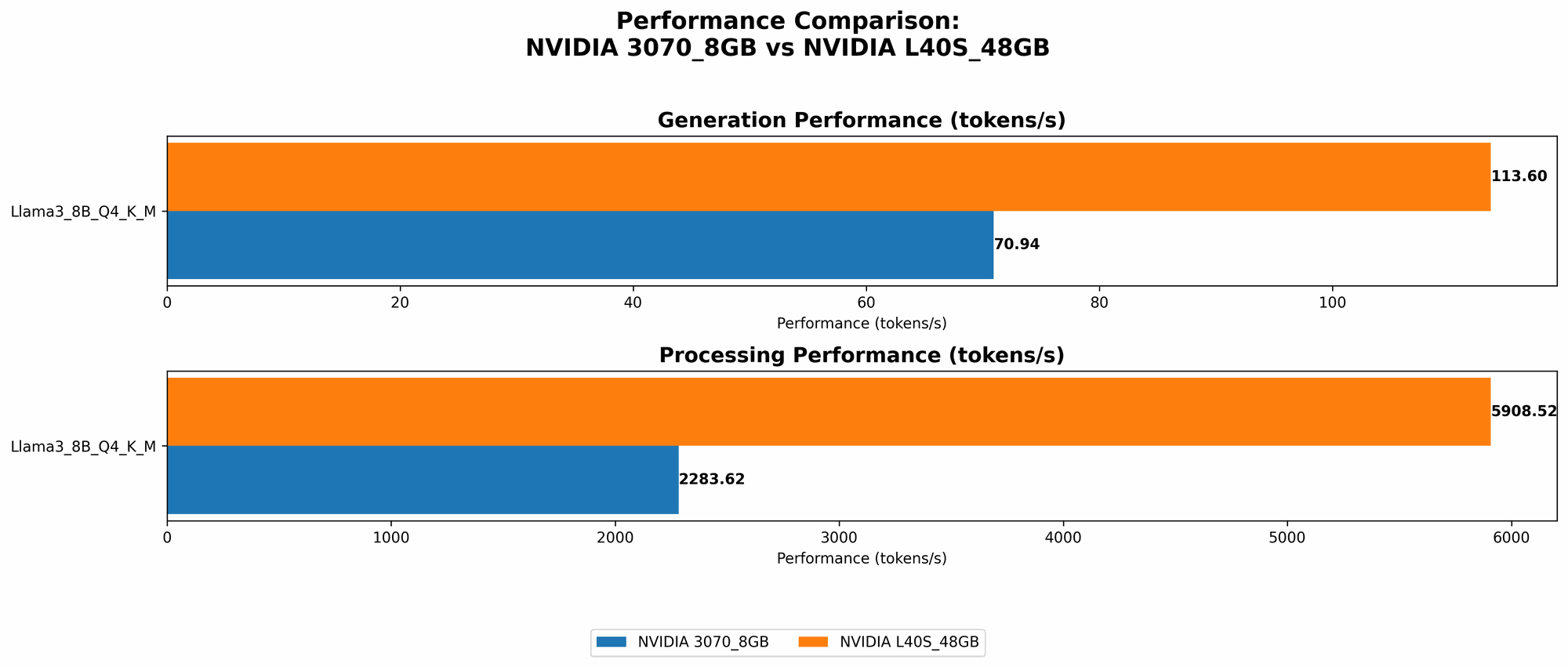

3. Token Speed Generation: A Measure of LLM Speed

"Token" is a technical term for a piece of text, kind of like a word or a punctuation mark. The faster your GPU can generate tokens, the faster your LLM can generate text, translate languages, or answer your questions.

Here's a table showcasing the token speed of the two GPUs for different Llama 3 models, measured in tokens per second:

| Model | NVIDIA 3070 8GB (tokens/second) | NVIDIA L40S 48GB (tokens/second) |
|---|---|---|
| Llama 3 8B - Q4_K_M | 70.94 | 113.6 |
| Llama 3 8B - F16 | N/A | 43.42 |
| Llama 3 70B - Q4_K_M | N/A | 15.31 |
| Llama 3 70B - F16 | N/A | N/A |

Important Note: The table uses data collected from various sources, and some data is missing. We will continue to update the information as new benchmarks become available.

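Observation: On the one configuration where both cards have a result, Llama 3 8B at Q4_K_M, the L40S 48GB generates about 1.6 times as many tokens per second as the 3070 (113.6 vs. 70.94). The L40S is also the only one of the two with results for the 8B model at F16 and the 70B model at Q4_K_M, which is consistent with those configurations needing more than 8GB of memory.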
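To put the tokens-per-second figures in practical terms, the short sketch below converts them into a speedup ratio and a wait time for a single response. The two throughput values are copied from the table above; the 500-token response length is an assumption picked only for illustration.

```python
# Throughput for Llama 3 8B at Q4_K_M, copied from the table above.
TOKENS_PER_SECOND = {
    "RTX 3070 8GB": 70.94,
    "L40S 48GB": 113.6,
}

RESPONSE_TOKENS = 500  # assumed response length, for illustration only


def seconds_to_generate(tokens: int, tokens_per_second: float) -> float:
    """Time to generate a response at a constant token rate."""
    return tokens / tokens_per_second


def speedup(faster: float, slower: float) -> float:
    """How many times faster one token rate is than another."""
    return faster / slower


if __name__ == "__main__":
    for gpu, rate in TOKENS_PER_SECOND.items():
        wait = seconds_to_generate(RESPONSE_TOKENS, rate)
        print(f"{gpu}: {wait:.1f} s for {RESPONSE_TOKENS} tokens")
    ratio = speedup(TOKENS_PER_SECOND["L40S 48GB"],
                    TOKENS_PER_SECOND["RTX 3070 8GB"])
    print(f"L40S speedup over 3070: {ratio:.2f}x")
```

For a response of this length the difference is a few seconds per request, which matters more for batch jobs and multi-user serving than for occasional interactive use.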

4. Model Quantization: Balancing Performance and Memory

Quantization is a technique that reduces the size of LLM models by storing their weights with fewer bits, without sacrificing too much output quality. It's like compressing an image to save space while keeping it recognizable. There are various quantization levels, with Q4_K_M (a roughly 4-bit format used by llama.cpp) being a common choice for balance.

NVIDIA 3070 8GB: The 3070 works well with Q4_K_M quantization for the Llama 3 8B model, offering reasonable performance within its memory limitations. For larger models, even Q4_K_M is usually too big for 8GB: a 70B model at about 4 bits per weight still needs tens of gigabytes, so check the model file size against your available memory first.

NVIDIA L40S 48GB: The L40S 48GB can comfortably handle Q4_K_M quantization for the 8B model and has enough memory for the 70B model at Q4_K_M as well. It can also run the 8B model at F16, the unquantized half-precision format. F16 makes the model larger, not smaller, and in the table above it generates fewer tokens per second (43.42 vs. 113.6) in exchange for avoiding quantization error.
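To make the idea of quantization concrete, here is a minimal sketch of symmetric 4-bit block quantization: a block of weights is stored as small integer codes plus one shared scale. This is a simplified illustration, not the actual Q4_K_M scheme, which uses a more elaborate block layout. The function names and the sample weights are hypothetical.

```python
# Simplified symmetric block quantization (illustrative, not Q4_K_M itself).

def quantize_block(weights, bits=4):
    """Map a block of floats to signed integer codes plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit signed codes
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the codes and the shared scale."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    block = [0.12, -0.5, 0.33, 0.0, 0.07, -0.21, 0.49, -0.02]
    scale, codes = quantize_block(block)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("codes:", codes)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.4f}")
```

Each weight now needs 4 bits instead of 16, plus a small share of the per-block scale, and the rounding error per weight is at most half the scale. That bounded error is the quality cost that quantization trades for a smaller memory footprint.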

5. Power Consumption: Efficiency Matters

GPUs consume a lot of energy, and that can translate into higher electricity bills. Here's how the two GPUs stack up:

NVIDIA 3070 8GB: This card draws less power than the L40S, with a rated board power of about 220W. That is still a significant load, so it's important to have a capable power supply.

NVIDIA L40S 48GB: This card draws considerably more, with a rated maximum of about 350W. That can affect your electricity bill, but it also delivers significantly higher performance. It might be a better choice for users who prioritize performance and are less concerned about energy consumption.
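A quick way to compare running costs is to turn power draw into an electricity cost and into energy per generated token. The sketch below does both. It assumes rated board power figures of 220W for the 3070 and 350W for the L40S (actual draw during inference will differ), a hypothetical price of 0.15 per kWh, and the Llama 3 8B Q4_K_M token rates from the benchmark table in section 3.

```python
# All power and price figures here are assumptions for illustration.
BOARD_POWER_WATTS = {"RTX 3070 8GB": 220, "L40S 48GB": 350}   # rated, not measured
TOKENS_PER_SECOND = {"RTX 3070 8GB": 70.94, "L40S 48GB": 113.6}
PRICE_PER_KWH = 0.15   # hypothetical electricity price
HOURS_PER_DAY = 8      # hypothetical daily usage


def energy_cost(watts: float, hours: float, price_per_kwh: float) -> float:
    """Electricity cost of running at a constant power draw."""
    return watts / 1000 * hours * price_per_kwh


def joules_per_token(watts: float, tokens_per_second: float) -> float:
    """Energy spent per generated token (1 W = 1 J/s)."""
    return watts / tokens_per_second


if __name__ == "__main__":
    for gpu, watts in BOARD_POWER_WATTS.items():
        cost = energy_cost(watts, HOURS_PER_DAY, PRICE_PER_KWH)
        jpt = joules_per_token(watts, TOKENS_PER_SECOND[gpu])
        print(f"{gpu}: {cost:.2f} per day, {jpt:.2f} J per token")
```

Under these assumptions the L40S costs more per hour of use, but the energy per token comes out close for the two cards, because the higher draw is offset by the higher token rate. Measured power during your own workload is the number that actually matters.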

6. Cost Factor: The Price of AI Power

The price of a GPU is a significant factor for many developers.

NVIDIA 3070 8GB: This card is known for being a good value proposition for its performance, offering a balance between speed and affordability.

NVIDIA L40S 48GB: This card is at the higher end of the price spectrum. While it outperforms the 3070 by a wide margin, it can be a costly investment.

Performance Analysis: Putting the Numbers into Perspective

- The L40S 48GB is a clear winner in terms of overall performance and memory capacity. It can handle large models with ease, delivering faster results for demanding tasks.

- The 3070 8GB is a solid choice for users on a budget or those working with smaller models. It's a good balance between performance and cost, suitable for everyday AI tasks.

Practical Recommendations: Choosing the Right GPU for Your Needs

- If you're working with large language models like Llama 3 70B, the L40S 48GB is the way to go. Of the two cards, it is the only one with enough memory for a 4-bit quantized 70B model, which it ran at 15.31 tokens per second in the table above, and it leaves headroom for longer contexts on smaller models.

- If you're just starting out or focusing on smaller models like Llama 3 8B, the 3070 8GB is a great starting point. It's a good value option for those who want to explore the world of LLMs without breaking the bank.

- For users who prioritize low power draw, the 3070 8GB is a better choice. However, if you're willing to pay for the extra power, the L40S 48GB will deliver significantly faster results.

Conclusion: Your AI Companion Awaits

The choice between the NVIDIA 3070 8GB and the NVIDIA L40S 48GB ultimately depends on your individual needs, budget, and project requirements.

- The L40S 48GB is a powerhouse for ambitious AI projects, but it comes with a higher price tag.

- The 3070 8GB is a great value option for those starting out or working with smaller models.

Remember, the world of GPUs is constantly evolving, so stay informed about the latest developments to find the best AI companion for your journey!

FAQ: Common Questions About LLMs and GPUs

Q: What are LLMs, and why are they so popular?

A: LLMs are Large Language Models, a type of AI that excels at understanding and generating human-like text. They're used for tasks like writing creative content, translating languages, and even coding. Their popularity comes from their versatility and ability to perform tasks that were once considered uniquely human.

Q: What are CUDA cores, and why are they important?

A: CUDA cores are specialized processing units found in GPUs designed for parallel computing. They are essential for the fast and efficient execution of AI computations, especially in LLMs.

Q: What is F16 quantization?

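A: F16 is 16-bit (half-precision) floating point. Storing each weight in 16 bits instead of 32 halves the model's size compared with 32-bit floats, at the cost of some numeric precision. Strictly speaking it is a reduced-precision format rather than quantization in the sense of Q4_K_M, and of the formats compared in this guide it is the largest and the slowest to run.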
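The size and precision trade-off can be seen with Python's standard `struct` module, which supports IEEE 754 half precision through the `e` format code. This is a small illustration of the number format only, not of how any inference runtime stores weights; the helper name is hypothetical.

```python
import struct

BYTES_F16 = struct.calcsize("<e")   # half precision: 2 bytes per value
BYTES_F32 = struct.calcsize("<f")   # single precision: 4 bytes per value


def round_trip_f16(x: float) -> float:
    """Store a value as a 16-bit float and read it back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


if __name__ == "__main__":
    print(f"F16 uses {BYTES_F16} bytes, F32 uses {BYTES_F32} bytes per weight")
    for value in (0.5, 0.1, 3.14159):
        print(f"{value} stored as F16 reads back as {round_trip_f16(value)}")
```

Values such as 0.5 that are exactly representable survive unchanged, while most others, such as 0.1, come back slightly altered. That small rounding is the precision cost of halving the storage per weight.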

Q: What are the best practices for choosing a GPU for LLMs?

A: First, consider the size and demands of your LLM. For large models, a powerful GPU with ample memory is essential. Then, consider your budget and energy efficiency needs. Finally, keep an eye on the latest GPU releases and benchmarks to stay updated.

Q: How do I choose a GPU for my AI project?

A: Here's a quick step-by-step guide:

- Determine the size and complexity of your LLMs.

- Consider your budget and power consumption requirements.

- Research different GPUs and their performance specs.

- Read reviews and compare benchmarks.

- Make an informed decision based on your needs and priorities.
