6 Key Factors to Consider When Choosing Between Apple M3 Pro 150gb 14cores and NVIDIA RTX 4000 Ada 20GB x4 for AI

Introduction

The world of AI is rapidly evolving, with Large Language Models (LLMs) becoming increasingly powerful and capable. This has led to a surge in demand for powerful hardware that can handle the demanding computational requirements of these models. Two popular choices for running LLMs locally are the Apple M3 Pro 150GB 14-Cores and the NVIDIA RTX 4000 Ada 20GB x4. Both offer impressive performance, but they have distinct strengths and weaknesses depending on the specific LLM and use case. This article will dive into the performance of these devices for a variety of LLM models, analyze their strengths and weaknesses, and provide recommendations based on your specific needs.

Performance Analysis: Apple M3 Pro 150GB 14-Cores vs NVIDIA RTX 4000 Ada 20GB x4

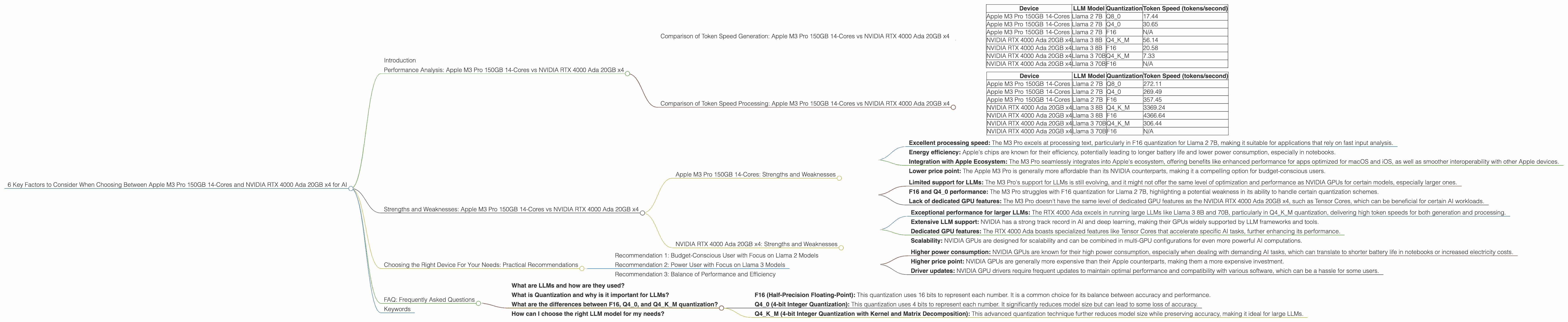

Comparison of Token Speed Generation: Apple M3 Pro 150GB 14-Cores vs NVIDIA RTX 4000 Ada 20GB x4

To understand the performance of these devices, we'll examine their token generation speed for various LLM models. Token speed is a crucial metric in LLM performance, representing the number of tokens processed per second. Higher token speeds translate to faster response times and smoother interactions with the AI model.

Here's a breakdown of the token speed data for the devices:

| Device | LLM Model | Quantization | Token Speed (tokens/second) |

|---|---|---|---|

| Apple M3 Pro 150GB 14-Cores | Llama 2 7B | Q8_0 | 17.44 |

| Apple M3 Pro 150GB 14-Cores | Llama 2 7B | Q4_0 | 30.65 |

| Apple M3 Pro 150GB 14-Cores | Llama 2 7B | F16 | N/A |

| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B | Q4KM | 56.14 |

| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B | F16 | 20.58 |

| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 70B | Q4KM | 7.33 |

| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 70B | F16 | N/A |

Key Observations:

- NVIDIA RTX 4000 Ada 20GB x4 outperforms Apple M3 Pro 150GB 14-Cores for Llama 3 8B and Llama 3 70B models in both F16 and Q4KM quantization.

- Apple M3 Pro 150GB 14-Cores struggles with F16 quantization for Llama 2 7B, indicating a significant performance bottleneck.

- The NVIDIA RTX 4000 Ada 20GB x4 shows remarkable performance in Q4KM quantization for Llama 3 8B and Llama 3 70B.

Practical Implications:

- If your primary focus is on running Llama 3 models, especially with large sizes like 70B, the NVIDIA RTX 4000 Ada 20GB x4 is the clear choice. Its superior performance in Q4KM quantization makes it ideal for fast and smooth interactions.

- For Llama 2 models, especially 7B models, the Apple M3 Pro 150GB 14-Cores offers decent performance with Q4_0 quantization, but its F16 performance is disappointing.

Comparison of Token Speed Processing: Apple M3 Pro 150GB 14-Cores vs NVIDIA RTX 4000 Ada 20GB x4

Next, we examine the token speed for processing, which refers to the speed at which the model can process the input text.

| Device | LLM Model | Quantization | Token Speed (tokens/second) |

|---|---|---|---|

| Apple M3 Pro 150GB 14-Cores | Llama 2 7B | Q8_0 | 272.11 |

| Apple M3 Pro 150GB 14-Cores | Llama 2 7B | Q4_0 | 269.49 |

| Apple M3 Pro 150GB 14-Cores | Llama 2 7B | F16 | 357.45 |

| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B | Q4KM | 3369.24 |

| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 8B | F16 | 4366.64 |

| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 70B | Q4KM | 306.44 |

| NVIDIA RTX 4000 Ada 20GB x4 | Llama 3 70B | F16 | N/A |

Key Observations:

- The NVIDIA RTX 4000 Ada 20GB x4 excels in processing speed for Llama 3 models, particularly for Llama 3 8B in both F16 and Q4KM quantization.

- The Apple M3 Pro 150GB 14-Cores offers surprisingly high processing speeds for Llama 2 7B in F16, outperforming even the NVIDIA RTX 4000 Ada 20GB x4 in Q4KM quantization.

- Despite its impressive performance in Q4KM quantization for Llama 3 70B, the NVIDIA RTX 4000 Ada 20GB x4 still lags behind the Apple M3 Pro 150GB 14-Cores in processing speed for Llama 2 7B, especially in F16.

Practical Implications:

- For Llama 3 models, the NVIDIA RTX 4000 Ada 20GB x4 significantly accelerates processing, leading to faster input analysis and overall improved performance.

- The Apple M3 Pro 150GB 14-Cores shines in processing Llama 2 7B models, particularly in F16 quantization, suggesting its suitability for applications requiring efficient text processing.

Strengths and Weaknesses: Apple M3 Pro 150GB 14-Cores vs NVIDIA RTX 4000 Ada 20GB x4

Apple M3 Pro 150GB 14-Cores: Strengths and Weaknesses

Strengths:

- Excellent processing speed: The M3 Pro excels at processing text, particularly in F16 quantization for Llama 2 7B, making it suitable for applications that rely on fast input analysis.

- Energy efficiency: Apple's chips are known for their efficiency, potentially leading to longer battery life and lower power consumption, especially in notebooks.

- Integration with Apple Ecosystem: The M3 Pro seamlessly integrates into Apple's ecosystem, offering benefits like enhanced performance for apps optimized for macOS and iOS, as well as smoother interoperability with other Apple devices.

- Lower price point: The Apple M3 Pro is generally more affordable than its NVIDIA counterparts, making it a compelling option for budget-conscious users.

Weaknesses:

- Limited support for LLMs: The M3 Pro's support for LLMs is still evolving, and it might not offer the same level of optimization and performance as NVIDIA GPUs for certain models, especially larger ones.

- F16 and Q4_0 performance: The M3 Pro struggles with F16 quantization for Llama 2 7B, highlighting a potential weakness in its ability to handle certain quantization schemes.

- Lack of dedicated GPU features: The M3 Pro doesn't have the same level of dedicated GPU features as the NVIDIA RTX 4000 Ada 20GB x4, such as Tensor Cores, which can be beneficial for certain AI workloads.

NVIDIA RTX 4000 Ada 20GB x4: Strengths and Weaknesses

Strengths:

- Exceptional performance for larger LLMs: The RTX 4000 Ada excels in running large LLMs like Llama 3 8B and 70B, particularly in Q4KM quantization, delivering high token speeds for both generation and processing.

- Extensive LLM support: NVIDIA has a strong track record in AI and deep learning, making their GPUs widely supported by LLM frameworks and tools.

- Dedicated GPU features: The RTX 4000 Ada boasts specialized features like Tensor Cores that accelerate specific AI tasks, further enhancing its performance.

- Scalability: NVIDIA GPUs are designed for scalability and can be combined in multi-GPU configurations for even more powerful AI computations.

Weaknesses:

- Higher power consumption: NVIDIA GPUs are known for their high power consumption, especially when dealing with demanding AI tasks, which can translate to shorter battery life in notebooks or increased electricity costs.

- Higher price point: NVIDIA GPUs are generally more expensive than their Apple counterparts, making them a more expensive investment.

- Driver updates: NVIDIA GPU drivers require frequent updates to maintain optimal performance and compatibility with various software, which can be a hassle for some users.

Choosing the Right Device For Your Needs: Practical Recommendations

Here are some recommendations based on factors such as budget, LLM model, and intended use case:

Recommendation 1: Budget-Conscious User with Focus on Llama 2 Models

If you are on a tight budget and primarily interested in running Llama 2 models, especially 7B, the Apple M3 Pro 150GB 14-Cores is a solid choice. It offers good performance with Q4_0 quantization and excellent processing speed, especially in F16. Its price point and energy efficiency are additional benefits.

Recommendation 2: Power User with Focus on Llama 3 Models

If you prioritize performance for larger LLMs like Llama 3 8B and 70B, the NVIDIA RTX 4000 Ada 20GB x4 is the superior option. Its remarkable performance in Q4KM quantization, especially for generation and processing, makes it ideal for demanding AI tasks with these models.

Recommendation 3: Balance of Performance and Efficiency

If you seek a balance between performance and energy efficiency, the Apple M3 Pro 150GB 14-Cores might be a better fit. While it might not match the NVIDIA RTX 4000 Ada 20GB x4 in terms of raw performance for larger LLMs, its efficiency and affordability make it a compelling option for many users.

FAQ: Frequently Asked Questions

What are LLMs and how are they used?

LLMs are powerful machine learning models capable of understanding and generating human-like text. They are used in a wide range of applications, including chatbots, language translation, content creation, code generation, and more. Think of them as advanced text manipulation tools.

What is Quantization and why is it important for LLMs?

Quantization is a technique that reduces the size of an LLM model without sacrificing much accuracy. It is like changing the size of a picture from high-resolution to low-resolution without drastically altering the image. Quantization is important because it allows you to run larger LLMs on devices with limited memory, making them more accessible to a wider range of users.

What are the differences between F16, Q40, and Q4K_M quantization?

- F16 (Half-Precision Floating-Point): This quantization uses 16 bits to represent each number. It is a common choice for its balance between accuracy and performance.

- Q4_0 (4-bit Integer Quantization): This quantization uses 4 bits to represent each number. It significantly reduces model size but can lead to some loss of accuracy.

- Q4KM (4-bit Integer Quantization with Kernel and Matrix Decomposition): This advanced quantization technique further reduces model size while preserving accuracy, making it ideal for large LLMs.

How can I choose the right LLM model for my needs?

The choice of LLM depends on your specific use case. Consider factors like model size, accuracy, and the types of tasks you want to perform. Smaller models (like 7B) are ideal for basic tasks and are less demanding on your hardware. Larger models (like 70B) offer greater accuracy and may be better suited for complex tasks.

Keywords

Apple M3 Pro, NVIDIA RTX 4000 Ada, LLM, Large Language Model, Token Speed, Performance, Quantization, F16, Q40, Q4K_M, Llama 2, Llama 3, GPU, AI, Machine Learning, Deep Learning, Hardware, Software, Processing, Generation, Recommendations, Comparison, Budget, Power User, Efficiency, FAQ, Ecosystem, Tensor Cores, Driver updates, Scalability