6 Key Factors to Consider When Choosing Between Apple M3 Max (400GB/s, 40-Core GPU) and NVIDIA RTX 4000 Ada 20GB for AI

Introduction

The world of large language models (LLMs) is exploding, with models like Llama 2 and Llama 3 pushing the boundaries of what's possible. But running these models locally can be a resource-intensive endeavor. For developers and enthusiasts eager to harness the power of LLMs, choosing the right hardware is crucial.

This article compares two popular options head to head: the Apple M3 Max (400GB/s memory bandwidth, 40-core GPU) and the NVIDIA RTX 4000 Ada 20GB. We'll break down where each device excels and help you find the right fit for your AI needs.

Understanding the Players: M3 Max vs. RTX 4000 Ada

Apple M3 Max (400GB/s, 40-Core GPU): The Mac Powerhouse

The M3 Max variant covered here pairs a 40-core GPU with 400GB/s of unified memory bandwidth. Note that the "400gb" in the product label refers to bandwidth, not capacity: the chip can be configured with up to 128GB of unified memory, which the CPU and GPU share. Apple's silicon architecture is known for its power efficiency, leading to longer battery life and potentially quieter operation.

NVIDIA RTX 4000 Ada 20GB: The GPU Champion

The RTX 4000 Ada is a professional workstation graphics card from NVIDIA, built on the Ada Lovelace architecture and well suited to machine learning and AI workloads. It comes with 20GB of dedicated memory and runs NVIDIA's CUDA software stack. This combination makes it a popular choice for running, and to a lesser extent fine-tuning, LLMs that fit within 20GB.

Performance Analysis: Putting Them Through the Paces

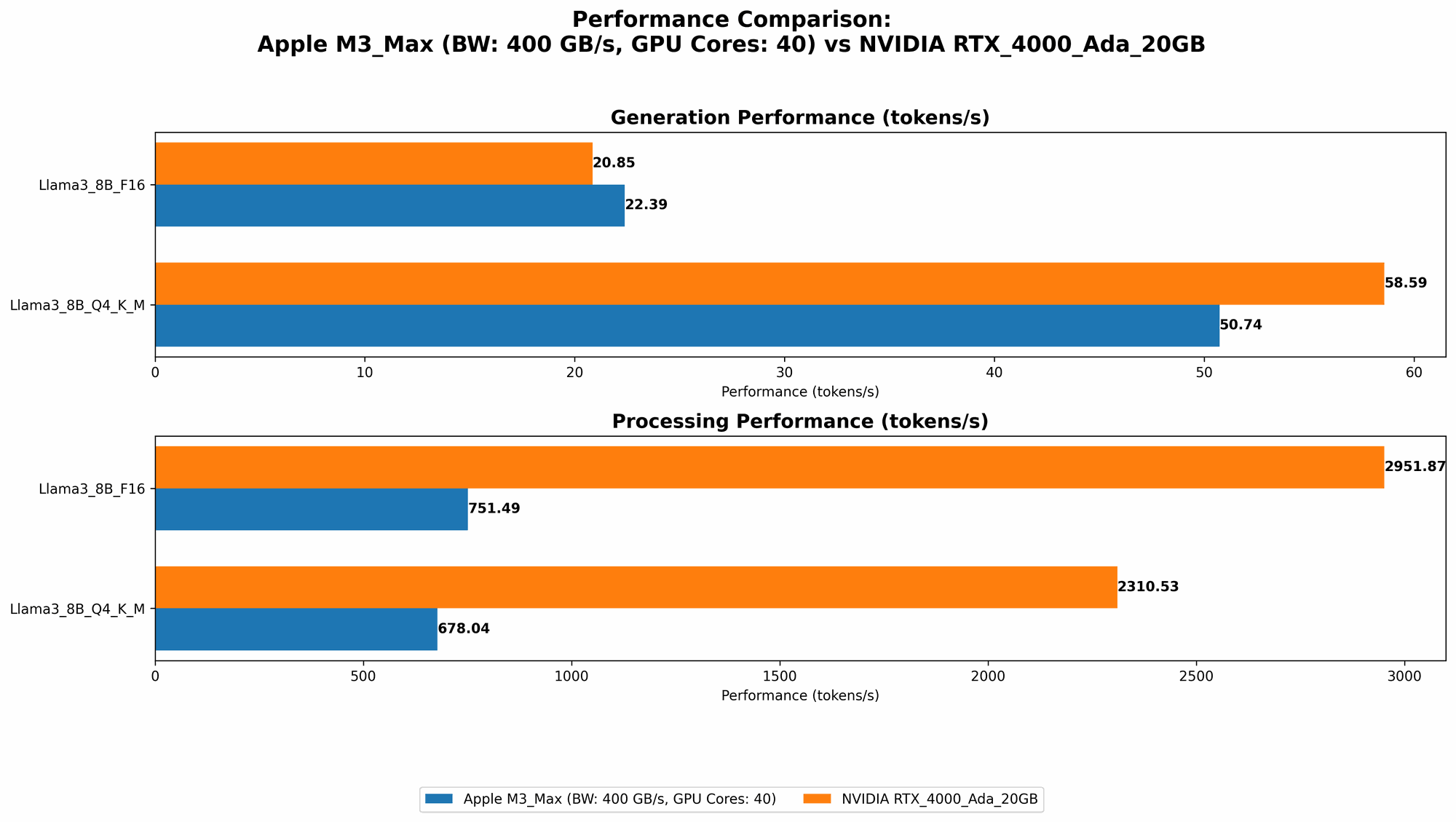

Comparison of M3 Max and RTX 4000 Ada Token Generation Speed

To understand the real-world differences, we'll examine each device's performance on several LLMs using tokens/second as our benchmark. For token generation, tokens/second measures how quickly the device produces output tokens once the prompt has been read.

Table 1: Token Generation Performance

| Model | Device | Generation Speed (tokens/second) |
|---|---|---|
| Llama 2 7B F16 | M3 Max | 25.09 |
| Llama 2 7B Q8_0 | M3 Max | 42.75 |
| Llama 2 7B Q4_0 | M3 Max | 66.31 |
| Llama 3 8B Q4_K_M | M3 Max | 50.74 |
| Llama 3 8B F16 | M3 Max | 22.39 |
| Llama 3 8B Q4_K_M | RTX 4000 Ada | 58.59 |
| Llama 3 8B F16 | RTX 4000 Ada | 20.85 |

Analyzing the Results:

- Llama 2 7B: Table 1 only includes M3 Max results for this model, so no direct comparison is possible. On its own, the M3 Max speeds up as quantization gets more aggressive, from 25.09 tokens/second at F16 to 66.31 at Q4_0.

- Llama 3 8B: The results are split. The RTX 4000 Ada leads at Q4_K_M (58.59 vs. 50.74 tokens/second, about 15% faster), while the M3 Max is slightly ahead at F16 (22.39 vs. 20.85, about 7% faster). Neither device has a decisive advantage in token generation.
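The generation comparison comes down to simple ratios. As a minimal sketch, the following Python snippet recomputes them from the Table 1 figures; the dictionary layout and the `speed_ratio` helper are illustrative names chosen here, not part of any benchmark tool.

```python
# Generation speeds (tokens/second) copied from Table 1.
generation_tps = {
    ("Llama 3 8B Q4_K_M", "M3 Max"): 50.74,
    ("Llama 3 8B Q4_K_M", "RTX 4000 Ada"): 58.59,
    ("Llama 3 8B F16", "M3 Max"): 22.39,
    ("Llama 3 8B F16", "RTX 4000 Ada"): 20.85,
}


def speed_ratio(table, model, device_a, device_b):
    """Return how many times faster device_a is than device_b on a model."""
    return table[(model, device_a)] / table[(model, device_b)]


for model in ("Llama 3 8B Q4_K_M", "Llama 3 8B F16"):
    ratio = speed_ratio(generation_tps, model, "RTX 4000 Ada", "M3 Max")
    print(f"{model}: RTX 4000 Ada / M3 Max = {ratio:.2f}x")
```

A ratio above 1 means the RTX 4000 Ada is faster for that model; a ratio below 1 means the M3 Max is.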

Comparison of M3 Max and RTX 4000 Ada for Token Processing Speed

While token generation speed measures output, token processing (often called prompt processing) measures how quickly the device reads the input prompt before it starts generating.

Table 2: Token Processing Performance

| Model | Device | Processing Speed (tokens/second) |
|---|---|---|
| Llama 2 7B F16 | M3 Max | 779.17 |
| Llama 2 7B Q8_0 | M3 Max | 757.64 |
| Llama 2 7B Q4_0 | M3 Max | 759.7 |
| Llama 3 8B Q4_K_M | M3 Max | 678.04 |
| Llama 3 8B F16 | M3 Max | 751.49 |
| Llama 3 8B Q4_K_M | RTX 4000 Ada | 2310.53 |
| Llama 3 8B F16 | RTX 4000 Ada | 2951.87 |

Analyzing the Results:

- Llama 2 7B: As with token generation, Table 2 only includes M3 Max results for this model. The M3 Max processes roughly 760 to 780 tokens/second at every quantization level, so quantization has little effect on its prompt processing speed.

- Llama 3 8B: Here the RTX 4000 Ada has a substantial lead, processing prompts about 3.4 times faster than the M3 Max at Q4_K_M (2310.53 vs. 678.04 tokens/second) and about 3.9 times faster at F16 (2951.87 vs. 751.49).
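To see how processing and generation speeds combine, here is a rough Python sketch that estimates end-to-end response time for Llama 3 8B Q4_K_M using the figures from Tables 1 and 2. The latency model (prompt time plus generation time, ignoring model loading and other overheads) and the 4000-token prompt with a 200-token answer are assumptions made for illustration, not measurements.

```python
# Speeds (tokens/second) copied from Tables 1 and 2 for Llama 3 8B Q4_K_M.
SPEEDS = {
    "M3 Max": {"prompt_tps": 678.04, "generation_tps": 50.74},
    "RTX 4000 Ada": {"prompt_tps": 2310.53, "generation_tps": 58.59},
}


def estimate_latency_seconds(prompt_tokens, output_tokens, prompt_tps, generation_tps):
    """Rough latency model: prompt phase plus generation phase, no overheads."""
    return prompt_tokens / prompt_tps + output_tokens / generation_tps


# Hypothetical workload: a long prompt followed by a short answer.
for device, s in SPEEDS.items():
    seconds = estimate_latency_seconds(4000, 200, s["prompt_tps"], s["generation_tps"])
    print(f"{device}: {seconds:.1f} s")
```

Under these assumptions the estimate is roughly 9.8 seconds on the M3 Max and 5.1 seconds on the RTX 4000 Ada. With a short prompt and a long answer, generation time dominates and the gap narrows considerably.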

Comparison of M3 Max and RTX 4000 Ada for Larger Models: Llama 3 70B

We have no Llama 3 70B data for either the M3 Max or the RTX 4000 Ada, so no comparison can be drawn for this model.

Choosing the Right Horse: When to use M3 Max and RTX 4000 Ada

M3 Max: The All-around Champion for Smaller Models

The M3 Max shines when dealing with smaller models. It offers excellent token generation and processing speeds, making it a great choice for everyday use cases with Llama 2 7B. Think:

- Developers experimenting with small LLMs: The M3 Max provides a balanced performance and efficient resource utilization, perfect for experimentation and prototyping.

- Content creators using LLMs for tasks like summarization or translation: Its power efficiency translates to longer runtime on battery power, allowing for mobile content creation.

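- Users with budget restrictions: The M3 Max ships as part of a complete machine, while a dedicated GPU also needs a host workstation. If you would otherwise have to buy that workstation, an M3 Max Mac can be a cost-effective all-in-one option, but compare total system cost for your configuration.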

RTX 4000 Ada: The Prompt Processing Beast

In this data, the RTX 4000 Ada's advantage shows up on Llama 3 8B, where it processes prompts more than three times faster than the M3 Max. That makes it a strong fit for:

- Researchers and developers working with prompt-heavy workloads: Fast prompt processing and CUDA support suit experimentation and fine-tuning of models that fit within 20GB.

- Businesses requiring high-performance AI applications: Its prompt processing speed helps when serving requests with long inputs.

- Gamers: The RTX 4000 Ada can run games, but it is a workstation card, so gaming is a secondary benefit rather than a reason to choose it.

Key Factors for Choosing the Right Device

Now that we've examined the performance of each device, let's delve into the key factors to consider when making your decision:

1. Model Size and Complexity

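The first and foremost factor is the size and complexity of the LLM you plan to use. On Llama 3 8B, the RTX 4000 Ada is far faster at prompt processing, while the M3 Max runs smaller LLMs like Llama 2 7B with ease. Also check that the model fits in memory: the RTX 4000 Ada has 20GB, whereas the M3 Max can be configured with considerably more unified memory.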

2. Performance Requirements

If prompt processing speed matters most, for example with long prompts, the RTX 4000 Ada is the clear winner on Llama 3 8B. For token generation the two devices are close, with the RTX 4000 Ada ahead at Q4_K_M and the M3 Max slightly ahead at F16, so the M3 Max may be sufficient for less prompt-heavy use cases.

3. Budget

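The RTX 4000 Ada is a professional GPU with a significant price tag, and it needs a host workstation. The M3 Max is sold only as part of a complete Mac. Compare the total system cost for the configuration you need rather than assuming either option is cheaper.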

4. Power Consumption and Efficiency

The M3 Max's Apple silicon architecture prioritizes efficiency. This translates to lower power consumption and potentially quieter operation, making it ideal for mobile workstations or users who value energy savings.

5. Ecosystem and Software Compatibility

Apple's M3 Max is integrated into Apple's ecosystem, offering seamless integration with other Mac devices and software. The RTX 4000 Ada works on Windows and Linux and gives access to NVIDIA's CUDA ecosystem, but it is not supported on current macOS.

6. Future-Proofing

Both the M3 Max and RTX 4000 Ada represent cutting-edge technology. However, the rapid advancements in AI require a device that can keep up. The RTX 4000 Ada's architecture and NVIDIA's commitment to AI advancements suggest it might have a slight edge in terms of future-proofing.

FAQ: Addressing Your Burning Questions

1. Can I run Llama 2 7B on both devices?

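Yes, both the M3 Max and RTX 4000 Ada can handle Llama 2 7B. Even at F16 the weights take roughly 14GB, which fits within the RTX 4000 Ada's 20GB. The benchmark data here covers only the M3 Max for this model, so it does not show which device is faster.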

2. What's the difference between F16, Q8_0, and Q4_0?

These are model weight formats with different precision. F16 stores each weight as a 16-bit floating point number; it is the most precise of the three and also the largest and slowest to generate with. Q8_0 is 8-bit quantization, an intermediate option. Q4_0 is 4-bit quantization, which provides the highest compression and the fastest generation in the tables above, at some cost in accuracy. Q4_K_M, used for the Llama 3 8B results, is another 4-bit variant.
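As a rough illustration of what these formats mean for memory, the sketch below estimates the size of the weights alone. The bits-per-weight values assume the llama.cpp block layout for Q8_0 and Q4_0 (32 weights per block plus one 16-bit scale), and the parameter counts are nominal (7e9 and 70e9). Real model files differ somewhat, and the KV cache and runtime buffers need additional memory.

```python
# Approximate bits per weight. Q8_0 and Q4_0 store 32 weights per block plus
# one 16-bit scale, which adds 0.5 bits per weight on top of 8 or 4 bits.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_size_gb(n_params, fmt):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


# Nominal parameter counts, used here only for illustration.
for name, n_params in [("7B", 7e9), ("70B", 70e9)]:
    for fmt in BITS_PER_WEIGHT:
        print(f"{name} {fmt}: ~{weight_size_gb(n_params, fmt):.1f} GB")
```

By this estimate a 7B model needs about 14 GB at F16 and about 4 GB at Q4_0, while a 70B model needs about 39 GB even at Q4_0, which is more than the RTX 4000 Ada's 20GB of dedicated memory.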

3. Can I train LLMs on the M3 Max or RTX 4000 Ada?

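Both devices can train or fine-tune small models. Neither is designed for large-scale training, which typically calls for data-center hardware, but the RTX 4000 Ada benefits from the CUDA-based tooling that most training frameworks target, within the limits of its 20GB of memory.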

4. What are the limitations of using a Mac for AI?

While Macs with Apple silicon are powerful, they can be limited in terms of software compatibility and access to specialized AI frameworks. NVIDIA GPUs have broader software support and access to tools often preferred by AI developers.

Keywords

Apple M3 Max, NVIDIA RTX 4000 Ada, LLM, Llama 2, Llama 3, Token Speed, Token Generation, Token Processing, Quantization, F16, Q8_0, Q4_0, AI, Machine Learning, Performance Comparison, GPU, CPU, Local LLMs, Developers, Researchers, Content Creators, Budget, Power Consumption, Ecosystem, Software Compatibility, Future-Proofing