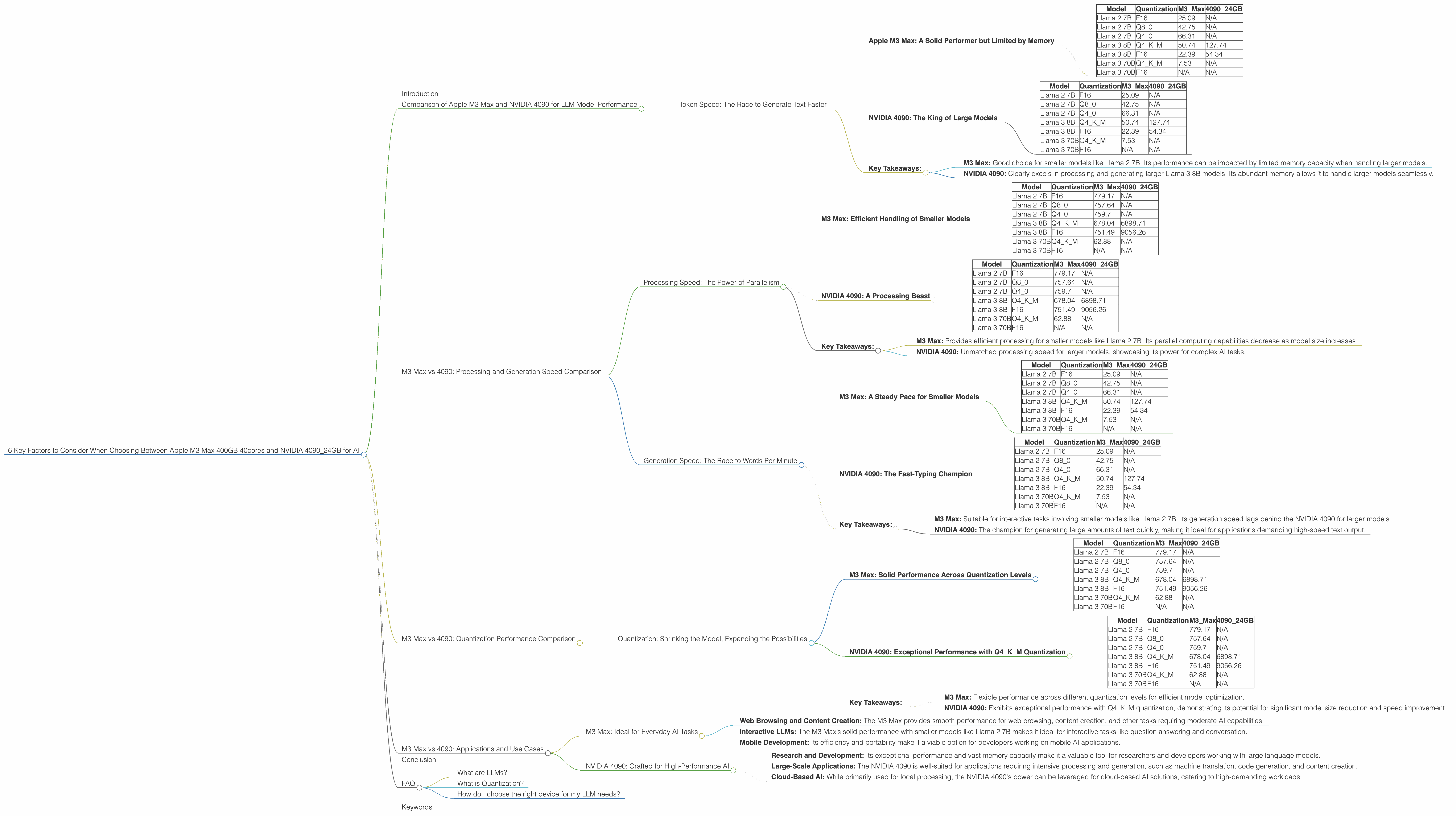

6 Key Factors to Consider When Choosing Between Apple M3 Max (400 GB/s, 40-Core GPU) and NVIDIA RTX 4090 24GB for AI

Introduction

The world of large language models (LLMs) is booming, and with it the demand for hardware that can run these models locally. Two popular options are Apple's M3 Max system-on-chip and NVIDIA's GeForce RTX 4090 graphics card. They have different strengths and weaknesses, which makes the choice difficult for developers and enthusiasts.

This article compares how the two devices run popular LLMs, specifically Llama 2 and Llama 3. We analyze key performance metrics, break down each device's strengths and weaknesses, and give practical recommendations for different use cases.

Comparison of Apple M3 Max and NVIDIA 4090 for LLM Performance

Token Speed: The Race to Generate Text Faster

Token speed measures how many tokens (words or word fragments) a device can process or generate per second (tokens/s). It directly affects how quickly a model can generate text, answer questions, and perform other LLM tasks. Let's look at the numbers and see who comes out on top.
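A tokens/s figure is tokens produced divided by elapsed time. The sketch below is a minimal illustration, not any benchmark tool's actual method. It times a stream of tokens and separates two things: the wait for the first token, and the steady generation rate that follows. The `fake_stream` generator is hypothetical. It stands in for a real model's token iterator, and its delays are made-up values.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(stream: Iterable[str]) -> Tuple[float, float]:
    """Return (seconds until first token, generation rate in tokens/s).

    The rate counts only tokens after the first one, so the time spent
    reading the prompt does not drag the generation number down.
    """
    start = time.perf_counter()
    first_at = None
    count = 0
    for _ in stream:
        count += 1
        if first_at is None:
            first_at = time.perf_counter()
    end = time.perf_counter()

    if first_at is None:  # empty stream: nothing was generated
        return 0.0, 0.0
    gen_time = end - first_at
    rate = (count - 1) / gen_time if count > 1 and gen_time > 0 else 0.0
    return first_at - start, rate


def fake_stream(n_tokens: int, prefill_s: float, per_token_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a model: a delay before output, then steady tokens."""
    time.sleep(prefill_s)
    for i in range(n_tokens):
        time.sleep(per_token_s)
        yield f"tok{i}"


ttft, rate = measure_stream(fake_stream(21, prefill_s=0.05, per_token_s=0.01))
print(f"first token after {ttft:.3f} s, then {rate:.1f} tokens/s")
```

Starting the generation clock at the first token is a deliberate choice. Counting from the start would blend prompt processing into the generation rate.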

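Apple M3 Max: A Solid Performer but Limited by Memory Bandwidth

The Apple M3 Max is known for its impressive single-core performance and power efficiency. The CPU and GPU share its unified memory, so the GPU can use a large share of system memory for model weights. Its weak point for LLMs is memory bandwidth. At 400 GB/s, it moves data far more slowly than the NVIDIA 4090, and bandwidth largely determines how fast tokens are generated.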

Text generation speed (tokens/s; N/A = no result available):

| Model | Quantization | M3 Max | RTX 4090 24GB |
|---|---|---|---|
| Llama 2 7B | F16 | 25.09 | N/A |
| Llama 2 7B | Q8_0 | 42.75 | N/A |
| Llama 2 7B | Q4_0 | 66.31 | N/A |
| Llama 3 8B | Q4_K_M | 50.74 | 127.74 |
| Llama 3 8B | F16 | 22.39 | 54.34 |
| Llama 3 70B | Q4_K_M | 7.53 | N/A |
| Llama 3 70B | F16 | N/A | N/A |

The M3 Max runs Llama 2 7B at 25 to 66 tokens/s depending on quantization, and the smaller formats generate faster. It is also the only one of the two devices with a Llama 3 70B result (7.53 tokens/s at Q4_K_M). Both devices were tested only on Llama 3 8B, and there the M3 Max falls well behind the NVIDIA 4090 in generation (text output) speed.

NVIDIA 4090: The King of Raw Speed

The NVIDIA 4090 is a powerhouse designed for demanding tasks like AI and gaming. Its 24GB of GDDR6X memory provides about 1 TB/s of bandwidth, roughly 2.5 times the M3 Max's 400 GB/s. The trade-off is capacity: a model must fit in those 24GB to run entirely on the GPU.

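Llama 3 8B is the only model with results on both devices. On it, the NVIDIA 4090 generates text about 2.4 to 2.5 times faster than the M3 Max: 127.74 vs 50.74 tokens/s at Q4_K_M, and 54.34 vs 22.39 tokens/s at F16. That ratio closely tracks the ratio of their memory bandwidths. There is no 4090 result for Llama 3 70B. At Q4_K_M, the 70B model's weights alone take roughly 40GB, more than the card's 24GB, so the model cannot run fully on the GPU.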
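A common rule of thumb explains why generation speed follows memory bandwidth. Producing each token requires reading essentially all of the model's weights once, so bandwidth divided by weight size gives a rough upper bound on tokens/s. The sketch below applies that bound to Llama 3 8B under several assumptions:

- weight sizes of about 16 GB at F16 and 4.9 GB at Q4_K_M
- 400 GB/s for this M3 Max configuration
- about 1008 GB/s for the RTX 4090

It ignores the KV cache and compute costs, so treat it as an estimate, not a prediction.

```python
# Rough bandwidth-bound ceiling for token generation:
#   tokens/s <= memory bandwidth (GB/s) / weight bytes read per token (GB)
# Assumed values (approximations, not measurements):
BANDWIDTH_GBPS = {"M3 Max": 400.0, "RTX 4090": 1008.0}
WEIGHTS_GB = {"F16": 16.0, "Q4_K_M": 4.9}  # Llama 3 8B, approximate sizes

# Measured Llama 3 8B generation speeds from the table above (tokens/s).
MEASURED = {
    ("M3 Max", "F16"): 22.39,
    ("M3 Max", "Q4_K_M"): 50.74,
    ("RTX 4090", "F16"): 54.34,
    ("RTX 4090", "Q4_K_M"): 127.74,
}


def ceiling_tokens_per_s(device: str, quant: str) -> float:
    """Upper bound on tokens/s if every weight is read once per token."""
    return BANDWIDTH_GBPS[device] / WEIGHTS_GB[quant]


for (device, quant), measured in MEASURED.items():
    bound = ceiling_tokens_per_s(device, quant)
    print(f"{device:8} {quant:6} measured {measured:7.2f}  ceiling {bound:7.2f}  "
          f"({measured / bound:.0%} of ceiling)")

print(f"bandwidth ratio: {BANDWIDTH_GBPS['RTX 4090'] / BANDWIDTH_GBPS['M3 Max']:.2f}")
```

Every measured value sits below its ceiling, and the F16 runs come closest. This is consistent with generation being limited mainly by memory traffic.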

Key Takeaways:

- M3 Max: A good choice for smaller models like Llama 2 7B. Memory bandwidth holds back its generation speed, but its unified memory lets it run models that do not fit in 24GB of VRAM, such as Llama 3 70B at Q4_K_M.

- NVIDIA 4090: Clearly faster at generating text with Llama 3 8B. Its 24GB of VRAM, however, limits it to models that fit on the card.

M3 Max vs 4090: Processing and Generation Speed Comparison

Processing Speed: The Power of Parallelism

Processing speed (often called prompt processing) is how fast a device reads the input prompt before it starts generating output. It is measured in input tokens per second. It determines how long you wait for the first output token, which matters most for long prompts and documents. Let's compare the processing speeds of the M3 Max and the NVIDIA 4090.
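The two phases run at very different speeds. A simple way to estimate the wait for a full response is to add the prompt processing time to the generation time. The sketch below assumes a hypothetical 1,000-token prompt and 200-token reply, and it uses the Llama 3 8B Q4_K_M speeds reported in this article's tables. It ignores the fact that real speeds shift as the context grows.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Speeds:
    prompt_tps: float      # prompt processing speed, tokens/s
    generation_tps: float  # text generation speed, tokens/s


def response_time(s: Speeds, prompt_tokens: int, output_tokens: int) -> Tuple[float, float]:
    """Return (time to first token, total time) in seconds, using a simple additive model."""
    ttft = prompt_tokens / s.prompt_tps
    total = ttft + output_tokens / s.generation_tps
    return ttft, total


# Llama 3 8B, Q4_K_M figures from this article's tables.
devices = {
    "M3 Max": Speeds(prompt_tps=678.04, generation_tps=50.74),
    "RTX 4090": Speeds(prompt_tps=6898.71, generation_tps=127.74),
}

for name, speeds in devices.items():
    ttft, total = response_time(speeds, prompt_tokens=1000, output_tokens=200)
    print(f"{name}: first token after {ttft:.2f} s, full reply after {total:.2f} s")
```

Under these assumptions, the 4090's advantage is largest in time to first token. That is why prompt processing speed matters most for long inputs.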

M3 Max: Efficient Handling of Smaller Models

The M3 Max's 40-core GPU handles prompt processing for smaller models efficiently.

Prompt processing speed (tokens/s; N/A = no result available):

| Model | Quantization | M3 Max | RTX 4090 24GB |
|---|---|---|---|
| Llama 2 7B | F16 | 779.17 | N/A |
| Llama 2 7B | Q8_0 | 757.64 | N/A |
| Llama 2 7B | Q4_0 | 759.7 | N/A |
| Llama 3 8B | Q4_K_M | 678.04 | 6898.71 |
| Llama 3 8B | F16 | 751.49 | 9056.26 |
| Llama 3 70B | Q4_K_M | 62.88 | N/A |
| Llama 3 70B | F16 | N/A | N/A |

For Llama 2 7B, the M3 Max processes about 760 to 780 tokens/s regardless of quantization. Speed falls as the model grows: Llama 3 8B runs at 678 to 751 tokens/s, and Llama 3 70B drops to 62.88 tokens/s.

NVIDIA 4090: A Processing Beast

The NVIDIA 4090 packs 16,384 CUDA cores. That parallelism pays off in prompt processing, where the device handles many input tokens at once.

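On Llama 3 8B, the NVIDIA 4090 processes prompts roughly 10 to 12 times faster than the M3 Max: 6,898.71 vs 678.04 tokens/s at Q4_K_M, and 9,056.26 vs 751.49 tokens/s at F16. There is no 4090 result for Llama 3 70B, so the two devices cannot be compared on that model.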
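Prompt processing handles many tokens at once, so raw compute limits it more than memory bandwidth does. That is where the 4090's many cores pay off. A common approximation puts a transformer forward pass at about 2 floating-point operations per parameter per token, ignoring attention cost. Applying it to the F16 Llama 3 8B results, with an assumed nominal 8 billion parameters, gives the effective compute each device sustained. Treat the outputs as rough estimates, not hardware specifications.

```python
PARAMS = 8e9            # nominal Llama 3 8B parameter count (approximation)
FLOPS_PER_PARAM = 2     # ~2 FLOPs per parameter per token for a forward pass


def effective_tflops(prompt_tokens_per_s: float, params: float = PARAMS) -> float:
    """Compute rate implied by a prompt processing speed, in TFLOPS."""
    return prompt_tokens_per_s * FLOPS_PER_PARAM * params / 1e12


# F16 prompt processing speeds for Llama 3 8B from the table above (tokens/s).
m3 = effective_tflops(751.49)
rtx = effective_tflops(9056.26)
print(f"M3 Max ~{m3:.1f} TFLOPS, RTX 4090 ~{rtx:.1f} TFLOPS, ratio {rtx / m3:.1f}x")
```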

Key Takeaways:

- M3 Max: Provides efficient prompt processing for smaller models like Llama 2 7B. Its throughput drops sharply as model size increases (62.88 tokens/s on Llama 3 70B).

- NVIDIA 4090: Unmatched prompt processing speed, roughly 10 to 12 times the M3 Max's on Llama 3 8B, which shows its power for compute-heavy AI tasks.

Generation Speed: The Race to Words Per Minute

Generation speed is how fast a device outputs the generated text. It is a crucial factor for interactive applications and real-time tasks, because faster generation leads to a smoother user experience.
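A rough conversion factor links tokens/s to the "words per minute" framing of this section. The sketch below assumes about 0.75 English words per token, a common rule of thumb that varies by tokenizer and text. It applies that factor to a few generation speeds from this article.

```python
WORDS_PER_TOKEN = 0.75  # rough rule of thumb for English text; tokenizer-dependent


def words_per_minute(tokens_per_s: float, words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Convert a generation speed in tokens/s to an approximate words/minute."""
    return tokens_per_s * words_per_token * 60


samples = {
    "M3 Max, Llama 3 70B Q4_K_M": 7.53,
    "M3 Max, Llama 3 8B Q4_K_M": 50.74,
    "RTX 4090, Llama 3 8B Q4_K_M": 127.74,
}
for label, tps in samples.items():
    print(f"{label}: ~{words_per_minute(tps):,.0f} words/min")
```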

M3 Max: A Steady Pace for Smaller Models

The M3 Max generates text for smaller models quickly enough for interactive tasks.

Text generation speed (tokens/s; N/A = no result available):

| Model | Quantization | M3 Max | RTX 4090 24GB |
|---|---|---|---|
| Llama 2 7B | F16 | 25.09 | N/A |
| Llama 2 7B | Q8_0 | 42.75 | N/A |
| Llama 2 7B | Q4_0 | 66.31 | N/A |
| Llama 3 8B | Q4_K_M | 50.74 | 127.74 |
| Llama 3 8B | F16 | 22.39 | 54.34 |
| Llama 3 70B | Q4_K_M | 7.53 | N/A |
| Llama 3 70B | F16 | N/A | N/A |

The M3 Max generates 25.09 to 66.31 tokens/s on Llama 2 7B, fastest at Q4_0. That is quick enough for interactive applications that need prompt responses.

NVIDIA 4090: The Fast-Typing Champion

The NVIDIA 4090 takes the lead in text generation on Llama 3 8B, the only model tested on both devices.

It generates text about 2.5 times as fast as the M3 Max on Llama 3 8B at Q4_K_M, making it an excellent choice for applications that need high-speed text output.

Key Takeaways:

- M3 Max: Suitable for interactive tasks with smaller models like Llama 2 7B. On Llama 3 8B, its generation speed lags well behind the NVIDIA 4090's.

- NVIDIA 4090: The champion for generating large amounts of text quickly, making it ideal for applications demanding high-speed text output.

M3 Max vs 4090: Quantization Performance Comparison

Quantization: Shrinking the Model, Expanding the Possibilities

Quantization reduces the size of an LLM by storing its weights with fewer bits. F16 uses 16 bits per weight, Q8_0 about 8.5, and Q4_0 and Q4_K_M roughly 4.5 to 5. The effect is like recording measurements with fewer decimal places: each value is slightly less precise, but the model becomes far more compact and faster to read from memory.
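The effect on memory is simple arithmetic: weight size ≈ parameter count × bits per weight ÷ 8. The sketch below makes two assumptions:

- nominal parameter counts of 7, 8, and 70 billion
- approximate bits per weight of 16 for F16, 8.5 for Q8_0, 4.5 for Q4_0, and about 4.85 for Q4_K_M, which mixes block types

It counts weights only. The KV cache and runtime buffers need extra memory on top.

```python
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.85}  # approximate
VRAM_4090_GB = 24


def weights_gb(params: float, quant: str) -> float:
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return params * BITS_PER_WEIGHT[quant] / 8 / 1e9


models = [
    ("Llama 2 7B", 7e9, "F16"),
    ("Llama 2 7B", 7e9, "Q4_0"),
    ("Llama 3 8B", 8e9, "F16"),
    ("Llama 3 8B", 8e9, "Q4_K_M"),
    ("Llama 3 70B", 70e9, "Q4_K_M"),
    ("Llama 3 70B", 70e9, "F16"),
]
for name, params, quant in models:
    size = weights_gb(params, quant)
    fits = "fits" if size < VRAM_4090_GB else "does not fit"
    print(f"{name:12} {quant:7} ~{size:6.1f} GB -> {fits} in 24GB of VRAM (weights only)")
```

The 70B rows are consistent with the gaps in the tables. At Q4_K_M, the weights exceed 24GB. At F16, they take about 140 GB, which is larger than any M3 Max memory configuration.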

M3 Max: Solid Performance Across Quantization Levels

The M3 Max delivers consistent prompt processing speeds across quantization levels, indicating its adaptability to different optimization strategies.

Prompt processing speed (tokens/s; N/A = no result available):

| Model | Quantization | M3 Max | RTX 4090 24GB |
|---|---|---|---|
| Llama 2 7B | F16 | 779.17 | N/A |
| Llama 2 7B | Q8_0 | 757.64 | N/A |
| Llama 2 7B | Q4_0 | 759.7 | N/A |
| Llama 3 8B | Q4_K_M | 678.04 | 6898.71 |
| Llama 3 8B | F16 | 751.49 | 9056.26 |
| Llama 3 70B | Q4_K_M | 62.88 | N/A |
| Llama 3 70B | F16 | N/A | N/A |

For Llama 2 7B, the M3 Max processes prompts at nearly the same speed in F16, Q8_0, and Q4_0 (757.64 to 779.17 tokens/s). For Llama 3 8B, Q4_K_M is only modestly slower than F16 (678.04 vs 751.49 tokens/s). In other words, quantization costs the M3 Max little in prompt processing. It does, however, substantially speed up generation: 66.31 tokens/s at Q4_0 vs 25.09 at F16 for Llama 2 7B.

NVIDIA 4090: Exceptional Generation Speed with Q4_K_M Quantization

The NVIDIA 4090 reaches its fastest text generation with Q4_K_M quantization, which shrinks the model to roughly a third of its F16 size.

With Q4_K_M, the NVIDIA 4090 processes Llama 3 8B prompts at 6,898.71 tokens/s, somewhat slower than its 9,056.26 tokens/s at F16. It generates text more than twice as fast, though: 127.74 vs 54.34 tokens/s. There is no 4090 result for Llama 3 70B at either precision.
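Dividing the Q4_K_M results by the F16 results for Llama 3 8B shows where quantization helps. The sketch below uses only figures from this article's tables.

```python
# Llama 3 8B speeds from this article's tables (tokens/s).
results = {
    "M3 Max": {"prompt": {"F16": 751.49, "Q4_K_M": 678.04},
               "generation": {"F16": 22.39, "Q4_K_M": 50.74}},
    "RTX 4090": {"prompt": {"F16": 9056.26, "Q4_K_M": 6898.71},
                 "generation": {"F16": 54.34, "Q4_K_M": 127.74}},
}


def quant_speedup(device: str, phase: str) -> float:
    """Q4_K_M speed divided by F16 speed; above 1.0 means the quantized model is faster."""
    r = results[device][phase]
    return r["Q4_K_M"] / r["F16"]


for device in results:
    for phase in ("prompt", "generation"):
        print(f"{device:8} {phase:10} x{quant_speedup(device, phase):.2f}")
```

On both devices, quantization more than doubles generation speed, because fewer bytes are read per token. It slightly slows prompt processing, likely because that phase is compute-bound and the extra work of unpacking compressed weights is not offset by bandwidth savings.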

Key Takeaways:

- M3 Max: Prompt processing speed stays steady across quantization levels, and lower-bit formats speed up generation considerably.

- NVIDIA 4090: Q4_K_M more than doubles generation speed compared with F16, at a modest cost in prompt processing speed.

M3 Max vs 4090: Applications and Use Cases

M3 Max: Ideal for Everyday AI Tasks

The Apple M3 Max offers a balance of performance and efficiency, making it a suitable choice for everyday AI tasks:

- Web Browsing and Content Creation: The M3 Max provides smooth performance for web browsing, content creation, and other tasks requiring moderate AI capabilities.

- Interactive LLMs: The M3 Max’s solid performance with smaller models like Llama 2 7B makes it ideal for interactive tasks like question answering and conversation.

- Mobile Development: Its efficiency and portability make it a viable option for developers working on mobile AI applications.

NVIDIA 4090: Crafted for High-Performance AI

The NVIDIA 4090 is a true powerhouse, designed for demanding AI tasks:

- Research and Development: Its exceptional speed makes it a valuable tool for researchers and developers working with large language models that fit in its 24GB of VRAM.

- Large-Scale Applications: The NVIDIA 4090 is well-suited for applications requiring intensive processing and generation, such as machine translation, code generation, and content creation.

- Cloud-Based AI: Although it is mainly used for local processing, the NVIDIA 4090 can also power cloud-based AI services for highly demanding workloads.

Conclusion

The choice between the Apple M3 Max and the NVIDIA 4090 for running LLMs comes down to your specific needs and budget. The M3 Max offers a balanced, power-efficient solution whose unified memory can hold models too large for a 24GB card. The NVIDIA 4090 is a professional-grade tool that is much faster on models that fit in its VRAM. Both devices offer unique strengths, so the choice depends on which of your requirements matter most.

FAQ

What are LLMs?

LLMs are neural network models trained on massive datasets of text and code. This training enables them to generate text, translate languages, write different kinds of creative content, and answer questions informatively.

What is Quantization?

Quantization is a technique that reduces the size of an LLM by representing its numbers (the model's weights) with fewer bits. Imagine rounding every entry in a huge table of measurements to fewer decimal places. Each value becomes slightly less precise, but the table takes up far less space and is faster to read.
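Here is a minimal sketch of symmetric block quantization that makes the idea concrete. It is modeled loosely on llama.cpp's Q8_0 format: weights are split into blocks of 32, each block stores one scale, and every weight becomes a small integer between -127 and 127. This is an illustration in plain Python, not the library's actual code, and the sample weights are random made-up values.

```python
import random
from typing import List, Tuple

BLOCK_SIZE = 32


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map a block of floats to one scale plus integers in [-127, 127]."""
    amax = max(abs(w) for w in weights)
    scale = amax / 127 if amax > 0 else 0.0
    inv = 1.0 / scale if scale > 0 else 0.0
    # Round to the nearest integer and clamp to the 8-bit range used here.
    q = [max(-127, min(127, round(w * inv))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate weights by multiplying each integer by the block scale."""
    return [scale * v for v in q]


random.seed(0)
block = [random.uniform(-1.0, 1.0) for _ in range(BLOCK_SIZE)]
scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(block, restored))

# Q8_0 stores 32 one-byte integers plus a 16-bit scale per block.
bits_per_weight = (BLOCK_SIZE * 8 + 16) / BLOCK_SIZE
print(f"scale={scale:.5f}  max error={max_error:.5f}  bits/weight={bits_per_weight}")
```

Each weight's rounding error is at most half of its block's scale. A block of small weights therefore gets a small scale and keeps fine precision.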

How do I choose the right device for my LLM needs?

Before making a decision, consider three things: the size of the model you want to run and whether it fits in the device's memory, your budget, and how fast your application needs responses.

Keywords

LLM, Apple M3 Max, NVIDIA 4090, token speed, processing speed, generation speed, quantization, performance, comparison, AI, machine learning, deep learning, developer, developer tools, hardware, hardware comparison, Llama 2, Llama 3, applications, use cases, FAQ.