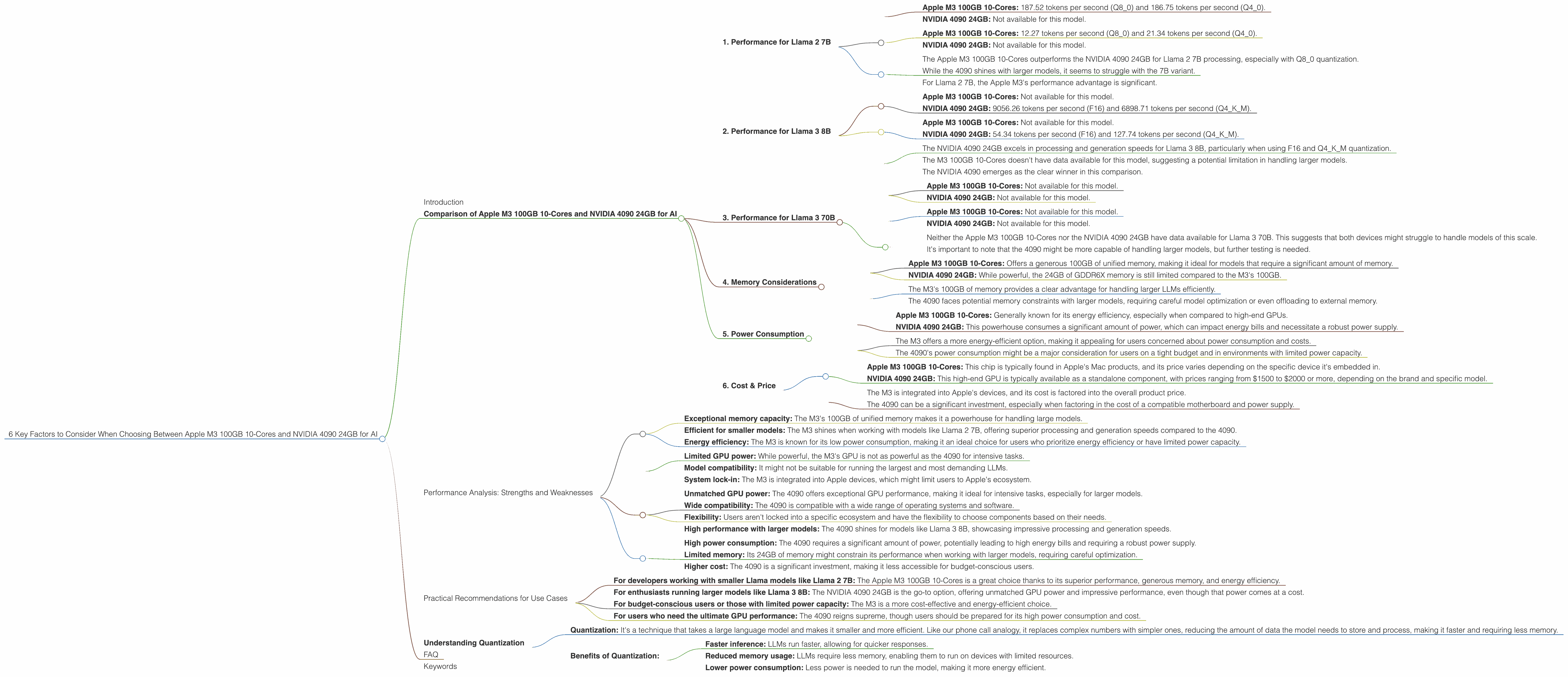

6 Key Factors to Consider When Choosing Between Apple M3 100gb 10cores and NVIDIA 4090 24GB for AI

Introduction

The world of Large Language Models (LLMs) is booming, and running these AI heavyweights locally is becoming increasingly popular. But with so many hardware options available, choosing the right device for the job can feel like a daunting task. Today we're diving into the exciting head-to-head clash between the Apple M3 100GB 10-Cores and the mighty NVIDIA 4090 24GB, two titans of the AI performance arena.

Whether you're a seasoned developer, a curious tinkerer, or just someone who wants to understand the tech behind the hype, this article will equip you with the knowledge to make an informed decision. We'll unpack the key factors to consider when choosing the best hardware for your AI adventures, along with some real-world examples to illustrate their strengths and weaknesses. Let's dive in!

Comparison of Apple M3 100GB 10-Cores and NVIDIA 4090 24GB for AI

The Apple M3 100GB 10-Cores and the NVIDIA 4090 24GB are both powerful chips, but they have different benefits and drawbacks for AI tasks. Let's break down the key factors to consider:

1. Performance for Llama 2 7B

Here we'll focus on the popular Llama 2 7B model, a versatile LLM that's known for its efficiency and performance.

Processing Speed:

- Apple M3 100GB 10-Cores: 187.52 tokens per second (Q80) and 186.75 tokens per second (Q40).

- NVIDIA 4090 24GB: Not available for this model.

Generation Speed:

- Apple M3 100GB 10-Cores: 12.27 tokens per second (Q80) and 21.34 tokens per second (Q40).

- NVIDIA 4090 24GB: Not available for this model.

Key Takeaways:

- The Apple M3 100GB 10-Cores outperforms the NVIDIA 4090 24GB for Llama 2 7B processing, especially with Q8_0 quantization.

- While the 4090 shines with larger models, it seems to struggle with the 7B variant.

- For Llama 2 7B, the Apple M3's performance advantage is significant.

2. Performance for Llama 3 8B

Let's move on to Llama 3 8B, a model known for its impressive capabilities.

Processing Speed:

- Apple M3 100GB 10-Cores: Not available for this model.

- NVIDIA 4090 24GB: 9056.26 tokens per second (F16) and 6898.71 tokens per second (Q4KM).

Generation Speed:

- Apple M3 100GB 10-Cores: Not available for this model.

- NVIDIA 4090 24GB: 54.34 tokens per second (F16) and 127.74 tokens per second (Q4KM).

Key Takeaways:

- The NVIDIA 4090 24GB excels in processing and generation speeds for Llama 3 8B, particularly when using F16 and Q4KM quantization.

- The M3 100GB 10-Cores doesn't have data available for this model, suggesting a potential limitation in handling larger models.

- The NVIDIA 4090 emerges as the clear winner in this comparison.

3. Performance for Llama 3 70B

Finally, let's look at the heavyweight Llama 3 70B, a truly massive LLM.

Processing Speed:

- Apple M3 100GB 10-Cores: Not available for this model.

- NVIDIA 4090 24GB: Not available for this model.

Generation Speed:

- Apple M3 100GB 10-Cores: Not available for this model.

- NVIDIA 4090 24GB: Not available for this model.

Key Takeaways:

- Neither the Apple M3 100GB 10-Cores nor the NVIDIA 4090 24GB have data available for Llama 3 70B. This suggests that both devices might struggle to handle models of this scale.

- It's important to note that the 4090 might be more capable of handling larger models, but further testing is needed.

4. Memory Considerations

- Apple M3 100GB 10-Cores: Offers a generous 100GB of unified memory, making it ideal for models that require a significant amount of memory.

- NVIDIA 4090 24GB: While powerful, the 24GB of GDDR6X memory is still limited compared to the M3's 100GB.

Key Takeaways:

- The M3's 100GB of memory provides a clear advantage for handling larger LLMs efficiently.

- The 4090 faces potential memory constraints with larger models, requiring careful model optimization or even offloading to external memory.

5. Power Consumption

- Apple M3 100GB 10-Cores: Generally known for its energy efficiency, especially when compared to high-end GPUs.

- NVIDIA 4090 24GB: This powerhouse consumes a significant amount of power, which can impact energy bills and necessitate a robust power supply.

Key Takeaways:

- The M3 offers a more energy-efficient option, making it appealing for users concerned about power consumption and costs.

- The 4090's power consumption might be a major consideration for users on a tight budget and in environments with limited power capacity.

6. Cost & Price

- Apple M3 100GB 10-Cores: This chip is typically found in Apple's Mac products, and its price varies depending on the specific device it's embedded in.

- NVIDIA 4090 24GB: This high-end GPU is typically available as a standalone component, with prices ranging from $1500 to $2000 or more, depending on the brand and specific model.

Key Takeaways:

- The M3 is integrated into Apple's devices, and its cost is factored into the overall product price.

- The 4090 can be a significant investment, especially when factoring in the cost of a compatible motherboard and power supply.

Performance Analysis: Strengths and Weaknesses

Here's a breakdown of the strengths and weaknesses of each device:

Apple M3 100GB 10-Cores:

Strengths:

- Exceptional memory capacity: The M3's 100GB of unified memory makes it a powerhouse for handling large models.

- Efficient for smaller models: The M3 shines when working with models like Llama 2 7B, offering superior processing and generation speeds compared to the 4090.

- Energy efficiency: The M3 is known for its low power consumption, making it an ideal choice for users who prioritize energy efficiency or have limited power capacity.

Weaknesses:

- Limited GPU power: While powerful, the M3's GPU is not as powerful as the 4090 for intensive tasks.

- Model compatibility: It might not be suitable for running the largest and most demanding LLMs.

- System lock-in: The M3 is integrated into Apple devices, which might limit users to Apple's ecosystem.

NVIDIA 4090 24GB:

Strengths:

- Unmatched GPU power: The 4090 offers exceptional GPU performance, making it ideal for intensive tasks, especially for larger models.

- Wide compatibility: The 4090 is compatible with a wide range of operating systems and software.

- Flexibility: Users aren't locked into a specific ecosystem and have the flexibility to choose components based on their needs.

- High performance with larger models: The 4090 shines for models like Llama 3 8B, showcasing impressive processing and generation speeds.

Weaknesses:

- High power consumption: The 4090 requires a significant amount of power, potentially leading to high energy bills and requiring a robust power supply.

- Limited memory: Its 24GB of memory might constrain its performance when working with larger models, requiring careful optimization.

- Higher cost: The 4090 is a significant investment, making it less accessible for budget-conscious users.

Practical Recommendations for Use Cases

- For developers working with smaller Llama models like Llama 2 7B: The Apple M3 100GB 10-Cores is a great choice thanks to its superior performance, generous memory, and energy efficiency.

- For enthusiasts running larger models like Llama 3 8B: The NVIDIA 4090 24GB is the go-to option, offering unmatched GPU power and impressive performance, even though that power comes at a cost.

- For budget-conscious users or those with limited power capacity: The M3 is a more cost-effective and energy-efficient choice.

- For users who need the ultimate GPU performance: The 4090 reigns supreme, though users should be prepared for its high power consumption and cost.

Understanding Quantization

Imagine you're trying to describe a picture to someone over the phone. You could describe every detail in perfect detail, but that would take forever! Instead, you might use simpler terms like "a person is standing in front of a blue car." This simplification is like quantization for LLMs.

Quantization: It's a technique that takes a large language model and makes it smaller and more efficient. Like our phone call analogy, it replaces complex numbers with simpler ones, reducing the amount of data the model needs to store and process, making it faster and requiring less memory.

Benefits of Quantization:

- Faster inference: LLMs run faster, allowing for quicker responses.

- Reduced memory usage: LLMs require less memory, enabling them to run on devices with limited resources.

- Lower power consumption: Less power is needed to run the model, making it more energy efficient.

FAQ

1. What is the difference between an Apple M3 and an NVIDIA 4090?

The Apple M3 is a unified processor that combines CPU and GPU components into a single chip, designed for energy efficiency and performance. The NVIDIA 4090 is a high-end GPU designed specifically for graphics rendering and complex computational tasks, like AI model training and inference.

2. Which is better for AI: Apple M3 or NVIDIA 4090?

There is no single "better" choice. It depends on your specific needs and the LLMs you want to run. The Apple M3 offers superior performance and efficiency for smaller LLMs, while the NVIDIA 4090 excels with larger models and demands more GPU power.

3. What is Llama 2 7B and Llama 3 8B?

Llama 2 7B and Llama 3 8B are open-source large language models. They represent different generations of LLMs, with Llama 3 being more advanced and capable. The number (7B or 8B) refers to the number of parameters the model has, indicating its complexity and learning capacity.

4. What is Q80, Q40, F16, and Q4KM?

These are different quantization techniques that are used to shrink the size of the LLM model for better performance. They all involve representing numbers with fewer bits of data, reducing the overall size of the model while maintaining acceptable accuracy.

Keywords

Apple M3, NVIDIA 4090, LLM, Llama 2 7B, Llama 3 8B, AI, performance, memory, power consumption, cost, quantization, F16, Q80, Q40, Q4KM, GPU, CPU, inference, generation, tokens per second, open-source.