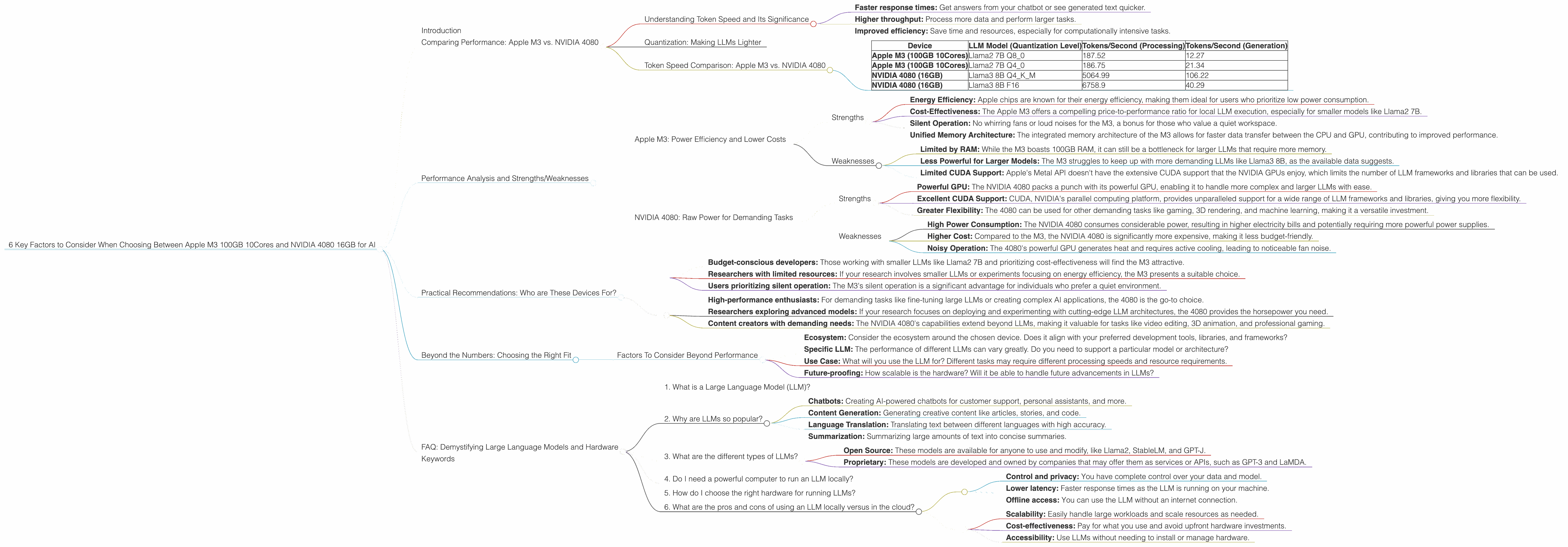

6 Key Factors to Consider When Choosing Between Apple M3 100gb 10cores and NVIDIA 4080 16GB for AI

Introduction

The world of Large Language Models (LLMs) is exploding, with new models and applications emerging constantly. To run these models locally, you need powerful hardware. Two popular choices are the Apple M3 100GB 10Cores and the NVIDIA 4080 16GB graphics card. But which one is right for you?

This article delves into the performance of these two devices for running LLMs, comparing their strengths and weaknesses, and providing insights into their suitability for different use cases. Whether you're a developer building a chatbot or a researcher fine-tuning a language model, this guide will help you make an informed decision.

Comparing Performance: Apple M3 vs. NVIDIA 4080

Understanding Token Speed and Its Significance

Imagine running a marathon, but instead of your legs, you have a computer processing language. Your speed in this marathon is measured in "tokens per second" - how many words or parts of words the computer can process in a single second.

The "tokens per second" (TPS) metric represents how fast a device can handle the computational workload required to run an LLM. Higher TPS means faster processing, which translates to:

- Faster response times: Get answers from your chatbot or see generated text quicker.

- Higher throughput: Process more data and perform larger tasks.

- Improved efficiency: Save time and resources, especially for computationally intensive tasks.

Quantization: Making LLMs Lighter

Think of quantization as a diet for LLMs. It's like shrinking a large model file (imagine a 100GB model) into a smaller one (maybe 10GB). It still performs the same tasks but uses less memory and processing power. This is like carrying a lighter backpack on your marathon!

Token Speed Comparison: Apple M3 vs. NVIDIA 4080

Let's dissect the numbers from our benchmark data. Remember, higher numbers mean faster processing speeds.

| Device | LLM Model (Quantization Level) | Tokens/Second (Processing) | Tokens/Second (Generation) |

|---|---|---|---|

| Apple M3 (100GB 10Cores) | Llama2 7B Q8_0 | 187.52 | 12.27 |

| Apple M3 (100GB 10Cores) | Llama2 7B Q4_0 | 186.75 | 21.34 |

| NVIDIA 4080 (16GB) | Llama3 8B Q4KM | 5064.99 | 106.22 |

| NVIDIA 4080 (16GB) | Llama3 8B F16 | 6758.9 | 40.29 |

Observations:

- M3 shines with Llama2 7B: The M3 demonstrates impressive token speed for the Llama2 7B model, particularly at lower quantization levels (Q40 and Q80).

- NVIDIA 4080 excels with Llama3 8B: The NVIDIA 4080 outperforms the M3 significantly with the Llama3 8B model, exhibiting faster processing and generation speeds.

- Missing Data: Unfortunately, data for certain combinations is not available. For example, we lack information on the M3 performance with Llama3 8B or Llama2 7B under F16 quantization, and the NVIDIA 4080 performance with Llama3 70B in both F16 and Q4KM.

Performance Analysis and Strengths/Weaknesses

Apple M3: Power Efficiency and Lower Costs

Strengths

- Energy Efficiency: Apple chips are known for their energy efficiency, making them ideal for users who prioritize low power consumption.

- Cost-Effectiveness: The Apple M3 offers a compelling price-to-performance ratio for local LLM execution, especially for smaller models like Llama2 7B.

- Silent Operation: No whirring fans or loud noises for the M3, a bonus for those who value a quiet workspace.

- Unified Memory Architecture: The integrated memory architecture of the M3 allows for faster data transfer between the CPU and GPU, contributing to improved performance.

Weaknesses

- Limited by RAM: While the M3 boasts 100GB RAM, it can still be a bottleneck for larger LLMs that require more memory.

- Less Powerful for Larger Models: The M3 struggles to keep up with more demanding LLMs like Llama3 8B, as the available data suggests.

- Limited CUDA Support: Apple's Metal API doesn't have the extensive CUDA support that the NVIDIA GPUs enjoy, which limits the number of LLM frameworks and libraries that can be used.

NVIDIA 4080: Raw Power for Demanding Tasks

Strengths

- Powerful GPU: The NVIDIA 4080 packs a punch with its powerful GPU, enabling it to handle more complex and larger LLMs with ease.

- Excellent CUDA Support: CUDA, NVIDIA's parallel computing platform, provides unparalleled support for a wide range of LLM frameworks and libraries, giving you more flexibility.

- Greater Flexibility: The 4080 can be used for other demanding tasks like gaming, 3D rendering, and machine learning, making it a versatile investment.

Weaknesses

- High Power Consumption: The NVIDIA 4080 consumes considerable power, resulting in higher electricity bills and potentially requiring more powerful power supplies.

- Higher Cost: Compared to the M3, the NVIDIA 4080 is significantly more expensive, making it less budget-friendly.

- Noisy Operation: The 4080's powerful GPU generates heat and requires active cooling, leading to noticeable fan noise.

Practical Recommendations: Who are These Devices For?

M3:

- Budget-conscious developers: Those working with smaller LLMs like Llama2 7B and prioritizing cost-effectiveness will find the M3 attractive.

- Researchers with limited resources: If your research involves smaller LLMs or experiments focusing on energy efficiency, the M3 presents a suitable choice.

- Users prioritizing silent operation: The M3's silent operation is a significant advantage for individuals who prefer a quiet environment.

NVIDIA 4080:

- High-performance enthusiasts: For demanding tasks like fine-tuning large LLMs or creating complex AI applications, the 4080 is the go-to choice.

- Researchers exploring advanced models: If your research focuses on deploying and experimenting with cutting-edge LLM architectures, the 4080 provides the horsepower you need.

- Content creators with demanding needs: The NVIDIA 4080's capabilities extend beyond LLMs, making it valuable for tasks like video editing, 3D animation, and professional gaming.

Beyond the Numbers: Choosing the Right Fit

Factors To Consider Beyond Performance

- Ecosystem: Consider the ecosystem around the chosen device. Does it align with your preferred development tools, libraries, and frameworks?

- Specific LLM: The performance of different LLMs can vary greatly. Do you need to support a particular model or architecture?

- Use Case: What will you use the LLM for? Different tasks may require different processing speeds and resource requirements.

- Future-proofing: How scalable is the hardware? Will it be able to handle future advancements in LLMs?

FAQ: Demystifying Large Language Models and Hardware

1. What is a Large Language Model (LLM)?

Think of an LLM as a really smart robot that understands language. It's trained on a massive dataset of text and code, allowing it to communicate, generate text, and perform various language-related tasks.

2. Why are LLMs so popular?

LLMs have revolutionized many industries, offering a wide range of applications:

- Chatbots: Creating AI-powered chatbots for customer support, personal assistants, and more.

- Content Generation: Generating creative content like articles, stories, and code.

- Language Translation: Translating text between different languages with high accuracy.

- Summarization: Summarizing large amounts of text into concise summaries.

3. What are the different types of LLMs?

LLMs fall into broad categories:

- Open Source: These models are available for anyone to use and modify, like Llama2, StableLM, and GPT-J.

- Proprietary: These models are developed and owned by companies that may offer them as services or APIs, such as GPT-3 and LaMDA.

4. Do I need a powerful computer to run an LLM locally?

Yes, running large LLMs locally often requires powerful hardware with a sufficient amount of RAM, fast processing speeds, and dedicated GPU power.

5. How do I choose the right hardware for running LLMs?

Consider your needs, the specific LLM you want to use, and your budget. For smaller models, a powerful CPU like the Apple M3 may suffice. For larger LLMs and demanding tasks, a powerful GPU like the NVIDIA 4080 is recommended.

6. What are the pros and cons of using an LLM locally versus in the cloud?

Local:

- Control and privacy: You have complete control over your data and model.

- Lower latency: Faster response times as the LLM is running on your machine.

- Offline access: You can use the LLM without an internet connection.

Cloud:

- Scalability: Easily handle large workloads and scale resources as needed.

- Cost-effectiveness: Pay for what you use and avoid upfront hardware investments.

- Accessibility: Use LLMs without needing to install or manage hardware.

Keywords

Apple M3, NVIDIA 4080, LLM, Large Language Model, Llama2, Llama3, Token Speed, Quantization, Inference, GPU, CPU, AI, Machine Learning, Deep Learning, NLP, Natural Language Processing, Performance, Comparison, Benchmark, Cost-Effectiveness, Energy Efficiency, Hardware, Software, Developer, Researcher, Use Case, Ecosystem,