6 Key Factors to Consider When Choosing Between Apple M2 100gb 10cores and NVIDIA A40 48GB for AI

Introduction

The world of large language models (LLMs) is exploding, and with it comes the need for powerful hardware capable of running these complex AI models. Two popular contenders in the hardware race are the Apple M2 100GB 10cores chip and the NVIDIA A40_48GB GPU. Both offer impressive performance, but their strengths and weaknesses vary depending on the specific LLM and use case.

This article will delve into the crucial factors to consider when choosing between the Apple M2 and NVIDIA A40 for your AI projects, providing you with a data-driven comparison and practical recommendations. We'll analyze the performance of these devices on different LLM models, highlighting key differences in token speed generation, memory capacity, and cost, enabling you to make an informed decision for your specific needs.

Performance Analysis: Token Speed Generation Comparison

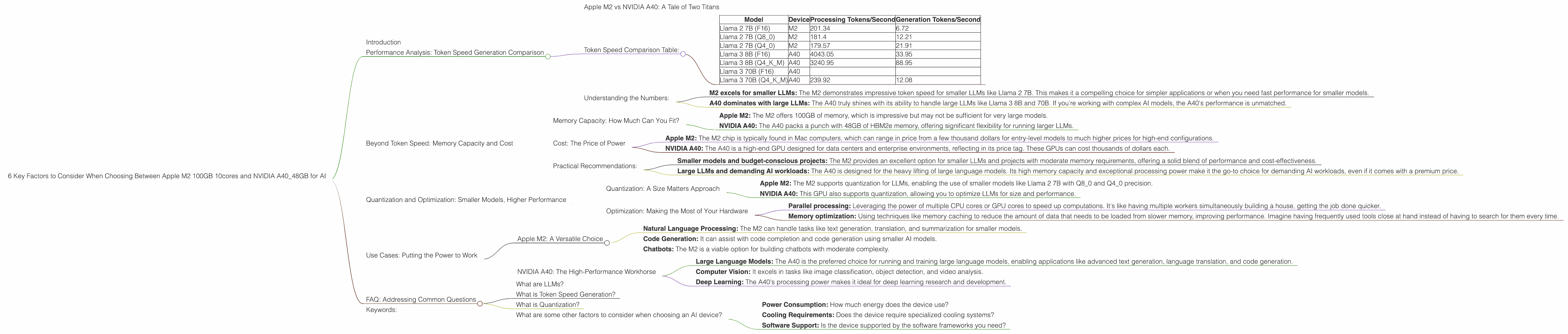

Apple M2 vs NVIDIA A40: A Tale of Two Titans

Let's dive straight into the heart of the matter: token speed generation, a crucial metric for evaluating LLM performance. The faster the device can process tokens, the quicker your LLM can generate text, translate languages, or perform other AI tasks—think of it like the typing speed of your AI. It's all about getting that text flowing!

The data reveals a fascinating story of strengths and weaknesses:

Apple M2: The M2 boasts impressive token processing speeds for smaller LLMs like Llama 2 7B. It's like a nimble sprinter, fast and efficient for shorter distances.

NVIDIA A40: The A40 shines with larger models, like Llama 3 8B and 70B, demonstrating its ability to handle the complexity of these models with impressive performance. This is like a marathon runner, built for sustained performance over longer distances.

Token Speed Comparison Table:

| Model | Device | Processing Tokens/Second | Generation Tokens/Second |

|---|---|---|---|

| Llama 2 7B (F16) | M2 | 201.34 | 6.72 |

| Llama 2 7B (Q8_0) | M2 | 181.4 | 12.21 |

| Llama 2 7B (Q4_0) | M2 | 179.57 | 21.91 |

| Llama 3 8B (F16) | A40 | 4043.05 | 33.95 |

| Llama 3 8B (Q4KM) | A40 | 3240.95 | 88.95 |

| Llama 3 70B (F16) | A40 | ||

| Llama 3 70B (Q4KM) | A40 | 239.92 | 12.08 |

Important Note: Data for models like Llama 3 70B with F16 precision is missing due to the limited benchmarking efforts.

Understanding the Numbers:

Let's unpack these numbers. For instance, the M2 can process Llama 2 7B with F16 precision at a speed of 201.34 tokens per second, while the A40 can churn through Llama 3 8B with F16 precision at a whopping 4,043.05 tokens per second.

Key takeaways:

- M2 excels for smaller LLMs: The M2 demonstrates impressive token speed for smaller LLMs like Llama 2 7B. This makes it a compelling choice for simpler applications or when you need fast performance for smaller models.

- A40 dominates with large LLMs: The A40 truly shines with its ability to handle large LLMs like Llama 3 8B and 70B. If you're working with complex AI models, the A40's performance is unmatched.

Beyond Token Speed: Memory Capacity and Cost

Memory Capacity: How Much Can You Fit?

Memory capacity is crucial for running LLMs. Think of it as the workspace of your AI - the more space it has, the more complex tasks it can handle.

- Apple M2: The M2 offers 100GB of memory, which is impressive but may not be sufficient for very large models.

- NVIDIA A40: The A40 packs a punch with 48GB of HBM2e memory, offering significant flexibility for running larger LLMs.

Cost: The Price of Power

The cost of these devices also plays a significant role in the decision-making process. Remember, your budget is important, and you want to make sure you're getting the most bang for your buck.

- Apple M2: The M2 chip is typically found in Mac computers, which can range in price from a few thousand dollars for entry-level models to much higher prices for high-end configurations.

- NVIDIA A40: The A40 is a high-end GPU designed for data centers and enterprise environments, reflecting in its price tag. These GPUs can cost thousands of dollars each.

Practical Recommendations:

- Smaller models and budget-conscious projects: The M2 provides an excellent option for smaller LLMs and projects with moderate memory requirements, offering a solid blend of performance and cost-effectiveness.

- Large LLMs and demanding AI workloads: The A40 is designed for the heavy lifting of large language models. Its high memory capacity and exceptional processing power make it the go-to choice for demanding AI workloads, even if it comes with a premium price.

Quantization and Optimization: Smaller Models, Higher Performance

Quantization: A Size Matters Approach

Quantization is a technique used to reduce the size of LLM models, making them more efficient and easier to deploy on hardware with limited memory capacity. Think of it like compressing a large image file to make it smaller but still maintain its quality.

- Apple M2: The M2 supports quantization for LLMs, enabling the use of smaller models like Llama 2 7B with Q80 and Q40 precision.

- NVIDIA A40: This GPU also supports quantization, allowing you to optimize LLMs for size and performance.

Optimization: Making the Most of Your Hardware

Optimization techniques can further enhance LLM performance on both the M2 and A40. These include:

- Parallel processing: Leveraging the power of multiple CPU cores or GPU cores to speed up computations. It's like having multiple workers simultaneously building a house, getting the job done quicker.

- Memory optimization: Using techniques like memory caching to reduce the amount of data that needs to be loaded from slower memory, improving performance. Imagine having frequently used tools close at hand instead of having to search for them every time.

Use Cases: Putting the Power to Work

Apple M2: A Versatile Choice

The M2 is a versatile chip suitable for a wide range of AI use cases, including:

- Natural Language Processing: The M2 can handle tasks like text generation, translation, and summarization for smaller models.

- Code Generation: It can assist with code completion and code generation using smaller AI models.

- Chatbots: The M2 is a viable option for building chatbots with moderate complexity.

NVIDIA A40: The High-Performance Workhorse

The A40 is a powerful GPU designed for heavy-duty AI tasks, making it suitable for:

- Large Language Models: The A40 is the preferred choice for running and training large language models, enabling applications like advanced text generation, language translation, and code generation.

- Computer Vision: It excels in tasks like image classification, object detection, and video analysis.

- Deep Learning: The A40's processing power makes it ideal for deep learning research and development.

FAQ: Addressing Common Questions

What are LLMs?

LLMs are artificial intelligence models that can understand and generate human-like text. They have been trained on massive datasets of text and code, giving them the ability to perform tasks like translation, summarization, and creative writing.

What is Token Speed Generation?

Token speed generation refers to how quickly a device can process tokens, which are the building blocks of text. It's a key metric for evaluating the performance of LLMs, as it determines how fast a device can generate text or perform other tasks.

What is Quantization?

Quantization is a technique used to reduce the size of LLM models by reducing the precision of their parameters. This can make the models more efficient and easier to deploy on devices with limited memory capacity.

What are some other factors to consider when choosing an AI device?

Besides performance and cost, consider factors like:

- Power Consumption: How much energy does the device use?

- Cooling Requirements: Does the device require specialized cooling systems?

- Software Support: Is the device supported by the software frameworks you need?

Keywords:

Apple M2, NVIDIA A40, LLMs, Large Language Models, Token Speed Generation, Memory Capacity, Cost, Quantization, Parallel Processing, Memory Optimization, Use Cases, Performance Analysis, AI, Machine Learning, Natural Language Processing, Computer Vision, Deep Learning.