6 Key Factors to Consider When Choosing Between Apple M2 (100GB/s, 10 GPU Cores) and Apple M3 Max (400GB/s, 40 GPU Cores) for AI

Introduction

The world of AI is buzzing with excitement, especially with the rise of Large Language Models (LLMs). These powerful AI models, like the popular Llama 2 and Llama 3, can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

But running these LLMs on your own machine can be a challenge, especially with huge models like Llama 3 70B. That's where powerful hardware comes in.

This article compares two popular Apple chips, the M2 (100GB/s of memory bandwidth and 10 GPU cores) and the M3 Max (400GB/s of memory bandwidth and 40 GPU cores), to help you decide which one is best for your LLM adventures.

Let's dive in and see which chip reigns supreme!

Performance Analysis: Apple M2 vs. M3 Max

Token Speed: The "Words Per Second" Game of LLMs

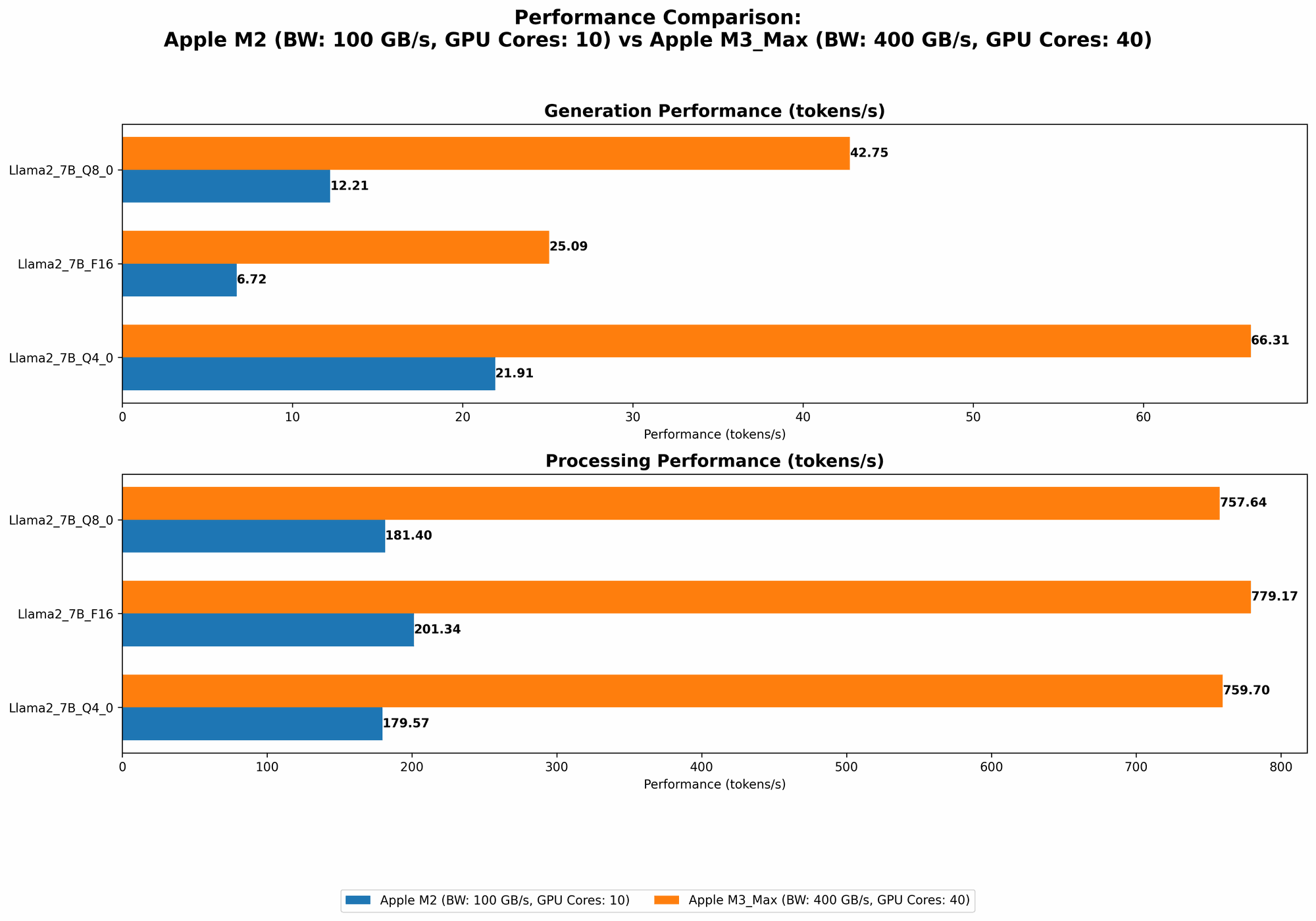

One of the most important factors to consider when choosing a device for running LLMs is token speed, the speed at which your device can process and generate text.

Token speed can be divided into two categories:

- Processing Speed: This measures how fast the chip can process input tokens, which are the fundamental units of text.

- Generation Speed: This measures how fast the chip can generate output tokens – that's the text you actually see!
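To see how the two speeds combine, here is a minimal sketch that estimates how long one request takes: the prompt is read at the processing speed, then the answer is written at the generation speed. The function name and the 500-token prompt / 200-token answer are illustrative assumptions; the speeds are the Llama 2 7B Q4_0 figures from the comparison table in this article.

```python
def estimate_response_seconds(prompt_tokens, output_tokens,
                              processing_tps, generation_tps):
    """Rough end-to-end time: read the prompt, then write the answer."""
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("token speeds must be positive")
    prompt_time = prompt_tokens / processing_tps      # seconds spent on input
    generation_time = output_tokens / generation_tps  # seconds spent on output
    return prompt_time + generation_time


# Hypothetical request: 500-token prompt, 200-token answer.
m2_seconds = estimate_response_seconds(500, 200, 179.57, 21.91)
m3_max_seconds = estimate_response_seconds(500, 200, 759.7, 66.31)

print(f"M2:     {m2_seconds:.1f} s")
print(f"M3 Max: {m3_max_seconds:.1f} s")
```

For a chat-style request like this one, most of the time goes into generation, which is why the generation rows usually matter more for how responsive a model feels.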

Comparison of Apple M2 and M3 Max:

| Benchmark | M2 (100GB/s, 10 GPU cores) | M3 Max (400GB/s, 40 GPU cores) |
|---|---|---|
| Llama 2 7B F16 Processing | 201.34 tokens/second | 779.17 tokens/second |
| Llama 2 7B F16 Generation | 6.72 tokens/second | 25.09 tokens/second |
| Llama 2 7B Q8_0 Processing | 181.4 tokens/second | 757.64 tokens/second |
| Llama 2 7B Q8_0 Generation | 12.21 tokens/second | 42.75 tokens/second |
| Llama 2 7B Q4_0 Processing | 179.57 tokens/second | 759.7 tokens/second |
| Llama 2 7B Q4_0 Generation | 21.91 tokens/second | 66.31 tokens/second |
| Llama 3 8B Q4_K_M Processing | N/A | 678.04 tokens/second |
| Llama 3 8B Q4_K_M Generation | N/A | 50.74 tokens/second |
| Llama 3 8B F16 Processing | N/A | 751.49 tokens/second |
| Llama 3 8B F16 Generation | N/A | 22.39 tokens/second |
| Llama 3 70B Q4_K_M Processing | N/A | 62.88 tokens/second |
| Llama 3 70B Q4_K_M Generation | N/A | 7.53 tokens/second |
| Llama 3 70B F16 Processing | N/A | N/A |
| Llama 3 70B F16 Generation | N/A | N/A |

Let's break down these numbers:

Wherever both chips have results (the Llama 2 7B rows), the M3 Max outperforms the M2 in both processing and generation speed, by roughly 3x to 4x. This tracks its higher number of GPU cores (40 vs. 10) and its higher memory bandwidth (400GB/s vs. 100GB/s): prompt processing is mostly limited by compute, while token generation is mostly limited by how fast the model's weights can be read from memory. It's like having a team of 40 super-fast typists working together on a document compared to a team of 10.
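To put a number on the gap, the short sketch below divides each M3 Max result by the matching M2 result for the Llama 2 7B rows of the table. The dictionary layout and names are just one way to organize the figures.

```python
# Llama 2 7B results from the comparison table: (M2, M3 Max) in tokens/second.
RESULTS = {
    ("F16", "processing"): (201.34, 779.17),
    ("F16", "generation"): (6.72, 25.09),
    ("Q8_0", "processing"): (181.4, 757.64),
    ("Q8_0", "generation"): (12.21, 42.75),
    ("Q4_0", "processing"): (179.57, 759.7),
    ("Q4_0", "generation"): (21.91, 66.31),
}


def speedup(m2_tps, m3_max_tps):
    """How many times faster the M3 Max is than the M2."""
    return m3_max_tps / m2_tps


speedups = {key: speedup(*pair) for key, pair in RESULTS.items()}

for (fmt, phase), ratio in speedups.items():
    print(f"{fmt:5s} {phase:10s} {ratio:.2f}x")
```

The ratios land between about 3.0x and 4.2x, with processing closer to the 4x difference in GPU cores and generation somewhat below the 4x difference in bandwidth.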

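The "Q" labels refer to quantization, a neat trick that shrinks the model and makes it generate faster. Q8_0, Q4_0, and Q4_K_M are different quantization formats: Q8_0 stores roughly 8 bits per weight and is the least compressed, while Q4_0 and Q4_K_M store roughly 4 to 5 bits per weight and are the most compressed. You can see the effect in the generation rows, where Q4_0 is the fastest and F16 the slowest. It's like having a super-efficient coding system for your text!

"F16" means the weights are kept as 16-bit floating-point numbers, with no quantization. It's like giving the model a higher resolution for calculations, at the cost of a larger and slower model.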

The M2 column shows N/A for the Llama 3 models because no benchmark results were available for that chip; Llama 3 was released well after the M2.
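A rough way to sanity-check the generation numbers is a back-of-the-envelope ceiling: if every weight has to be read from memory once per generated token, generation speed cannot exceed memory bandwidth divided by model size. The sketch below applies that to Llama 2 7B. It rests on several assumptions: the 100 and 400 figures are memory bandwidth in GB/s, the model has a nominal 7 billion parameters, and Q8_0 and Q4_0 cost about 8.5 and 4.5 bits per weight once per-block scale factors are included.

```python
GB = 1e9  # bytes in a (decimal) gigabyte

# Assumed storage cost per weight, in bits.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

N_PARAMS = 7e9  # nominal parameter count for a "7B" model (assumption)

# Measured generation speeds from the table: (M2, M3 Max) in tokens/second.
MEASURED = {"F16": (6.72, 25.09), "Q8_0": (12.21, 42.75), "Q4_0": (21.91, 66.31)}


def generation_ceiling_tps(bandwidth_gb_s, n_params, bits_per_weight):
    """Upper bound on tokens/second if all weights are read once per token."""
    model_bytes = n_params * bits_per_weight / 8
    return bandwidth_gb_s * GB / model_bytes


ceilings = {
    fmt: (generation_ceiling_tps(100, N_PARAMS, bits),
          generation_ceiling_tps(400, N_PARAMS, bits))
    for fmt, bits in BITS_PER_WEIGHT.items()
}

for fmt, (m2_cap, m3_cap) in ceilings.items():
    m2_real, m3_real = MEASURED[fmt]
    print(f"{fmt:5s} M2 {m2_real:6.2f} of ~{m2_cap:5.1f}   "
          f"M3 Max {m3_real:6.2f} of ~{m3_cap:5.1f} tokens/second")
```

Under these assumptions every measured value sits below its ceiling, and the F16 results come fairly close to it, which fits the idea that generation is largely bandwidth-bound. Treat this as a rule of thumb rather than a performance model.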

Memory: How Much Space do LLMs Need?

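LLMs are data-hungry creatures, and the whole model has to fit in memory. Keep in mind that the 100GB and 400GB figures in the chip names describe memory bandwidth (GB/s), not capacity. The M2 tops out at 24GB of unified memory, while the M3 Max can be configured with up to 128GB, so larger models can require more memory than the M2 can offer.

- For Llama 2 7B models, both M2 and M3 Max have enough RAM, provided the M2 is configured with enough memory for the format you choose (F16 needs about 14GB for the weights alone).

- For Llama 3 8B models, the quantized versions are small, but F16 needs about 16GB for the weights alone, so the M3 Max with its larger memory is the more comfortable option.

- For Llama 3 70B models, the M3 Max is a must-have: even at Q4_K_M the weights take roughly 40GB, more than any M2 configuration offers. At F16 (about 140GB) the model is too large even for the M3 Max, which matches the N/A entries in the table.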

Imagine trying to fit a huge wardrobe into a small closet – that's what happens if you try to run a large LLM on a device with insufficient RAM.
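To check whether a given model fits before downloading it, you can estimate the size of the weights from the parameter count and the bits stored per weight. The sketch below is a rough estimate under stated assumptions: about 4.85 bits per weight for Q4_K_M, memory capacities of 24GB (largest M2) and 128GB (largest M3 Max), and a hypothetical `headroom` factor of 0.75 to leave room for the operating system, the context cache, and other apps.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate size of the model weights in GB."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, memory_gb, headroom=0.75):
    """True if the weights fit in the usable share of memory.

    `headroom` is an assumed fraction of total memory available to the
    model; the real limit depends on the system and the workload.
    """
    return weights_gb(params_billion, bits_per_weight) <= memory_gb * headroom


# (label, parameters in billions, assumed bits per weight)
MODELS = [
    ("Llama 2 7B F16", 7, 16.0),
    ("Llama 3 8B F16", 8, 16.0),
    ("Llama 3 70B Q4_K_M", 70, 4.85),
    ("Llama 3 70B F16", 70, 16.0),
]

for label, params, bits in MODELS:
    size = weights_gb(params, bits)
    print(f"{label:20s} ~{size:6.1f} GB  "
          f"M2 24GB: {fits(params, bits, 24)}  "
          f"M3 Max 128GB: {fits(params, bits, 128)}")
```

With these assumptions, Llama 3 70B at Q4_K_M fits only on the M3 Max, and Llama 3 70B at F16 fits on neither chip.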

Power Consumption: Balancing Speed and Energy

It's important to consider how much power your chip consumes, especially if you're running LLMs for extended periods.

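The M2 generally draws less power than the M3 Max. Why? Because the M3 Max is a bigger and more powerful chip with more cores and features. It's like having a powerful sports car that consumes more fuel than an efficient hatchback. Keep in mind that the M3 Max also finishes the same job sooner, so higher power draw does not automatically mean more energy used per generated token.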

- For users who are running LLMs on a budget or with limited battery life, the M2 might be a suitable choice.

- For users who prioritize speed and performance, the M3 Max is the way to go.

Use Cases: Choosing the Right Tool for the Job

Now, let's talk about the "why" behind these devices. The best choice for you depends mainly on the LLMs you want to run and the specific tasks you have in mind.

Here's a quick breakdown:

M2: This is a great choice for running smaller LLMs like Llama 2 7B models. It's also good for tasks that don't require heavy processing power, such as text generation, translation, and basic question answering.

M3 Max: This is a powerhouse for running larger and more complex LLMs like Llama 3 8B and 70B models. It's a great choice for demanding tasks such as code generation, complex reasoning tasks, and sophisticated creative writing.

Think of it like this: If you're a casual writer, a comfortable chair is enough. But if you're a professional novelist, you might need a dedicated workspace with ergonomic tools.

Cost: The Price of Performance

Let's not forget about the budget. The M3 Max is more expensive than the M2.

- If you're on a tight budget, the M2 is a more affordable option.

- If you need the maximum performance and have the budget for it, the M3 Max is worth the investment.

Conclusion

The choice between the M2 and M3 Max for running LLMs is a matter of balancing performance needs with budget and power consumption considerations. Keep these factors in mind when making your decision:

- If you're working with smaller LLMs and prioritize affordability, the M2 is your best bet.

- If you're working with larger LLMs and require maximum speed and performance, the M3 Max is the way to go.

FAQ

Q: What are LLMs?

A: LLMs are large language models, which are AI models trained on massive datasets to understand and generate human-like text. They can be used for tasks such as text summarization, translation, question answering, and creative writing.

Q: What is quantization?

A: Quantization is a technique that compresses the size of a model by reducing the number of bits used to represent each number. This makes the model smaller and faster to run, but it can also reduce the model's accuracy.
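To make the idea concrete, here is a minimal sketch of one simple scheme: symmetric 8-bit quantization with a single scale factor. It is a generic illustration, not the exact format behind Q8_0, Q4_0, or Q4_K_M, and the sample weights are made up.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.9, -0.07]  # made-up example weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized)
print(f"scale = {scale:.5f}, max error = {max_error:.5f}")
```

Each weight now needs 8 bits instead of 16 or 32, at the price of a small rounding error. Using fewer bits per weight shrinks the model further but makes that error larger, which is the accuracy trade-off mentioned above.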

Q: What is the difference between "Processing Speed" and "Generation Speed"?

A: Processing speed is how fast the chip reads the input tokens of your prompt (tokens are words or pieces of words), while generation speed is how fast it produces output tokens, one after another, once the prompt has been processed.

Q: Which device is better for running Llama 2 7B?

A: Both the M2 and M3 Max are capable of running Llama 2 7B. However, the M3 Max offers significantly faster performance.

Q: Which device is better for running Llama 3 70B?

A: Of the two, only the M3 Max is capable of running Llama 3 70B, and in the results above only in its quantized Q4_K_M form (about 7.5 tokens/second for generation); there is no F16 result for either chip. The M2 does not have enough memory to handle this model.

Keywords

LLM, Llama 2, Llama 3, M2, M3 Max, Apple, AI, Token Speed, Processing, Generation, Quantization, Memory, Power Consumption, Use Cases, Cost, Performance, Developer, Geek, GPU, CPU, Machine Learning, Deep Learning.