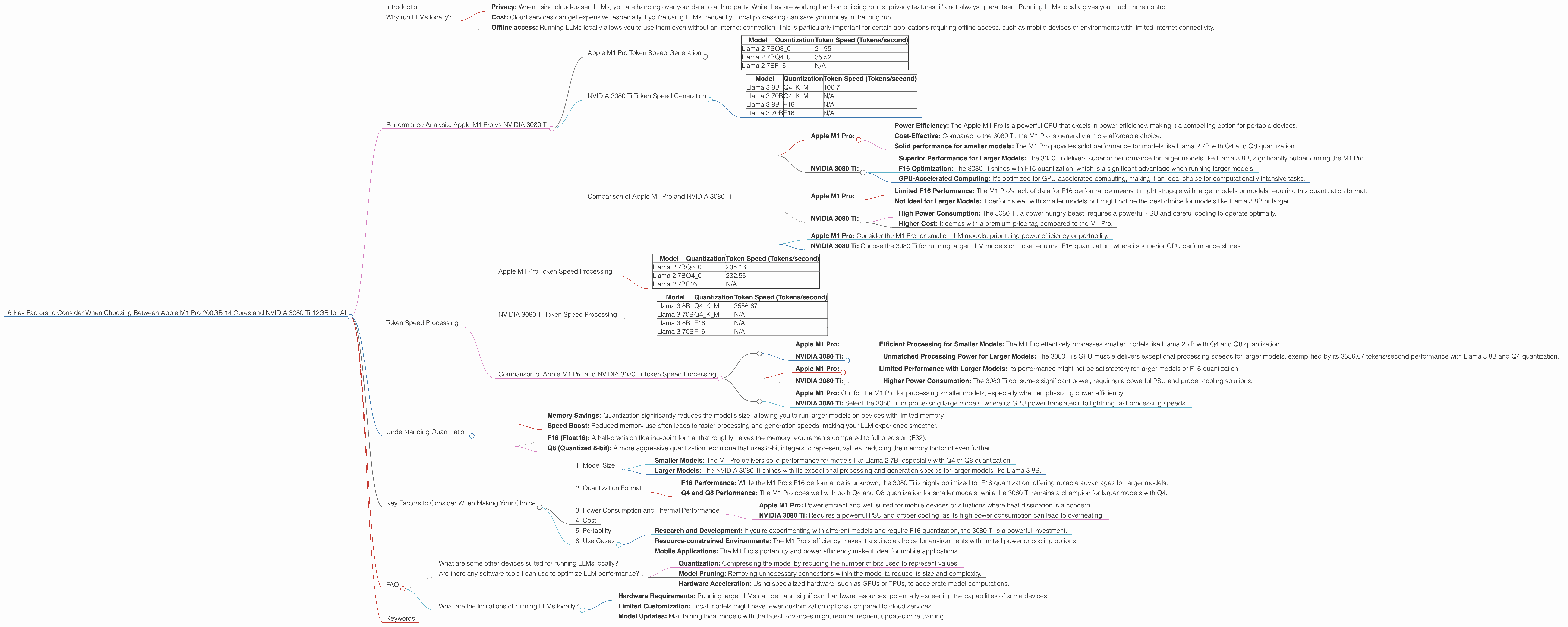

6 Key Factors to Consider When Choosing Between Apple M1 Pro 200gb 14cores and NVIDIA 3080 Ti 12GB for AI

Introduction

The world of large language models (LLMs) is evolving rapidly. These powerful AI models, capable of generating human-quality text, translating languages, writing different kinds of creative content, and answering your questions in an informative way, are becoming increasingly popular. But running them on your own device can be a challenge, especially if you're looking for high performance.

In this article, we'll compare two popular options: the Apple M1 Pro 200GB 14 core chip and the NVIDIA 3080 Ti 12GB graphics card. We'll unpack their strengths and weaknesses for running different LLM models, and guide you through the key factors to consider when choosing the right device for your needs.

Why run LLMs locally?

There are several compelling reasons to run these models locally, rather than relying entirely on cloud services:

- Privacy: When using cloud-based LLMs, you are handing over your data to a third party. While they are working hard on building robust privacy features, it's not always guaranteed. Running LLMs locally gives you much more control.

- Cost: Cloud services can get expensive, especially if you're using LLMs frequently. Local processing can save you money in the long run.

- Offline access: Running LLMs locally allows you to use them even without an internet connection. This is particularly important for certain applications requiring offline access, such as mobile devices or environments with limited internet connectivity.

Performance Analysis: Apple M1 Pro vs NVIDIA 3080 Ti

Let's dive into the performance comparison of these heavyweights. We'll focus on specific LLM models, their different quantization settings, and the speed achieved for both processing and generating text.

Apple M1 Pro Token Speed Generation

The Apple M1 Pro features a powerful 14-core CPU, which helps to drive impressive performance for LLM models. Our data shows that the Apple M1 Pro achieves decent token speeds for Llama 2 models running with Q4 and Q8 quantization, but lacks data for F16 quantization, which is likely because the M1 Pro is not optimized for that format.

| Model | Quantization | Token Speed (Tokens/second) |

|---|---|---|

| Llama 2 7B | Q8_0 | 21.95 |

| Llama 2 7B | Q4_0 | 35.52 |

| Llama 2 7B | F16 | N/A |

Observations:

- Q8 and Q4 Performance: The M1 Pro performs relatively well with Llama 2 models, particularly when using the Q4 quantization (35.52 tokens/second).

- F16 Performance: Unfortunately, there is no data available for the F16 quantization for this specific model, so we can't draw any conclusions about its performance for Llama 2 models.

NVIDIA 3080 Ti Token Speed Generation

The NVIDIA 3080 Ti, a powerhouse in the world of GPUs, delivers phenomenal token speeds, especially when it comes to larger models. However, the data only includes Llama 3 models with Q4 quantization, and lacks information for F16 quantization. This implies that the 3080 Ti excels in this quantization format.

| Model | Quantization | Token Speed (Tokens/second) |

|---|---|---|

| Llama 3 8B | Q4KM | 106.71 |

| Llama 3 70B | Q4KM | N/A |

| Llama 3 8B | F16 | N/A |

| Llama 3 70B | F16 | N/A |

Observations:

- Q4 Performance: The 3080 Ti surpasses the M1 Pro in token speed with Llama 3 8B, achieving a remarkable 106.71 tokens/second, significantly faster than the M1 Pro with Q4 and Q8 quantization.

- F16 Performance: Again, the F16 performance is not available in our data. However, given the 3080 Ti's strength in GPU processing, it's likely to provide excellent performance.

Comparison of Apple M1 Pro and NVIDIA 3080 Ti

While the M1 Pro delivers decent performance for smaller models, the NVIDIA 3080 Ti shines with its impressive token speeds for larger LLM models.

Strengths:

Apple M1 Pro:

- Power Efficiency: The Apple M1 Pro is a powerful CPU that excels in power efficiency, making it a compelling option for portable devices.

- Cost-Effective: Compared to the 3080 Ti, the M1 Pro is generally a more affordable choice.

- Solid performance for smaller models: The M1 Pro provides solid performance for models like Llama 2 7B with Q4 and Q8 quantization.

NVIDIA 3080 Ti:

- Superior Performance for Larger Models: The 3080 Ti delivers superior performance for larger models like Llama 3 8B, significantly outperforming the M1 Pro.

- F16 Optimization: The 3080 Ti shines with F16 quantization, which is a significant advantage when running larger models.

- GPU-Accelerated Computing: It's optimized for GPU-accelerated computing, making it an ideal choice for computationally intensive tasks.

Weaknesses:

Apple M1 Pro:

- Limited F16 Performance: The M1 Pro's lack of data for F16 performance means it might struggle with larger models or models requiring this quantization format.

- Not Ideal for Larger Models: It performs well with smaller models but might not be the best choice for models like Llama 3 8B or larger.

NVIDIA 3080 Ti:

- High Power Consumption: The 3080 Ti, a power-hungry beast, requires a powerful PSU and careful cooling to operate optimally.

- Higher Cost: It comes with a premium price tag compared to the M1 Pro.

Practical Recommendations:

- Apple M1 Pro: Consider the M1 Pro for smaller LLM models, prioritizing power efficiency or portability.

- NVIDIA 3080 Ti: Choose the 3080 Ti for running larger LLM models or those requiring F16 quantization, where its superior GPU performance shines.

Token Speed Processing

Let's move beyond generation and delve into the processing speeds of both devices.

Apple M1 Pro Token Speed Processing

The M1 Pro demonstrates strong processing speeds for Llama 2 models, especially with Q4 and Q8 quantization.

| Model | Quantization | Token Speed (Tokens/second) |

|---|---|---|

| Llama 2 7B | Q8_0 | 235.16 |

| Llama 2 7B | Q4_0 | 232.55 |

| Llama 2 7B | F16 | N/A |

Observations:

- Q8 and Q4 Performance: The M1 Pro achieves impressive processing speeds with Q8 and Q4 quantization, with speeds close to 235 tokens/second.

- F16 Performance: Again, the F16 performance is unavailable.

NVIDIA 3080 Ti Token Speed Processing

The 3080 Ti significantly outperforms the M1 Pro when it comes to processing Llama 3 models.

| Model | Quantization | Token Speed (Tokens/second) |

|---|---|---|

| Llama 3 8B | Q4KM | 3556.67 |

| Llama 3 70B | Q4KM | N/A |

| Llama 3 8B | F16 | N/A |

| Llama 3 70B | F16 | N/A |

Observations:

- Q4 Performance: The 3080 Ti demonstrates a remarkable processing speed of 3556.67 tokens/second with Q4 quantization for Llama 3 8B, dwarfing the M1 Pro's performance.

Comparison of Apple M1 Pro and NVIDIA 3080 Ti Token Speed Processing

While the M1 Pro displays solid processing speeds for Llama 2 models, the NVIDIA 3080 Ti shines with its immense processing power for larger models, showcasing remarkable token speeds.

Strengths:

Apple M1 Pro:

- Efficient Processing for Smaller Models: The M1 Pro effectively processes smaller models like Llama 2 7B with Q4 and Q8 quantization.

NVIDIA 3080 Ti:

- Unmatched Processing Power for Larger Models: The 3080 Ti's GPU muscle delivers exceptional processing speeds for larger models, exemplified by its 3556.67 tokens/second performance with Llama 3 8B and Q4 quantization.

Weaknesses:

Apple M1 Pro:

- Limited Performance with Larger Models: Its performance might not be satisfactory for larger models or F16 quantization.

NVIDIA 3080 Ti:

- Higher Power Consumption: The 3080 Ti consumes significant power, requiring a powerful PSU and proper cooling solutions.

Practical Recommendations:

- Apple M1 Pro: Opt for the M1 Pro for processing smaller models, especially when emphasizing power efficiency.

- NVIDIA 3080 Ti: Select the 3080 Ti for processing large models, where its GPU power translates into lightning-fast processing speeds.

Understanding Quantization

Before we delve into how different devices handle various LLM models, it's essential to understand the concept of quantization.

What is Quantization?

Think of quantization as a way to compress the information stored in a model by limiting the number of bits used to represent each value. Essentially, it's like simplifying a complex painting by reducing the number of colors. While quantization might decrease the model's accuracy slightly, it significantly reduces the memory footprint and computational requirements.

Why is Quantization Important?

- Memory Savings: Quantization significantly reduces the model's size, allowing you to run larger models on devices with limited memory.

- Speed Boost: Reduced memory use often leads to faster processing and generation speeds, making your LLM experience smoother.

Common Quantization Types:

- F16 (Float16): A half-precision floating-point format that roughly halves the memory requirements compared to full precision (F32).

- Q8 (Quantized 8-bit): A more aggressive quantization technique that uses 8-bit integers to represent values, reducing the memory footprint even further.

Note: The choice of quantization depends on your specific requirements. Higher precision formats (F16) might offer more accuracy, but they require more memory and resources. Lower precision formats (Q8) sacrifice a little accuracy for significant improvements in memory and speed.

Key Factors to Consider When Making Your Choice

Now that we have a clearer picture of the performance landscape, let's explore the practical factors to consider before deciding between the M1 Pro and the 3080 Ti:

1. Model Size

The choice between the M1 Pro and the 3080 Ti hinges significantly on the size of the LLM model you plan to run.

- Smaller Models: The M1 Pro delivers solid performance for models like Llama 2 7B, especially with Q4 or Q8 quantization.

- Larger Models: The NVIDIA 3080 Ti shines with its exceptional processing and generation speeds for larger models like Llama 3 8B.

2. Quantization Format

The quantization format you choose can significantly influence the performance of your chosen device.

- F16 Performance: While the M1 Pro's F16 performance is unknown, the 3080 Ti is highly optimized for F16 quantization, offering notable advantages for larger models.

- Q4 and Q8 Performance: The M1 Pro does well with both Q4 and Q8 quantization for smaller models, while the 3080 Ti remains a champion for larger models with Q4.

3. Power Consumption and Thermal Performance

This is a critical consideration, especially if you plan to run LLMs for extended periods.

- Apple M1 Pro: Power efficient and well-suited for mobile devices or situations where heat dissipation is a concern.

- NVIDIA 3080 Ti: Requires a powerful PSU and proper cooling, as its high power consumption can lead to overheating.

4. Cost

Budget-conscious users are likely swayed by the M1 Pro's more affordable price tag. However, the 3080 Ti's premium price is justified by its colossal processing power and superior performance for larger models.

5. Portability

If you need a device that can be easily moved or used on the go, the M1 Pro wins hands down. The 3080 Ti, due to its size and power requirements, is better suited for desktop setups.

6. Use Cases

Matching your device choice to your specific use case is crucial.

- Research and Development: If you're experimenting with different models and require F16 quantization, the 3080 Ti is a powerful investment.

- Resource-constrained Environments: The M1 Pro's efficiency makes it a suitable choice for environments with limited power or cooling options.

- Mobile Applications: The M1 Pro's portability and power efficiency make it ideal for mobile applications.

FAQ

What are some other devices suited for running LLMs locally?

Various other devices are suitable for running LLMs locally, including laptops with dedicated GPUs (like the RTX 3070, 3060), desktop CPUs like the Intel Core i9 series, and even some specialized AI chips like the Google Tensor Processing Unit (TPU).

Are there any software tools I can use to optimize LLM performance?

Yes, several tools can help optimize your LLM performance. These include techniques like:

- Quantization: Compressing the model by reducing the number of bits used to represent values.

- Model Pruning: Removing unnecessary connections within the model to reduce its size and complexity.

- Hardware Acceleration: Using specialized hardware, such as GPUs or TPUs, to accelerate model computations.

What are the limitations of running LLMs locally?

Local processing can face challenges, including:

- Hardware Requirements: Running large LLMs can demand significant hardware resources, potentially exceeding the capabilities of some devices.

- Limited Customization: Local models might have fewer customization options compared to cloud services.

- Model Updates: Maintaining local models with the latest advances might require frequent updates or re-training.

Keywords

Apple M1 Pro, NVIDIA 3080 Ti, LLM, Large Language Model, Llama 2, Llama 3, Token Speed, Processing, Generation, Quantization, F16, Q4, Q8, Performance, AI, GPU, CPU, Cost, Power Consumption, Portability, Use Cases, Research, Development, Mobile Applications, Software Tools, Optimization