6 Key Factors to Consider When Choosing Between Apple M1 Max 400gb 24cores and NVIDIA A100 SXM 80GB for AI

Introduction

The world of AI is abuzz with excitement over Large Language Models (LLMs) like Llama 2 and Llama 3. These powerful models are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way.

But running LLMs locally can be a challenge: they need a lot of compute and memory, so choosing the right hardware, particularly the GPU, becomes critical. In this article, we compare two contenders: the Apple M1 Max (the variant with a 24-core GPU and 400 GB/s of memory bandwidth, referred to in this article as "M1 Max 400gb 24cores") and the NVIDIA A100 SXM 80GB.

We'll look at how these devices perform on several LLMs, considering token generation speed, memory bandwidth, and overall cost. By the end of this guide, you'll have a clearer understanding of which device might be the best fit for your AI needs.

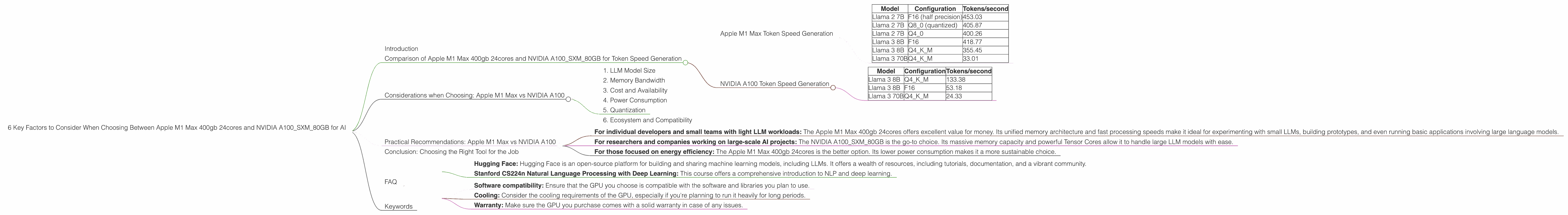

Comparison of Apple M1 Max and NVIDIA A100 SXM 80GB for Token Generation Speed

The real magic happens when you start using these LLMs. The speed of their inference – generating new text, translating, and answering your questions – is directly dictated by the hardware you choose.

Let's break down how the Apple M1 Max and the NVIDIA A100 SXM 80GB fare in tokens per second (tokens/sec) across different models and configurations:

Apple M1 Max Token Generation Speed

The Apple M1 Max, with its unified memory architecture, shines when it comes to processing smaller models like Llama 2 7B.

Table 1: Apple M1 Max Token Generation Speed

| Model | Configuration | Tokens/second |
|---|---|---|
| Llama 2 7B | F16 (half precision) | 453.03 |
| Llama 2 7B | Q8_0 (quantized) | 405.87 |
| Llama 2 7B | Q4_0 | 400.26 |
| Llama 3 8B | F16 | 418.77 |
| Llama 3 8B | Q4_K_M | 355.45 |
| Llama 3 70B | Q4_K_M | 33.01 |

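Note: F16 is the 16-bit (half-precision) floating-point format. Q8_0 and Q4_0 are 8-bit and 4-bit quantized formats. Q4_K_M is a 4-bit "k-quant" format in its medium variant, which keeps some tensors at higher precision to preserve quality. The figures do not say whether they measure prompt processing or token generation; these two phases can differ by an order of magnitude on the same hardware, so compare rows across tables with that in mind.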

Strengths:

- Fast processing speeds: The M1 Max handles smaller LLMs with impressive speed, making it ideal for quick prototyping and experimentation.

- Unified memory: The unified memory architecture allows the GPU and CPU to access the same pool of memory, reducing data transfer times.

- Cost-effectiveness: Compared to NVIDIA A100, the M1 Max offers a more affordable option, especially for individuals and smaller teams.

Weaknesses:

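- Limited scalability: The M1 Max is less suitable for larger models like Llama 3 70B, where throughput in Table 1 drops to 33.01 tokens/second.

- Model size limitations: The M1 Max ships with 32 GB or 64 GB of unified memory, which is shared with the CPU and is smaller than the A100's 80 GB.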

NVIDIA A100 Token Speed Generation

The NVIDIA A100 SXM 80GB, with its powerful Tensor Cores and massive memory, shines in handling larger LLMs.

Table 2: NVIDIA A100 Token Generation Speed

| Model | Configuration | Tokens/second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 133.38 |
| Llama 3 8B | F16 | 53.18 |
| Llama 3 70B | Q4_K_M | 24.33 |

Strengths:

- Scalability: The A100 is designed to handle larger models, making it ideal for advanced research and production deployments.

- High memory capacity: The 80GB of HBM2e memory allows the A100 to accommodate large models.

Weaknesses:

- Cost: The A100 comes with a significantly higher price tag compared to the Apple M1 Max.

- No data for the smallest model: Table 2 has no Llama 2 7B results for the A100, so the two devices cannot be compared directly on that model. On Llama 3 8B, the A100 figures in Table 2 are lower than the M1 Max figures in Table 1.
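To make the two tables easier to read side by side, here is a minimal Python sketch that lines up the rows both devices share and computes the ratio between them. The numbers are copied from Table 1 and Table 2; the function name `shared_rows` is just an illustrative helper. The ratios are only meaningful if both tables measure the same inference phase, which the figures do not confirm.

```python
# Tokens/second figures copied from Table 1 (M1 Max) and Table 2 (A100).
m1_max = {
    ("Llama 3 8B", "F16"): 418.77,
    ("Llama 3 8B", "Q4_K_M"): 355.45,
    ("Llama 3 70B", "Q4_K_M"): 33.01,
}
a100 = {
    ("Llama 3 8B", "Q4_K_M"): 133.38,
    ("Llama 3 8B", "F16"): 53.18,
    ("Llama 3 70B", "Q4_K_M"): 24.33,
}


def shared_rows(left, right):
    """Return (model, config, left, right, left/right) for rows in both tables."""
    rows = []
    for key in sorted(left.keys() & right.keys()):
        model, config = key
        rows.append((model, config, left[key], right[key], left[key] / right[key]))
    return rows


comparison = shared_rows(m1_max, a100)
for model, config, m1, a, ratio in comparison:
    print(f"{model:12s} {config:7s} M1 Max {m1:7.2f}  A100 {a:7.2f}  ratio {ratio:.2f}x")
```

Only the three Llama 3 rows appear in both tables, so the Llama 2 7B results are left out of the comparison.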

Considerations when Choosing: Apple M1 Max vs NVIDIA A100

Now that we've seen the strengths and weaknesses of each device, let's go through the key considerations when choosing between the Apple M1 Max and the NVIDIA A100 SXM 80GB for your AI work.

1. LLM Model Size

If you plan to work with smaller LLMs (like Llama 2 7B or Llama 3 8B) for tasks like generating content or translating text, the Apple M1 Max offers a strong combination of speed and affordability. In the tables above it posts higher tokens/second than the A100 on Llama 3 8B (418.77 vs. 53.18 at F16, and 355.45 vs. 133.38 with 4-bit quantization), assuming both sets of figures measure the same inference phase.

However, if you need larger LLMs (like Llama 3 70B) for advanced research, production environments, or tasks involving complex understanding and reasoning, the NVIDIA A100 SXM 80GB is the safer choice. Its 80 GB of HBM2e leaves room for the model weights plus working memory, and its Tensor Cores are built for these workloads. Note that the advantage shown in this article's data is capacity rather than measured speed: Table 2 reports 24.33 tokens/second for Llama 3 70B against 33.01 in Table 1.
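Whether a model fits in memory can be estimated before buying anything. The sketch below is a rough back-of-the-envelope calculation, not a measurement. The bits-per-weight values for F16, Q8_0, and Q4_0 follow from the format layouts; the Q4_K_M value is an approximation, and the `overhead_gb` allowance for context and runtime buffers is a hypothetical placeholder you should adjust.

```python
# Approximate bits stored per weight for each format.
# Q8_0 and Q4_0 store one 16-bit scale per block of 32 weights, which gives
# 8.5 and 4.5 bits per weight. The Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5, "Q4_K_M": 4.8}


def weight_size_gb(params_billion, fmt):
    """Estimate the size of the model weights in GB (10^9 bytes)."""
    bits = params_billion * 1e9 * BITS_PER_WEIGHT[fmt]
    return bits / 8 / 1e9


def fits(params_billion, fmt, memory_gb, overhead_gb=2.0):
    """True if the weights plus a rough overhead allowance fit in memory_gb.

    overhead_gb is a placeholder for context (KV cache) and runtime buffers.
    """
    return weight_size_gb(params_billion, fmt) + overhead_gb <= memory_gb


for fmt in ("F16", "Q4_K_M"):
    size = weight_size_gb(70, fmt)
    print(f"70B {fmt}: ~{size:.0f} GB, fits in 32 GB: {fits(70, fmt, 32)}, "
          f"fits in 80 GB: {fits(70, fmt, 80)}")
```

Under these assumptions, a 70B model at 4-bit quantization needs roughly 42 GB for weights alone, which rules out a 32 GB M1 Max, while the same model at F16 (about 140 GB) does not fit on a single 80 GB A100 either.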

2. Memory Bandwidth

Memory bandwidth is the rate at which a processor can read from and write to its memory. During text generation the model's weights are read for every token, so bandwidth often sets the ceiling on tokens per second. The Apple M1 Max compared here provides 400 GB/s of unified memory bandwidth, shared by the CPU and GPU, which also avoids copying data between separate CPU and GPU memory pools.

The NVIDIA A100 SXM 80GB has considerably more: its HBM2e memory delivers roughly 2 TB/s (2,039 GB/s in NVIDIA's specifications), about five times the M1 Max figure. The gap matters most for large models, where more data has to be read for every generated token.
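A common rule of thumb turns bandwidth into a rough speed ceiling: if every generated token requires reading all the weights once, tokens per second cannot exceed bandwidth divided by model size. The sketch below applies that simplification, using published bandwidth figures of 400 GB/s for the M1 Max and about 2,039 GB/s for the A100 80GB SXM. It ignores compute, KV cache traffic, and batching, so treat the result as an estimate rather than a prediction.

```python
def decode_ceiling_tokens_per_s(bandwidth_gb_s, weights_gb):
    """Rough upper bound on single-stream generation speed.

    Assumes each generated token reads all weights once from memory and
    ignores compute time, KV cache traffic, and caching effects.
    """
    if weights_gb <= 0:
        raise ValueError("weights_gb must be positive")
    return bandwidth_gb_s / weights_gb


# An 8B-parameter model at F16 is about 16 GB of weights (2 bytes per weight).
weights_gb = 16.0
for name, bandwidth in (("M1 Max", 400.0), ("A100 SXM 80GB", 2039.0)):
    ceiling = decode_ceiling_tokens_per_s(bandwidth, weights_gb)
    print(f"{name}: at most ~{ceiling:.0f} tokens/s for a {weights_gb:.0f} GB model")
```

A measured figure far above this ceiling usually means the benchmark was measuring prompt processing or batched inference, where many tokens share one pass over the weights, rather than single-stream generation.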

3. Cost and Availability

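When it comes to cost, the Apple M1 Max is considerably more affordable, and it comes as part of a complete Mac that you can buy off the shelf. The NVIDIA A100 SXM 80GB is a high-end, enterprise-grade GPU designed for large-scale AI deployments and comes with a hefty price tag. It is also an SXM module that ships inside server systems rather than as a standalone add-in card, so in practice you buy it as part of a server or rent it from a cloud provider.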

4. Power Consumption

For projects where power consumption is a concern, the Apple M1 Max is the more power-efficient option. It is a system-on-chip designed for laptops and compact desktops.

The NVIDIA A100 SXM 80GB, while incredibly powerful, is rated for up to 400 W and needs data-center power delivery and cooling. This could be a significant factor for individuals and small teams that need to be mindful of their energy footprint.
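One way to compare efficiency is energy per token: power draw divided by throughput. The sketch below shows the arithmetic. The throughput values are the Llama 3 70B figures from the tables above; the 400 W value is the A100 SXM's maximum rated power rather than a measured draw, and the 60 W value for the M1 Max is a hypothetical placeholder, so substitute your own measurements before drawing conclusions.

```python
def joules_per_token(power_watts, tokens_per_second):
    """Energy used per generated token, in joules (watts = joules/second)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return power_watts / tokens_per_second


# 400 W is the A100 SXM maximum rated power; real draw may be lower.
a100_energy = joules_per_token(400.0, 24.33)
# 60 W is a HYPOTHETICAL placeholder for the M1 Max, not a measurement.
m1_energy = joules_per_token(60.0, 33.01)

print(f"A100 SXM 80GB: ~{a100_energy:.1f} J/token (at rated maximum power)")
print(f"M1 Max:        ~{m1_energy:.1f} J/token (placeholder power figure)")
```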

5. Quantization

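Quantization is a technique that shrinks a model by representing its weights with lower precision, for example 4 or 8 bits instead of 16. This reduces memory requirements and can significantly improve inference speed, at some cost in accuracy.

Both the Apple M1 Max and the NVIDIA A100 SXM 80GB support quantization, but its effect on performance differs. In Table 2, the A100 runs Llama 3 8B about 2.5 times faster with Q4_K_M than with F16 (133.38 vs. 53.18 tokens/second), while in Table 1 the M1 Max is actually slower with Q4_K_M than with F16 (355.45 vs. 418.77).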

Think of quantization like a data diet for your LLM. It's all about finding the right balance between accuracy and speed.

For a non-technical analogy, imagine having a huge dictionary, but instead of carrying all the words in full, you decide to use abbreviations. You can still understand most of the words, but the dictionary becomes much smaller and easier to carry around. That's exactly what quantization does to LLMs.
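To make the idea concrete, here is a minimal sketch of 8-bit block quantization in plain Python: a group of weights shares one scale factor, and each weight is stored as a small integer. This is a simplified illustration in the spirit of formats like Q8_0, not the implementation used by any particular library, and the function names are made up for this example.

```python
def quantize_block(values):
    """Quantize floats to signed 8-bit integers with one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to 127; use 1.0 if the block is all zeros.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [max(-127, min(127, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the stored integers and scale."""
    return [scale * q for q in quants]


weights = [0.5, -1.0, 0.25, 0.0, 0.8125, -0.3]
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)

for original, approx in zip(weights, restored):
    print(f"{original:+.4f} -> {approx:+.4f} (error {abs(original - approx):.5f})")
```

Each restored weight lands within half a quantization step of the original. That small rounding error is the accuracy you trade for storing one byte per weight instead of two.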

6. Ecosystem and Compatibility

The Apple M1 Max is integrated into the Apple ecosystem. This provides a smooth experience if you already work on a Mac, but Apple silicon uses Metal rather than CUDA for GPU compute, so tools that only support CUDA will not run on it.

The NVIDIA A100 SXM 80GB, on the other hand, runs NVIDIA's CUDA software stack, which is widely supported across AI frameworks and tooling, most commonly on Linux servers. This makes it a more flexible choice for different development environments.
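If your code needs to run on both kinds of hardware, you can pick the backend at runtime. The sketch below assumes PyTorch, which exposes NVIDIA GPUs through its CUDA backend and Apple silicon GPUs through its MPS (Metal) backend. The `pick_device` helper is an illustrative name, and it falls back to the CPU when PyTorch is not installed.

```python
def pick_device():
    """Return "cuda", "mps", or "cpu" depending on what PyTorch can use."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed, so there is no GPU backend to probe.
        return "cpu"
    if torch.cuda.is_available():
        return "cuda"  # NVIDIA GPU such as the A100
    # Older PyTorch builds may not ship the MPS backend at all.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"  # Apple silicon GPU such as the M1 Max
    return "cpu"


device = pick_device()
print(f"Selected device: {device}")
```

Not every operation or library supports both backends equally, so check the documentation for the tools you depend on.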

Practical Recommendations: Apple M1 Max vs NVIDIA A100

Here are some practical recommendations based on your AI needs:

For individual developers and small teams with light LLM workloads: The Apple M1 Max offers excellent value for money. Its unified memory architecture and fast processing speeds make it ideal for experimenting with small LLMs, building prototypes, and even running basic LLM-powered applications.

For researchers and companies working on large-scale AI projects: The NVIDIA A100 SXM 80GB is the go-to choice. Its 80 GB of memory and powerful Tensor Cores give it the headroom that large models need.

For those focused on energy efficiency: The Apple M1 Max is the better option. Its lower power consumption makes it a more sustainable choice.

Conclusion: Choosing the Right Tool for the Job

Ultimately, the "best" choice between the Apple M1 Max and the NVIDIA A100 SXM 80GB depends on your specific needs and priorities. Both devices offer unique capabilities for running LLMs, and choosing the right one can make a real difference in your AI work.

Remember, there is no one-size-fits-all solution. By carefully considering your workload requirements, budget, and desired speed, you can choose the device that best suits your AI goals.

FAQ

Q: What are some of the best resources for learning more about LLMs?

- Hugging Face: Hugging Face is an open-source platform for building and sharing machine learning models, including LLMs. It offers a wealth of resources, including tutorials, documentation, and a vibrant community.

- Stanford CS224n Natural Language Processing with Deep Learning: This course offers a comprehensive introduction to NLP and deep learning.

Q: What are the benefits of using a GPU for running LLMs?

GPUs are designed for parallel processing, making them incredibly efficient for handling the complex calculations involved in LLM inference. This translates to faster results and improved performance.

Q: Do I need a powerful GPU to run LLMs locally?

While it's certainly possible to run smaller LLMs on a CPU alone, a capable GPU, whether the integrated GPU in the Apple M1 Max or a data-center GPU like the NVIDIA A100, will offer a significant performance boost for larger and more complex models.

Q: What are some other considerations when choosing a GPU for AI workloads besides the ones mentioned in the article?

- Software compatibility: Ensure that the GPU you choose is compatible with the software and libraries you plan to use.

- Cooling: Consider the cooling requirements of the GPU, especially if you're planning to run it heavily for long periods.

- Warranty: Make sure the GPU you purchase comes with a solid warranty in case of any issues.

Keywords

Apple M1 Max, NVIDIA A100, LLMs, Llama 2, Llama 3, Token Speed, GPU, AI, Deep Learning, Natural Language Processing, Inference, Performance, Unified Memory, Tensor Cores, Memory Bandwidth, Cost, Power Consumption, Quantization, Ecosystem, Compatibility, Hugging Face, NLP, TensorFlow, PyTorch