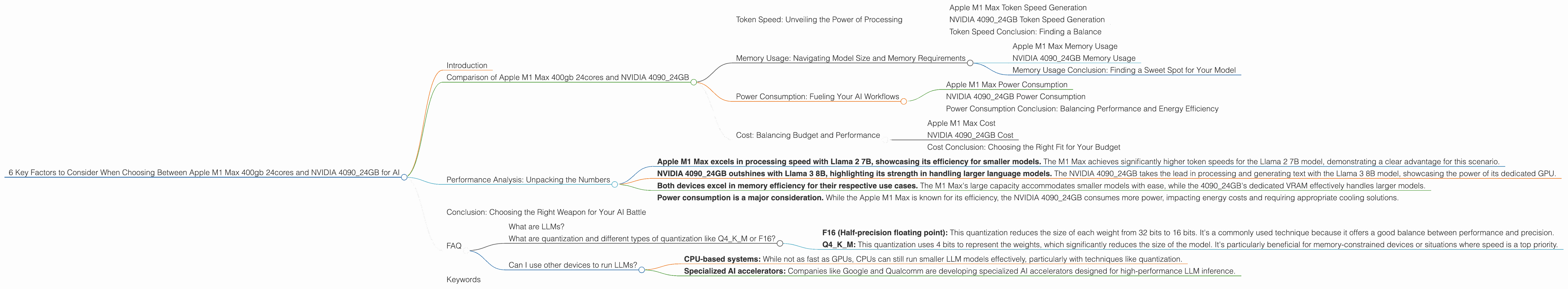

6 Key Factors to Consider When Choosing Between Apple M1 Max 400gb 24cores and NVIDIA 4090 24GB for AI

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models and techniques appearing constantly. These models could reshape many industries, but running them locally can be a challenge. Two popular choices for running LLMs locally are the Apple M1 Max 400gb 24cores (the M1 Max variant with 400 GB/s of memory bandwidth and a 24-core GPU) and the NVIDIA 4090_24GB (the GeForce RTX 4090 with 24GB of VRAM). Both offer impressive performance, but they have different strengths and weaknesses. This article covers the key factors to consider when choosing between them for your AI projects.

Comparison of Apple M1 Max 400gb 24cores and NVIDIA 4090_24GB

We'll compare how these devices run LLMs using real-world benchmark figures for Llama 2 7B and Llama 3 8B. The comparison covers four factors:

- Token Speed: This metric measures the processing speed of a device, specifically how many tokens it can process per second. A token is a unit of text in a language model, and a higher token speed means faster processing.

- Memory Usage: This metric assesses how much memory (unified memory on the M1 Max, VRAM on the 4090) is required to hold and run a specific LLM model.

- Power Consumption: This metric measures the electrical energy consumed by each device while running an LLM model.

- Cost: This metric accounts for the initial purchase price of the device and the ongoing operational costs, including power consumption.
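To make the token speed metric concrete, here is a minimal sketch of how tokens per second could be measured for any generator that yields tokens one at a time. The `fake_generate` function is a hypothetical stand-in for a real model call and is not part of any library; swap in your own runtime's streaming output.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run `generate` once and return tokens produced per second of wall time."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate() -> Iterable[str]:
    # Hypothetical stand-in for a model: yields 50 tokens with a small delay each.
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{measure_tokens_per_second(fake_generate):.1f} tokens/s")
```

Measuring wall-clock time around the whole stream folds prompt processing and generation together; benchmarks that report the two separately time each phase on its own.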

Token Speed: Unveiling the Power of Processing

Apple M1 Max Token Speed Generation

The Apple M1 Max 400gb 24cores generates text at a solid pace with the Llama 2 7B model: around 22.55 tokens per second with F16 (half-precision floating point) weights and 54.61 tokens per second with Q4_0 quantization.

NVIDIA 4090_24GB Token Speed Generation

The NVIDIA 4090_24GB shines in generation speed with the Llama 3 8B model, delivering 127.74 tokens per second with Q4_K_M quantization and 54.34 tokens per second with F16.

Token Speed Conclusion: Finding a Balance

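These figures are not a like-for-like comparison, since each device was measured on a different model and, at 4 bits, a different quantization format. Even so, the direction is clear. At 4-bit quantization the NVIDIA 4090_24GB generates 127.74 tokens per second on Llama 3 8B, more than twice the M1 Max's 54.61 tokens per second on the slightly smaller Llama 2 7B, and its F16 speed (54.34) roughly matches the M1 Max's 4-bit speed. The M1 Max is fast enough for interactive use, but the 4090_24GB has the advantage in raw generation speed.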
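One way to get a feel for these numbers is to convert them into waiting time. The sketch below takes the generation speeds quoted in this article and estimates how long a 500-token reply would take; the 500-token reply length is an arbitrary assumption, and prompt processing time is ignored.

```python
# Generation speeds quoted in this article, in tokens per second.
SPEEDS = {
    ("M1 Max", "Llama 2 7B", "F16"): 22.55,
    ("M1 Max", "Llama 2 7B", "Q4_0"): 54.61,
    ("RTX 4090", "Llama 3 8B", "F16"): 54.34,
    ("RTX 4090", "Llama 3 8B", "Q4_K_M"): 127.74,
}


def seconds_for_reply(tokens_per_second: float, reply_tokens: int = 500) -> float:
    """Time to generate `reply_tokens` tokens at a steady generation speed."""
    return reply_tokens / tokens_per_second


for (device, model, quant), tps in SPEEDS.items():
    print(f"{device:9} {model:11} {quant:7} {seconds_for_reply(tps):5.1f} s")
```

Because the two devices were measured on different models, the output is best read as a rough guide rather than a head-to-head result.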

Memory Usage: Navigating Model Size and Memory Requirements

Apple M1 Max Memory Usage

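The "400gb" in the Apple M1 Max 400gb 24cores label refers to 400 GB/s of memory bandwidth, not 400GB of capacity. The chip is sold with 32GB or 64GB of unified memory shared between the CPU and GPU, which is enough to accommodate Llama 2 7B and Llama 3 8B at either F16 or 4-bit precision.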

NVIDIA 4090_24GB Memory Usage

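The NVIDIA 4090_24GB, with its dedicated 24GB of VRAM, comfortably holds Llama 3 8B at either F16 or 4-bit precision. Llama 3 70B is another matter: even at 4-bit quantization its weights come to roughly 40GB, which exceeds 24GB, so it cannot run entirely on a single card without offloading part of the model to system memory.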

Memory Usage Conclusion: Finding a Sweet Spot for Your Model

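For the 7B and 8B models discussed here, both devices have enough memory. Capacity becomes the deciding factor for larger models: a single 4090_24GB is limited to what fits in 24GB of VRAM, while an M1 Max configured with 64GB of unified memory has room for models that exceed that limit, albeit at lower speed.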
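A rough rule of thumb for whether a model fits is parameter count times bits per weight. The sketch below applies it to a 24GB budget. The parameter counts are nominal (7, 8, and 70 billion), the 4.5 bits per weight is an approximation for 4-bit formats that store a scale alongside each block, and the 2GB overhead for context and runtime buffers is an assumption, not a measured value.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, budget_gb: float,
         overhead_gb: float = 2.0) -> bool:
    """True if the weights plus an assumed fixed overhead fit in the budget."""
    return weights_gb(params_billion, bits_per_weight) + overhead_gb <= budget_gb


MODELS = {"Llama 2 7B": 7, "Llama 3 8B": 8, "Llama 3 70B": 70}  # nominal sizes
FORMATS = {"F16": 16.0, "4-bit (~4.5 bpw)": 4.5}  # bits per weight, approximate

for name, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weights_gb(params, bits)
        verdict = "fits" if fits(params, bits, budget_gb=24) else "does not fit"
        print(f"{name:12} {fmt:17} {size:6.1f} GB  {verdict} in 24 GB")
```

Changing `budget_gb` to the usable share of a Mac's unified memory gives the equivalent estimate for the M1 Max; real usage also grows with context length.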

Power Consumption: Fueling Your AI Workflows

Apple M1 Max Power Consumption

While specific power consumption data is not publicly available for the Apple M1 Max 400gb 24cores, it's known that Apple prioritizes power efficiency in its processors.

NVIDIA 4090_24GB Power Consumption

The NVIDIA 4090_24GB is a high-performance GPU with a rated board power of 450 W, and it can draw a substantial share of that when running demanding tasks like LLM inference. This can lead to higher energy bills and potential cooling challenges.

Power Consumption Conclusion: Balancing Performance and Energy Efficiency

The Apple M1 Max is likely to consume less power than the NVIDIA 4090_24GB, making it the more energy-efficient option, especially for individuals or organizations concerned about their carbon footprint. The NVIDIA 4090_24GB offers higher generation speed, but at the cost of higher energy consumption.
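Power draw matters most in relation to throughput, so a useful derived figure is energy per token: watts divided by tokens per second. The sketch below shows the arithmetic. Both wattages are assumptions for illustration only: 450 W is the 4090's rated board power used as an upper bound, and 60 W for the M1 Max is a hypothetical placeholder, since this article has no measured figure. Replace both with readings from your own system.

```python
def joules_per_token(watts: float, tokens_per_second: float) -> float:
    """Energy used per generated token, given average power draw in watts."""
    return watts / tokens_per_second


def kwh_per_million_tokens(watts: float, tokens_per_second: float) -> float:
    """Energy for one million tokens, in kilowatt-hours (1 kWh = 3.6e6 J)."""
    return joules_per_token(watts, tokens_per_second) * 1_000_000 / 3_600_000


# (assumed watts, tokens per second quoted in this article for 4-bit models)
SCENARIOS = {
    "NVIDIA 4090_24GB (450 W, upper bound)": (450.0, 127.74),
    "Apple M1 Max (60 W, hypothetical)": (60.0, 54.61),
}

for label, (watts, tps) in SCENARIOS.items():
    print(f"{label:40} {joules_per_token(watts, tps):5.2f} J/token  "
          f"{kwh_per_million_tokens(watts, tps):5.2f} kWh per 1M tokens")
```

Multiplying the kWh figure by your electricity price gives the running cost per million tokens, which can be set against the purchase price of each device.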

Cost: Balancing Budget and Performance

Apple M1 Max Cost

The Apple M1 Max 400gb 24cores is not sold on its own. It comes only inside complete Macs such as the MacBook Pro and Mac Studio, so its cost is the cost of a whole premium system. It's a significant investment.

NVIDIA 4090_24GB Cost

The NVIDIA 4090_24GB card on its own typically costs less than an M1 Max Mac, but it is still a significant investment, and it needs a host PC with a suitable power supply and cooling.

Cost Conclusion: Choosing the Right Fit for Your Budget

Both options represent a substantial financial commitment. The choice boils down to your budget and the performance your AI projects need. The M1 Max buys a complete, premium system for a higher price, while the 4090_24GB is the more affordable part on its own but depends on a PC to host it.

Performance Analysis: Unpacking the Numbers

The figures above offer some useful insights into how these devices handle Llama 2 and Llama 3 models:

Apple M1 Max delivers usable speed with Llama 2 7B. At 54.61 tokens per second with Q4_0 and 22.55 with F16, it is comfortably fast for interactive use, though no 4090 figure for the same model is given here for a direct comparison.

NVIDIA 4090_24GB leads in raw speed with Llama 3 8B. At 127.74 tokens per second with Q4_K_M and 54.34 with F16, it posts the highest generation figures in this comparison, reflecting the throughput of its dedicated GPU and VRAM.

Both devices have enough memory for the 7B and 8B models. The M1 Max draws on unified memory shared with the CPU, while the 4090_24GB relies on 24GB of dedicated VRAM, which caps the size of model it can hold entirely on the card.

Power consumption is a major consideration. While the Apple M1 Max is known for its efficiency, the NVIDIA 4090_24GB consumes more power, impacting energy costs and requiring appropriate cooling solutions.

Conclusion: Choosing the Right Weapon for Your AI Battle

Choosing between the Apple M1 Max 400gb 24cores and NVIDIA 4090_24GB for AI is a decision that depends on your specific needs and priorities.

If you run 7B-class models, prioritize power efficiency, and value a streamlined user experience, the Apple M1 Max 400gb 24cores is an excellent choice.

If you demand the highest generation speed for models that fit in 24GB of VRAM, and are willing to invest in a powerful GPU along with the power and cooling it needs, the NVIDIA 4090_24GB is your champion.

FAQ

What are LLMs?

LLMs, or Large Language Models, are AI systems trained on massive datasets of text and code. They can generate text, translate languages, write creative content, and answer questions. In short, they are models that take in human language and produce a fitting response.

What is quantization, and what do labels like Q4_K_M and F16 mean?

Quantization is a technique used to reduce the memory footprint and computational requirements of LLMs. The idea is to use fewer bits to represent the model's weights, making it smaller and faster.

F16 (half-precision floating point): Each weight is stored as a 16-bit float rather than a 32-bit one, halving the size. Strictly speaking this is reduced precision rather than quantization, and it serves as the near-lossless baseline against which quantized formats are compared.

Q4_K_M: A 4-bit quantization format that stores each weight in roughly 4 to 5 bits, which cuts the model to around a third of its F16 size. It's particularly beneficial for memory-constrained devices or situations where speed is a top priority. Q4_0, used for the M1 Max figures above, is an older and simpler 4-bit format.
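The core idea behind 4-bit quantization can be sketched in a few lines. The example below uses a simplified symmetric scheme in which a block of weights shares one scale and each weight becomes a signed 4-bit integer. It is an illustration of the principle only, not the actual Q4_0 or Q4_K_M layout, and the sample weights are made up.

```python
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to signed 4-bit integers in [-8, 7] that share one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest weight maps to +/-7
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate floats from the 4-bit codes and the shared scale."""
    return [scale * c for c in codes]


# Made-up sample weights for illustration.
block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print(codes)
print(f"max round-trip error: {max_error:.4f} (scale {scale:.4f})")
```

Each weight now needs 4 bits plus a share of the per-block scale, which is why real 4-bit formats average slightly more than 4 bits per weight. The round-trip error is bounded by half the scale, so blocks with one unusually large weight lose the most precision.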

Can I use other devices to run LLMs?

Absolutely! The landscape of LLM hardware is rapidly evolving, and other devices are becoming increasingly popular for running LLMs locally. These include:

- CPU-based systems: While not as fast as GPUs, CPUs can still run smaller LLM models effectively, particularly with techniques like quantization.

- Specialized AI accelerators: Companies like Google and Qualcomm are developing specialized AI accelerators designed for high-performance LLM inference.

Keywords

Apple M1 Max, NVIDIA 4090_24GB, LLM, Large Language Model, AI, Token Speed, Memory Usage, Power Consumption, Cost, Llama 2, Llama 3, Quantization, F16, Q4_K_M, GPU, CPU, AI Accelerator