6 Key Factors to Consider When Choosing Between Apple M1 Max 400gb 24cores and NVIDIA 3090 24GB x2 for AI

Introduction

Choosing the right hardware for running large language models (LLMs) is crucial for achieving optimal performance and efficiency. When it comes to local inference, two popular contenders are the Apple M1 Max 400GB 24-core processor and the NVIDIA 3090 24GB x2 setup. Both offer impressive capabilities, but they excel in different areas. This article will delve into a detailed comparison of these two hardware options, highlighting their strengths, weaknesses, and ideal use cases across a range of popular LLMs.

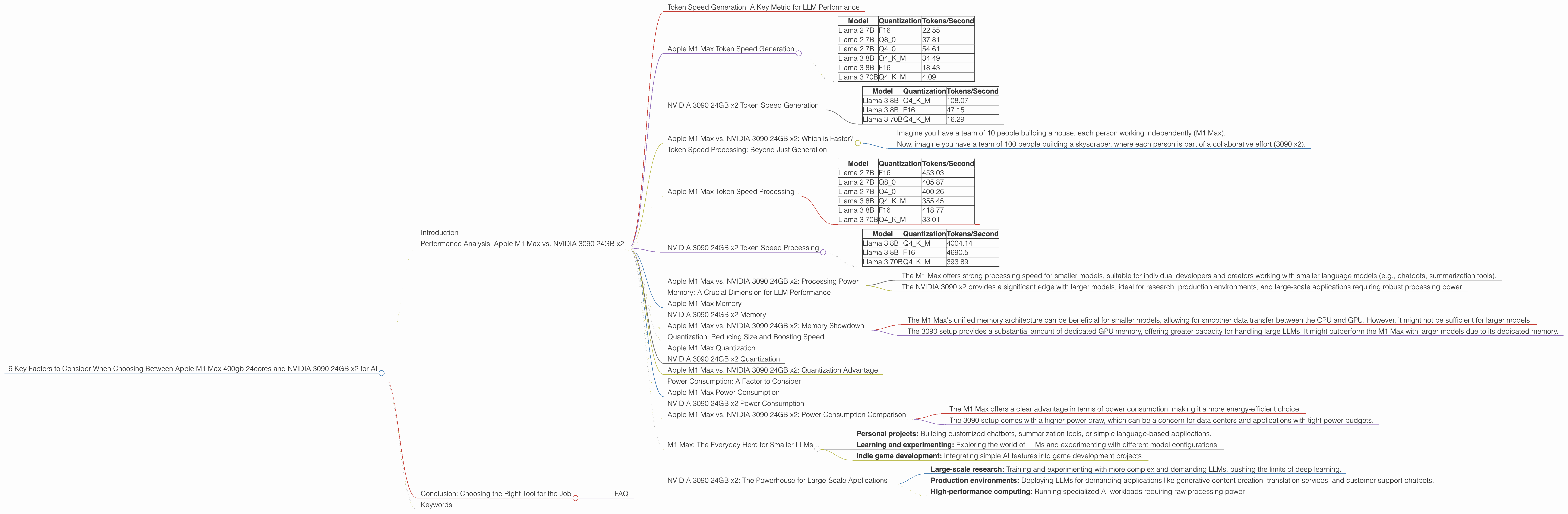

Performance Analysis: Apple M1 Max vs. NVIDIA 3090 24GB x2

Token Speed Generation: A Key Metric for LLM Performance

One of the most critical metrics for LLM performance is token speed generation. Essentially, this measures how quickly a device can process and generate text tokens, the building blocks of language. Higher token speed translates to faster response times and smoother user interactions.

Apple M1 Max Token Speed Generation

The Apple M1 Max demonstrates impressive token speed generation, particularly with smaller models like Llama 2 7B. Let's take a look at the numbers:

| Model | Quantization | Tokens/Second |

|---|---|---|

| Llama 2 7B | F16 | 22.55 |

| Llama 2 7B | Q8_0 | 37.81 |

| Llama 2 7B | Q4_0 | 54.61 |

| Llama 3 8B | Q4KM | 34.49 |

| Llama 3 8B | F16 | 18.43 |

| Llama 3 70B | Q4KM | 4.09 |

Key takeaway: The M1 Max shines when dealing with smaller LLMs, particularly when using quantized models (Q80 and Q40). While its performance with larger models like Llama 3 70B is decent, it falls behind the NVIDIA 3090 setup.

NVIDIA 3090 24GB x2 Token Speed Generation

The NVIDIA 3090 offers significantly higher token speed generation with larger LLMs, although it doesn't quite match the M1 Max's performance with smaller LLMs.

| Model | Quantization | Tokens/Second |

|---|---|---|

| Llama 3 8B | Q4KM | 108.07 |

| Llama 3 8B | F16 | 47.15 |

| Llama 3 70B | Q4KM | 16.29 |

Key takeaway: The 3090 setup offers superior token speed for larger models like Llama 3 70B, making it a strong contender for research and production environments working with advanced models.

Apple M1 Max vs. NVIDIA 3090 24GB x2: Which is Faster?

To simplify the understanding, let's take an analogy:

- Imagine you have a team of 10 people building a house, each person working independently (M1 Max).

- Now, imagine you have a team of 100 people building a skyscraper, where each person is part of a collaborative effort (3090 x2).

In the first scenario, the smaller team (M1 Max) might be faster at building a simple house. However, when constructing a complex skyscraper (large LLM), the larger team (3090 x2) is more efficient due to its greater capacity and collaboration.

Token Speed Processing: Beyond Just Generation

While token speed generation is important, it's also crucial to consider token speed processing, which measures how quickly a device can process the entire LLM model. This factors into overall inference speed and is an essential part of the equation.

Apple M1 Max Token Speed Processing

The M1 Max demonstrates impressive processing speed with smaller models.

| Model | Quantization | Tokens/Second |

|---|---|---|

| Llama 2 7B | F16 | 453.03 |

| Llama 2 7B | Q8_0 | 405.87 |

| Llama 2 7B | Q4_0 | 400.26 |

| Llama 3 8B | Q4KM | 355.45 |

| Llama 3 8B | F16 | 418.77 |

| Llama 3 70B | Q4KM | 33.01 |

Key takeaway: The M1 Max's processing power shines with smaller models, offering fast response times and efficient inference. However, its performance with larger models like Llama 3 70B is relatively lower compared to the 3090 setup.

NVIDIA 3090 24GB x2 Token Speed Processing

The 3090 setup boasts significantly faster processing speeds with larger models.

| Model | Quantization | Tokens/Second |

|---|---|---|

| Llama 3 8B | Q4KM | 4004.14 |

| Llama 3 8B | F16 | 4690.5 |

| Llama 3 70B | Q4KM | 393.89 |

Key takeaway: The 3090 setup excels at processing larger LLM models, making it a solid choice for research and production environments requiring high throughput.

Apple M1 Max vs. NVIDIA 3090 24GB x2: Processing Power

The M1 Max and 3090 setup demonstrate a clear trade-off:

- The M1 Max offers strong processing speed for smaller models, suitable for individual developers and creators working with smaller language models (e.g., chatbots, summarization tools).

- The NVIDIA 3090 x2 provides a significant edge with larger models, ideal for research, production environments, and large-scale applications requiring robust processing power.

Memory: A Crucial Dimension for LLM Performance

Memory is a critical factor in running LLMs, especially larger models. Insufficient memory can lead to performance bottlenecks and even crashes.

Apple M1 Max Memory

The M1 Max offers up to 96GB of unified memory, which is shared between the CPU and GPU. This unified memory architecture allows for faster data transfer between the CPU and GPU, potentially improving performance.

NVIDIA 3090 24GB x2 Memory

The NVIDIA 3090 x2 setup offers a total of 48GB of dedicated GPU memory. Each card has 24GB of GDDR6X memory, which is designed for high bandwidth and low latency.

Apple M1 Max vs. NVIDIA 3090 24GB x2: Memory Showdown

- The M1 Max's unified memory architecture can be beneficial for smaller models, allowing for smoother data transfer between the CPU and GPU. However, it might not be sufficient for larger models.

- The 3090 setup provides a substantial amount of dedicated GPU memory, offering greater capacity for handling large LLMs. It might outperform the M1 Max with larger models due to its dedicated memory.

Quantization: Reducing Size and Boosting Speed

Quantization is a technique that reduces the size of LLM models by representing the model's weights with fewer bits. This can lead to significant improvements in performance and efficiency.

Apple M1 Max Quantization

The M1 Max supports various quantization levels, including Q80 and Q40.

NVIDIA 3090 24GB x2 Quantization

The NVIDIA 3090 setup also supports quantization, including Q4KM.

Apple M1 Max vs. NVIDIA 3090 24GB x2: Quantization Advantage

Both devices benefit from quantization, reducing model size and potentially boosting performance. However, the specific quantization methods and their impact on performance can vary depending on the LLM and the chosen hardware. Therefore, thorough testing and experimentation might be necessary to determine the optimal quantization level for each LLM and hardware setup.

Power Consumption: A Factor to Consider

Power consumption is a significant factor, especially in data centers and resource-constrained environments.

Apple M1 Max Power Consumption

The M1 Max is known for its relatively low power consumption compared to high-end GPUs.

NVIDIA 3090 24GB x2 Power Consumption

The NVIDIA 3090 x2 setup consumes significantly more power due to its high-performance GPUs.

Apple M1 Max vs. NVIDIA 3090 24GB x2: Power Consumption Comparison

- The M1 Max offers a clear advantage in terms of power consumption, making it a more energy-efficient choice.

- The 3090 setup comes with a higher power draw, which can be a concern for data centers and applications with tight power budgets.

M1 Max: The Everyday Hero for Smaller LLMs

The Apple M1 Max emerges as a cost-effective and energy-efficient choice for developers and creators working with smaller LLMs. It offers impressive performance with Llama 2 7B models, making it suitable for various tasks like:

- Personal projects: Building customized chatbots, summarization tools, or simple language-based applications.

- Learning and experimenting: Exploring the world of LLMs and experimenting with different model configurations.

- Indie game development: Integrating simple AI features into game development projects.

NVIDIA 3090 24GB x2: The Powerhouse for Large-Scale Applications

The NVIDIA 3090 setup is a powerful choice for research and production environments demanding high performance with large LLMs. It excels at tasks like:

- Large-scale research: Training and experimenting with more complex and demanding LLMs, pushing the limits of deep learning.

- Production environments: Deploying LLMs for demanding applications like generative content creation, translation services, and customer support chatbots.

- High-performance computing: Running specialized AI workloads requiring raw processing power.

Conclusion: Choosing the Right Tool for the Job

Ultimately, the best choice between the Apple M1 Max and NVIDIA 3090 x2 depends on your specific needs and use cases.

- M1 Max: Ideal for smaller LLMs, cost-effective, and energy-efficient.

- NVIDIA 3090 x2: Offers unparalleled performance with larger models and is suitable for research, production, and high-performance computing.

FAQ

1. What are LLMs?

LLMs are computer programs trained on massive datasets of text and code, capable of understanding and generating human-like text.

2. What is quantization?

Quantization is a technique that reduces the size of LLMs by representing the model's weights with fewer bits, making them more efficient.

3. Can I use an LLM on my computer?

Yes! Local inference allows you to run LLMs on your own device without relying on cloud services.

4. Which device is better for me?

That depends on your specific needs. If you are working with smaller LLMs, the M1 Max is a great choice. For larger models and high-performance computing, the NVIDIA 3090 x2 is a better option.

5. What about other devices?

This article focuses on comparing the Apple M1 Max and NVIDIA 3090 x2. Other devices, such as the A100 GPU, might also be suitable for running LLMs.

Keywords

LLM, Apple M1 Max, NVIDIA 3090, Token Speed, Generation, Processing, Quantization, Memory, Power Consumption, Local Inference, AI, Deep Learning, Research, Production, Development, Chatbot, Summarization, Game Development, High-Performance Computing