6 Key Factors to Consider When Choosing Between Apple M1 Max 400gb 24cores and NVIDIA 3080 10GB for AI

Introduction

The world of Large Language Models (LLMs) is exploding, with new models being released almost daily. These models are capable of generating human-quality text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But running these models locally can be a challenge, requiring powerful hardware that can handle the massive computational demands.

Choosing the right hardware for running LLMs locally is crucial, and this is where two powerhouses emerge: the Apple M1 Max 400GB 24cores and the NVIDIA 3080 10GB. This article delves into the world of these two heavy-hitters, analyzing their individual strengths and weaknesses, ultimately guiding you towards the best choice for your specific needs.

Comparison of Apple M1 Max 400GB 24cores and NVIDIA 3080 10GB for AI

Let's dive into the world of running LLMs on Apple M1 Max 400GB 24cores and NVIDIA 3080 10GB, understanding the strengths and weaknesses of each. For a comprehensive analysis, we'll compare the performance of each device on various LLM models using data gathered from the llama.cpp benchmark library.

Performance Analysis

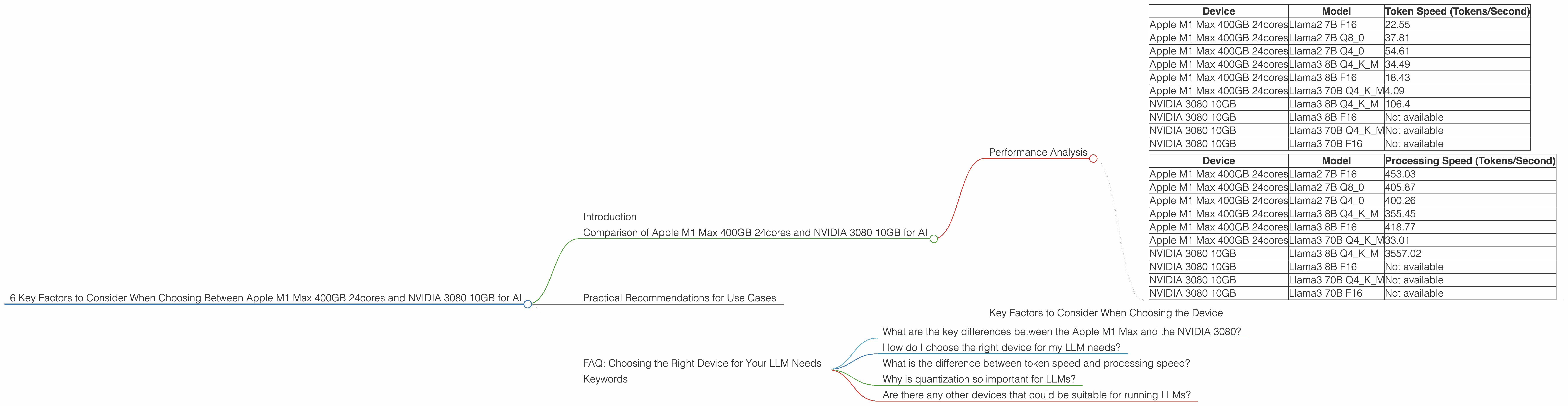

Comparing Token Speed Generation:

Let's start by measuring the speed at which these devices can generate tokens, the building blocks of text, for various LLMs. The token generation speed is crucial, especially if you're using the LLM for tasks like chatbots or creative writing, where real-time response is essential.

| Device | Model | Token Speed (Tokens/Second) |

|---|---|---|

| Apple M1 Max 400GB 24cores | Llama2 7B F16 | 22.55 |

| Apple M1 Max 400GB 24cores | Llama2 7B Q8_0 | 37.81 |

| Apple M1 Max 400GB 24cores | Llama2 7B Q4_0 | 54.61 |

| Apple M1 Max 400GB 24cores | Llama3 8B Q4KM | 34.49 |

| Apple M1 Max 400GB 24cores | Llama3 8B F16 | 18.43 |

| Apple M1 Max 400GB 24cores | Llama3 70B Q4KM | 4.09 |

| NVIDIA 3080 10GB | Llama3 8B Q4KM | 106.4 |

| NVIDIA 3080 10GB | Llama3 8B F16 | Not available |

| NVIDIA 3080 10GB | Llama3 70B Q4KM | Not available |

| NVIDIA 3080 10GB | Llama3 70B F16 | Not available |

Interpreting the Results:

As we can see, the NVIDIA 3080 10GB seems to be the clear winner in token speed generation, particularly for the Llama 3 8B Q4KM model. Its token speed of 106.4 tokens per second is blazing fast compared to the M1 Max's 34.49 tokens per second. However, it's important to remember that the NVIDIA 3080 lacks performance data for the Llama 3 70B model.

Apple M1 Token Speed Generation:

The Apple M1 Max 400GB 24cores performs admirably in the generation of tokens for the smaller Llama2 7B model, particularly when using quantization techniques (Q80 and Q40) that reduce the model size and memory footprint. However, when we move to larger models like Llama3 70B, it can become a bit of a bottleneck, even with quantization.

Quantization:

Quantization is a technique used to compress LLMs, making them smaller and faster to run. It's a bit like compressing an image — you reduce the file size while maintaining the overall quality. In the context of LLMs, quantization reduces the precision of the model's parameters, making it more efficient but potentially also less accurate.

NVIDIA 3080 Token Speed Generation:

The NVIDIA 3080 10GB excels in token speed, showing its prowess for larger model sizes. However, it's important to note that the lack of data for the Llama 3 70B model might indicate potential limitations for extremely large models.

Comparing Processing Speed:

Now, let's shift our focus to the processing speed, which measures how quickly the device can process the input text to prepare it for the LLM. Similar to token generation speed, a faster processing speed translates into prompter responsiveness and smoother interactions.

| Device | Model | Processing Speed (Tokens/Second) |

|---|---|---|

| Apple M1 Max 400GB 24cores | Llama2 7B F16 | 453.03 |

| Apple M1 Max 400GB 24cores | Llama2 7B Q8_0 | 405.87 |

| Apple M1 Max 400GB 24cores | Llama2 7B Q4_0 | 400.26 |

| Apple M1 Max 400GB 24cores | Llama3 8B Q4KM | 355.45 |

| Apple M1 Max 400GB 24cores | Llama3 8B F16 | 418.77 |

| Apple M1 Max 400GB 24cores | Llama3 70B Q4KM | 33.01 |

| NVIDIA 3080 10GB | Llama3 8B Q4KM | 3557.02 |

| NVIDIA 3080 10GB | Llama3 8B F16 | Not available |

| NVIDIA 3080 10GB | Llama3 70B Q4KM | Not available |

| NVIDIA 3080 10GB | Llama3 70B F16 | Not available |

Interpreting the Results:

Comparing the processing speeds, we see a similar trend: the NVIDIA 3080 10GB dominates, with a processing speed of 3557.02 tokens per second for the Llama 3 8B Q4KM model. The Apple M1 Max 400GB 24cores lags considerably, achieving a processing speed of 355.45 tokens per second for the same model.

Apple M1 Processing Speed:

The Apple M1 Max 400GB 24cores excels in processing smaller models, like Llama2 7B, with its F16 processing speed of 453.03 tokens per second. However, as we move to larger models like Llama3 70B, the processing speed significantly drops to 33.01 tokens per second.

NVIDIA 3080 Processing Speed:

The NVIDIA 3080 10GB showcases its processing power, handling the Llama 3 8B Q4KM model with exceptional efficiency. However, the lack of data for larger models like Llama 3 70B again raises concerns about its ability to manage larger model sizes.

Key Factors to Consider When Choosing the Device

Now that we've taken a deep dive into the performance aspects, let's analyze the key factors that might influence your decision.

1. Model Size:

The size of the LLM you want to run is a critical factor. For smaller models like Llama2 7B, the Apple M1 Max 400GB 24cores holds its own, offering competitive performance and efficiency. However, if you plan to run larger models like Llama3 70B, the NVIDIA 3080 10GB might be a better choice, thanks to its superior processing speed and potential for handling larger models.

2. Quantization:

Quantization, a technique used to reduce the size of LLMs, becomes more relevant for larger models. Apple M1 Max 400GB 24cores demonstrates impressive performance when using quantization, especially in token speed generation.

3. Memory and Storage:

The Apple M1 Max 400GB 24cores offers generous memory and storage, making it a good option for running multiple LLMs simultaneously, while also providing ample space for storing large datasets.

4. Power Consumption:

The Apple M1 Max 400GB 24cores is known for its energy efficiency, consuming less power than a traditional GPU. This can be a significant advantage if you're concerned about energy costs or are using your device in a mobile setup.

5. Cost:

The NVIDIA 3080 10GB is generally more expensive than the Apple M1 Max 400GB 24cores. Consider your budget and the power you need.

6. Ecosystem and Software:

The Apple M1 Max offers a seamless integration with the Apple ecosystem, while the NVIDIA 3080 is a powerful choice for users who prefer the flexibility and extensive support of the NVIDIA ecosystem.

Practical Recommendations for Use Cases

Knowing the key factors and the performance characteristics of each device, let's explore some practical use cases:

1. Data Scientists and Researchers:

For data scientists and researchers who frequently work with large LLMs and datasets, the NVIDIA 3080 10GB is a powerful choice. Its processing speed and potential for managing larger models make it well-suited for training and fine-tuning LLMs.

2. Developers Building Chatbots or Conversational AI:

If building a chatbot or conversational AI system is your goal, the Apple M1 Max 400GB 24cores is a strong contender. Its efficient processing and token generation speeds for smaller models, along with its robust memory and storage, make it a suitable choice for developing and deploying interactive applications.

3. Students and Hobbyists Exploring LLMs:

For students and hobbyists who are just getting started with exploring LLMs, the Apple M1 Max 400GB 24cores is a fantastic option. Its cost-effectiveness and ease of use make it an ideal entry point into the world of running LLMs locally.

FAQ: Choosing the Right Device for Your LLM Needs

What are the key differences between the Apple M1 Max and the NVIDIA 3080?

The Apple M1 Max focuses on powerful performance within an energy-efficient design and is particularly strong with smaller LLMs. The NVIDIA 3080 excels at processing large models and offers raw processing speed, but comes with greater power consumption.

How do I choose the right device for my LLM needs?

Consider the size of the LLM you want to run, your budget, and the type of tasks you'll be performing. For smaller models and general LLM exploration, the Apple M1 Max is a good value. For larger models and computationally intensive tasks, the NVIDIA 3080 is a more potent option.

What is the difference between token speed and processing speed?

Token speed measures how fast a device can generate outputs, while processing speed measures how fast a device can prepare the input text for the LLM. Both are vital for a smooth LLM experience.

Why is quantization so important for LLMs?

Quantization reduces the size and complexity of models, making them run faster and with lower memory requirements. It's a valuable technique for running larger LLMs on devices with limited resources.

Are there any other devices that could be suitable for running LLMs?

Yes, there are other devices like the A100, A1000, and even CPUs with specialized AI acceleration. However, this article focuses on the comparison between the Apple M1 Max and the NVIDIA 3080.

Keywords

Apple M1 Max, NVIDIA 3080, LLM, Large Language Model, Token Speed, Processing Speed, Quantization, Llama2, Llama3, AI, Machine Learning, Deep Learning, GPU, CPU.