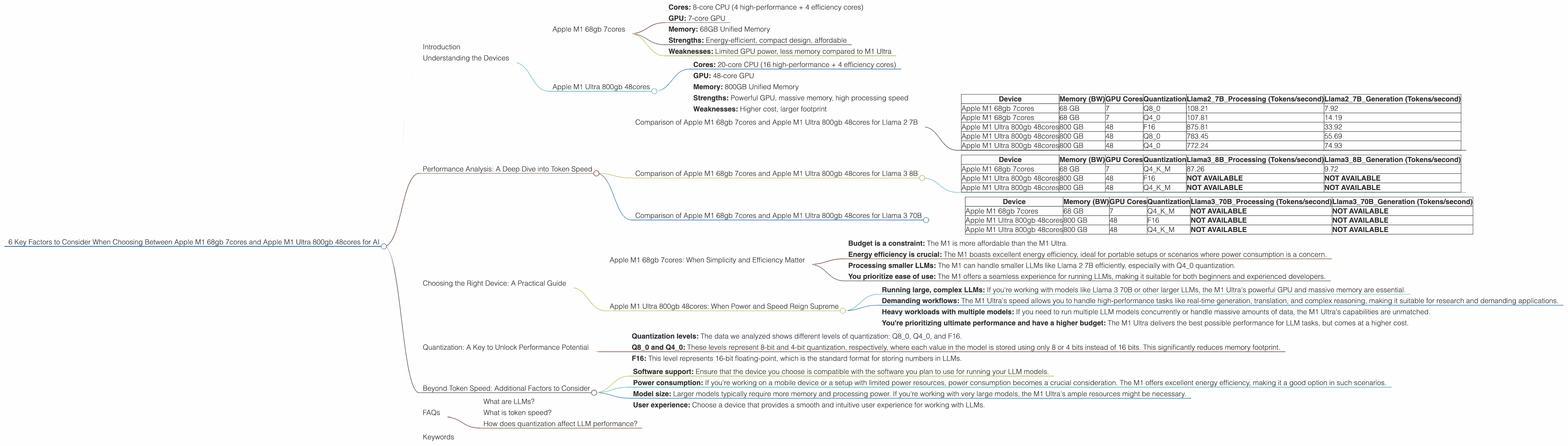

6 Key Factors to Consider When Choosing Between Apple M1 68gb 7cores and Apple M1 Ultra 800gb 48cores for AI

Introduction

The world of large language models (LLMs) is evolving at a breakneck pace, pushing the boundaries of what's possible with artificial intelligence. From generating creative text formats to translating languages, LLMs are transforming various industries.

But running these sophisticated models demands capable hardware, and choosing the right device can be daunting. In this article we compare two Apple chips for running LLMs: the M1 68gb 7cores, an M1 with 68 GB/s of memory bandwidth and a 7-core GPU, and the M1 Ultra 800gb 48cores, with 800 GB/s of memory bandwidth and a 48-core GPU. Despite the labels, "68gb" and "800gb" refer to memory bandwidth, not memory capacity.

Understanding the Devices

Before diving into the comparison, let's understand the key features of each device:

Apple M1 68gb 7cores

- Cores: 8-core CPU (4 high-performance + 4 efficiency cores)

- GPU: 7-core GPU

- Memory bandwidth: 68 GB/s (unified memory, configurations up to 16 GB)

- Strengths: Energy-efficient, compact design, affordable

- Weaknesses: Limited GPU power; much lower memory bandwidth and capacity than the M1 Ultra

Apple M1 Ultra 800gb 48cores

- Cores: 20-core CPU (16 high-performance + 4 efficiency cores)

- GPU: 48-core GPU

- Memory bandwidth: 800 GB/s (unified memory, configurations up to 128 GB)

- Strengths: Powerful GPU, very high memory bandwidth, large memory capacity, high processing speed

- Weaknesses: Higher cost, larger footprint

Performance Analysis: A Deep Dive into Token Speed

Now to the core question: how do these devices perform with different LLMs? We measure token speed in tokens per second, reported separately for two phases: processing, where the model reads the input prompt, and generation, where it produces new tokens one at a time.

Token speed is a crucial metric for LLM performance because it determines how long you wait: processing speed governs how quickly a long prompt is read in, and generation speed governs how quickly the response appears. Higher is better for both.
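To see how the two speeds combine, here is a rough sketch that estimates the wall-clock time of one request as prompt tokens divided by processing speed plus output tokens divided by generation speed. It assumes the two phases run back to back and ignores model loading and sampling overhead; `response_time_s` is a hypothetical helper, and the 512-token prompt and 128-token reply are arbitrary illustrative sizes. The speeds plugged in are the Llama 2 7B Q4_0 figures from the comparison table in this article.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Rough request latency: read the prompt, then generate the reply.

    Assumes the two phases run sequentially and ignores model loading,
    sampling, and other overheads.
    """
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("speeds must be positive")
    prompt_time = prompt_tokens / processing_tps       # seconds spent reading the prompt
    generation_time = output_tokens / generation_tps   # seconds spent writing the reply
    return prompt_time + generation_time


# Llama 2 7B, Q4_0, tokens/second taken from the comparison table in this article
m1 = response_time_s(512, 128, processing_tps=107.81, generation_tps=14.19)
ultra = response_time_s(512, 128, processing_tps=772.24, generation_tps=74.93)
print(f"M1: {m1:.1f} s, M1 Ultra: {ultra:.1f} s")
```

With these sizes the estimate is roughly 13.8 s on the M1 versus 2.4 s on the M1 Ultra, and about 9 of the M1's seconds are spent generating, which is why generation speed tends to dominate for chat-style use.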

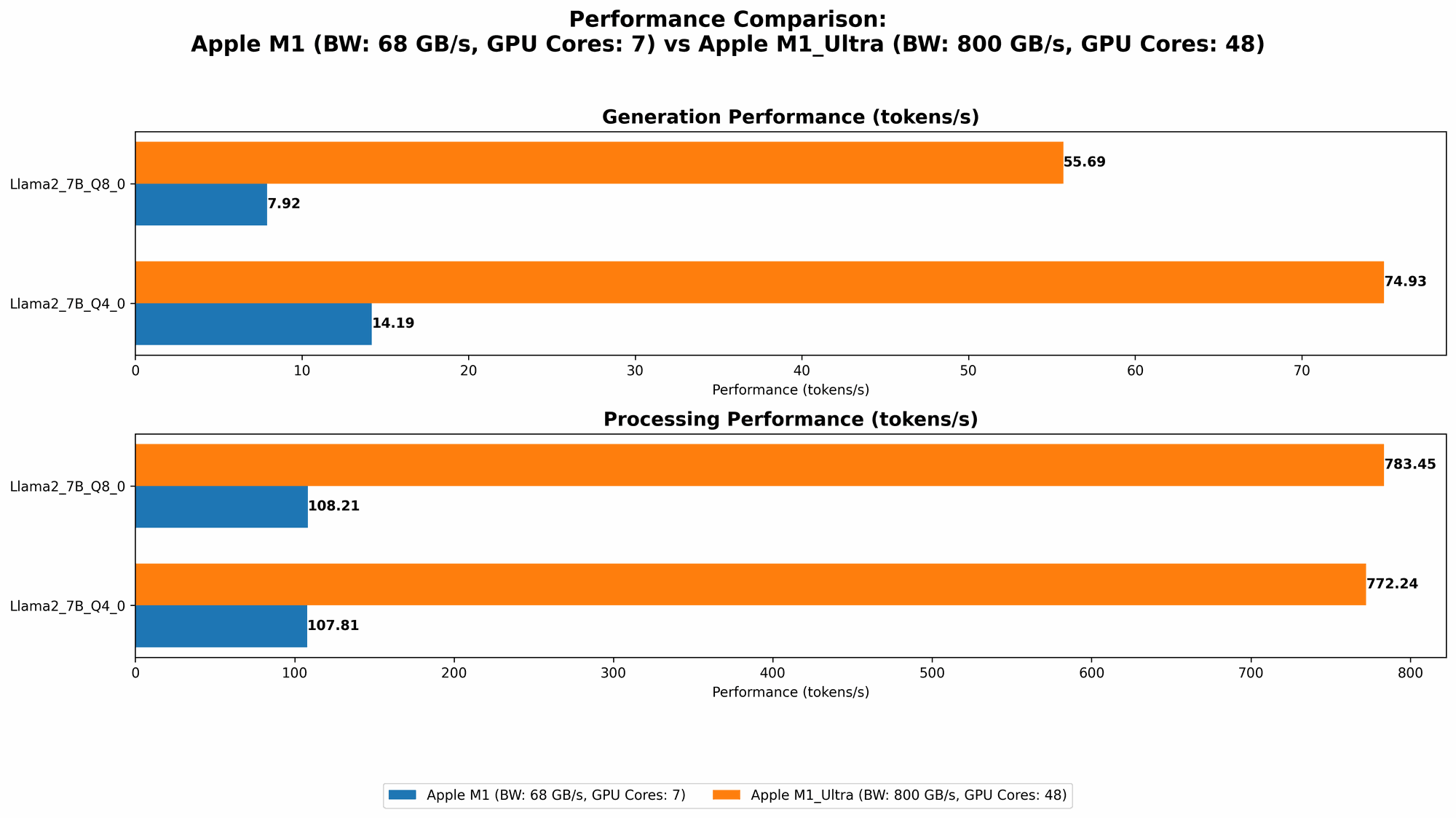

Comparison of Apple M1 68gb 7cores and Apple M1 Ultra 800gb 48cores for Llama 2 7B

| Device | Memory Bandwidth | GPU Cores | Quantization | Llama 2 7B Processing (tokens/s) | Llama 2 7B Generation (tokens/s) |
|---|---|---|---|---|---|
| Apple M1 68gb 7cores | 68 GB/s | 7 | Q8_0 | 108.21 | 7.92 |
| Apple M1 68gb 7cores | 68 GB/s | 7 | Q4_0 | 107.81 | 14.19 |
| Apple M1 Ultra 800gb 48cores | 800 GB/s | 48 | F16 | 875.81 | 33.92 |
| Apple M1 Ultra 800gb 48cores | 800 GB/s | 48 | Q8_0 | 783.45 | 55.69 |
| Apple M1 Ultra 800gb 48cores | 800 GB/s | 48 | Q4_0 | 772.24 | 74.93 |

Observations:

- M1 Ultra dominates in processing speed: The M1 Ultra processes Llama 2 7B prompts roughly 7 times faster than the M1 at both shared quantization levels (for example, 783.45 vs 108.21 tokens/s at Q8_0).

- M1 Ultra excels in generation speed: The M1 Ultra also generates tokens 5 to 7 times faster than the M1 at matching quantization levels (55.69 vs 7.92 tokens/s at Q8_0, 74.93 vs 14.19 at Q4_0).

- M1's generation speed depends on quantization: Moving from Q8_0 to Q4_0 nearly doubles the M1's generation speed (7.92 to 14.19 tokens/s) while leaving its processing speed essentially unchanged (108.21 vs 107.81). Generating each new token requires reading the model's weights from memory, so smaller 4-bit weights mean less data to move across the M1's limited 68 GB/s of bandwidth.
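The size of the gap can be made concrete by dividing the M1 Ultra's numbers by the M1's at each shared quantization level. This sketch only restates figures from the Llama 2 7B table; F16 is skipped because the table has no M1 F16 result, and the GPU-core and bandwidth ratios are included purely for comparison.

```python
# Llama 2 7B results from the table: (processing tok/s, generation tok/s)
M1 = {"Q8_0": (108.21, 7.92), "Q4_0": (107.81, 14.19)}
M1_ULTRA = {"F16": (875.81, 33.92), "Q8_0": (783.45, 55.69), "Q4_0": (772.24, 74.93)}

GPU_CORE_RATIO = 48 / 7      # about 6.9x more GPU cores
BANDWIDTH_RATIO = 800 / 68   # about 11.8x more memory bandwidth


def speedup(quant: str) -> tuple[float, float]:
    """M1 Ultra / M1 ratio for processing and generation at one quantization level."""
    pp_m1, tg_m1 = M1[quant]
    pp_ultra, tg_ultra = M1_ULTRA[quant]
    return pp_ultra / pp_m1, tg_ultra / tg_m1


# Only compare levels that both chips were benchmarked at
for quant in sorted(M1.keys() & M1_ULTRA.keys()):
    pp, tg = speedup(quant)
    print(f"{quant}: processing {pp:.1f}x, generation {tg:.1f}x")
print(f"GPU cores {GPU_CORE_RATIO:.1f}x, bandwidth {BANDWIDTH_RATIO:.1f}x")
```

It reports processing speedups of about 7.2x at both levels, close to the 6.9x ratio in GPU cores, while generation speedups (5.3x at Q4_0, 7.0x at Q8_0) stay well below the 11.8x bandwidth ratio. That pattern is consistent with prompt processing being limited mainly by GPU compute; why the Ultra's generation speed does not scale with its full bandwidth is not something these numbers alone can explain.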

Think of it like this: The M1 Ultra is like a Formula 1 car, effortlessly zipping through tokens, while the M1 is a reliable hatchback, capable of handling the journey but not at the speed of the F1.

Comparison of Apple M1 68gb 7cores and Apple M1 Ultra 800gb 48cores for Llama 3 8B

| Device | Memory Bandwidth | GPU Cores | Quantization | Llama 3 8B Processing (tokens/s) | Llama 3 8B Generation (tokens/s) |
|---|---|---|---|---|---|
| Apple M1 68gb 7cores | 68 GB/s | 7 | Q4_K_M | 87.26 | 9.72 |
| Apple M1 Ultra 800gb 48cores | 800 GB/s | 48 | F16 | Not available | Not available |
| Apple M1 Ultra 800gb 48cores | 800 GB/s | 48 | Q4_K_M | Not available | Not available |

Observations:

- Data limitations: No M1 Ultra results were available for Llama 3 8B at either F16 or Q4_K_M, so the two chips cannot be compared on this model. The gap more likely reflects missing benchmark runs than a hardware limit: at F16 the 8B model's weights take about 16 GB, well within the M1 Ultra's memory.

Comparison of Apple M1 68gb 7cores and Apple M1 Ultra 800gb 48cores for Llama 3 70B

| Device | Memory Bandwidth | GPU Cores | Quantization | Llama 3 70B Processing (tokens/s) | Llama 3 70B Generation (tokens/s) |
|---|---|---|---|---|---|
| Apple M1 68gb 7cores | 68 GB/s | 7 | Q4_K_M | Not available | Not available |
| Apple M1 Ultra 800gb 48cores | 800 GB/s | 48 | F16 | Not available | Not available |
| Apple M1 Ultra 800gb 48cores | 800 GB/s | 48 | Q4_K_M | Not available | Not available |

Observations:

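- Data limitations: No results are available for Llama 3 70B on either device. Memory capacity explains part of this. Even at 4-bit quantization the 70B model's weights take around 40 GB, far beyond the M1's 16 GB maximum, and at F16 they need about 141 GB, more than the M1 Ultra's 128 GB maximum. The M1 Ultra should be able to hold a 4-bit 70B model, so the missing Q4_K_M result is more likely a gap in the benchmarks.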
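A quick way to check which of these configurations can even be loaded is to compare the size of the weights with each chip's maximum unified memory. This is a sketch under stated assumptions: it counts weights only (no KV cache, activations, or macOS overhead), uses an approximate Llama 3 70B parameter count of 70.6 billion, and approximates Q4_K_M with Q4_0's 4.5 bits per weight. Passing this check is necessary but not sufficient for a model to run.

```python
# Rough check: do a model's weights fit in a chip's maximum unified memory?
# Assumptions: weights only (no KV cache, activations, or OS overhead);
# Q4_K_M approximated by Q4_0's 4.5 effective bits per weight.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
MAX_MEMORY_GB = {"M1": 16, "M1 Ultra": 128}  # largest configurations offered


def weights_gb(n_params: float, quant: str) -> float:
    """Approximate weight storage in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits(chip: str, n_params: float, quant: str) -> bool:
    """True if the weights alone are no larger than the chip's maximum memory."""
    return weights_gb(n_params, quant) <= MAX_MEMORY_GB[chip]


LLAMA3_70B_PARAMS = 70.6e9  # approximate parameter count (assumption)
for quant in BITS_PER_WEIGHT:
    size = weights_gb(LLAMA3_70B_PARAMS, quant)
    verdict = {chip: fits(chip, LLAMA3_70B_PARAMS, quant) for chip in MAX_MEMORY_GB}
    print(f"{quant}: {size:.0f} GB -> {verdict}")
```

The same two functions work for any model size; only the parameter count and quantization level change.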

Choosing the Right Device: A Practical Guide

Now that we've analyzed the performance of these devices, let's discuss how to choose the right one for your LLM needs.

Apple M1 68gb 7cores: When Simplicity and Efficiency Matter

The M1 is a great choice if:

- Budget is a constraint: The M1 is more affordable than the M1 Ultra.

- Energy efficiency is crucial: The M1 boasts excellent energy efficiency, ideal for portable setups or scenarios where power consumption is a concern.

- Running smaller LLMs: The M1 handles small models like Llama 2 7B at usable speeds, reaching about 14 tokens/s of generation with Q4_0 quantization.

- You prioritize ease of use: The M1 offers a seamless experience for running LLMs, making it suitable for both beginners and experienced developers.

Apple M1 Ultra 800gb 48cores: When Power and Speed Reign Supreme

The M1 Ultra is the go-to choice for:

- Running large, complex LLMs: For models like Llama 3 70B, memory capacity is the deciding factor. Even quantized to 4 bits, a 70B model needs around 40 GB for its weights, which of these two chips only the M1 Ultra (up to 128 GB) can accommodate.

- Demanding workflows: The M1 Ultra's speed allows you to handle high-performance tasks like real-time generation, translation, and complex reasoning, making it suitable for research and demanding applications.

- Heavy workloads with multiple models: If you need to keep several models loaded at once or process large volumes of data, the M1 Ultra's larger memory and higher throughput make it far better suited than the M1.

- You're prioritizing performance and have a higher budget: The M1 Ultra is the faster of the two chips for LLM tasks, but it comes at a higher cost.

Quantization: A Key to Unlock Performance Potential

Quantization reduces the size of an LLM by storing its weights at lower precision while largely preserving accuracy. This is crucial for running LLMs on devices with limited memory, like the M1.

- Quantization levels: The benchmarks above use F16, Q8_0, Q4_0, and Q4_K_M.

- Q8_0 and Q4_0: These are llama.cpp block formats that store each weight as an 8-bit or 4-bit integer instead of a 16-bit float. Weights are grouped into blocks of 32 that share one 16-bit scale, so the effective cost is 8.5 or 4.5 bits per weight, roughly half or a quarter of the F16 footprint. Q4_K_M is a related 4-bit scheme that keeps some tensors at higher precision.

- F16: 16-bit floating point, the unquantized baseline in these benchmarks, with each weight taking 2 bytes.

The choice of quantization level can significantly affect performance. Lower precision (Q8_0 or Q4_0) speeds up generation, most visibly on the M1, and is often the only way to fit larger models into memory. Higher precision (F16) is preferable when accuracy is paramount and the model fits comfortably in memory.
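The block idea behind these formats can be sketched in a few lines: each block of 32 weights gets one scale derived from its largest absolute value, and every weight is stored as a small signed integer relative to that scale. This is a simplified symmetric absmax quantizer written for illustration; llama.cpp's actual Q4_0 and Q8_0 code differs in details such as rounding and how 4-bit codes are offset and packed, and `quantize_block` and the sine-wave "weights" are hypothetical stand-ins.

```python
import math

BLOCK_SIZE = 32  # weights per block, as in llama.cpp's Q8_0 and Q4_0 formats
SCALE_BITS = 16  # one 16-bit scale stored per block


def bits_per_weight(code_bits: int) -> float:
    """Effective storage cost per weight, including the shared per-block scale."""
    return (BLOCK_SIZE * code_bits + SCALE_BITS) / BLOCK_SIZE


def quantize_block(block: list[float], code_bits: int) -> tuple[float, list[int]]:
    """Symmetric absmax quantization of one block to signed integer codes.

    Simplified illustration, not llama.cpp's exact rounding rules.
    """
    qmax = 2 ** (code_bits - 1) - 1              # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 1.0     # any scale works for an all-zero block
    codes = [max(-qmax - 1, min(qmax, round(x / scale))) for x in block]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights by multiplying each code by the block scale."""
    return [scale * q for q in codes]


def max_error(block: list[float], code_bits: int) -> float:
    """Largest absolute difference between original and round-tripped weights."""
    scale, codes = quantize_block(block, code_bits)
    restored = dequantize_block(scale, codes)
    return max(abs(a - b) for a, b in zip(block, restored))


weights = [math.sin(i) for i in range(BLOCK_SIZE)]  # stand-in for real model weights
for bits in (8, 4):
    print(f"{bits}-bit: {bits_per_weight(bits)} bits/weight, "
          f"max error {max_error(weights, bits):.4f}")
```

Running it shows the trade-off directly: the 4-bit version needs about half the storage of the 8-bit one but reconstructs the weights with a noticeably larger error.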
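One way to see why lower precision helps generation on the M1: producing each new token requires reading essentially every weight from memory, so generation speed is capped near memory bandwidth divided by the size of the weights. The sketch below computes that ceiling for Llama 2 7B on the M1. The parameter count (about 6.74 billion) and the effective 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 are inputs assumed here, not figures from the benchmark; the measured speeds come from the Llama 2 7B table.

```python
LLAMA2_7B_PARAMS = 6.74e9  # approximate parameter count of Llama 2 7B (assumption)


def generation_ceiling_tps(bandwidth_gb_s: float, n_params: float,
                           bits_per_weight: float) -> float:
    """Upper bound on generation speed if every token streams all weights once.

    Ignores the KV cache, activations, and compute limits, so real speeds are lower.
    """
    bytes_per_token = n_params * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


# M1 at 68 GB/s; effective bits per weight include the per-block scale
for quant, bpw, measured in (("Q8_0", 8.5, 7.92), ("Q4_0", 4.5, 14.19)):
    ceiling = generation_ceiling_tps(68, LLAMA2_7B_PARAMS, bpw)
    print(f"{quant}: ceiling {ceiling:.1f} tok/s, measured {measured} tok/s")
```

The ceilings come out near 9.5 and 17.9 tokens/s, a ratio of about 1.9, and the measured 7.92 and 14.19 tokens/s sit below them with a similar ratio of about 1.8. Treat this as a rough bandwidth bound, not a performance model.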

Beyond Token Speed: Additional Factors to Consider

While token speed is a vital metric, it's not the only one to consider. Here are some other factors to keep in mind when selecting a device for AI tasks:

- Software support: Ensure that the device you choose is compatible with the software you plan to use for running your LLM models.

- Power consumption: If you're working on a mobile device or a setup with limited power resources, power consumption becomes a crucial consideration. The M1 offers excellent energy efficiency, making it a good option in such scenarios.

- Model size: Larger models typically require more memory and processing power. If you're working with very large models, the M1 Ultra's ample resources might be necessary.

- User experience: Choose a device that provides a smooth and intuitive user experience for working with LLMs.

FAQs

What are LLMs?

LLMs are large language models trained on massive text datasets, enabling them to understand and generate human-like text. They can perform various tasks like text generation, translation, summarization, and more.

What is token speed?

Token speed refers to the number of tokens a device can process per second. It plays a crucial role in the performance of LLM models, directly impacting how fast they can generate or process text.

How does quantization affect LLM performance?

Quantization is a technique used to reduce the size of LLM models by representing values with fewer bits. While it can improve speed, it can also affect accuracy. Choosing the right quantization level depends on the specific model and your requirements for speed and accuracy.

Keywords

Apple M1, Apple M1 Ultra, LLM, Large Language Model, Token Speed, Quantization, Performance, AI, Deep Learning, Machine Learning, Llama 2, Llama 3, GPU, CPU, Memory, Processing, Generation, Software, Power Consumption, User Experience, Development, Research, Applications, Workflow, Data Science, NLP, Natural Language Processing, Text Generation, Translation, Summarization, Reasoning