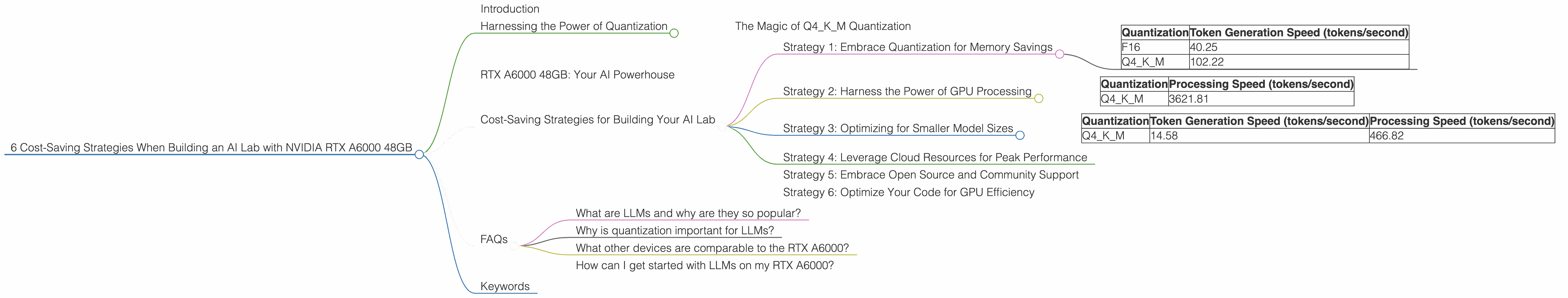

6 Cost Saving Strategies When Building an AI Lab with NVIDIA RTX A6000 48GB

Introduction

Building an AI lab can be an expensive endeavor, especially when you're working with large language models (LLMs). These AI powerhouses are hungry for processing power and require specialized hardware to run efficiently. But fear not, tech wizards! This article will guide you through smart strategies to build your AI lab using the mighty NVIDIA RTX A6000 48GB, maximizing performance while minimizing your wallet's pain. We'll explore the fascinating world of LLMs, understand the importance of quantization, and uncover how the RTX A6000's raw power can unlock cost-saving techniques.

Harnessing the Power of Quantization

Think of quantization as a clever way to slim down your model without sacrificing too much accuracy. It's like compressing a massive music file to fit on your phone: you lose some quality, but it becomes much more manageable. For LLMs, quantization means storing the model's weights with fewer bits (for example, 4 bits instead of 16). That shrinks the model, reduces the memory it needs, and often speeds up inference. For most AI labs, that trade is well worth making.
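You can put numbers on "less memory required" with a quick estimate: multiply the parameter count by the bits stored per weight. The sketch below is a minimal Python illustration. It counts only the weights, with no KV cache or runtime buffers. It also uses plain 16-, 8- and 4-bit widths rather than the exact averages of any particular file format.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bits per byte.
# Ignores the KV cache, activations, and runtime overhead, so treat the
# result as a lower bound on the VRAM a model needs.

def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    n_params = 8e9  # an "8B" model, rounded
    for label, bits in [("16-bit (F16)", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label:>12}: {weight_memory_gb(n_params, bits):5.1f} GB")
```

For an 8B model this gives about 16 GB at F16 versus about 4 GB at a pure 4 bits per weight. Real 4-bit files are somewhat larger because they also store scale factors and keep some tensors at higher precision.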

The Magic of Q4KM Quantization

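Q4KM (written Q4_K_M in llama.cpp) is one of llama.cpp's "k-quant" formats. It stores most weights at about 4 bits and keeps a few sensitive tensors at higher precision. It also groups weights into small blocks, and each block carries its own scale factor. The 4-bit storage applies to the weights only; activations are not reduced to 4 bits. It's like using a smaller alphabet to write a story: it takes up less space but needs a bit more effort to decode. The beauty of Q4KM lies in its balance between accuracy and efficiency. It cuts weight memory to roughly a third of F16 with only a small loss in output quality.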
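The block-and-scale idea can be sketched in a few lines of Python. This is a deliberately simplified symmetric scheme, assumed for illustration:

- 32 weights per block.
- One shared scale per block.
- Each weight rounded to a 4-bit integer in [-8, 7].

It is not the actual Q4_K_M layout, which nests blocks inside super-blocks and also quantizes each block's scale and minimum. The names `quantize` and `dequantize` are hypothetical helpers, not llama.cpp functions.

```python
# Simplified 4-bit block quantization (illustrative, NOT the real Q4_K_M layout).
# Each block of 32 weights shares one float scale; every weight becomes a
# signed 4-bit integer in [-8, 7].

BLOCK_SIZE = 32


def quantize_block(weights):
    """Map a block of floats to 4-bit integer codes plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # 7 = largest positive 4-bit code
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


def quantize(weights):
    """Split weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks):
    """Concatenate the reconstructed blocks back into one list."""
    out = []
    for scale, codes in blocks:
        out.extend(dequantize_block(scale, codes))
    return out


if __name__ == "__main__":
    import random

    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(256)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max reconstruction error: {max_err:.5f}")
```

Assume the scale is stored in 16 bits. Each block then costs 32 × 4 + 16 = 144 bits, or 4.5 bits per weight, which shows why "4-bit" formats land slightly above 4 bits in practice. In this scheme, each weight's reconstruction error is at most half of its block's scale.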

RTX A6000 48GB: Your AI Powerhouse

Now let's meet your new AI best friend – the NVIDIA RTX A6000 48GB. This beast is packed with 48GB of GDDR6 memory and 10752 CUDA cores, making it a real powerhouse for running LLMs. Imagine it as a turbocharged engine for your AI lab.

Cost-Saving Strategies for Building Your AI Lab

Let's dive into the heart of this article – how to build a cost-effective AI lab using the RTX A6000 48GB. Each strategy plays a vital role in ensuring your lab runs smoothly and efficiently.

Strategy 1: Embrace Quantization for Memory Savings

Quantizing LLMs can be a huge cost saver. Take the Llama 3 8B model, for instance. Running it in F16 precision on the RTX A6000 yields a token generation speed of 40.25 tokens per second. Quantizing the model to Q4KM lifts that to 102.22 tokens per second, roughly 2.5 times faster. It also shrinks the memory the weights occupy.

Table 1: Llama 3 8B Token Generation Speed Comparison

| Quantization | Token Generation Speed (tokens/second) |
|---|---|
| F16 | 40.25 |
| Q4KM | 102.22 |

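The speedup comes largely from memory traffic. Generating each token requires reading the model's weights from GPU memory, so smaller weights mean less data to move per token. Faster generation shortens response times and lets the same GPU serve more requests, so you get more work out of hardware you have already paid for.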
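A rough calculation supports this explanation. Assume single-stream generation reads every weight once per token. The RTX A6000's published memory bandwidth of 768 GB/s then sets a ceiling of bandwidth divided by weight size. The sketch below compares that ceiling with the measured speeds in Table 1. Two inputs are approximations assumed here, not values from the benchmark: the parameter count of 8.03 billion and the 4.9 bits-per-weight average for Q4KM.

```python
# Back-of-the-envelope ceiling for single-stream token generation.
# Assumption: generating one token reads every weight from VRAM once, so
# throughput cannot exceed memory bandwidth / bytes of weights.
# KV-cache reads, kernel overheads and compute limits are ignored.

A6000_BANDWIDTH_GBPS = 768.0  # RTX A6000 published memory bandwidth, GB/s
LLAMA3_8B_PARAMS = 8.03e9     # approximate parameter count (assumption)


def max_tokens_per_second(n_params, bits_per_weight, bandwidth_gbps):
    """Upper bound on tokens/s if each token streams all weights once."""
    bytes_per_token = n_params * bits_per_weight / 8
    return bandwidth_gbps * 1e9 / bytes_per_token


# Measured speeds from Table 1; 4.9 bits/weight for Q4KM is an assumed average.
measured = {"F16": (16.0, 40.25), "Q4KM": (4.9, 102.22)}

if __name__ == "__main__":
    for name, (bits, tps) in measured.items():
        ceiling = max_tokens_per_second(LLAMA3_8B_PARAMS, bits, A6000_BANDWIDTH_GBPS)
        print(f"{name:>5}: ceiling ~{ceiling:6.1f} tok/s, measured {tps:6.2f} tok/s "
              f"({tps / ceiling:.0%} of ceiling)")
```

The F16 run reaches roughly 84% of its ceiling, which is consistent with generation being limited mainly by memory traffic. The Q4KM run sits further below its ceiling, so other overheads matter more there.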

Strategy 2: Harness the Power of GPU Processing

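The RTX A6000 also excels at prompt processing, the stage where the model reads your input before it starts generating. With Q4KM quantization, Llama 3 8B processes prompts at 3621.81 tokens per second. That is far faster than it generates new tokens, because the whole prompt can be handled in parallel rather than one token at a time. This makes the card well suited to workloads with long inputs, such as summarizing documents or answering questions over retrieved context. Note that this is an inference figure; it does not measure training or fine-tuning speed.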

Table 2: Llama 3 8B Prompt Processing Speed

| Quantization | Prompt Processing Speed (tokens/second) |
|---|---|
| Q4KM | 3621.81 |
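Prompt processing and generation run at very different rates, so it helps to estimate them separately. The sketch below uses a simple model, assumed for illustration. A request's latency is the prompt length divided by the prompt-processing rate, plus the output length divided by the generation rate. The 2,000-token prompt and 500-token answer are hypothetical.

```python
# Rough end-to-end latency for one request: the prompt is processed
# (prefill) at the prompt-processing rate, then the answer is generated one
# token at a time at the generation rate. Assumes both rates stay constant,
# which is only approximately true as the context grows.


def request_latency_s(prompt_tokens, output_tokens, pp_tps, tg_tps):
    """Return (prefill seconds, generation seconds, total seconds)."""
    prefill = prompt_tokens / pp_tps
    decode = output_tokens / tg_tps
    return prefill, decode, prefill + decode


# Llama 3 8B, Q4KM on the RTX A6000 (figures from Tables 1 and 2).
PP_TPS = 3621.81
TG_TPS = 102.22

if __name__ == "__main__":
    # Hypothetical request: a 2,000-token prompt and a 500-token answer.
    prefill, decode, total = request_latency_s(2000, 500, PP_TPS, TG_TPS)
    print(f"prefill {prefill:.2f} s + generation {decode:.2f} s = {total:.2f} s")
```

For that request, prefill takes about 0.55 s and generation about 4.9 s, roughly 5.4 s in total. For chat-style requests like this one, the generation rate dominates the wait, not the prompt-processing rate.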

Strategy 3: Optimizing for Smaller Model Sizes

Smaller models require less memory and run faster on your RTX A6000. There is no data for the Llama 3 70B model in F16 precision on the RTX A6000, which is unsurprising. At 16 bits per weight, 70 billion parameters occupy about 140 GB, nearly three times the card's 48 GB. The Q4KM version does fit and shows respectable performance: 14.58 tokens per second for generation and 466.82 tokens per second for prompt processing. The Llama 3 8B model in Q4KM is about 7 times faster at generation (102.22 tokens per second). It is nearly 8 times faster at prompt processing (3621.81 tokens per second). This suggests a smaller model like Llama 3 8B is the more cost-effective option for smaller AI applications or projects with limited computational resources.

Table 3: Llama 3 70B Performance on RTX A6000 48GB

| Quantization | Token Generation Speed (tokens/second) | Prompt Processing Speed (tokens/second) |
|---|---|---|
| Q4KM | 14.58 | 466.82 |
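Whether a model fits depends on more than its weights. The KV cache, which stores attention keys and values for every token in the context, also lives in VRAM. The sketch below estimates both for Llama 3 70B under these assumptions:

- Llama 3 70B's published shape: 80 layers, 8 key/value heads, head dimension 128.
- Roughly 70.6 billion parameters.
- An F16 KV cache and an 8,192-token context.
- An average of 4.85 bits per weight for Q4KM.
- 1 GiB of headroom for runtime buffers.

```python
# Will a model fit in the RTX A6000's 48 GB? Estimate weights + KV cache.
# Assumptions: ~4.85 bits/weight average for Q4KM, an F16 KV cache, and
# Llama 3 70B's shape (80 layers, 8 KV heads, head dimension 128).
# Runtime buffers are lumped into a fixed headroom.

GIB = 1024 ** 3
VRAM_BYTES = 48 * GIB


def weight_bytes(n_params, bits_per_weight):
    """Bytes needed to store the weights at the given average bit width."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_tokens, n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes for the KV cache; factor 2 = one key and one value per layer and token."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * n_tokens


def fits(total_bytes, vram_bytes=VRAM_BYTES, headroom_bytes=1 * GIB):
    """True if the estimate plus headroom fits in VRAM."""
    return total_bytes + headroom_bytes <= vram_bytes


LLAMA3_70B = dict(n_params=70.6e9, n_layers=80, n_kv_heads=8, head_dim=128)

if __name__ == "__main__":
    kv = kv_cache_bytes(8192, LLAMA3_70B["n_layers"],
                        LLAMA3_70B["n_kv_heads"], LLAMA3_70B["head_dim"])
    for name, bits in [("F16", 16.0), ("Q4KM", 4.85)]:
        total = weight_bytes(LLAMA3_70B["n_params"], bits) + kv
        print(f"{name:>5}: {total / GIB:6.1f} GiB -> fits: {fits(total)}")
```

Under these assumptions the F16 model needs well over 130 GiB and cannot fit. The Q4KM model comes to roughly 42 GiB and fits with some room to spare. Longer contexts grow the KV cache linearly, by about 0.3 MiB per token for this model.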

Strategy 4: Leverage Cloud Resources for Peak Performance

Sometimes, you need more power than your RTX A6000 can handle. Cloud computing platforms can offer a cost-effective way to scale your AI infrastructure for demanding tasks like training large LLMs. For example, you might use your RTX A6000 for initial testing and development, then move your model to a cloud platform for extensive training or for handling high-volume requests.
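Whether the cloud is actually cheaper comes down to how many GPU-hours you need. A simple break-even estimate divides the upfront hardware cost by the hourly saving of running locally instead of renting. This is a minimal sketch. Every price in it is a placeholder to replace with your own quotes, and it ignores resale value, depreciation, and your time. The helper name `breakeven_hours` is hypothetical.

```python
# Break-even point between buying a GPU and renting cloud GPU time.
# All prices are placeholders; substitute your own quotes.


def breakeven_hours(hardware_cost, local_cost_per_hour, cloud_cost_per_hour):
    """Hours of use at which owning costs the same as renting.

    local_cost_per_hour covers running costs such as electricity.
    Returns None if renting is not more expensive per hour than running
    locally, in which case owning never pays off on hourly cost alone.
    """
    saving_per_hour = cloud_cost_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        return None
    return hardware_cost / saving_per_hour


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not real prices.
    hours = breakeven_hours(hardware_cost=5000.0,
                            local_cost_per_hour=0.10,
                            cloud_cost_per_hour=1.00)
    print(f"break-even after ~{hours:,.0f} GPU-hours")
```

If your expected usage falls well below the break-even point, renting is likely the better deal. If your GPU would be busy most of the time, owning the RTX A6000 tends to win.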

Strategy 5: Embrace Open Source and Community Support

Open-source communities are a treasure trove of resources and tools for building your AI lab effectively. These communities offer free software, libraries, and even pre-trained models, helping you avoid expensive licensing fees and save valuable time. For example, llama.cpp is an open-source inference engine for running LLMs on GPUs like the RTX A6000, including Q4KM-quantized models. It gives you control over settings such as the quantization level and context size.

Strategy 6: Optimize Your Code for GPU Efficiency

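Well-optimized code is the key to unlocking the full potential of your RTX A6000. In practice, that means keeping as much of the work on the GPU as possible:

- Offload all model layers to the GPU rather than splitting them with the CPU.
- Batch requests so the GPU's thousands of cores stay busy.
- Avoid unnecessary copies between system memory and VRAM.

Think of your code as a road map for your GPU: the smoother and more efficient it is, the faster your AI lab will run.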

FAQs

What are LLMs and why are they so popular?

Large Language Models (LLMs) are powerful AI systems trained on massive datasets of text and code. They can generate human-quality text, translate languages, write different kinds of creative content, and answer your questions in an informative way. They're popular because they offer a wide range of applications, from chatbots and virtual assistants to writing tools and code generation.

Why is quantization important for LLMs?

Quantization makes LLMs smaller and faster by storing their weights (and, in some schemes, their activations) with fewer bits. It's like condensing a large book into a smaller pocket edition: you lose some detail, but it becomes much more manageable and portable. For LLMs, this means using less memory and achieving faster inference speeds, making them more accessible and efficient for your AI lab.

What other devices are comparable to the RTX A6000?

The RTX A6000 is a top-tier workstation GPU. Its 48 GB of memory is what sets it apart: it can hold a Q4KM-quantized 70B model on a single card. Other powerful GPUs can offer good performance for running LLMs, including the RTX A5000 and RTX 3090 (24 GB each) and the AMD Radeon RX 6900 XT (16 GB). Their smaller memory, however, limits the model sizes they can handle on one card. Which is right for you depends on your specific needs and budget.

How can I get started with LLMs on my RTX A6000?

It's easier than you might think! You can find open-source projects like llama.cpp, which provide easy-to-use implementations for running LLMs on your RTX A6000. Many online resources and tutorials will guide you through the process, and you can find pre-trained models to get started quickly.
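As a starting point, here is a minimal sketch using llama-cpp-python, the Python bindings for llama.cpp. It assumes the package has been installed with CUDA support. The model path is a hypothetical placeholder for any GGUF model file you download. `generate` is an illustrative helper, not part of the library.

```python
# Minimal text generation with llama-cpp-python (assumes a CUDA-enabled install).


def generate(model_path: str, prompt: str, max_tokens: int = 128) -> str:
    """Load a GGUF model fully onto the GPU and return one completion."""
    # Imported inside the function so this module loads even if the
    # package is not installed yet.
    from llama_cpp import Llama

    llm = Llama(
        model_path=model_path,
        n_gpu_layers=-1,  # offload every layer to the GPU
        n_ctx=8192,       # context window in tokens
    )
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]
```

Call it as `generate("path/to/model.gguf", "Explain quantization in one sentence.")`. Setting `n_gpu_layers=-1` asks llama.cpp to offload every layer to the GPU, which is what you want when the whole model fits in the A6000's 48 GB.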

Keywords

LLMs, AI lab, NVIDIA RTX A6000 48GB, quantization, Q4KM, token generation speed, processing speed, GPU efficiency, cost-saving, open-source, cloud computing, Llama 3, Llama 3 8B, Llama 3 70B, GPU acceleration, parallel processing