6 Cost-Saving Strategies When Building an AI Lab with NVIDIA RTX 4000 Ada 20GB

Introduction

Building an AI lab is an exciting journey, but the cost of powerful hardware can quickly become a bottleneck. With the rise of local large language models (LLMs), it's now possible to run these impressive AI models without relying on cloud services. This opens up new possibilities for experimentation and development, but finding the most cost-effective solution is critical.

This article explores six cost-saving strategies for building an AI lab around the NVIDIA RTX 4000 Ada 20GB. We look at how Llama 3 models perform on this GPU at different quantization levels and at how you can tune your setup for speed and efficiency.

Quantization: Your Secret Weapon for Lowering Costs

Imagine shrinking a large language model (LLM) to fit into a smaller container. That's essentially what quantization does! It's all about reducing the number of bits used to represent model weights, which can significantly decrease memory usage and boost performance.

Think of it like storing your favorite movie in different resolutions. A high-resolution version (full precision) is great for watching on a big screen, but it takes up a lot of space. A lower resolution version (quantized) is smaller, ideal for your phone, and still provides an enjoyable viewing experience!
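To make the idea concrete, here is a minimal sketch of symmetric quantization on a single block of weights. It is a toy illustration only: the function names and sample weights are made up for this example, and real formats such as the 4-bit one benchmarked in this article use more elaborate block layouts.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to signed integer codes plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4 bits, 127 for 8 bits
    scale = max(abs(w) for w in weights) / qmax or 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


def max_abs_error(weights, bits):
    """Largest difference between an original weight and its round-tripped value."""
    codes, scale = quantize_block(weights, bits)
    restored = dequantize_block(codes, scale)
    return max(abs(w - r) for w, r in zip(weights, restored))


# Hypothetical sample weights, chosen only for illustration.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]

for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_abs_error(weights, bits):.4f}")
```

Fewer bits means fewer distinct values per weight, so the round-trip error grows as the storage shrinks. That is the trade-off the rest of this article keeps returning to.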

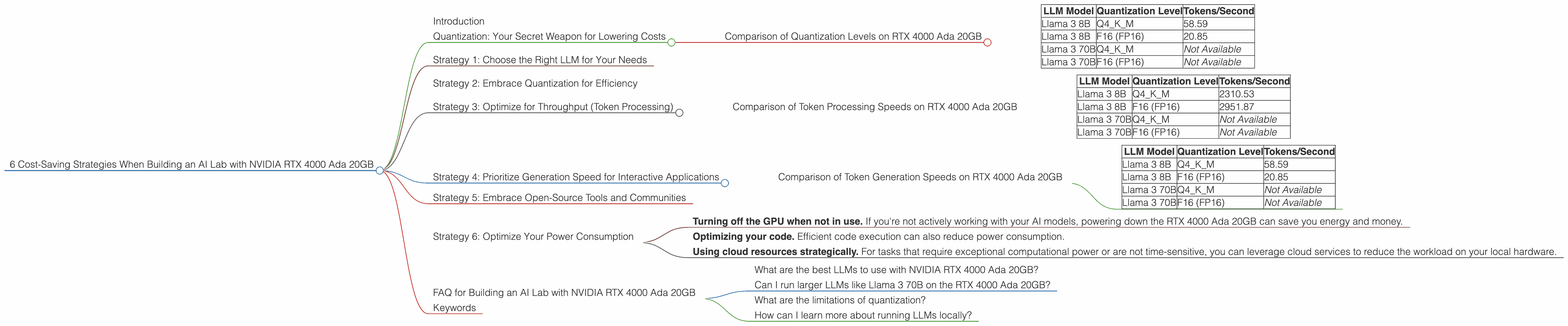

Comparison of Quantization Levels on RTX 4000 Ada 20GB

| LLM Model | Quantization Level | Generation Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 |
| Llama 3 8B | F16 (FP16) | 20.85 |
| Llama 3 70B | Q4_K_M | Not Available |
| Llama 3 70B | F16 (FP16) | Not Available |

As the table above shows, running Llama 3 8B at the lower-precision Q4_K_M level on the RTX 4000 Ada 20GB generates about 2.8 times as many tokens per second as F16 (58.59 versus 20.85). The same job finishes sooner, which can also reduce the electricity used per task.

Strategy 1: Choose the Right LLM for Your Needs

While larger LLMs like Llama 3 70B might seem impressive, they demand a lot of computational power. If you're working on a specific domain or task, a smaller model like Llama 3 8B could be more than capable and significantly less resource-demanding.
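A quick way to check whether a model is a realistic fit is to estimate the size of its weights. The sketch below assumes 16 bits per weight for F16 and roughly 4.85 bits per weight for Q4_K_M; both are approximations, and the estimate ignores the extra memory needed for the context cache and runtime buffers.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    total_bits = params_billion * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


VRAM_GB = 20  # memory on the RTX 4000 Ada 20GB

# Bits-per-weight figures are rough assumptions, not exact file sizes.
QUANT_BITS = {"F16": 16, "Q4_K_M": 4.85}

for model, params_billion in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for quant, bits in QUANT_BITS.items():
        size = weight_memory_gb(params_billion, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {quant}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions, both variants of the 8B model fit on the card, while the 70B model exceeds 20 GB even at 4 bits.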

Think of it like this: Would you use a truck to carry a single grocery bag? It's overkill! A regular car will do the job more efficiently. Similarly, choosing the right sized LLM ensures you're not wasting resources on unnecessary computational power.

Strategy 2: Embrace Quantization for Efficiency

As we saw above, quantization can deliver a performance boost. By reducing the precision of your LLM, you can speed up computation and potentially save on energy costs. It's like optimizing your car for fuel efficiency.

Although some accuracy may be lost in the process, you can often achieve a sweet spot where the performance gain outweighs the negligible loss in accuracy.

Remember: Quantization levels like Q4_K_M (a 4-bit "k-quant" format from llama.cpp, in its medium-size variant) are generally good starting points. However, the ideal quantization level for your model and task may vary, so experiment to find the best balance between performance and accuracy.

Strategy 3: Optimize for Throughput (Token Processing)

Token processing speed, the rate at which the model reads the tokens of your input prompt, is crucial for real-time applications and for working through large datasets. On the RTX 4000 Ada 20GB, Llama 3 8B processes more than 2,300 prompt tokens per second at both quantization levels tested.

Comparison of Token Processing Speeds on RTX 4000 Ada 20GB

| LLM Model | Quantization Level | Prompt Processing Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 2310.53 |
| Llama 3 8B | F16 (FP16) | 2951.87 |
| Llama 3 70B | Q4_K_M | Not Available |
| Llama 3 70B | F16 (FP16) | Not Available |

F16 (FP16) is faster than Q4_K_M for the Llama 3 8B model in the table above (2951.87 versus 2310.53 tokens per second), but this table measures only how quickly the model reads the prompt. Generating new tokens is a separate and much slower step, and there the ranking reverses, as we'll see in the next section.
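To see how the two speeds combine, the sketch below estimates the total time for one request as prompt time plus generation time, using the Llama 3 8B figures from this article's tables. The request size (1,024 prompt tokens, 256 output tokens) is a hypothetical example, and the model ignores fixed overheads such as model loading.

```python
def response_time_s(prompt_tokens, output_tokens, prompt_tps, gen_tps):
    """Estimated seconds to read the prompt and then generate the output."""
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


# (prompt tokens/s, generation tokens/s) for Llama 3 8B on the RTX 4000 Ada 20GB
SPEEDS = {
    "Q4_K_M": (2310.53, 58.59),
    "F16": (2951.87, 20.85),
}

PROMPT_TOKENS = 1024   # hypothetical request size
OUTPUT_TOKENS = 256    # hypothetical reply length

for quant, (prompt_tps, gen_tps) in SPEEDS.items():
    total = response_time_s(PROMPT_TOKENS, OUTPUT_TOKENS, prompt_tps, gen_tps)
    print(f"{quant}: about {total:.1f} s per request")
```

For a request of this shape, generation dominates the total time, so the format with the faster generation speed finishes first even though it reads the prompt more slowly.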

Strategy 4: Prioritize Generation Speed for Interactive Applications

If your AI lab focuses on real-time applications like chatbots or creative writing tools, you need fast token generation. The RTX 4000 Ada 20GB delivers good generation speeds for smaller LLMs such as Llama 3 8B. Larger models may also work with the right optimization techniques, though no results are available here for Llama 3 70B.

Comparison of Token Generation Speeds on RTX 4000 Ada 20GB

| LLM Model | Quantization Level | Generation Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 |
| Llama 3 8B | F16 (FP16) | 20.85 |
| Llama 3 70B | Q4_K_M | Not Available |
| Llama 3 70B | F16 (FP16) | Not Available |

As the table above shows, Q4_K_M quantization on the RTX 4000 Ada 20GB shines at generating new tokens for the Llama 3 8B model: 58.59 tokens per second against 20.85 for F16.
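If you want to check generation speed in your own application, one simple approach is to time how fast tokens arrive from a streaming interface. The sketch below is a generic timing helper; `fake_stream` is a hypothetical stand-in for whatever token iterator your inference library provides, so the number it prints says nothing about real hardware.

```python
import time


def measure_generation_tps(token_stream):
    """Consume an iterator of tokens and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = sum(1 for _ in token_stream)
    elapsed = time.perf_counter() - start
    tps = count / elapsed if elapsed > 0 else float("inf")
    return count, tps


def fake_stream(n_tokens, delay_s=0.001):
    """Hypothetical stand-in for a model that yields one token at a time."""
    for i in range(n_tokens):
        time.sleep(delay_s)   # simulates the time spent producing a token
        yield f"tok{i}"


count, tps = measure_generation_tps(fake_stream(50))
print(f"Received {count} tokens at {tps:.1f} tokens/s")
```

Note that if the stream only starts after the prompt has been read, the measured rate includes prompt processing time as well, so use a short prompt when you want to isolate generation speed.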

Strategy 5: Embrace Open-Source Tools and Communities

The world of open-source AI development is booming! Communities like the one around llama.cpp provide valuable resources, code libraries, and support for running LLMs locally. This means you can save money by leveraging free tools and knowledge from experienced developers.
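As one example of what these free tools offer, llama.cpp ships a benchmarking program, `llama-bench`, that reports prompt processing and generation speed for a model file. The sketch below builds a benchmark command and runs it only if the tool and model are present. The model path is hypothetical, and the token counts and layer setting are illustrative assumptions rather than the settings behind this article's figures.

```python
import os
import shutil
import subprocess


def build_bench_command(model_path, n_prompt=512, n_gen=128, n_gpu_layers=99):
    """Return a llama-bench command line as a list of arguments."""
    return [
        "llama-bench",
        "-m", model_path,           # path to a GGUF model file
        "-p", str(n_prompt),        # number of prompt tokens to process
        "-n", str(n_gen),           # number of tokens to generate
        "-ngl", str(n_gpu_layers),  # layers to offload to the GPU
    ]


# Hypothetical model path; replace it with a file you have downloaded.
MODEL_PATH = "models/llama-3-8b.Q4_K_M.gguf"

cmd = build_bench_command(MODEL_PATH)
print("Command:", " ".join(cmd))

# Run only when both the tool and the model file are actually available.
if shutil.which("llama-bench") and os.path.exists(MODEL_PATH):
    subprocess.run(cmd, check=False)
else:
    print("llama-bench or the model file was not found; nothing was run.")
```

Running the same command against differently quantized files of one model gives you a like-for-like comparison on your own hardware.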

Strategy 6: Optimize Your Power Consumption

The RTX 4000 Ada 20GB is rated at about 130 W of board power, which is modest for a workstation GPU, but a card that runs for many hours a day still adds to your electricity bill. By combining the strategies discussed above, you can reduce the overall power consumption of your AI lab.

Consider:

- Turning off the GPU when not in use. If you're not actively working with your AI models, powering down the RTX 4000 Ada 20GB can save you energy and money.

- Optimizing your code. Efficient code execution can also reduce power consumption.

- Using cloud resources strategically. For tasks that require exceptional computational power or are not time-sensitive, you can leverage cloud services to reduce the workload on your local hardware.
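To put rough numbers on this, the sketch below estimates the energy and cost of generating one million tokens with Llama 3 8B at the generation speeds reported in this article. It assumes the card draws a constant 130 W at both precision levels and uses a hypothetical electricity price. Real power draw varies with workload, so treat the output as an order-of-magnitude estimate.

```python
def energy_kwh(tokens, tokens_per_second, watts):
    """Energy in kWh needed to generate `tokens` at a constant power draw."""
    hours = tokens / tokens_per_second / 3600
    return watts / 1000 * hours


BOARD_POWER_W = 130    # assumption: constant draw at the card's rated board power
PRICE_PER_KWH = 0.15   # hypothetical electricity price per kWh
TOKENS = 1_000_000

# Generation speeds (tokens/s) for Llama 3 8B from this article's tables
GEN_SPEEDS = {"Q4_K_M": 58.59, "F16": 20.85}

for quant, tps in GEN_SPEEDS.items():
    kwh = energy_kwh(TOKENS, tps, BOARD_POWER_W)
    cost = kwh * PRICE_PER_KWH
    print(f"{quant}: ~{kwh:.2f} kWh, ~{cost:.2f} in electricity per million tokens")
```

Under these assumptions, the faster format uses less energy for the same output simply because the GPU is busy for fewer hours.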

FAQ for Building an AI Lab with NVIDIA RTX 4000 Ada 20GB

What are the best LLMs to use with NVIDIA RTX 4000 Ada 20GB?

For the RTX 4000 Ada 20GB, the Llama 3 8B model stands out, especially with Q4_K_M quantization. It provides a good balance of performance and accuracy. However, depending on your specific application, you might consider other models.

Can I run larger LLMs like Llama 3 70B on the RTX 4000 Ada 20GB?

It's technically possible, but expect a large compromise on performance. Even at 4-bit quantization, the weights of a 70B model take roughly 40 GB, about twice the card's 20 GB of memory, so part of the model would have to run from system RAM on the CPU. The RTX 4000 Ada 20GB is not an efficient hardware choice for very large LLMs. *Consider evaluating options with more GPU memory (VRAM) if you plan to work with models much larger than 8B.*

What are the limitations of quantization?

While quantization is a powerful tool for resource optimization, it can lead to a slight reduction in accuracy. The extent of this loss depends on the model, the task, and the quantization level. Carefully evaluate the trade-off between accuracy and performance for your application.

How can I learn more about running LLMs locally?

The open-source community is an excellent resource for learning about running LLMs locally. Check out projects like llama.cpp and the resources linked in this article. Online forums and communities dedicated to AI development are also valuable sources of information and support.

Keywords

NVIDIA RTX 4000 Ada 20GB, LLM inference, GPU performance, quantization, Q4_K_M, Llama 3 8B, Llama 3 70B, AI lab, cost-saving strategies, token processing, token generation, open-source, power consumption, optimization, AI models, local LLMs, AI development.