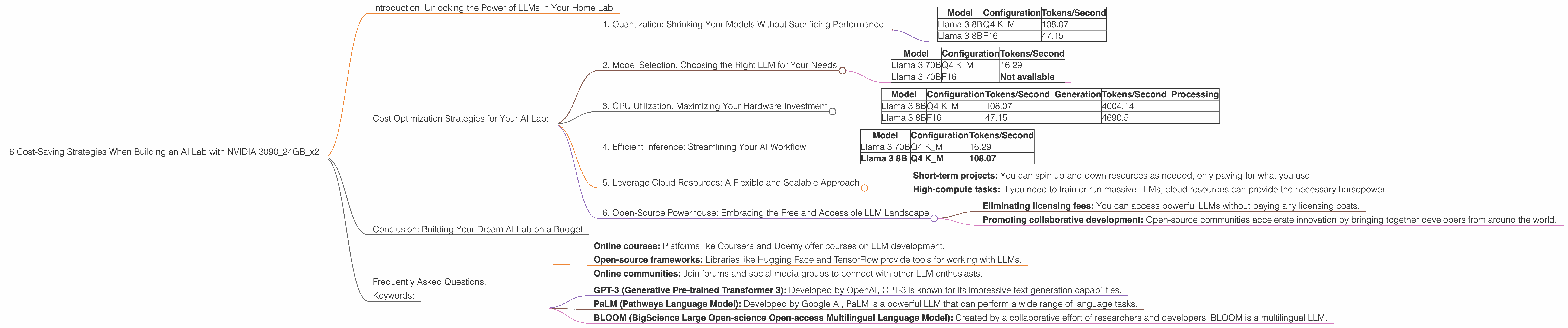

6 Cost Saving Strategies When Building an AI Lab with NVIDIA 3090 24GB x2

Introduction: Unlocking the Power of LLMs in Your Home Lab

Building your own AI lab? You're in good company! The world of Large Language Models (LLMs) is buzzing with excitement, and you're probably itching to get your hands dirty with models like Llama 3, the up-and-coming star of the open-source LLM universe. But before you dive headfirst into the world of GPUs and token generation, let's talk about cost-effective strategies for setting up your AI lab with the NVIDIA 3090 24GB x2.

For those unfamiliar with the term, LLMs are language models trained on massive amounts of text. Imagine a computer that can understand and generate human language with incredible fluency. They can write stories, translate languages, answer your questions, and even help you code! Now, for all this magic to happen, you need some serious computing power, and that's where the NVIDIA 3090 24GB x2 comes in. This pair of graphics cards, with 48GB of memory between them, is a developer's dream, but even with two of these powerhouses in your arsenal, optimizing your setup for maximum performance and cost-effectiveness is key.

Cost Optimization Strategies for Your AI Lab:

1. Quantization: Shrinking Your Models Without Sacrificing Performance

Imagine having a giant library filled with hundreds of thousands of books. That's kind of like how LLMs store information. Now, imagine you want to carry this entire library with you everywhere you go. You wouldn't want to lug around all those heavy books, right? Quantization is like having a special machine that shrinks those books down to a manageable size while keeping almost all of the important information.

In the world of AI, quantization is how we make LLMs smaller and faster. Instead of storing each weight as a 16-bit or 32-bit floating-point number (like that giant library of books), we store it in 8 or even 4 bits. Some precision is lost along the way, but with formats such as Q4_K_M the drop in output quality is usually small, while the model takes far less memory and generates tokens faster.
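To make the idea concrete, here is a toy sketch of quantization in plain Python. It is an assumption-laden simplification, not how Q4_K_M works internally: it uses a single scale for the whole list, and the `quantize` and `dequantize` helpers and the sample weights are made up for illustration.

```python
def quantize(values, bits):
    """Map floats to signed integer codes of the given bit width (one shared scale)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    scale = max(abs(v) for v in values) / qmax
    if scale == 0.0:
        scale = 1.0  # all-zero input: any scale works
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Turn integer codes back into approximate floats."""
    return [c * scale for c in codes]


def max_error(values, bits):
    """Largest absolute difference after a quantize/dequantize round trip."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical weights, chosen only for the demonstration.
weights = [0.12, -0.53, 0.98, -0.07, 0.41]

for bits in (8, 4):
    codes, _ = quantize(weights, bits)
    print(f"{bits}-bit codes: {codes}  max error: {max_error(weights, bits):.4f}")
```

The 4-bit round trip shows a larger error than the 8-bit one, which is the trade-off in miniature: fewer bits per weight, less memory, slightly less precision.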

Impact on NVIDIA 3090 24GB x2:

Compare the token generation speed of the Llama 3 8B model at two precisions on the NVIDIA 3090 24GB x2:

| Model | Configuration | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 108.07 |
| Llama 3 8B | F16 | 47.15 |

As you can see, by using Q4_K_M quantization, we achieve more than double the token generation speed of F16 (108.07 vs. 47.15 tokens/second)! This means you get results faster on the same hardware, saving you time and money.
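If you want to check the arithmetic yourself, a minimal sketch follows. The two rates come from the table above; the 1,000-token response length is a made-up example, and the calculation assumes the benchmark rate holds steady for the whole response.

```python
# Generation rates from the table above (tokens/second).
Q4_K_M_TPS = 108.07
F16_TPS = 47.15


def speedup(fast_tps, slow_tps):
    """How many times faster the first rate is than the second."""
    return fast_tps / slow_tps


def seconds_to_generate(n_tokens, tps):
    """Time to generate n_tokens at a constant rate."""
    return n_tokens / tps


ratio = speedup(Q4_K_M_TPS, F16_TPS)
print(f"Q4_K_M generates {ratio:.2f}x as fast as F16")

# Hypothetical response length, for illustration only.
n_tokens = 1000
for name, tps in (("Q4_K_M", Q4_K_M_TPS), ("F16", F16_TPS)):
    print(f"{name}: {seconds_to_generate(n_tokens, tps):.1f} s for {n_tokens} tokens")
```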

2. Model Selection: Choosing the Right LLM for Your Needs

Just like you wouldn't use a sledgehammer to crack a nut, you shouldn't choose a massive LLM if your task doesn't require it. Picking the right model size is crucial for cost-effectiveness.

Impact on NVIDIA 3090 24GB x2:

Let's look at the Llama 3 70B model:

| Model | Configuration | Tokens/Second |
|---|---|---|
| Llama 3 70B | Q4_K_M | 16.29 |
| Llama 3 70B | F16 | Not available |

Notice the performance drop when moving from the 8B to the 70B model: at Q4_K_M, generation falls from 108.07 to 16.29 tokens/second. This is because the larger model demands significantly more memory and compute for every token it produces. While the 70B model can handle more complex tasks, it's not the best choice if you prioritize cost-efficiency.
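A quick back-of-the-envelope check shows one plausible reason the F16 row for the 70B model is "Not available". This sketch rests on several assumptions: round parameter counts, nominal bit widths (Q4_K_M actually averages somewhat more than 4 bits per weight), and no allowance for the KV cache or runtime overhead. The `weights_gb` helper is illustrative, not part of any library.

```python
TOTAL_VRAM_GB = 2 * 24  # two 24GB cards


def weights_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


for name, params in (("Llama 3 8B", 8), ("Llama 3 70B", 70)):
    for label, bits in (("F16", 16), ("4-bit", 4)):
        size = weights_gb(params, bits)
        verdict = "fits" if size <= TOTAL_VRAM_GB else "does not fit"
        print(f"{name} {label}: ~{size:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 70B model needs roughly 140 GB at F16, far beyond 48 GB, while a 4-bit version comes in around 35 GB of weights.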

Why use a smaller model? It's like using a compact car instead of a massive SUV - less gas consumption, more maneuverability.

3. GPU Utilization: Maximizing Your Hardware Investment

The NVIDIA 3090 24GB x2 setup is a powerhouse, but even with this much muscle, you need to make sure you're squeezing every ounce of performance out of it.

Impact on NVIDIA 3090 24GB x2:

| Model | Configuration | Generation (Tokens/Second) | Processing (Tokens/Second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 108.07 | 4004.14 |
| Llama 3 8B | F16 | 47.15 | 4690.5 |

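Let's talk about prompt processing: the 8B model with Q4_K_M processes 4004.14 tokens/second, while the same model in F16 reaches 4690.5 tokens/second, about 17% faster. So on this setup quantization more than doubles generation speed but does not speed up prompt processing. Which configuration makes the best use of your hardware depends on whether your workload is dominated by long prompts or long outputs.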
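To see how the two rates combine for a single request, here is a rough latency estimate. The rates are the ones in the table; the prompt and output lengths are hypothetical, and the model assumes both rates stay constant regardless of context length, which is a simplification.

```python
# Rates from the table above (tokens/second) for Llama 3 8B.
RATES = {
    "Q4_K_M": {"processing": 4004.14, "generation": 108.07},
    "F16": {"processing": 4690.5, "generation": 47.15},
}


def request_seconds(config, prompt_tokens, output_tokens):
    """Return (prompt processing time, generation time) in seconds."""
    rates = RATES[config]
    prompt_time = prompt_tokens / rates["processing"]
    output_time = output_tokens / rates["generation"]
    return prompt_time, output_time


# Hypothetical request: a 2,000-token prompt and a 500-token answer.
for config in RATES:
    prompt_time, output_time = request_seconds(config, 2000, 500)
    total = prompt_time + output_time
    print(f"{config}: prompt {prompt_time:.2f} s + output {output_time:.2f} s = {total:.2f} s")
```

For a request shaped like this one, generation time dominates, so the faster generation of Q4_K_M outweighs its slower prompt processing.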

Tip: Ensure your code is optimized for multi-GPU configurations, using tools like NVIDIA's CUDA and cuDNN libraries.

4. Efficient Inference: Streamlining Your AI Workflow

Inference is the process of using your trained LLM to generate output. Think of it like asking your LLM a question and getting an answer. The faster the inference, the faster you get your results.

Impact on NVIDIA 3090 24GB x2:

| Model | Configuration | Tokens/Second |
|---|---|---|
| Llama 3 70B | Q4_K_M | 16.29 |
| Llama 3 8B | Q4_K_M | 108.07 |

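The gap between the 70B and 8B models' token generation speeds shows how strongly model size drives inference cost: at the same Q4_K_M quantization, the 8B model generates tokens roughly 6.6 times faster. Again, using an 8B model wherever it is good enough for the task can save you significant resources and time.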

Tip: Explore techniques like caching and batching to optimize inference and save processing time.
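Here is a minimal sketch of both ideas in plain Python. `run_model` is a hypothetical stand-in for a real inference call, not part of any library, and caching whole responses like this only makes sense when identical prompts should return identical answers (for example, with deterministic sampling).

```python
from functools import lru_cache

CALLS = {"count": 0}


def run_model(prompt: str) -> str:
    """Hypothetical stand-in for an expensive inference call."""
    CALLS["count"] += 1
    return prompt.upper()


@lru_cache(maxsize=1024)
def cached_generate(prompt: str) -> str:
    """Return a stored answer for repeated prompts instead of re-running the model."""
    return run_model(prompt)


def batches(items, size):
    """Split a list of prompts into chunks that can be submitted together."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


cached_generate("what is quantization?")
cached_generate("what is quantization?")  # served from the cache
print("model calls:", CALLS["count"])

prompts = ["a", "b", "c", "d", "e"]
print(list(batches(prompts, 2)))
```

The repeated prompt triggers only one model call, and the five prompts are grouped into chunks of at most two.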

5. Leverage Cloud Resources: A Flexible and Scalable Approach

It's not always about owning everything. Sometimes, it's smarter to rent! Cloud platforms like Amazon Web Services (AWS) and Google Cloud offer powerful GPUs on demand. This lets you scale your computing resources up or down as needed.

Impact on NVIDIA 3090 24GB x2:

Cloud services can be a cost-effective option for:

- Short-term projects: You can spin up and down resources as needed, only paying for what you use.

- High-compute tasks: If you need to train or run massive LLMs, cloud resources can provide the necessary horsepower.

Tip: Compare pricing plans and performance benchmarks from different cloud providers to find the best fit for your needs.
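One way to frame the rent-versus-own decision is a break-even calculation. The sketch below is only an illustration: every price in it is a made-up placeholder, not a quote from any provider or retailer, and it ignores resale value, maintenance, and differences in performance between your cards and the rented ones.

```python
def break_even_hours(hardware_cost, cloud_rate_per_hour, power_cost_per_hour=0.0):
    """Hours of use after which owning the hardware becomes cheaper than renting."""
    saving_per_hour = cloud_rate_per_hour - power_cost_per_hour
    if saving_per_hour <= 0:
        return float("inf")  # renting never costs more per hour, so owning never pays off
    return hardware_cost / saving_per_hour


# Hypothetical figures, for illustration only.
hours = break_even_hours(hardware_cost=1800.0, cloud_rate_per_hour=1.00, power_cost_per_hour=0.10)
print(f"Owning pays off after about {hours:.0f} hours of use")
```

If you expect to use the GPUs for fewer hours than the break-even point, renting is likely the cheaper option under these assumptions.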

6. Open-Source Powerhouse: Embracing the Free and Accessible LLM Landscape

The LLM community is buzzing with openly available models like Llama 3. These models typically come with no licensing fees, though each one has its own license terms worth reading, and they often have active communities of developers working on improvements.

Impact on NVIDIA 3090 24GB x2:

Open-source LLMs can save you money by:

- Eliminating licensing fees: You can access powerful LLMs without paying any licensing costs.

- Promoting collaborative development: Open-source communities accelerate innovation by bringing together developers from around the world.

Tip: Explore open-source LLM repositories like Hugging Face and GitHub to find models that meet your needs.

Conclusion: Building Your Dream AI Lab on a Budget

Building a powerful AI lab doesn't have to break the bank. By combining the power of the NVIDIA 3090 24GB x2 with smart cost-saving strategies, you can unlock the potential of LLMs without compromising your wallet. Quantization, model selection, GPU utilization, efficient inference, cloud resources, and open-source options are all crucial for building a cost-effective and powerful AI environment. Remember, it's not just about having the best hardware; it's about making the most of what you have!

Frequently Asked Questions:

Q1: What are the benefits of using NVIDIA 3090 24GB x2 for LLMs?

A1: The NVIDIA 3090 24GB x2 setup pairs two powerful GPUs with 48GB of memory in total, making it well suited to running large LLMs locally. In the benchmarks above it generates about 108 tokens/second with Llama 3 8B (Q4_K_M) and about 16 tokens/second with Llama 3 70B (Q4_K_M), so you can work with larger models and still get results quickly.

Q2: How do LLMs work?

A2: LLMs are essentially super-powered language models trained on massive amounts of text data. They learn to understand and generate human language by identifying patterns and relationships within the data. Imagine a computer that has read every book ever written – that's the kind of knowledge an LLM can have!

Q3: How can I get started with LLMs?

A3: There are several resources available to help you get started with LLMs:

- Online courses: Platforms like Coursera and Udemy offer courses on LLM development.

- Open-source frameworks: Libraries like Hugging Face Transformers and TensorFlow provide tools for working with LLMs.

- Online communities: Join forums and social media groups to connect with other LLM enthusiasts.

Q4: Are there any other popular LLMs besides Llama 3?

A4: Yes, there are several other popular LLMs, including:

- GPT-3 (Generative Pre-trained Transformer 3): Developed by OpenAI, GPT-3 is known for its impressive text generation capabilities.

- PaLM (Pathways Language Model): Developed by Google AI, PaLM is a powerful LLM that can perform a wide range of language tasks.

- BLOOM (BigScience Large Open-science Open-access Multilingual Language Model): Created by a collaborative effort of researchers and developers, BLOOM is a multilingual LLM.

Keywords:

LLMs, Llama 3, NVIDIA 3090 24GB x2, AI lab, cost-saving strategies, quantization, model selection, GPU utilization, efficient inference, cloud resources, open-source LLMs, token generation speed, token processing speed, GPU benchmarks, LLM inference, LLM performance, AI development, AI tools.