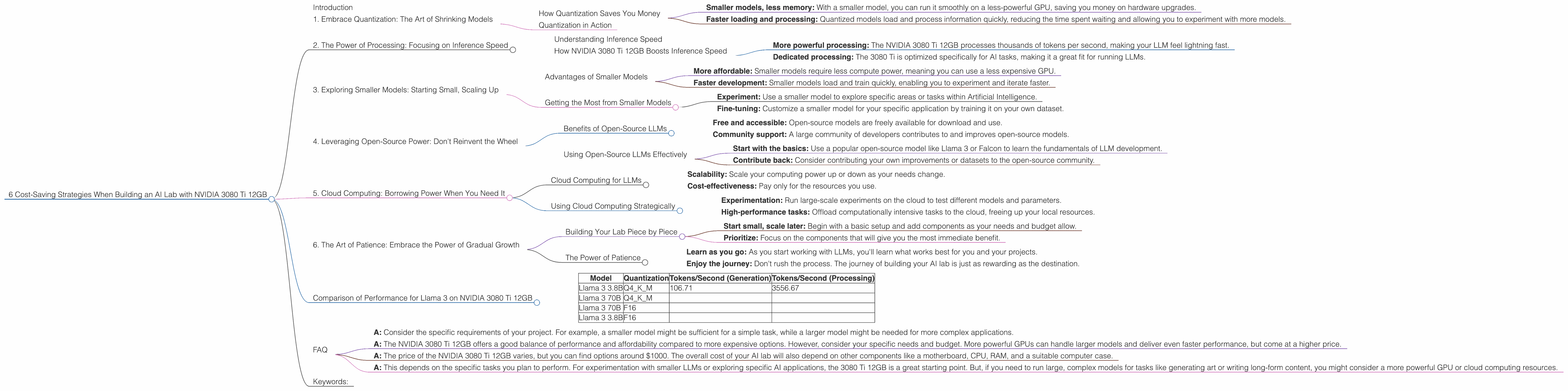

6 Cost-Saving Strategies When Building an AI Lab with NVIDIA 3080 Ti 12GB

Introduction

Are you ready to dive into the exciting world of local Large Language Models (LLMs)? LLMs are the brains behind powerful applications like ChatGPT, and building your own lab lets you explore their capabilities firsthand. But, let's be real, setting up a high-performance LLM lab can be a hefty investment. That's where the NVIDIA 3080 Ti 12GB comes in as a powerful yet budget-friendly option. This article will guide you through six cost-saving strategies for building your AI lab around this GPU powerhouse, helping you maximize performance without breaking the bank.

1. Embrace Quantization: The Art of Shrinking Models

Remember that time you tried to cram way too much into your suitcase? Quantization for LLMs is kind of like that, but for models instead of clothes. It shrinks an LLM by storing its weights at lower numerical precision (for example, roughly 4 bits per weight instead of 16), usually with only a modest loss in accuracy. Think of it as packing the same model into a much smaller suitcase.

How Quantization Saves You Money

- Smaller models, less memory: A quantized model needs less video memory (VRAM), so it can run on a GPU with less VRAM, saving you money on hardware upgrades.

- Faster loading and processing: Quantized models load faster and usually generate text faster. You spend less time waiting and can experiment with more models.
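Why does a smaller model generate text faster? A common rule of thumb is that single-user token generation is limited by memory bandwidth, because producing each token means reading the model's weights from VRAM. The sketch below turns that rule into a rough ceiling on generation speed. It assumes the 3080 Ti's published 912 GB/s memory bandwidth and uses illustrative, hypothetical model sizes. Real throughput lands well below this bound.

```python
# Rough ceiling on generation speed, ASSUMING generation is memory-bandwidth
# bound: each new token requires reading every weight from VRAM once.

RTX_3080_TI_BANDWIDTH_GB_S = 912.0  # published memory bandwidth of the card


def max_tokens_per_second(model_size_gb: float,
                          bandwidth_gb_s: float = RTX_3080_TI_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s: bandwidth divided by bytes read per token."""
    if model_size_gb <= 0:
        raise ValueError("model size must be positive")
    return bandwidth_gb_s / model_size_gb


# Hypothetical sizes: the same model stored at 16-bit vs. roughly 4-bit weights.
f16_size_gb = 7.6
q4_size_gb = 2.3

print(f"F16 ceiling: {max_tokens_per_second(f16_size_gb):.0f} tokens/s")
print(f"Q4  ceiling: {max_tokens_per_second(q4_size_gb):.0f} tokens/s")
```

Shrinking the weights about threefold raises the ceiling about threefold. This is the intuition behind "faster processing," even though measured speeds also depend on the software stack.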

Quantization in Action

Think of a model like a recipe. The original recipe (F16, 16-bit floating point) uses precise measurements, allowing for the most delicious output. Quantization (for example Q4_K_M, a roughly 4-bit format used by llama.cpp) is like using approximations, say "a pinch of salt" instead of "1/8 teaspoon." It saves a lot of space because you're not storing every exact measurement, but the dish still tastes good.
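To make the "pinch of salt" idea concrete, here is a minimal sketch of block-wise 4-bit quantization. A block of weights shares one scale factor, and each weight is stored as a small integer. This is a deliberately simplified scheme for illustration. It is not llama.cpp's actual Q4_K_M format, which uses a more elaborate layout with super-blocks and extra scale/min values.

```python
# Simplified block-wise 4-bit quantization (NOT llama.cpp's real Q4_K_M layout).
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to signed 4-bit integers that share one scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest step, then clamp to the signed 4-bit range [-8, 7].
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Recover approximate floats: the 'pinch of salt' version of the recipe."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.91, 0.04, -0.27, 0.66, -0.88, 0.35]
scale, codes = quantize_block(weights)
approx = dequantize_block(scale, codes)
max_error = max(abs(w - a) for w, a in zip(weights, approx))

print("codes:", codes)
print("max error:", round(max_error, 4))
```

The error per weight is at most half a step (`scale / 2`). Storage drops sharply: eight 16-bit weights take 128 bits, while eight 4-bit codes plus one 16-bit scale take 48 bits.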

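Example: On the NVIDIA 3080 Ti 12GB, a 3.8B-parameter Llama 3 model quantized with Q4_K_M generates about 106.71 tokens per second. Quantization also decides which models fit at all. At F16, a 3.8B model needs about 7.6 GB for its weights alone. At Q4_K_M, the same model needs only around 2.3 GB, leaving room for longer contexts. A 70B model, however, still needs over 40 GB even at Q4_K_M. That is far more than the card's 12 GB, so a 70B model cannot run entirely on this GPU, and the benchmark data has no figures for it.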
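The memory figures above come from simple arithmetic: parameters × bits per weight ÷ 8 gives the bytes needed for the weights. The sketch below applies that formula. It assumes an average of about 4.85 bits per weight for Q4_K_M, which is an estimate, since the format mixes block types. It also ignores the KV cache and runtime overhead, so a "fits" verdict is optimistic.

```python
# Back-of-the-envelope VRAM needed just for the weights: params x bits / 8.
VRAM_GB = 12.0  # the 3080 Ti's memory


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    # billions of params * bits per param / 8 bits per byte = GB directly
    return params_billions * bits_per_weight / 8


# ASSUMED average bits per weight; ~4.85 for Q4_K_M is an estimate, not a spec.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}

for params in (3.8, 70.0):
    for name, bits in FORMATS.items():
        need = weight_memory_gb(params, bits)
        # Weights only: KV cache and runtime buffers need extra headroom.
        verdict = "fits" if need < VRAM_GB else "does not fit"
        print(f"{params:>5}B {name:<7} ~{need:5.1f} GB -> {verdict} in {VRAM_GB:.0f} GB")
```

Running it shows that a 70B model overshoots 12 GB in both formats. Quantization lets a small model fit comfortably, but it does not make a 70B model fit this card.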

2. The Power of Processing: Focusing on Inference Speed

Inference is like asking your LLM a question and getting an answer. The faster it can “think” and respond, the better. The key is to optimize for speed and accuracy.

Understanding Inference Speed

Imagine your LLM is a super-efficient worker. It first reads your prompt (prompt processing) and then writes its reply one token at a time (generation). Both stages are measured in tokens per second: the more tokens it handles per second, the faster your LLM "works."

How NVIDIA 3080 Ti 12GB Boosts Inference Speed

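- More powerful processing: The NVIDIA 3080 Ti 12GB can read prompts at thousands of tokens per second. For small quantized models, it generates replies at around a hundred tokens per second, which makes the model feel responsive.

- Tensor Cores: The 3080 Ti is a consumer gaming card rather than a dedicated AI accelerator. Its Tensor Cores still speed up the matrix math at the heart of LLM inference, which makes it a good fit for running LLMs locally.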

Example: With the 3.8B Llama 3 model at Q4_K_M, the NVIDIA 3080 Ti 12GB processes prompts at about 3,556.67 tokens per second and generates output at about 106.71 tokens per second. The result is quick responses, which makes your AI experiments faster and more interactive.
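The two speeds combine into the wait you actually feel. Reading the prompt is fast, and generating the reply dominates. The sketch below estimates end-to-end response time from the article's two throughput figures for the 3.8B Q4_K_M model. The prompt and reply lengths are hypothetical, and model loading and other overheads are ignored.

```python
# Estimate response time from the article's throughput figures (3.8B, Q4_K_M).
PROMPT_TOKENS_PER_S = 3556.67  # prompt processing speed
GEN_TOKENS_PER_S = 106.71      # generation speed


def response_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Time to read the prompt plus time to generate the reply, ignoring overheads."""
    return prompt_tokens / PROMPT_TOKENS_PER_S + output_tokens / GEN_TOKENS_PER_S


# Hypothetical request: a 1,000-token prompt and a 250-token answer.
total = response_seconds(1000, 250)
print(f"~{total:.2f} s total")
```

In this example, the 1,000-token prompt takes under a third of a second, while the 250-token answer takes over two seconds. For interactive use, generation speed is the number to watch.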

3. Exploring Smaller Models: Starting Small, Scaling Up

Think of smaller models as learning to walk before you run. Start with a smaller, more manageable model, and gradually increase complexity as you gain experience.

Advantages of Smaller Models

- More affordable: Smaller models need less memory and compute, so you can use a less expensive GPU.

- Faster development: Smaller models load and train quickly, enabling you to experiment and iterate faster.

Getting the Most from Smaller Models

- Experiment: Use a smaller model to explore specific areas or tasks within artificial intelligence.

- Fine-tuning: Customize a smaller model for your specific application by training it on your own dataset.

4. Leveraging Open-Source Power: Don't Reinvent the Wheel

Open-source LLMs are like free blueprints: you can build on and customize them instead of starting from scratch, which saves you time and resources.

Benefits of Open-Source LLMs

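- Free and accessible: Open models can be downloaded and used at no cost, though licenses vary. Llama 3, for example, ships under Meta's own community license, which comes with usage conditions.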

- Community support: A large community of developers contributes to and improves open-source models.

Using Open-Source LLMs Effectively

- Start with the basics: Use a popular open-source model like Llama 3 or Falcon to learn the fundamentals of LLM development.

- Contribute back: Consider contributing your own improvements or datasets to the open-source community.

5. Cloud Computing: Borrowing Power When You Need It

Think of cloud computing as renting a powerful computer for a short period. It's a great way to access high-performance computing resources without the upfront cost of buying expensive hardware.

Cloud Computing for LLMs

- Scalability: Scale your computing power up or down as your needs change.

- Cost-effectiveness: Pay only for the resources you use.

Using Cloud Computing Strategically

- Experimentation: Run large-scale experiments on the cloud to test different models and parameters.

- High-performance tasks: Offload computationally intensive tasks to the cloud, freeing up your local resources.
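Whether renting beats buying depends mostly on how many hours you will actually use the GPU. The sketch below computes a simple break-even point. The ~$1,000 card price echoes the FAQ below, and the $0.50/hour rental rate is a hypothetical placeholder, so substitute real quotes. It also ignores electricity, the rest of the PC and resale value.

```python
# Break-even point between buying a GPU and renting one by the hour.
import math


def break_even_hours(purchase_price: float, hourly_rate: float) -> float:
    """Hours of cloud use that would cost as much as buying the card outright."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return purchase_price / hourly_rate


# Hypothetical inputs: ~$1,000 card and an ASSUMED $0.50/hour cloud GPU rental.
hours = break_even_hours(1000.0, 0.50)
print(f"Break-even after ~{hours:.0f} GPU-hours (~{math.ceil(hours / 8)} eight-hour days)")
```

If you expect to use the GPU well below the break-even hours, renting is likely cheaper. Heavy daily use favors owning the card.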

6. The Art of Patience: Embrace the Power of Gradual Growth

Building an AI lab is a marathon, not a sprint. Focus on gradual growth rather than trying to do everything at once.

Building Your Lab Piece by Piece

- Start small, scale later: Begin with a basic setup and add components as your needs and budget allow.

- Prioritize: Focus on the components that will give you the most immediate benefit.

The Power of Patience

- Learn as you go: As you start working with LLMs, you'll learn what works best for you and your projects.

- Enjoy the journey: Don't rush the process. The journey of building your AI lab is just as rewarding as the destination.

Comparison of Performance for Llama 3 on NVIDIA 3080 Ti 12GB

| Model | Quantization | Generation (tokens/s) | Prompt processing (tokens/s) |
|---|---|---|---|
| Llama 3 3.8B | Q4_K_M | 106.71 | 3556.67 |
| Llama 3 70B | Q4_K_M | No data | No data |
| Llama 3 70B | F16 | No data | No data |
| Llama 3 3.8B | F16 | No data | No data |

Note: The source data has no values for the Llama 3 70B models (Q4_K_M or F16) or for the 3.8B model at F16.

FAQ

Q: What is the best way to choose an LLM model for my project?

- A: Consider the specific requirements of your project. For example, a smaller model might be sufficient for a simple task, while a larger model might be needed for more complex applications.

Q: What are the differences between the NVIDIA 3080 Ti 12GB and other GPUs?

- A: The NVIDIA 3080 Ti 12GB offers a good balance of performance and affordability compared to more expensive options. However, consider your specific needs and budget. GPUs with more VRAM can hold larger models, and faster GPUs deliver higher throughput, but both come at a higher price.

Q: How much does it cost to build an AI lab with an NVIDIA 3080 Ti 12GB?

- A: The price of the NVIDIA 3080 Ti 12GB varies, but you can find cards for around $1,000. The overall cost of your AI lab also depends on other components, such as the motherboard, CPU, RAM and a suitable computer case.

Q: Will a 3080 Ti 12GB be sufficient for me?

- A: This depends on the specific tasks you plan to perform. For experimentation with smaller LLMs or exploring specific AI applications, the 3080 Ti 12GB is a great starting point. But, if you need to run large, complex models for tasks like generating art or writing long-form content, you might consider a more powerful GPU or cloud computing resources.
