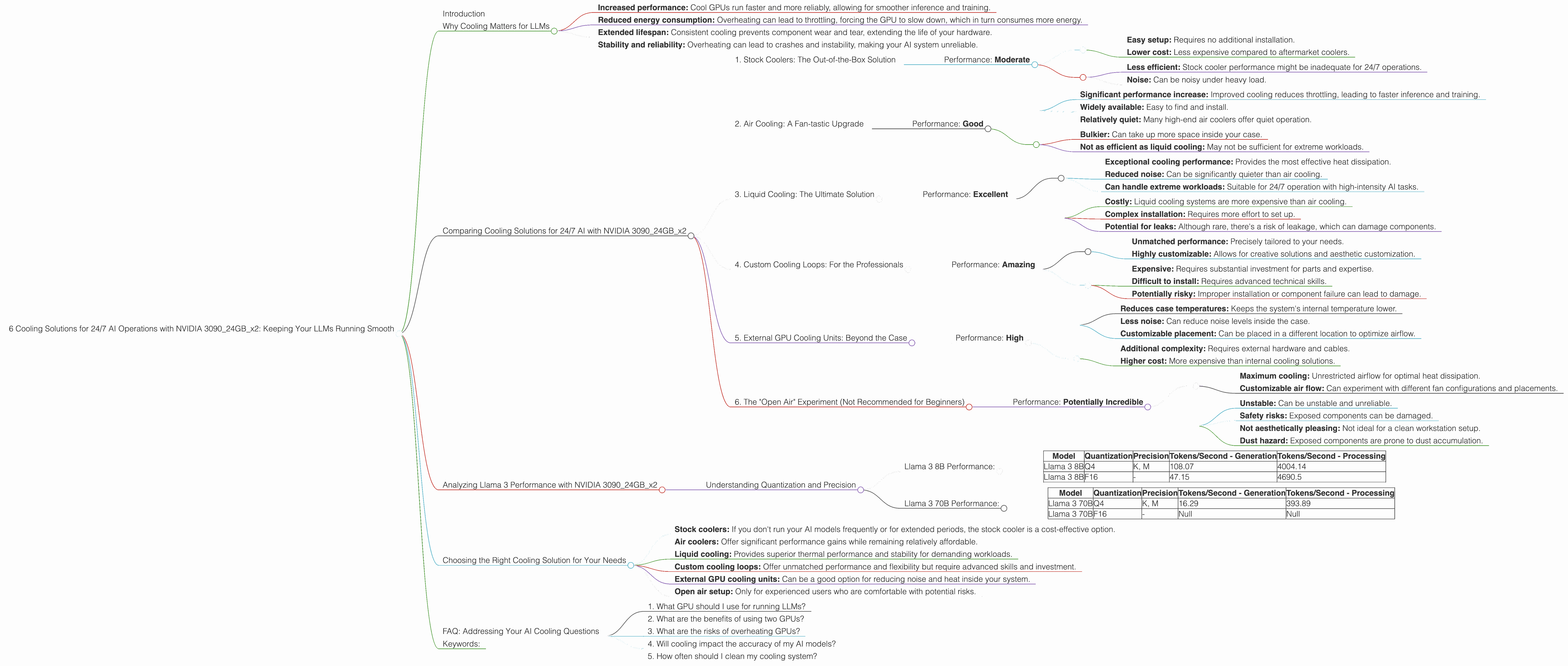

6 Cooling Solutions for 24/7 AI Operations with NVIDIA RTX 3090 24GB x2

Introduction

Running large language models (LLMs) on your own hardware is becoming increasingly popular. Meta's Llama 3, released in 8B and 70B parameter sizes that can be downloaded and run locally, has added to the demand for powerful GPUs and for cooling that keeps them running smoothly and efficiently.

This article looks at cooling solutions for running two NVIDIA RTX 3090 24GB GPUs around the clock, alongside benchmark figures for Llama 3 8B and 70B on this setup. We'll cover the main types of cooling, how each affects sustained performance, and how to choose the best option for your needs.

Why Cooling Matters for LLMs

Think of your GPUs as the engine of a high-performance car. The LLM is the workload that pushes them, and like any engine under sustained load, they need effective cooling to keep delivering full power.

Here's why cooling is crucial for your AI setup:

- Sustained performance: A GPU that stays below its thermal limits can hold its boost clocks instead of throttling, which keeps inference and training speeds steady.

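- Better energy efficiency: A hot GPU spends more power on the same work, because leakage current rises with temperature and the fans spin harder. If it throttles, jobs also take longer, keeping the rest of the system powered for more time.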

- Extended lifespan: Lower, steadier temperatures reduce thermal stress on components, which helps extend the life of your hardware.

- Stability and reliability: Overheating can lead to crashes and instability, making your AI system unreliable.
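To see whether your cards are actually running hot or throttling under a 24/7 load, it helps to log temperature, SM clock, and power draw for each GPU. The sketch below shells out to `nvidia-smi` using its standard `--query-gpu` option; the helper names and the 80 °C alert level are assumptions for illustration, not NVIDIA recommendations.

```python
import math
import subprocess
from dataclasses import dataclass

# Standard nvidia-smi --query-gpu fields (`nvidia-smi --help-query-gpu` lists them).
QUERY_FIELDS = "index,temperature.gpu,clocks.sm,power.draw"


@dataclass
class GpuReading:
    index: int
    temp_c: float
    sm_clock_mhz: float
    power_w: float


def _to_float(field: str) -> float:
    """nvidia-smi prints '[N/A]' for unsupported fields; map that to NaN."""
    field = field.strip()
    return math.nan if "N/A" in field else float(field)


def parse_readings(csv_text: str) -> list[GpuReading]:
    """Parse `--format=csv,noheader,nounits` output: one line per GPU."""
    readings = []
    for line in csv_text.strip().splitlines():
        idx, temp, clock, power = line.split(",")
        readings.append(GpuReading(int(idx), _to_float(temp),
                                   _to_float(clock), _to_float(power)))
    return readings


def hot_gpus(readings: list[GpuReading], max_temp_c: float = 80.0) -> list[int]:
    """Indices of GPUs at or above max_temp_c (80 C is an assumed alert level)."""
    return [r.index for r in readings if r.temp_c >= max_temp_c]


def read_gpus() -> list[GpuReading]:
    """Query every GPU once via nvidia-smi (requires the NVIDIA driver)."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_readings(out)
```

Calling `read_gpus()` every minute or so from a loop or scheduler and logging the results makes it easy to spot SM clocks sagging as temperatures climb during long runs.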

Comparing Cooling Solutions for 24/7 AI with NVIDIA RTX 3090 24GB x2

Keeping your RTX 3090s cool is essential for maintaining peak performance. Each card is rated for 350 W of board power and its GDDR6X memory is known to run hot, so two cards under a sustained, around-the-clock workload can push even a well-designed cooler to its limits.
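Because nearly all the power a GPU draws ends up as heat, a quick calculation shows how much heat your cooling (and your room) has to remove. This sketch assumes both cards sit at their 350 W rated board power continuously, which is a worst case; real inference loads may draw less.

```python
# Continuous heat load of two RTX 3090s in a 24/7 setup.
# Assumption: each card draws its full 350 W rated board power all the time.
WATTS_PER_GPU = 350
NUM_GPUS = 2
BTU_PER_HOUR_PER_WATT = 3.412  # approximate conversion factor


def heat_load(watts_per_gpu: float = WATTS_PER_GPU, num_gpus: int = NUM_GPUS):
    """Return (total watts, kWh per day, BTU per hour) for the GPUs alone."""
    total_w = watts_per_gpu * num_gpus
    kwh_per_day = total_w * 24 / 1000
    btu_per_hour = total_w * BTU_PER_HOUR_PER_WATT
    return total_w, kwh_per_day, btu_per_hour


watts, kwh, btu = heat_load()
print(f"{watts} W continuous, {kwh:.1f} kWh/day, ~{btu:.0f} BTU/h of heat")
```

Under that assumption the two cards alone dump about 700 W (roughly 16.8 kWh per day) into the room, before counting the CPU and power supply losses, and the room's ventilation has to handle it on top of whatever cooler the cards use.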

Let's explore six cooling solutions for 24/7 AI operations with two RTX 3090 24GB GPUs and weigh their trade-offs:

1. Stock Coolers: The Out-of-the-Box Solution

Every RTX 3090 ships with a stock cooler: a factory-fitted heatsink with fans (or a blower, on some models). It works, but it might not be sufficient for the sustained heat that large AI models generate.

Performance: Moderate

Pros:

- Easy setup: Requires no additional installation.

- Lower cost: Less expensive than aftermarket coolers.

Cons:

- Less efficient: Stock cooler performance might be inadequate for 24/7 operation.

- Noise: Can be noisy under heavy load.

For whom: The stock solution is a good starting point for basic AI operations, but for intensive workloads it might not be enough, especially with two cards in adjacent slots, where the upper card draws in the lower card's exhaust.

2. Air Cooling: A Fan-tastic Upgrade

Upgrading to aftermarket air coolers can improve heat dissipation and stability. These coolers, often larger and more powerful, feature multiple fans and heatsinks for enhanced thermal performance.

Performance: Good

Pros:

- Better sustained performance: Improved cooling reduces throttling, so inference and training run at full speed for longer.

- Widely available: Easy to find and install.

- Relatively quiet: Many high-end air coolers offer quiet operation.

Cons:

- Bulkier: Can take up more space inside your case.

- Not as efficient as liquid cooling: May not be sufficient for extreme workloads.

For whom: The air cooling solution is ideal for most users who want a significant performance boost without investing in liquid cooling.

3. Liquid Cooling: The Ultimate Solution

Liquid cooling, typically an all-in-one (AIO) closed-loop kit, moves heat more effectively than air. A water block mounted on the GPU transfers heat into the coolant, which a pump circulates to a radiator where fans dissipate it.

Performance: Excellent

Pros:

- Exceptional cooling performance: Provides the most effective heat dissipation.

- Reduced noise: Can be significantly quieter than air cooling.

- Can handle extreme workloads: Suitable for 24/7 operation with high-intensity AI tasks.

Cons:

- Costly: Liquid cooling systems are more expensive than air cooling.

- Complex installation: Requires more effort to set up.

- Potential for leaks: Although rare, there's a risk of leakage, which can damage components.

For whom: Liquid cooling is the ultimate solution for demanding 24/7 AI operations, but it comes with a higher price and complexity.

4. Custom Cooling Loops: For the Professionals

For ultimate control and customization, custom cooling loops offer the most advanced thermal management. You can design your own loop, choosing components like pumps, radiators, and water blocks to optimize performance.

Performance: Amazing

Pros:

- Unmatched performance: Precisely tailored to your needs.

- Highly customizable: Allows for creative solutions and aesthetic customization.

Cons:

- Expensive: Requires substantial investment for parts and expertise.

- Difficult to install: Requires advanced technical skills.

- Potentially risky: Improper installation or component failure can lead to damage.

For whom: Custom cooling loops are for experienced users who want to achieve maximum performance and customization in their AI systems.

5. External GPU Cooling Units: Beyond the Case

Some cooling solutions move heat dissipation outside the case entirely. These external units, typically a large external radiator or a chiller connected to the GPUs' cooling loop, have their own fans and heat exchangers, so the heat is removed from the case altogether.

Performance: High

Pros:

- Reduces case temperatures: Keeps the system's internal temperature lower.

- Less noise: Larger, slower fans outside the case can run quieter than small fans inside it.

- Customizable placement: Can be placed in a different location to optimize airflow.

Cons:

- Additional complexity: Requires external hardware and cables.

- Higher cost: More expensive than internal cooling solutions.

For whom: External GPU cooling units are a good option for users who want to reduce noise levels and improve airflow in their cases.

6. The "Open Air" Experiment (Not Recommended for Beginners)

To be honest, this is not a standard recommendation, just an idea for those truly dedicated to keeping their GPUs cool. Some users report success running their GPUs outside a case in an “open air” setup, such as a test bench or frame. This allows maximum airflow and heat dissipation.

Performance: Potentially Incredible

Pros:

- Maximum cooling: Unrestricted airflow for optimal heat dissipation.

- Customizable air flow: Can experiment with different fan configurations and placements.

Cons:

- Less stable: Cards on an open bench or frame are easier to knock or disconnect, which can cause crashes or damage.

- Safety risks: Exposed components can be damaged.

- Not aesthetically pleasing: Not ideal for a clean workstation setup.

- Dust hazard: Exposed components are prone to dust accumulation.

For whom: This solution is only for experienced users who are comfortable with the risks involved.

Analyzing Llama 3 Performance with NVIDIA RTX 3090 24GB x2

To put these cooling options in context, here is what two RTX 3090 24GB GPUs deliver with the Llama 3 8B and 70B models. The benchmark figures below give tokens per second for generation and for prompt processing at different quantization and precision settings. They describe the hardware's throughput and are not broken down by cooling solution.

Understanding Quantization and Precision

Quantization compresses an AI model, much as you might compress a photo to save space: it shrinks the model by storing its weights with fewer bits.

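- Q4_K_M: A 4-bit quantization format (the name follows llama.cpp's GGUF conventions). Weights are stored as 4-bit values in blocks that share scale factors, and a few tensors are kept at higher precision, so the average works out to slightly more than 4 bits per weight. This shrinks the model considerably, with some potential loss of accuracy.

- F16: 16-bit floating point (half precision), essentially the unquantized weights. F16 preserves accuracy better than Q4_K_M but needs over three times as much memory and memory bandwidth.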
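To make the idea concrete, here is a toy version of block-wise 4-bit quantization: each block of weights shares one scale factor, and every weight is rounded to a signed 4-bit integer. This is a simplified illustration under our own assumptions (absmax scaling, one 16-bit scale per block), not the actual Q4_K_M algorithm, which nests blocks and also stores per-block offsets.

```python
# Toy illustration of block-wise 4-bit quantization (NOT llama.cpp's Q4_K_M).


def quantize_block_4bit(values: list[float]) -> tuple[float, list[int]]:
    """Map one block of floats to signed 4-bit integers sharing one scale."""
    # The largest magnitude maps to 7; an all-zero block gets a dummy scale of 1.0.
    scale = max(abs(v) for v in values) / 7 or 1.0
    quants = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [scale * q for q in quants]


def bits_per_weight(block_size: int, scale_bits: int = 16) -> float:
    """Average storage cost: 4 bits per value plus one shared scale per block."""
    return (block_size * 4 + scale_bits) / block_size


block = [1.4, -0.6, 0.25, 0.0]
scale, quants = quantize_block_4bit(block)
restored = dequantize_block(scale, quants)
print(quants, [round(x, 3) for x in restored])
```

Each restored value is off by at most half a quantization step (here 0.25 comes back as 0.2). With 32 weights per block and a 16-bit scale, the storage cost is 4.5 bits per weight, which is why 4-bit formats end up slightly above 4 bits on average.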

Llama 3 8B Performance:

| Model | Format | Generation (tokens/s) | Prompt processing (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 108.07 | 4004.14 |
| Llama 3 8B | F16 | 47.15 | 4690.5 |

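Results: In both formats, prompt processing is far faster than generation. Processing evaluates all prompt tokens in parallel, which keeps the GPUs' compute units busy, while generation produces one token at a time and must read the model's weights from memory for every token, so it is limited mainly by memory bandwidth. That is also why Q4_K_M generates about 2.3 times faster than F16 (108.07 vs. 47.15 tokens per second): its weights are much smaller, so each token requires less data to be read. In prompt processing, F16 came out slightly ahead (4690.5 vs. 4004.14 tokens per second), plausibly because this compute-bound phase gains little from smaller weights while Q4_K_M must dequantize them first.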
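The bandwidth argument can be checked with a back-of-envelope ceiling: if every generated token has to read all the weights once, tokens per second cannot exceed memory bandwidth divided by weight size. The sketch assumes the RTX 3090's published memory bandwidth of about 936 GB/s, a nominal 8 billion parameters, and roughly 4.85 bits per weight for Q4_K_M; none of these figures come from the benchmark itself.

```python
# Back-of-envelope "memory-bound" ceiling for token generation.
# Assumptions: ~936 GB/s bandwidth per RTX 3090, nominal 8e9 parameters,
# ~4.85 bits/weight for Q4_K_M. KV-cache reads and overheads are ignored.
BANDWIDTH_GB_S = 936.0
PARAMS_8B = 8e9


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def max_gen_tokens_per_s(params: float, bits_per_weight: float,
                         bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Ceiling if every generated token streams all weights from VRAM once.

    With a layer split, the two cards process their layers one after the
    other, so the effective bandwidth per token is roughly one card's.
    """
    return bandwidth_gb_s / weight_gb(params, bits_per_weight)


# Measured Llama 3 8B generation speeds from the benchmark table.
measured = {"F16": (16.0, 47.15), "Q4_K_M": (4.85, 108.07)}

for fmt, (bits, tokens_per_s) in measured.items():
    ceiling = max_gen_tokens_per_s(PARAMS_8B, bits)
    print(f"{fmt}: ceiling ~{ceiling:.0f} tok/s, measured {tokens_per_s} "
          f"({tokens_per_s / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceiling is 58.5 tokens/s for F16 and about 193 for Q4_K_M, so the measured 47.15 and 108.07 sit at roughly 80% and 56% of their ceilings. The quantized model falls further short, which is consistent with dequantization and other fixed overheads taking a larger share of each token's time once less data has to be moved.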

Llama 3 70B Performance:

| Model | Format | Generation (tokens/s) | Prompt processing (tokens/s) |
|---|---|---|---|
| Llama 3 70B | Q4_K_M | 16.29 | 393.89 |
| Llama 3 70B | F16 | n/a | n/a |

Results: The 70B model is much slower than the 8B model, as expected: it has almost nine times as many parameters, so each generated token requires reading far more weight data. With Q4_K_M it reached 16.29 tokens per second for generation and 393.89 tokens per second for prompt processing. There are no F16 results for the 70B model, which is also expected: at 2 bytes per parameter, its weights alone need about 140 GB, far more than the 48 GB of combined VRAM on two 24 GB cards.
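A quick fit check shows why only the quantized 70B model runs on this setup. The sketch assumes nominal parameter counts, about 4.85 bits per weight for Q4_K_M, and a hypothetical 4 GB of headroom for the KV cache and CUDA context; real memory use depends on context length and the runtime.

```python
# Does a model's weight file fit in the pooled VRAM of two RTX 3090s?
# Assumptions: nominal parameter counts, ~4.85 bits/weight for Q4_K_M, and a
# hypothetical 4 GB headroom. Decimal GB are used throughout; each card's
# 24 GiB is slightly more than 24e9 bytes, so the estimate errs on the safe side.
TOTAL_VRAM_GB = 2 * 24


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: float, bits_per_weight: float,
         vram_gb: float = TOTAL_VRAM_GB, headroom_gb: float = 4.0) -> bool:
    """True if the weights plus the assumed headroom fit in the VRAM budget."""
    return weight_gb(params, bits_per_weight) + headroom_gb <= vram_gb


for name, params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    for fmt, bits in [("F16", 16.0), ("Q4_K_M", 4.85)]:
        size = weight_gb(params, bits)
        verdict = "fits" if fits(params, bits) else "does not fit"
        print(f"{name} {fmt}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 70B model needs about 140 GB at F16 but only about 42 GB at Q4_K_M, which fits across two cards but would not fit on a single 24 GB card.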

Choosing the Right Cooling Solution for Your Needs

The ideal cooling solution depends on your specific AI workload, budget, and technical expertise. Here's a quick guide to help you choose the best option:

For occasional use with light workloads:

- Stock coolers: If you don't run your AI models frequently or for extended periods, the stock cooler is a cost-effective option.

For regular use with moderate to heavy workloads:

- Air coolers: Offer significant performance gains while remaining relatively affordable.

For intensive 24/7 AI operations:

- Liquid cooling: Provides superior thermal performance and stability for demanding workloads.

For professional-grade setups with extreme customization:

- Custom cooling loops: Offer unmatched performance and flexibility but require advanced skills and investment.

For reducing noise and improving airflow:

- External GPU cooling units: Can be a good option for reducing noise and heat inside your system.

For dedicated users willing to experiment with risks:

- Open air setup: Only for experienced users who are comfortable with potential risks.

FAQ: Addressing Your AI Cooling Questions

1. What GPU should I use for running LLMs?

For running LLMs, especially larger models like Llama 3 70B, you'll need a high-performance GPU with plenty of memory. The NVIDIA RTX 3090 24GB is a popular choice because of its strong performance and 24 GB of VRAM.

2. What are the benefits of using two GPUs?

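Two GPUs mainly give you more memory: together, two 24 GB cards provide 48 GB, enough to run Llama 3 70B with Q4_K_M quantization, which would not fit on one card. How much speed you gain depends on how the work is split. With a layer split, each card holds part of the model and they take turns on every token, so a single request generates little faster; tensor parallelism and batched or training workloads can make better use of both cards at once.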
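One common way to spread a model over two cards is a layer split: each card receives a contiguous range of layers in proportion to its memory, and the cards then process their ranges one after another for every token. The function below is a hypothetical illustration of a proportional split, not llama.cpp's or any other tool's actual implementation; it assumes Llama 3 70B's 80 transformer layers.

```python
# Hypothetical sketch of a proportional layer split across GPUs.


def split_layers(n_layers: int, proportions: list[float]) -> list[tuple[int, int]]:
    """Give each GPU a contiguous, half-open [start, end) range of layers,
    sized in proportion to `proportions` (e.g. each card's VRAM in GB)."""
    total = sum(proportions)
    ranges = []
    start = 0
    cumulative = 0.0
    for i, share in enumerate(proportions):
        cumulative += share
        # The last GPU takes whatever is left so every layer is assigned.
        if i == len(proportions) - 1:
            end = n_layers
        else:
            end = round(n_layers * cumulative / total)
        ranges.append((start, end))
        start = end
    return ranges


# Two equal 24 GB cards split Llama 3 70B's 80 layers evenly.
print(split_layers(80, [24, 24]))  # [(0, 40), (40, 80)]
```

Because the second card waits for the first on every token, this arrangement mainly adds memory capacity; it does not make a single request generate much faster.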

3. What are the risks of overheating GPUs?

Overheating can reduce performance, cause instability, damage components, and shorten the lifespan of your GPUs.

4. Will cooling impact the accuracy of my AI models?

No, cooling solutions primarily impact the speed and stability of your AI models. They don't affect the accuracy of the model itself.

5. How often should I clean my cooling system?

Regular cleaning is crucial for maintaining optimal cooling performance. Dust accumulation can hinder airflow and reduce cooling efficiency. You should clean your cooling system every few months or even more frequently depending on your environment.
