6 Advanced Techniques to Squeeze Every Ounce of Performance from NVIDIA RTX 4000 Ada 20GB

Introduction

Large language models (LLMs) are generating plenty of excitement, and for good reason! They can produce human-like text, translate languages, write creative content, and answer questions. Running them locally is a real challenge, though, because large models such as Llama 3 70B need far more memory than a typical single GPU provides. That's where the NVIDIA RTX 4000 Ada 20GB comes in: a workstation GPU that can run small and mid-sized LLMs quickly, provided you pick a model and quantization level that fit in its 20 GB of VRAM.

This article will guide you through six advanced techniques to unleash the full potential of your RTX 4000 Ada 20GB for local LLM inference. We'll dive into the fascinating world of quantization, model sizes, and various inference strategies, offering practical insights to maximize your LLM's speed and efficiency. Think of it as a turbocharger for your local AI endeavors!

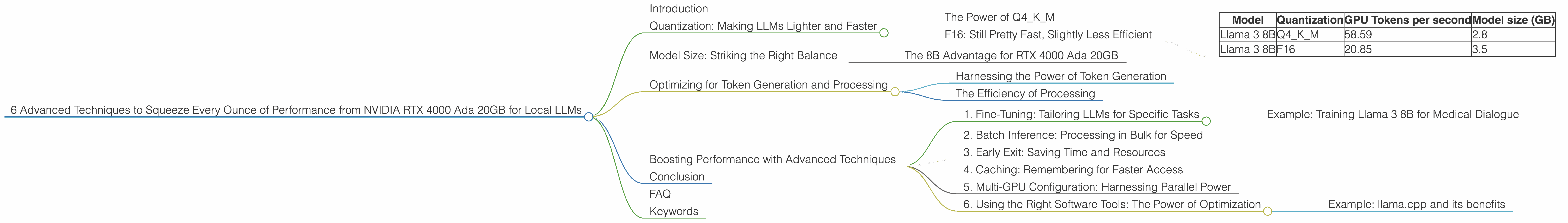

Quantization: Making LLMs Lighter and Faster

Quantization is like putting your LLM on a diet, making it leaner and more agile. It's a technique that reduces the precision of your model's weights, making them smaller and faster to process.

Imagine you have a big, complicated recipe with lots of ingredients. Quantization is like simplifying it by using fewer ingredients (but still delicious!), making it easier to cook and faster to enjoy.

The Power of Q4_K_M

One common quantization method is Q4_K_M, a format used by llama.cpp. It stores most of the model's weights in roughly 4 to 5 bits each instead of 16, which shrinks the memory footprint to about a third of the F16 size. Token generation is limited mainly by how fast the GPU can read weights from memory, so fewer bytes per weight translates directly into more tokens per second on the RTX 4000 Ada 20GB.
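To make the idea concrete, here is a minimal sketch of symmetric 4-bit quantization applied to one small block of weights. It is a deliberately simplified illustration and not the actual Q4_K_M scheme, which is more elaborate; the function names and the sample values are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1              # 7 for signed 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


# Made-up sample weights, for illustration only.
block = [0.12, -0.53, 0.97, -0.08, 0.44, -0.71, 0.03, 0.29]
scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("quantized values:", quants)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the round-trip error stays within half a quantization step. That small loss of precision is the price paid for the smaller, faster model.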

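F16: Higher Precision, Lower Speed

Another option is F16, which stores each weight as a 16-bit float. F16 is not compressed the way Q4_K_M is, so it needs several times more memory and generates tokens more slowly. In exchange, it keeps the weights close to their original precision, which makes it a useful quality baseline.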

Let's break down the numbers on our RTX 4000 Ada 20GB:

| Model | Quantization | Generation speed (tokens/s) | Approx. model size (GB) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 | 4.9 |
| Llama 3 8B | F16 | 20.85 | 16.1 |

As you can see, Q4_K_M generates tokens about 2.8 times faster than F16 for Llama 3 8B (58.59 vs. 20.85 tokens per second), and it is also the smaller of the two models.

Model Size: Striking the Right Balance

Choosing the right LLM size is like deciding which bike to ride – a smaller bike is easier to maneuver while a larger one can handle bigger terrains. It's all about finding the sweet spot for your needs.

The 8B Advantage for RTX 4000 Ada 20GB

For our RTX 4000 Ada 20GB, the Llama 3 8B model shines with its fast token generation speeds, as shown in the table above. The 70B model, however, does not fit on this GPU: even at 4-bit quantization its weights take roughly 40 GB, about twice the card's 20 GB of VRAM.
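A quick back-of-the-envelope check shows which combinations fit. The sketch below estimates the size of the weights alone as parameters times bits per weight. The parameter counts and the average of 4.85 bits per weight for Q4_K_M are rounded assumptions, and the estimate ignores the KV cache and runtime overhead, so treat the results as lower bounds.

```python
VRAM_GB = 20.0  # RTX 4000 Ada 20GB


def weight_size_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed, rounded values for illustration.
MODELS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.0e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}

for model_name, n_params in MODELS.items():
    for quant_name, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(n_params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model_name} {quant_name}: ~{size:.1f} GB of weights, "
              f"{verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions both 8B variants fit, while neither 70B variant does, which matches the limitation described above.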

Optimizing for Token Generation and Processing

LLM inference has two phases: prompt processing, where the model reads your input, and token generation, where it writes the response one token at a time. The RTX 4000 Ada 20GB handles both well for models that fit in its memory, making it a solid choice for local LLM inference.

Harnessing the Power of Token Generation

Remember those tokens-per-second numbers we looked at? They measure how quickly your RTX 4000 Ada 20GB produces new tokens once generation has started. This matters most for interactive applications like chatbots or text editing, where users watch the response appear.
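If you want to measure generation speed yourself, the idea is simply to count tokens and divide by elapsed time. Here is a minimal sketch; `fake_generator` is a hypothetical stand-in for whatever streaming interface your inference tool exposes.

```python
import time


def tokens_per_second(token_stream):
    """Consume an iterable of tokens and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))


def fake_generator(n_tokens, delay_s=0.001):
    """Hypothetical stand-in for a model that streams tokens one at a time."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # pretend each token takes a moment to compute
        yield f"tok{i}"


count, tps = tokens_per_second(fake_generator(50))
print(f"{count} tokens at {tps:.1f} tokens/s")
```

Measure over at least a few hundred tokens on real hardware, since short runs are dominated by startup costs.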

The Efficiency of Processing

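Prompt processing is the step that happens before generation: the model reads every token of your input and builds the internal state it needs to start answering. Because all prompt tokens are known up front, the GPU can work on them in parallel, so this phase is usually much faster per token than generation. Long prompts still add a noticeable delay before the first output token appears.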
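Taken together, the time to answer a request is roughly the time to read the prompt plus the time to write the reply. The sketch below turns that into arithmetic. The generation speed comes from the table above, while the prompt speed is a hypothetical placeholder that you would replace with your own measurement.

```python
def response_time_s(prompt_tokens, output_tokens, prompt_tps, generation_tps):
    """Rough end-to-end latency: read the prompt, then generate the reply."""
    return prompt_tokens / prompt_tps + output_tokens / generation_tps


GENERATION_TPS = 58.59  # Llama 3 8B at 4-bit quantization, from the table above
PROMPT_TPS = 2000.0     # hypothetical placeholder; measure on your own system

estimate = response_time_s(
    prompt_tokens=1000,
    output_tokens=200,
    prompt_tps=PROMPT_TPS,
    generation_tps=GENERATION_TPS,
)
print(f"estimated response time: {estimate:.2f} s")
```

With numbers like these, generation dominates the total, which is why tokens per second is the figure most benchmarks lead with.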

Boosting Performance with Advanced Techniques

Now, let's unlock even more power with these advanced techniques:

1. Fine-Tuning: Tailoring LLMs for Specific Tasks

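Fine-tuning is like training your LLM to become a specialist in a particular field. By training it further on data from your use case, you can significantly improve its accuracy in that area. Fine-tuning does not make the model generate tokens any faster, but a well-tuned 8B model can sometimes stand in for a larger general-purpose model that would not fit on this GPU.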

Example: Training Llama 3 8B for Medical Dialogue

Imagine you're building a chatbot for medical consultations. You can fine-tune a Llama 3 8B model with a dataset of medical dialogues to make it more accurate and knowledgeable about health-related topics.
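Most of the work in a project like this is preparing the data. As a rough sketch, the snippet below converts question-and-answer pairs into JSON Lines, one training example per line. The `messages` layout with `user` and `assistant` roles is a common convention but an assumption here, so check what your fine-tuning tool expects. The sample dialogues are invented.

```python
import json


def to_training_record(patient_text, clinician_text):
    """Wrap one exchange in a chat-style record (field names are assumptions)."""
    return {
        "messages": [
            {"role": "user", "content": patient_text},
            {"role": "assistant", "content": clinician_text},
        ]
    }


# Invented examples, for illustration only.
dialogues = [
    ("I've had a headache for three days.",
     "How severe is it, and have you taken anything for it?"),
    ("My knee hurts when I climb stairs.",
     "Did the pain start after an injury or come on gradually?"),
]

jsonl = "\n".join(json.dumps(to_training_record(p, c)) for p, c in dialogues)
print(jsonl)
```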

2. Batch Inference: Processing in Bulk for Speed

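Imagine a high-speed train that carries many passengers at once. Batch inference works in a similar way, processing multiple requests together in a single pass through the model. Each individual request may take slightly longer, but total throughput rises because the GPU reads the model's weights once for the whole batch instead of once per request.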
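The grouping step itself is simple. Here is a minimal sketch that splits a list of prompts into fixed-size batches; `run_model_on_batch` is a hypothetical stand-in for a real batched model call.

```python
def make_batches(prompts, batch_size):
    """Split a list of prompts into consecutive batches of at most batch_size."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [prompts[i:i + batch_size] for i in range(0, len(prompts), batch_size)]


def run_model_on_batch(batch):
    """Hypothetical stand-in for one batched forward pass through the model."""
    return [f"response to: {prompt}" for prompt in batch]


prompts = [f"question {i}" for i in range(7)]
batches = make_batches(prompts, batch_size=3)
results = [output for batch in batches for output in run_model_on_batch(batch)]

print([len(batch) for batch in batches])
print(results[0])
```

Larger batches improve throughput until the GPU runs out of memory or compute, so the right batch size is something to find by experiment.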

3. Early Exit: Saving Time and Resources

Imagine starting a long journey, but realizing halfway through that you've reached your destination. Early exit lets your LLM stop computing before its final layer when an intermediate layer already produces a confident prediction, saving time and resources. This technique works best on easy inputs, and it requires a model and runtime that were built to support it.
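The control flow behind early exit can be sketched in a few lines. This is a toy illustration and not how any particular model implements it: the "layers" are plain functions and the confidence score is invented.

```python
def run_with_early_exit(layers, x, confidence_of, threshold=0.9):
    """Apply layers in order and stop once confidence reaches the threshold."""
    layers_used = 0
    for layer in layers:
        x = layer(x)
        layers_used += 1
        if confidence_of(x) >= threshold:
            break  # confident enough: skip the remaining layers
    return x, layers_used


# Toy stand-ins: each "layer" moves the state halfway toward 1.0,
# and the state itself is treated as the confidence score.
layers = [lambda v: v + (1.0 - v) * 0.5 for _ in range(12)]
state, layers_used = run_with_early_exit(layers, 0.0, confidence_of=lambda v: v)

print(f"stopped after {layers_used} of {len(layers)} layers, state = {state}")
```

In this toy run the loop stops well before the last layer. In a real model the savings depend on how often intermediate layers are confident enough.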

4. Caching: Remembering for Faster Access

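Caching is like having a handy cheat sheet for frequently used information. For LLMs, the most important cache is the key-value (KV) cache, which stores attention data for tokens the model has already processed so they are not recomputed for every new token. You can also cache shared prompt prefixes or whole responses to repeated requests.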
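The simplest form of caching to add yourself is at the response level: if the same prompt arrives twice, return the stored answer instead of running the model again. Here is a minimal sketch using Python's standard `functools.lru_cache`; `expensive_generate` is a hypothetical stand-in for a slow model call. Caches inside the inference engine work at a lower level and are not shown here.

```python
from functools import lru_cache

model_calls = {"count": 0}


def expensive_generate(prompt):
    """Hypothetical stand-in for a slow model call."""
    model_calls["count"] += 1
    return prompt.upper()


@lru_cache(maxsize=128)
def cached_generate(prompt):
    """Return a stored response when the same prompt has been seen before."""
    return expensive_generate(prompt)


for prompt in ["hello", "world", "hello", "hello"]:
    cached_generate(prompt)

info = cached_generate.cache_info()
print(f"model calls: {model_calls['count']}, "
      f"cache hits: {info.hits}, misses: {info.misses}")
```

This only helps when prompts repeat exactly and when returning an identical answer is acceptable, which rules it out for sampling-based creative tasks.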

5. Multi-GPU Configuration: Harnessing Parallel Power

Imagine having multiple chefs working together to prepare a feast. Multi-GPU configuration does the same, splitting the model's layers or computations across several GPUs so they share the work. This is a powerful approach for large models: splitting layers across several cards is one way to run a model such as Llama 3 70B that does not fit in a single 20 GB GPU.
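One common strategy is to give each GPU a share of the model's layers in proportion to its memory. The sketch below computes such a split. It is a planning aid under simple assumptions (equal-sized layers, no overhead), not the algorithm of any specific tool, and the layer counts in the examples are illustrative.

```python
def split_layers(n_layers, vram_gb_per_gpu):
    """Assign layers to GPUs in proportion to each GPU's memory."""
    total_vram = sum(vram_gb_per_gpu)
    counts = [int(n_layers * vram / total_vram) for vram in vram_gb_per_gpu]
    # Rounding down can leave a few layers unassigned; hand them out
    # one at a time, starting with the GPUs that have the most memory.
    remainder = n_layers - sum(counts)
    by_vram = sorted(range(len(counts)),
                     key=lambda i: vram_gb_per_gpu[i], reverse=True)
    for i in by_vram[:remainder]:
        counts[i] += 1
    return counts


print(split_layers(80, [20, 20]))      # two identical 20 GB cards
print(split_layers(80, [20, 20, 20]))  # three identical 20 GB cards
print(split_layers(32, [20, 10]))      # mixed memory sizes
```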

6. Using the Right Software Tools: The Power of Optimization

The inference software you choose matters as much as the hardware. Several specialized tools exist for optimizing LLM inference, and they differ in which model formats, quantization types, and GPUs they support.

Example: llama.cpp and Its Benefits

The llama.cpp library has gained popularity for its efficiency in running LLMs locally. It runs models in the GGUF format, including the 4-bit and F16 variants discussed above, and it can offload model layers to the RTX 4000 Ada 20GB for GPU-accelerated inference.
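As a starting point, the sketch below assembles a command line for llama.cpp's `llama-cli` program and prints it without running it. The flags shown (`-m`, `-p`, `-n`, `-ngl`, `-c`) are the ones I'd expect from recent llama.cpp builds, but check `llama-cli --help` for your version. The model path is a hypothetical placeholder, and the helper function is made up for this example.

```python
import shlex


def build_llama_cli_command(model_path, prompt, n_predict=128,
                            n_gpu_layers=99, ctx_size=4096):
    """Assemble a llama-cli invocation as an argument list (does not run it)."""
    return [
        "llama-cli",
        "-m", model_path,           # GGUF model file
        "-p", prompt,               # prompt text
        "-n", str(n_predict),       # number of tokens to generate
        "-ngl", str(n_gpu_layers),  # layers to offload to the GPU
        "-c", str(ctx_size),        # context window size in tokens
    ]


# Hypothetical model path; point this at your own GGUF file.
command = build_llama_cli_command(
    "models/llama-3-8b.Q4_K_M.gguf",
    "Explain quantization in one sentence.",
)
print(shlex.join(command))
```

Setting the GPU layer count higher than the model's actual layer count is a common way to request full offload, which is what you want when the whole model fits in 20 GB.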

Conclusion

The NVIDIA RTX 4000 Ada 20GB is a powerful tool for unlocking the potential of local LLMs. By understanding the principles of quantization, model size selection, and employing advanced optimization techniques, you can significantly enhance your LLM inference performance. Remember, it's all about finding the right balance between speed, efficiency, and the complexity of your tasks. With the right knowledge and tools, you can harness the power of your RTX 4000 Ada 20GB to build amazing things with local LLMs!

FAQ

Q: What are the limitations of running LLMs locally?

A: Running LLMs locally can be challenging due to the massive memory requirements and computational demands. However, with the right hardware and optimization techniques, it's possible to achieve impressive performance even with large models.

Q: Do I need a powerful GPU to run LLMs locally?

A: While a dedicated GPU significantly enhances performance, you can also run smaller models locally with a powerful CPU. Large models like Llama 3 70B, however, need dedicated GPU hardware with more memory than a single 20 GB card offers, which usually means several GPUs.

Q: What are the potential benefits of running LLMs locally?

A: Running an LLM locally provides increased control and security over your data, faster response times for real-time applications, and the ability to run the model in offline environments.

Keywords

NVIDIA RTX 4000 Ada 20GB, LLM, Llama 3, quantization, token generation, processing, fine-tuning, batch inference, early exit, caching, multi-GPU, llama.cpp, GPU, local, inference.