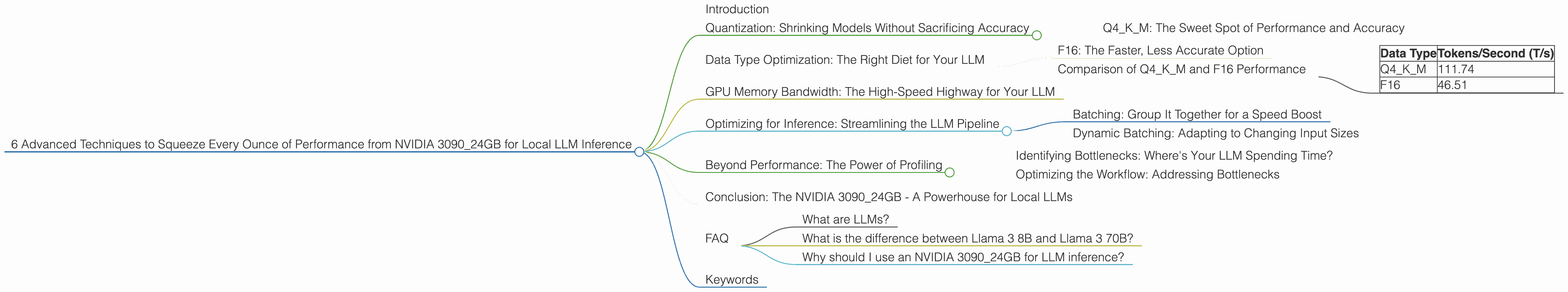

6 Advanced Techniques to Squeeze Every Ounce of Performance from NVIDIA 3090 24GB

Introduction

The world of large language models (LLMs) is exploding, offering incredible capabilities for natural language processing and generation. But running these models locally can be a resource-intensive endeavor. That's where the NVIDIA 3090_24GB comes in, a powerhouse of a graphics card whose 24 GB of memory can hold many popular open models. This article will delve into six advanced techniques to maximize the performance of your NVIDIA 3090_24GB for local LLM inference, unleashing the full potential of these models on your desktop.

Quantization: Shrinking Models with Minimal Accuracy Loss

Think of quantization as a diet for your LLM. It compresses the model's size without sacrificing too much accuracy. You see, LLM weights are usually stored as 16-bit or 32-bit floating-point numbers (FP16 or FP32), which are like the heavy, calorie-laden meals of the LLM world. Quantization transforms these numbers into smaller, more efficient representations, like switching to a lighter, healthy diet.

Q4_K_M: The Sweet Spot of Performance and Accuracy

The NVIDIA 3090_24GB thrives with Q4_K_M quantization. "Q4" signifies 4-bit quantization, which is like reducing the number of calories in each food item. In the llama.cpp naming scheme, "K" marks the k-quant family, which quantizes weights in small blocks that each carry their own scale, and "M" is the medium variant (between S and L), which keeps some of the more sensitive tensors at higher precision. This technique strikes a balance between performance and accuracy, letting you run models like Llama 3 8B comfortably within 24 GB while maintaining reasonable accuracy.
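To make the idea of block-wise 4-bit quantization concrete, here is a minimal sketch in plain Python. It is a deliberately simplified illustration: the function names are made up for this example, and the real k-quant formats use a more elaborate layout (nested blocks, quantized scales) than this single scale-and-minimum scheme.

```python
from typing import List, Tuple


def quantize_block_4bit(weights: List[float]) -> Tuple[float, float, List[int]]:
    """Map one block of weights to 4-bit codes (0..15) plus a scale and a minimum."""
    lo, hi = min(weights), max(weights)
    # 4 bits give 16 levels, so there are 15 steps between lo and hi.
    scale = (hi - lo) / 15 if hi > lo else 1.0
    codes = [round((w - lo) / scale) for w in weights]
    return scale, lo, codes


def dequantize_block_4bit(scale: float, lo: float, codes: List[int]) -> List[float]:
    """Reconstruct approximate weights from the 4-bit codes."""
    return [lo + scale * c for c in codes]


# Illustrative values only, not real model weights.
block = [0.12, -0.53, 0.98, 0.07, -0.91, 0.44, 0.0, 0.31]
scale, lo, codes = quantize_block_4bit(block)
restored = dequantize_block_4bit(scale, lo, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"max reconstruction error: {max_error:.4f} (step size {scale:.4f})")
```

Each weight now costs 4 bits plus a small per-block overhead for the scale and minimum, and the reconstruction error is bounded by half a quantization step. Smaller blocks mean tighter ranges and less error, at the cost of storing more scales.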

Data Type Optimization: The Right Diet for Your LLM

Just like different foods have different nutritional values, different data types within the LLM world impact performance.

F16: Faster Than FP32, Slightly Less Precise

F16, or 16-bit floating-point, is another data type used in LLMs. It's like a low-calorie, slightly less nutritious food option compared to FP32. It halves the memory needed per weight and is faster than FP32, though the reduced precision can sometimes lead to a small drop in accuracy.
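If you want to see what 16-bit precision does to individual numbers, the following sketch rounds a few values to half precision and back using Python's standard `struct` module (format code `e`). The sample values are arbitrary and only meant to show how the rounding error grows with magnitude.

```python
import struct


def to_fp16_and_back(x: float) -> float:
    """Round a Python float to IEEE 754 half precision and convert it back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


for value in (0.1, 1.0, 1234.567):
    rounded = to_fp16_and_back(value)
    print(f"{value!r} -> {rounded!r} (abs error {abs(value - rounded):.6g})")
```

Values near zero keep several significant digits, while larger values lose their fractional part entirely. Model weights are mostly small numbers, which is part of why F16 tends to work well in practice.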

Comparison of Q4_K_M and F16 Performance

Let's compare these data types using the Llama 3 8B model on the NVIDIA 3090_24GB. We'll use the benchmark of tokens per second (T/s) to measure performance.

| Data Type | Tokens/Second (T/s) |
|---|---|
| Q4_K_M | 111.74 |
| F16 | 46.51 |

As you can see, Q4_K_M significantly outperforms F16, achieving about 2.4x the speed (111.74 / 46.51). However, it's important to remember which side pays for that: Q4_K_M gives up a little accuracy in exchange for its speed and much smaller memory footprint, while F16 keeps the weights at higher precision. The best data type depends on the specific needs of your application and the acceptable trade-off between speed and accuracy.
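The arithmetic behind that comparison is easy to reproduce. The short sketch below uses the two throughput figures from the table and translates them into something more tangible: how long you would wait for a reply. The 500-token reply length is an arbitrary assumption for illustration.

```python
# Throughput figures copied from the table above (tokens per second).
q4_k_m_tps = 111.74
f16_tps = 46.51

speedup = q4_k_m_tps / f16_tps
print(f"Q4_K_M is {speedup:.2f}x as fast as F16")

# Assumed reply length, chosen only to make the difference tangible.
reply_tokens = 500
for name, tps in (("Q4_K_M", q4_k_m_tps), ("F16", f16_tps)):
    print(f"{name}: {reply_tokens / tps:.1f} s to generate {reply_tokens} tokens")
```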

GPU Memory Bandwidth: The High-Speed Highway for Your LLM

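Think of GPU memory bandwidth as the highway your LLM uses to transport its data. A wider highway (higher bandwidth) means faster data transfer, leading to quicker processing. The NVIDIA 3090_24GB offers an impressive bandwidth of 936 GB/s. This matters because generating each token requires reading essentially all of the model's weights from memory, so bandwidth often limits generation speed more than raw compute does. It also explains why smaller, quantized weights translate so directly into more tokens per second.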
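A rough back-of-the-envelope calculation shows how bandwidth caps generation speed. The sketch below assumes that every generated token reads each weight from memory exactly once, that Llama 3 8B has about 8 billion weights, and that Q4_K_M averages roughly 4.85 bits per weight. All three are simplifying assumptions, so treat the results as ballpark ceilings rather than predictions.

```python
BANDWIDTH_BYTES_PER_S = 936e9  # 936 GB/s, the figure quoted above
PARAM_COUNT = 8.0e9            # assumption: about 8 billion weights in Llama 3 8B

# Assumed average storage cost per weight. The Q4_K_M value is an
# approximation; the real figure varies slightly from model to model.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Measured throughput from the benchmark table earlier in the article.
MEASURED_TPS = {"F16": 46.51, "Q4_K_M": 111.74}


def bandwidth_ceiling_tps(bits_per_weight: float) -> float:
    """Upper bound on tokens/s if every token reads all weights exactly once."""
    model_bytes = PARAM_COUNT * bits_per_weight / 8
    return BANDWIDTH_BYTES_PER_S / model_bytes


for name, bits in BITS_PER_WEIGHT.items():
    ceiling = bandwidth_ceiling_tps(bits)
    share = MEASURED_TPS[name] / ceiling
    print(f"{name}: ceiling {ceiling:.1f} T/s, "
          f"measured {MEASURED_TPS[name]} T/s ({share:.0%} of ceiling)")
```

Under these assumptions both measured figures sit below their ceilings, as they should, and the gap hints at how much time goes to work other than streaming weights.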

Optimizing for Inference: Streamlining the LLM Pipeline

This section focuses on optimizing the inference process to squeeze every ounce of performance from your NVIDIA 3090_24GB.

Batching: Group It Together for a Speed Boost

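Batching is like carpooling for LLMs. Instead of processing inputs individually, you group them into batches, similar to passengers in a car. The model's weights are then read from memory once per batch rather than once per input, which reduces overhead and raises total throughput. The trade-off is that an individual request may wait slightly longer for its batch to fill.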
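As a minimal sketch of the carpooling idea, the helper below splits a list of prompts into fixed-size groups. The function name is made up for this example, and the step that actually sends each batch to a model is left out, since it depends on the inference library you use.

```python
from typing import Iterator, List, Sequence


def fixed_batches(prompts: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive groups of at most batch_size prompts."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(prompts), batch_size):
        yield list(prompts[start:start + batch_size])


prompts = ["Summarize this.", "Translate that.", "Explain GPUs.",
           "Write a haiku.", "Fix my code."]
for i, batch in enumerate(fixed_batches(prompts, batch_size=2)):
    # In a real pipeline, each batch would go to the model in one call.
    print(f"batch {i}: {batch}")
```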

Dynamic Batching: Adapting to Changing Input Sizes

Dynamic batching takes batching a step further. Instead of using a fixed batch size, it adjusts the batch size to the inputs at hand: many short prompts can share one batch, while long prompts travel in smaller groups. This keeps the GPU busy without overrunning its memory, leading to faster processing times.
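One simple way to picture dynamic batching is to pack prompts against a token budget instead of a fixed count. The sketch below is one possible interpretation, not how any particular serving framework does it. The names are hypothetical, and token counts are approximated by splitting on whitespace, which a real tokenizer would replace.

```python
from typing import List, Sequence


def approx_tokens(prompt: str) -> int:
    """Crude token estimate (assumption): one token per whitespace-separated word."""
    return max(1, len(prompt.split()))


def dynamic_batches(prompts: Sequence[str], token_budget: int) -> List[List[str]]:
    """Greedily pack prompts so that batch_size * longest_prompt stays within budget."""
    batches: List[List[str]] = []
    current: List[str] = []
    longest = 0
    # Sorting by length groups similar prompts and reduces wasted padding.
    for prompt in sorted(prompts, key=approx_tokens):
        n = approx_tokens(prompt)
        new_longest = max(longest, n)
        # Padded cost: every prompt in a batch is padded to the longest one.
        if current and new_longest * (len(current) + 1) > token_budget:
            batches.append(current)
            current, new_longest = [], n
        current.append(prompt)
        longest = new_longest
    if current:
        batches.append(current)
    return batches


prompts = ["hi", "a b c", "one two three four five six", "x y"]
for batch in dynamic_batches(prompts, token_budget=6):
    print(len(batch), batch)
```

Short prompts end up sharing a batch, while the long one travels alone. A prompt that exceeds the budget by itself still gets its own batch rather than being dropped.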

Beyond Performance: The Power of Profiling

Profiling is like a performance detective, providing insights into where your LLM is spending its time. It helps you identify bottlenecks and areas for improvement.

Identifying Bottlenecks: Where's Your LLM Spending Time?

Profiling tools can reveal which parts of the inference process are taking the longest, highlighting areas for optimization. For example, you might find that the model's forward pass is taking a significant amount of time, indicating a potential need for optimization in that area.
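Before reaching for a full GPU profiler, a few timers around each stage can already tell you a lot. The sketch below uses only the Python standard library. The three stage functions are hypothetical stand-ins (the "forward pass" is simulated with a short sleep), so swap in your own pipeline calls.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

timings = defaultdict(float)


@contextmanager
def timed(stage: str):
    """Accumulate wall-clock time spent inside the with-block under a stage name."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] += time.perf_counter() - start


# Hypothetical stand-ins for the stages of a real inference pipeline.
def tokenize(text):
    return text.split()


def forward_pass(tokens):
    time.sleep(0.02)  # simulated model work
    return [len(t) for t in tokens]


def detokenize(ids):
    return " ".join(str(i) for i in ids)


with timed("tokenize"):
    tokens = tokenize("profiling shows where the time goes")
with timed("forward_pass"):
    ids = forward_pass(tokens)
with timed("detokenize"):
    text = detokenize(ids)

# Print stages from slowest to fastest.
for stage, seconds in sorted(timings.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{stage:>12}: {seconds * 1000:.2f} ms")
```

One caveat for real GPU work: kernels are typically launched asynchronously, so wall-clock timers like these only give meaningful numbers if the GPU is synchronized before each timer stops.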

Optimizing the Workflow: Addressing Bottlenecks

By pinpointing bottlenecks through profiling, you can focus your optimization efforts on specific areas, maximizing the impact of your changes.

Conclusion: The NVIDIA 3090_24GB - A Powerhouse for Local LLMs

The NVIDIA 3090_24GB offers a powerful platform for local LLM inference. By employing advanced techniques such as quantization, data type optimization, batching, and profiling, you can unlock the full potential of this graphics card, enabling you to run demanding LLMs like Llama 3 8B with impressive speed and accuracy.

FAQ

What are LLMs?

LLMs are a type of deep learning model that can understand and generate human-like text. They are trained on vast amounts of text data and have achieved remarkable success in various natural language processing tasks.

What is the difference between Llama 3 8B and Llama 3 70B?

The numbers "8B" and "70B" refer to the number of parameters in the LLM. A larger parameter count indicates a bigger, more complex model, capable of handling more complex tasks. Llama 3 70B is a more powerful LLM than Llama 3 8B, but it also requires more computational resources.

Why should I use an NVIDIA 3090_24GB for LLM inference?

The NVIDIA 3090_24GB is a powerful graphics card with high memory bandwidth, allowing it to handle the massive amounts of data involved in LLM inference with speed. This makes it an ideal choice for local LLM inference, enabling you to run these demanding models without relying on cloud resources.

Keywords

LLM, NVIDIA 3090_24GB, performance, inference, quantization, Q4_K_M, F16, tokens per second, batching, dynamic batching, profiling, Llama 3 8B, Llama 3 70B, GPU, GPU memory bandwidth, deep learning, natural language processing, local inference.