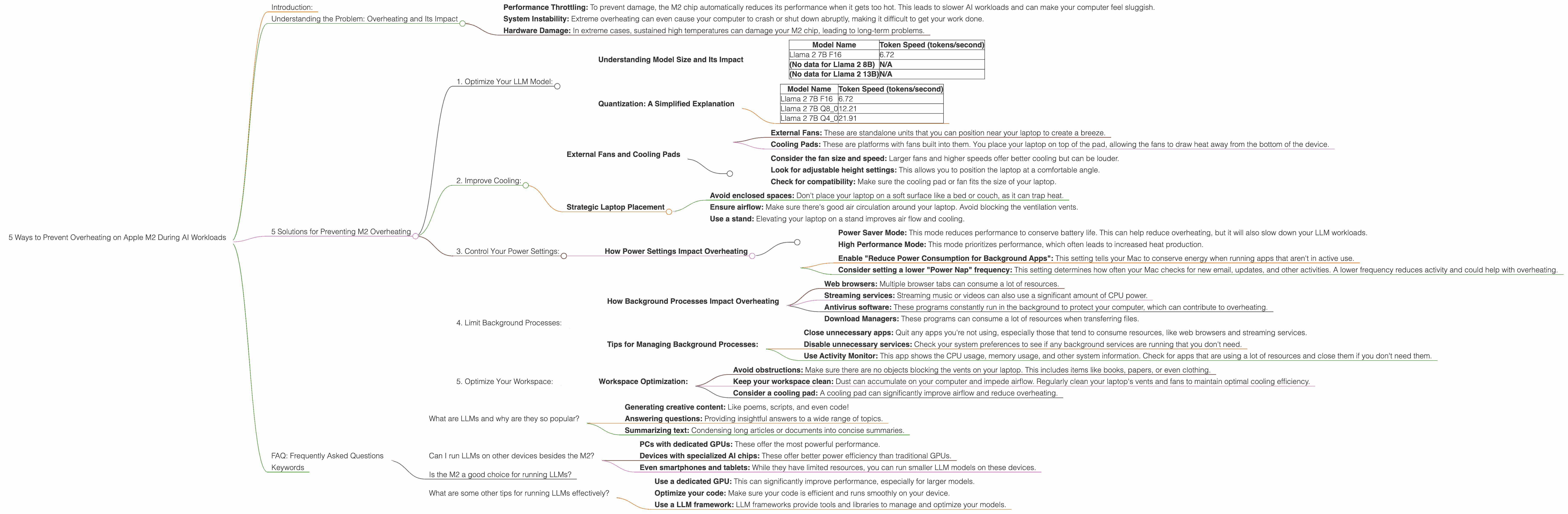

5 Ways to Prevent Overheating on Apple M2 During AI Workloads

Introduction:

You love running large language models (LLMs) on your Apple M2. You're impressed by the speed, power, and creative potential of these models. However, you've encountered a common issue: overheating. The M2 chip, while powerful, can heat up significantly when performing complex AI tasks. This can lead to performance throttling and even system instability.

But don't worry, you're not alone. This article will guide you on 5 simple ways to keep your M2 cool and your AI workloads running smoothly.

Understanding the Problem: Overheating and Its Impact

Think of the M2 chip as a high-performance engine: it's designed to deliver power, but it also produces heat. When you run AI workloads, the M2 chip works overtime, pushing its processing capabilities to the limit. This intense activity generates substantial heat.

Overheating can lead to a few issues:

- Performance Throttling: To prevent damage, the M2 chip automatically reduces its performance when it gets too hot. This leads to slower AI workloads and can make your computer feel sluggish.

- System Instability: Extreme overheating can even cause your computer to crash or shut down abruptly, making it difficult to get your work done.

- Hardware Damage: In extreme cases, sustained high temperatures can damage your M2 chip, leading to long-term problems.
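One practical way to spot throttling is to watch whether token speed falls off during a long run. Here is a minimal sketch of that idea, assuming you log tokens per second at regular intervals yourself. The function name `throughput_drop` and the sample readings are hypothetical, not measurements from this article.

```python
from statistics import mean


def throughput_drop(tokens_per_second, window=3):
    """Return the fractional drop between the first and last `window` samples.

    0.0 means no slowdown; 0.25 means the run ended 25% slower than it began.
    """
    if len(tokens_per_second) < 2 * window:
        raise ValueError("need at least 2 * window samples")
    early = mean(tokens_per_second[:window])
    late = mean(tokens_per_second[-window:])
    return (early - late) / early


# Hypothetical readings, one per minute, during a long generation run.
samples = [21.9, 21.8, 21.9, 20.1, 17.5, 16.2, 16.0, 15.9]
drop = throughput_drop(samples)
print(f"throughput fell by {drop:.0%} over the run")
```

A steady decline like this while the workload stays the same is a hint, not proof, that the chip is reducing its speed to manage heat.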

5 Solutions for Preventing M2 Overheating

Let's dive into 5 common strategies to combat overheating:

1. Optimize Your LLM Model:

The first and most important step is to optimize the LLM you're using. This means choosing a model size and quantization level that balance performance against resource consumption.

Understanding Model Size and Its Impact

Model size directly impacts both performance and overheating. Larger models require more computational power and memory, leading to increased heat generation. However, larger models often deliver more sophisticated results. The table below lists the token speeds we have for Llama 2 on the M2; only the 7B model has measured data so far:

| Model Name | Token Speed (tokens/second) |
|---|---|
| Llama 2 7B F16 | 6.72 |
| (No data for Llama 2 8B) | N/A |
| (No data for Llama 2 13B) | N/A |

- Note: The Llama 2 7B model is a good starting point for balancing performance and resources on the M2. Larger models like 8B or 13B might put more strain on your chip and lead to more overheating.
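To see why larger models are harder on the M2, it helps to estimate how much memory the weights alone need. The sketch below is back-of-the-envelope arithmetic, assuming nominal parameter counts (7 and 13 billion) and ignoring everything besides the weights, such as the context cache and the runtime's own overhead. The helper name `weights_gb` is made up for this example.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate storage for the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


# Nominal sizes only; real checkpoints differ slightly from the round numbers.
for params in (7, 13):
    for label, bits in (("F16", 16), ("8-bit", 8), ("4-bit", 4)):
        print(f"{params}B at {label}: about {weights_gb(params, bits):.1f} GB")
```

A 7B model at F16 needs about 14 GB for its weights by this estimate, which is already more than the memory on an entry-level M2 configuration. That is one reason smaller or quantized models are the more comfortable fit.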

Quantization: A Simplified Explanation

Quantization is a technique that reduces the memory footprint and computational requirements of a model. Imagine it like compressing a video file; you reduce its size without compromising too much on quality.

Here's how quantization applies to LLMs:

- F16 (Half-precision Floating Point): The standard for many LLMs. It provides a good balance between accuracy and performance.

- Q8 (8-bit Quantization): This uses fewer bits to represent data, leading to smaller models and faster processing speeds. However, it might slightly impact accuracy.

- Q4 (4-bit Quantization): This reduces the model size even further and is among the lowest bit widths in common use. It can significantly increase performance, but might lead to a greater loss of accuracy.
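If you'd like to see the accuracy trade-off in action, here is a simplified sketch of symmetric quantization in plain Python. It is an illustration only: it uses one scale for the whole list, whereas real formats such as Q8_0 and Q4_0 quantize weights in small blocks, each with its own scale. The weights are made-up numbers.

```python
def quantize(values, bits):
    """Map floats to signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1                    # 127 for 8-bit, 7 for 4-bit
    scale = max(abs(v) for v in values) / qmax
    if scale == 0:                                # all-zero input: any scale works
        scale = 1.0
    return [round(v / scale) for v in values], scale


def dequantize(ints, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in ints]


weights = [0.12, -0.53, 0.97, -0.08, 0.41]        # made-up example weights
for bits in (8, 4):
    ints, scale = quantize(weights, bits)
    restored = dequantize(ints, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: stored={ints} worst error={worst:.4f}")
```

The 4-bit version stores each weight in half the space of the 8-bit version, but its worst-case rounding error is noticeably larger. That is the size-versus-accuracy trade described above.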

Our data shows how different quantization levels affect token speed on an M2:

| Model Name | Token Speed (tokens/second) |
|---|---|
| Llama 2 7B F16 | 6.72 |
| Llama 2 7B Q8_0 | 12.21 |
| Llama 2 7B Q4_0 | 21.91 |

- Note: As you can see, Q8_0 and Q4_0 quantization significantly improve token speed, to roughly 1.8x and 3.3x the F16 rate, respectively. However, it's important to consider whether the potential loss of accuracy is acceptable for your tasks.
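To turn the table into something you can feel, the short sketch below computes each format's speedup over F16 and how long a 500-token reply would take. The speeds come from the table above. The 500-token reply length is an arbitrary choice for illustration.

```python
# Tokens per second for Llama 2 7B on the M2, taken from the table above.
speeds = {"F16": 6.72, "Q8_0": 12.21, "Q4_0": 21.91}
baseline = speeds["F16"]


def speedup(name):
    """How many times faster than F16 a given format generates tokens."""
    return speeds[name] / baseline


def seconds_for(tokens, name):
    """Time to generate `tokens` tokens at the measured rate."""
    return tokens / speeds[name]


for name in speeds:
    print(f"{name}: {speedup(name):.2f}x F16, "
          f"500 tokens in about {seconds_for(500, name):.0f} s")
```

A shorter run also means less time spent at full load, although the data here measures speed only, not temperature.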

Tips for Choosing the Right Model and Quantization:

- Start with a smaller model: If you're not sure where to start, try the Llama 2 7B model. It's generally good enough for many tasks and won't overload your M2.

- Experiment with different quantization levels: Try Q8_0 or Q4_0 to see if it improves performance without affecting accuracy too much.

- Consider your use case: If you need the highest accuracy (e.g., for scientific research), stick with F16. If you need to generate text quickly (e.g., for a chatbot), experiment with Q8_0 or Q4_0.

2. Improve Cooling:

If optimizing model parameters doesn't solve your overheating problem, you can improve your computer’s cooling system. This involves using external fans, cooling pads, or strategically placing your laptop.

External Fans and Cooling Pads

External fans and cooling pads are popular choices for cooling laptops. They circulate air around the device and help dissipate heat.

- External Fans: These are standalone units that you can position near your laptop to create a breeze.

- Cooling Pads: These are platforms with fans built into them. You place your laptop on top of the pad, allowing the fans to draw heat away from the bottom of the device.

Tips for Choosing External Cooling Solutions:

- Consider the fan size and speed: Larger fans and higher speeds offer better cooling but can be louder.

- Look for adjustable height settings: This allows you to position the laptop at a comfortable angle.

- Check for compatibility: Make sure the cooling pad or fan fits the size of your laptop.

Strategic Laptop Placement

Even simple changes to your laptop's environment can help with cooling:

- Avoid soft surfaces and enclosed spaces: Don't place your laptop on a bed or couch, or run it inside a bag or drawer, as these trap heat.

- Ensure airflow: Make sure there's good air circulation around your laptop. Avoid blocking the vents on models that have them.

- Use a stand: Elevating your laptop on a stand improves airflow and cooling.

3. Control Your Power Settings:

Your Mac's power settings can influence how aggressively the M2 chip manages its power draw. Carefully adjusting these settings can help reduce overheating, although you might have to sacrifice some performance.

How Power Settings Impact Overheating

- Low Power Mode: This mode reduces energy use, and with it performance, to conserve battery life. This can help reduce overheating, but it will also slow down your LLM workloads.

- High Power Mode: This mode prioritizes sustained performance, which often leads to increased heat production. Apple offers it only on certain MacBook Pro models, so it may not appear on your M2 machine.

Tips for Power Settings:

- Reduce the energy used by background apps: Low Power Mode lowers energy use across the system, including apps that aren't in active use. Turn it on when keeping the chip cool matters more than speed.

- Don't count on Power Nap for cooling: Power Nap governs how your Mac checks for new email, updates, and other activity while it sleeps, so it has little effect on heat during an active LLM run.

Note: Experiment with these settings to find a balance between performance and cooling. You may need to adjust these settings depending on the specific task you are performing.
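If you switch power modes often, you may want a script that tells you which one is active before you start a long run. The sketch below parses the text printed by the macOS `pmset -g` command. It assumes that output contains a `lowpowermode` line on your macOS version, which may not hold on every machine, and the sample text is illustrative rather than captured from a real Mac.

```python
import subprocess


def parse_pmset(text):
    """Turn 'key value' lines from pmset-style output into a dict of strings."""
    settings = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) >= 2:
            settings[parts[0]] = parts[1]
    return settings


def low_power_mode_enabled(text):
    """True if the output reports lowpowermode as 1 (key name is an assumption)."""
    return parse_pmset(text).get("lowpowermode") == "1"


def read_pmset():
    """Run `pmset -g` and return its output. Only works on macOS."""
    result = subprocess.run(["pmset", "-g"], capture_output=True,
                            text=True, check=True)
    return result.stdout


# Illustrative sample, not real output from any particular machine.
SAMPLE = " lowpowermode         1\n displaysleep         10\n"
print("Low Power Mode on:", low_power_mode_enabled(SAMPLE))
```

On a Mac you would call `low_power_mode_enabled(read_pmset())` instead of passing the sample text.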

4. Limit Background Processes:

Background processes can consume valuable CPU resources and contribute to overheating. Close unnecessary apps and services to free up resources for your LLM workload.

How Background Processes Impact Overheating

Background processes are apps that run silently in the background, even when you're not actively using them. These processes can put an extra strain on your M2 chip, leading to increased heat production. Common culprits include:

- Web browsers: Multiple browser tabs can consume a lot of resources.

- Streaming services: Streaming music or videos can also use a significant amount of CPU power.

- Antivirus software: These programs constantly run in the background to protect your computer, which can contribute to overheating.

- Download Managers: These programs can consume a lot of resources when transferring files.

Tips for Managing Background Processes:

- Close unnecessary apps: Quit any apps you're not using, especially those that tend to consume resources, like web browsers and streaming services.

- Disable unnecessary services: Check System Settings (System Preferences on older macOS versions) for login items and background services that you don't need.

- Use Activity Monitor: This app shows the CPU usage, memory usage, and other system information. Check for apps that are using a lot of resources and close them if you don't need them.
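If you prefer the terminal to Activity Monitor, a few lines of Python can list the heaviest CPU users before you start a run. This is a minimal sketch built on the standard `ps` command. The helper names and the sample process list are hypothetical.

```python
import subprocess


def parse_ps(text):
    """Parse 'pid cpu command' lines into (pid, cpu_percent, command) tuples."""
    rows = []
    for line in text.splitlines():
        parts = line.split(None, 2)
        if len(parts) != 3:
            continue                      # skip blank or malformed lines
        try:
            rows.append((int(parts[0]), float(parts[1]), parts[2].strip()))
        except ValueError:
            continue                      # skip lines that are not numeric
    return rows


def top_cpu(rows, n=5):
    """Return the n rows with the highest CPU percentage."""
    return sorted(rows, key=lambda row: row[1], reverse=True)[:n]


def read_ps():
    """Ask ps for every process; the '=' suffix suppresses the header row."""
    cmd = ["ps", "-A", "-o", "pid=", "-o", "pcpu=", "-o", "comm="]
    return subprocess.run(cmd, capture_output=True, text=True, check=True).stdout


# Hypothetical process list used only to demonstrate the parsing.
SAMPLE = "  101  3.2 /usr/bin/browser\n  202 85.0 /usr/bin/python3\n  303  0.0 /usr/sbin/daemon\n"
for pid, cpu, command in top_cpu(parse_ps(SAMPLE), n=2):
    print(f"{cpu:5.1f}%  pid {pid}  {command}")
```

On your own machine, replace `SAMPLE` with `read_ps()` to see live numbers, then quit anything near the top of the list that you don't need.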

5. Optimize Your Workspace:

Your workspace can also play a role in cooling. Optimizing your workspace for airflow reduces heat concentration and helps prevent overheating.

Workspace Optimization:

- Avoid obstructions: Make sure there are no objects blocking the vents on your laptop. This includes items like books, papers, or even clothing.

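- Keep your workspace clean: Dust can accumulate on your computer and impede airflow. On models with fans, such as the MacBook Pro, regularly clean the vents to maintain optimal cooling efficiency. The M2 MacBook Air is fanless, so there are no vents to clean, but keeping its case and the surface beneath it clear still helps it shed heat.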

- Consider a cooling pad: A cooling pad can significantly improve airflow and reduce overheating.

FAQ: Frequently Asked Questions

What are LLMs and why are they so popular?

LLMs, or Large Language Models, are powerful AI systems that can understand and generate human-like text. They've become popular for:

- Generating creative content: Like poems, scripts, and even code!

- Answering questions: Providing insightful answers to a wide range of topics.

- Summarizing text: Condensing long articles or documents into concise summaries.

Can I run LLMs on other devices besides the M2?

Yes, you can run LLMs on a wide array of devices, including:

- PCs with dedicated GPUs: These offer the most powerful performance.

- Devices with specialized AI chips: These offer better power efficiency than traditional GPUs.

- Even smartphones and tablets: While they have limited resources, you can run smaller LLM models on these devices.

Is the M2 a good choice for running LLMs?

The M2 family, particularly the M2 Pro and M2 Max, offers powerful performance for running LLMs. However, it's important to consider the model size you're using and the potential for overheating.

What are some other tips for running LLMs effectively?

- Use GPU acceleration: Running the model on a GPU can significantly improve performance, especially for larger models. On an M2 this means the chip's integrated GPU, since Apple silicon Macs don't support external GPUs.

- Optimize your code: Make sure your code is efficient and runs smoothly on your device.

- Use an LLM framework: LLM frameworks provide tools and libraries to manage and optimize your models.
