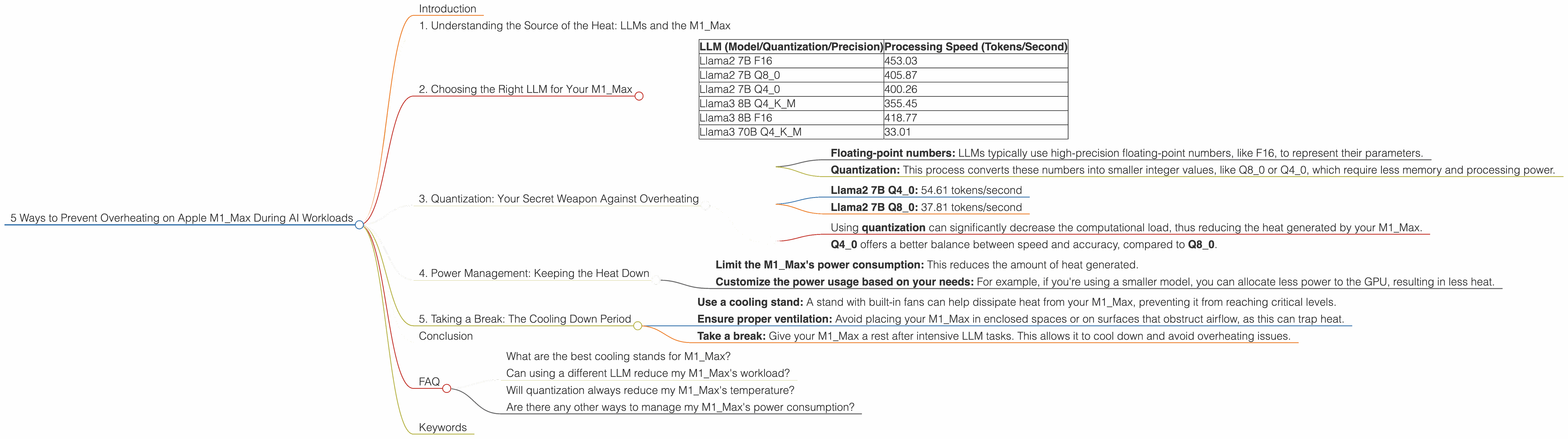

5 Ways to Prevent Overheating on Apple M1 Max During AI Workloads

Introduction

Running large language models (LLMs) on your Apple M1 Max can be a thrilling journey into the world of artificial intelligence. But picture this: you're deep in a chat with your favorite LLM, generating creative text, and suddenly your Mac starts sounding like a jet engine taking off, a sure sign that it is running hot.

This is a common concern for many M1 Max users, especially when working with computationally demanding LLMs. But fear not! This article will guide you through five effective strategies to keep your M1 Max cool and prevent it from overheating while you're unlocking the potential of LLMs.

1. Understanding the Source of the Heat: LLMs and the M1 Max

LLMs, particularly those with billions of parameters, are computationally intensive. They process vast amounts of data during generation, requiring significant processing power. On the M1 Max, most of that work runs on the GPU. Think of it like a marathon runner: the more intense the run, the more heat the body generates!

The M1 Max pairs a powerful GPU with a sophisticated thermal management system. However, sustained LLM workloads can push this system to its limits, leading to potential overheating.

2. Choosing the Right LLM for Your M1 Max

Not all LLMs are created equal. Remember, the bigger the model, the more processing power it demands. If you're working with a smaller model like Llama2 7B, you're likely to have fewer thermal issues than with a larger model like Llama3 70B.

Here's how quickly different LLMs process tokens on the M1 Max:

| LLM (Model/Quantization/Precision) | Processing Speed (Tokens/Second) |
|---|---|
| Llama2 7B F16 | 453.03 |
| Llama2 7B Q8_0 | 405.87 |
| Llama2 7B Q4_0 | 400.26 |
| Llama3 8B Q4_K_M | 355.45 |
| Llama3 8B F16 | 418.77 |
| Llama3 70B Q4_K_M | 33.01 |

Key Takeaways:

- Llama2 7B runs more than ten times faster than Llama3 70B (roughly 400 to 453 tokens/second versus 33.01 tokens/second) on the M1 Max, so the same job keeps the GPU busy for far less time, potentially generating less heat.

- F16 (half-precision floating point) gives the fastest speeds in this table, ahead of Q8_0 and Q4_0, but it also needs the most memory. The quantized formats are the ones that trade a slight loss of accuracy for a smaller model.

- Q4_K_M (a 4-bit k-quant format, medium variant) offers a good balance between model size and accuracy.
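To make the takeaways above more concrete, here is a minimal sketch that turns the speeds from the table into the time the GPU stays busy for one prompt. The 2,048-token prompt length is a hypothetical value chosen for illustration, and time under load is only a rough proxy for heat, not a temperature measurement.

```python
# Processing speeds (tokens/second) copied from the table above.
SPEEDS = {
    "Llama2 7B F16": 453.03,
    "Llama2 7B Q8_0": 405.87,
    "Llama2 7B Q4_0": 400.26,
    "Llama3 8B Q4_K_M": 355.45,
    "Llama3 8B F16": 418.77,
    "Llama3 70B Q4_K_M": 33.01,
}


def seconds_under_load(prompt_tokens: int, tokens_per_second: float) -> float:
    """Time the GPU spends processing a prompt of the given length."""
    return prompt_tokens / tokens_per_second


if __name__ == "__main__":
    prompt_tokens = 2048  # hypothetical prompt length, for illustration only
    for name, speed in SPEEDS.items():
        print(f"{name:<20} {seconds_under_load(prompt_tokens, speed):6.1f} s")
```

With these numbers, the 7B and 8B models finish the prompt in a few seconds, while the 70B model keeps the GPU working for about a minute.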

Pro Tip: If you're working with multiple LLMs, try starting with a smaller model like Llama2 7B to avoid potential thermal issues.

3. Quantization: Your Secret Weapon Against Overheating

Imagine shrinking a massive library into a smaller, more manageable space. That's essentially what quantization does for LLMs. It stores the model's parameters in fewer bits, shrinking the model without significantly impacting output quality.
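As a rough illustration of how much space quantization saves, the sketch below estimates the size of the weights for a 7B model in three formats. The bits-per-weight values for Q8_0 and Q4_0 are assumptions based on llama.cpp's block layout (32 weights plus one 16-bit scale per block); they are not figures from this article, and real model files carry some extra overhead.

```python
# Approximate bits stored per weight. F16 is 16 bits by definition; the
# Q8_0 and Q4_0 values assume llama.cpp's block layout and are assumptions.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_size_gb(n_params: float, fmt: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


if __name__ == "__main__":
    n_params = 7e9  # nominal parameter count for a "7B" model
    for fmt in BITS_PER_WEIGHT:
        print(f"7B {fmt:<5} ~{weight_size_gb(n_params, fmt):.1f} GB")
```

Less data to read from memory for every generated token is a large part of why smaller formats tend to ease the load on the machine.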

Here's how it works:

- Floating-point numbers: LLMs typically use high-precision floating-point numbers, like F16, to represent their parameters.

- Quantization: This process converts these numbers into lower-precision integer values, in formats like Q8_0 or Q4_0, which require less memory and memory bandwidth.

Example: Think of it like a video game. A high-resolution image uses lots of data to show every detail. Quantization is like lowering the resolution, reducing the data while still retaining enough detail for a good gaming experience.
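The idea can be sketched in a few lines of plain Python. This is a simplified 8-bit scheme with one shared scale per block, in the spirit of formats like Q8_0; it is not the exact algorithm any particular library uses, and the sample weights are made up.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.07]  # made-up sample weights
    scale, quants = quantize_block(weights)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(quants, f"worst-case error: {worst:.4f}")
```

Each restored value differs from the original by at most half of the scale, which is the "lower resolution" from the analogy above.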

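Results (these appear to be token generation speeds, as opposed to the processing speeds in the table above):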

- Llama2 7B Q4_0: 54.61 tokens/second

- Llama2 7B Q8_0: 37.81 tokens/second

Key Takeaways:

- Quantization can significantly decrease the memory and compute load, thus reducing the heat generated by your M1 Max.

- Q4_0 is the faster of the two formats here, while Q8_0 stays closer to the original weights. Choose based on how much accuracy you can trade for speed.

4. Power Management: Keeping the Heat Down

When you're pushing your M1 Max to its limits, you're essentially giving it a workout. Just like a marathon runner, your M1 Max needs proper power management for optimal performance.

Using a power management tool can help you:

- Limit the M1 Max's power consumption: This reduces the amount of heat generated.

- Customize the power usage based on your needs: For example, if you're using a smaller model, you can allocate less power to the GPU, resulting in less heat.

Pro Tip: Explore power management tools that support the M1 Max to control the power draw and prevent overheating.
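One built-in option is macOS's `pmset` command-line utility. The sketch below wraps it in Python and assumes your macOS version and Mac model expose the `lowpowermode` setting; check `man pmset` on your machine before relying on it. The helper names are hypothetical, and changing the setting requires administrator rights.

```python
import subprocess
import sys


def low_power_command(enable: bool) -> list[str]:
    """Build the pmset invocation (assumes the lowpowermode key is supported)."""
    return ["sudo", "pmset", "-a", "lowpowermode", "1" if enable else "0"]


def set_low_power_mode(enable: bool) -> None:
    """Run the command; only meaningful on macOS."""
    if sys.platform != "darwin":
        raise RuntimeError("pmset is only available on macOS")
    subprocess.run(low_power_command(enable), check=True)


if __name__ == "__main__":
    # Print the command instead of running it, so nothing changes by accident.
    print(" ".join(low_power_command(True)))
```

Low Power Mode trades some speed for lower power draw, so expect fewer tokens per second while it is enabled.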

5. Taking a Break: The Cooling Down Period

Imagine your M1 Max as a high-performance sports car. It's built for speed and power, but it needs regular breaks to cool down and prevent overheating.

Here are some tips for keeping your M1 Max cool:

- Use a cooling stand: A stand with built-in fans can help dissipate heat from your M1 Max, preventing it from reaching critical levels.

- Ensure proper ventilation: Avoid placing your M1 Max in enclosed spaces or on surfaces that obstruct airflow, as this can trap heat.

- Take a break: Give your M1 Max a rest after intensive LLM tasks. This allows it to cool down and avoid overheating issues.
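If you run LLM jobs from a script, the "take a break" tip can be automated. Here is a minimal sketch that pauses after each task in proportion to how long the task took. The 75% duty cycle is an arbitrary starting point rather than a measured recommendation, and the function names are hypothetical.

```python
import time
from typing import Callable, Iterable


def rest_seconds(work_seconds: float, duty_cycle: float) -> float:
    """Idle time needed so that work / (work + rest) equals duty_cycle."""
    if not 0 < duty_cycle <= 1:
        raise ValueError("duty_cycle must be in (0, 1]")
    return work_seconds * (1 - duty_cycle) / duty_cycle


def run_with_breaks(
    tasks: Iterable[Callable[[], object]], duty_cycle: float = 0.75
) -> None:
    """Run each task, then pause in proportion to how long it took."""
    for task in tasks:
        start = time.monotonic()
        task()
        time.sleep(rest_seconds(time.monotonic() - start, duty_cycle))
```

For example, a task that runs for 30 seconds at a 75% duty cycle is followed by a 10-second pause. Each entry in `tasks` would be a function that performs one generation job.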

Conclusion

The M1 Max is a powerful tool for exploring the amazing world of LLMs. But like any high-performance system, it needs careful attention to prevent overheating. By understanding the sources of heat, choosing a model that fits your machine, using quantization techniques, managing power effectively, and giving your M1 Max regular breaks, you can ensure smooth operation and enjoy a seamless AI experience. Remember, a cool M1 Max is a happy M1 Max!

FAQ

What are the best cooling stands for M1 Max?

There are many cooling stands available on the market, and the best choice will depend on your specific needs and budget. Look for stands with multiple fans, adjustable height, and compatibility with your M1 Max model. Read reviews and compare features to find the right one for you.

Can using a different LLM reduce my M1 Max's workload?

Yes! Choosing a smaller or more efficient LLM can significantly reduce the load on your M1 Max, leading to less heat generation. Consider using models like Llama2 7B instead of Llama3 70B for a smoother experience.

Will quantization always reduce my M1 Max's temperature?

While quantization generally helps, specific results might vary depending on the LLM and your chosen settings. It's best to experiment and find the optimal balance between performance and heat reduction.

Are there any other ways to manage my M1 Max's power consumption?

Yes, you can adjust power settings in macOS to optimize power usage for your M1 Max. Explore options like reducing screen brightness, disabling background tasks, and adjusting power-saving preferences.

Keywords

M1 Max, overheating, LLM, Llama2 7B, Llama3 70B, quantization, Apple, GPU, processing speed, tokens/second, thermal management, power management, cooling stand, ventilation, AI