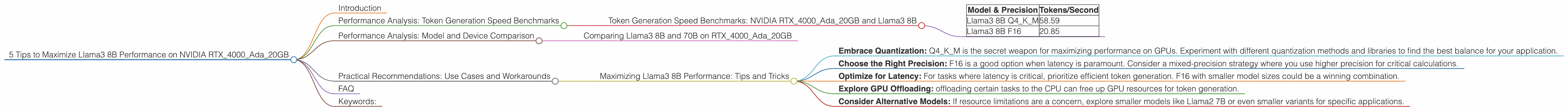

5 Tips to Maximize Llama3 8B Performance on NVIDIA RTX 4000 Ada 20GB

Introduction

You've got your hands on a powerful NVIDIA RTX 4000 Ada 20GB, and you're ready to unleash the power of Llama3 8B. But how do you squeeze every ounce of performance out of this dynamic duo? This guide dives deep into optimizing your local Large Language Model (LLM) setup, offering practical tips and insights based on real-world benchmarks. Whether you're a seasoned developer or just starting your LLM journey, these tips will help you achieve the smoothest experience possible.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

Let's dive into the heart of the matter: how fast can the NVIDIA RTX 4000 Ada 20GB generate tokens with Llama3 8B?

| Model & Precision | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M | 58.59 |
| Llama3 8B F16 | 20.85 |

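- Q4_K_M is a 4-bit "k-quant" format (medium variant) from the llama.cpp/GGUF family. It stores each weight in roughly 4 to 5 bits instead of 16, which drastically reduces the model's memory footprint and the amount of data the GPU has to read per token.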

- F16 (half-precision floating point) stores each weight in 16 bits. It uses half the storage of a standard 32-bit float, with a slight reduction in numeric precision.

Key Takeaways:

- Quantization is King: Q4_K_M generates tokens about 2.8 times faster than F16 (58.59 vs. 20.85 tokens/second), a testament to the power of quantization. It's essential for achieving optimal performance on resource-constrained devices.

- Precision Trade-off: F16 keeps the weights closer to their original values than Q4_K_M does, but it generates tokens at roughly a third of the speed. Reserve it for cases where output fidelity matters more than latency.
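
To put the table in concrete terms, here is a minimal sketch that turns the two measured rates into a speedup and a per-token latency. The 256-token reply length is an arbitrary example value chosen for illustration, not part of the benchmark.

```python
# Benchmark figures copied from the table above (tokens per second).
BENCHMARKS = {"Q4_K_M": 58.59, "F16": 20.85}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time spent on one generated token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_reply(tokens_per_second: float, reply_tokens: int) -> float:
    """Time to generate a reply of the given length, ignoring prompt processing."""
    return reply_tokens / tokens_per_second


# How many times faster Q4_K_M generates tokens compared with F16.
speedup = BENCHMARKS["Q4_K_M"] / BENCHMARKS["F16"]

if __name__ == "__main__":
    print(f"Q4_K_M speedup over F16: {speedup:.2f}x")
    for name, tps in BENCHMARKS.items():
        # 256 tokens is a hypothetical reply length used only as an example.
        print(
            f"{name}: {ms_per_token(tps):.1f} ms/token, "
            f"{seconds_for_reply(tps, 256):.1f} s for a 256-token reply"
        )
```

These figures cover token generation only; time spent processing the prompt is not included in the benchmark numbers above.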

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B and 70B on RTX 4000 Ada 20GB

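Unfortunately, we don't have benchmark data for Llama3 70B on the RTX 4000 Ada 20GB. This is likely due to the massive memory requirements of the larger model: at 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, far more than the card's 20 GB.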
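
A rough way to check whether a model can fit is to estimate the size of its weights from the parameter count and the bits stored per weight. The sketch below does that under a few assumptions: the parameter counts are the nominal ones from the model names, and 4.85 bits per weight for Q4_K_M is an approximate average rather than a measured value.

```python
VRAM_GB = 20.0  # memory on the card, as stated in this guide's title

# Assumption: nominal parameter counts taken from the model names.
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# Assumption: approximate bits stored per weight. F16 is exactly 16;
# 4.85 for Q4_K_M is a rough average, not a measured value.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params: float, bits_per_weight: float, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_gb(n_params, bits_per_weight) < vram_gb


if __name__ == "__main__":
    for model, n_params in PARAMS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weight_gb(n_params, bits)
            verdict = "fits" if fits(n_params, bits) else "does not fit"
            print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

This counts weights only. The KV cache and activations need additional memory, so a configuration that barely fits on paper may still run out of VRAM with long contexts.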

Practical Recommendations: Use Cases and Workarounds

Maximizing Llama3 8B Performance: Tips and Tricks

- Embrace Quantization: Q4_K_M is the secret weapon for maximizing performance on this GPU. Experiment with different quantization methods and libraries to find the best balance of speed and quality for your application.

- Choose the Right Precision: Use F16 when output quality matters more than speed, and Q4_K_M when latency is paramount. Consider a mixed-precision strategy where you use higher precision only for critical calculations.

- Optimize for Latency: For tasks where latency is critical, prioritize efficient token generation. In the benchmarks above, Q4_K_M is the faster option, and pairing quantization with a smaller model could be a winning combination.

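- Explore GPU Offloading: Runtimes such as llama.cpp let you choose how many model layers run on the GPU. Keep as many layers on the GPU as memory allows, since layers left on the CPU usually slow token generation.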

- Consider Alternative Models: If resource limitations are a concern, explore smaller models like Llama2 7B or even smaller variants for specific applications.
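
Whichever of these tips you try, it helps to measure the result on your own machine. Below is a minimal sketch of a timing harness that reports tokens per second for any generator function. `fake_generate` is a hypothetical stand-in that simply sleeps for about a millisecond per token; replace it with a wrapper around whatever runtime you actually use.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[int], Iterable],
    n_tokens: int = 128,
    warmup_tokens: int = 8,
) -> float:
    """Time a token generator and return its throughput in tokens per second."""
    # Warm-up run so one-time setup costs do not skew the measurement.
    for _ in generate(warmup_tokens):
        pass

    start = time.perf_counter()
    produced = sum(1 for _ in generate(n_tokens))  # count tokens actually yielded
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(n_tokens: int):
    """Hypothetical stand-in for a real model: yields one dummy token per ~1 ms."""
    for i in range(n_tokens):
        time.sleep(0.001)
        yield i


if __name__ == "__main__":
    tps = measure_tokens_per_second(fake_generate, n_tokens=50)
    print(f"{tps:.1f} tokens/second")
```

Run the same harness with the same prompt and token count for each configuration you compare, so the numbers are measured under matching conditions.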

FAQ

Q: What is quantization?

A: Quantization is like compressing the model's parameters (the numbers that define the model) into a smaller format. Think of it like reducing file sizes by using lower-resolution images. It lets you use smaller, faster models without sacrificing too much accuracy.
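
To make the idea concrete, here is a deliberately simplified sketch: a block of weights is stored as small integers in the range -7 to 7 (which fit in 4 bits) plus one shared scale factor. This is not the actual Q4_K_M scheme, which is more elaborate; the example weights are made up for illustration.

```python
def quantize_block(weights):
    """Map a block of floats to integer codes in [-7, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate float weights from the codes and the scale."""
    return [c * scale for c in codes]


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.45]

codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)

# Largest rounding error introduced by quantization; at most half a step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

if __name__ == "__main__":
    print("codes:    ", codes)
    print("restored: ", [round(r, 3) for r in restored])
    print(f"max error: {max_error:.4f} (step size {scale:.4f})")
```

Each weight now costs 4 bits plus a small share of the scale, and the restored values differ from the originals by at most half a quantization step. That rounding error is the accuracy cost mentioned above.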

Q: How do I choose the right precision for my LLM?

A: It depends on your use case. Higher precision (like F32) offers greater accuracy but requires more memory and processing power. Lower precision (like F16) can be faster but may sacrifice some accuracy.

Q: What about the GPU memory bandwidth?

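A: The RTX 4000 Ada 20GB has enough memory bandwidth to run Llama3 8B at interactive speeds, as the benchmarks above show. Bandwidth still matters: token generation is typically limited by how fast the GPU can read the model weights, which is one reason the smaller Q4_K_M weights generate faster than F16. With larger models, bandwidth becomes an even more crucial factor.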
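
One way to see the bandwidth connection is to estimate how much weight data the GPU must be reading per second to sustain the measured token rates. The sketch below assumes that every generated token reads all weights once (a single request at a time), and it reuses approximate weight sizes of 16 GB for F16 and 4.85 GB for Q4_K_M. Both sizes are estimates, not measurements.

```python
# Measured token rates from the benchmark table (tokens per second).
TOKENS_PER_SECOND = {"F16": 20.85, "Q4_K_M": 58.59}

# Assumption: approximate weight sizes in GB for a nominal 8B-parameter model
# (16 bits per weight for F16, roughly 4.85 bits per weight for Q4_K_M).
WEIGHT_GB = {"F16": 16.0, "Q4_K_M": 4.85}


def implied_read_rate_gbs(tokens_per_second: float, weight_gb: float) -> float:
    """GB of weights read per second, assuming one full pass over the weights per token."""
    return tokens_per_second * weight_gb


if __name__ == "__main__":
    for fmt, tps in TOKENS_PER_SECOND.items():
        rate = implied_read_rate_gbs(tps, WEIGHT_GB[fmt])
        print(f"{fmt}: ~{rate:.1f} GB/s of weight reads at {tps} tokens/second")
```

Under these assumptions both formats land in the same few-hundred-GB/s range even though their token rates differ by almost 3x, which is consistent with generation speed being tied to memory reads rather than raw compute.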

Keywords:

Llama3, 8B, RTX 4000 Ada 20GB, NVIDIA, Token Generation Speed, Performance Benchmarks, Quantization, Q4_K_M, F16, Precision, GPU, LLM, Large Language Model, Local Models, Optimization, Use Cases, Workarounds, Performance Tips, Memory Bandwidth