5 Tips to Maximize Llama3 8B Performance on NVIDIA RTX 4000 Ada 20GB x4

Introduction

The world of Large Language Models (LLMs) is exploding, with new models being released at an astonishing pace. But what happens when your shiny new LLM needs a home? That's where hardware comes in. In this deep dive, we'll explore the performance of Llama3 8B on a beefy setup of four NVIDIA RTX 4000 Ada 20GB GPUs. We'll analyze token generation speed, discuss what can and can't be said about other model and device combinations, and provide practical recommendations for getting the most out of your LLMs on this power-packed hardware.

Think of this guide as your roadmap for navigating the exciting world of local LLMs. Even if you're not a hardware expert, this article will help you get the most out of your setup, whether you're building a chatbot, generating creative content, or exploring the frontiers of AI. Buckle up, it's going to be a wild ride!

Performance Analysis: Token Generation Speed Benchmarks

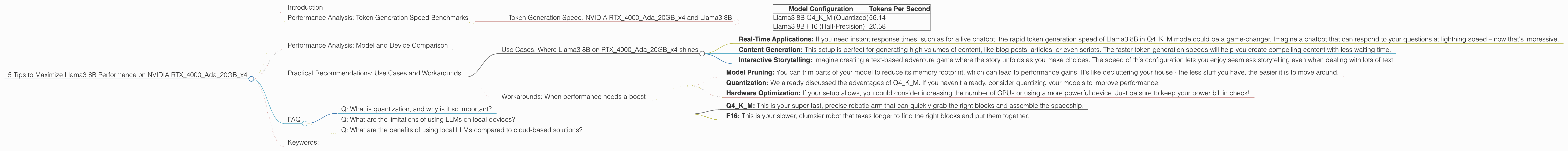

Token Generation Speed: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 8B

Let's get to the heart of the matter. How fast can Llama3 8B crank out those precious tokens (the building blocks of text) on four NVIDIA RTX 4000 Ada 20GB GPUs?

| Model Configuration | Tokens Per Second |
|---|---|
| Llama3 8B Q4KM (Quantized) | 56.14 |
| Llama3 8B F16 (Half-Precision) | 20.58 |

Key Takeaways:

- Quantization is King: Llama3 8B in Q4KM mode (presumably llama.cpp's Q4_K_M format, a 4-bit "k-quant" in its medium variant) blows F16 (half-precision) out of the water, generating tokens about 2.7 times faster (56.14 vs. 20.58 tokens per second). Token generation is largely limited by how quickly the weights can be read from GPU memory, and 4-bit weights take up less than a third of the space of 16-bit ones, so each token requires far less data movement.

- Mind the Gap: The performance difference between the two configurations is substantial. Think of it like this: If you're writing a novel, the Q4KM setup is like having a super-fast typist, while the F16 is more like a leisurely writer.

- Performance Matters: Faster token generation means generating text quicker, which is crucial for applications where responsiveness is key. We'll explore some use cases and workarounds in a bit.
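
To make the takeaways above concrete, here is a minimal sketch that derives the speedup and the per-token latency from the two figures in the benchmark table. The function and variable names are illustrative and not part of any benchmark tool.

```python
# Throughput figures copied from the benchmark table (tokens per second).
BENCHMARKS = {"Q4KM": 56.14, "F16": 20.58}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """Return how many times faster the first configuration is."""
    return fast_tps / slow_tps


def ms_per_token(tokens_per_second: float) -> float:
    """Convert throughput into average milliseconds spent per token."""
    return 1000.0 / tokens_per_second


ratio = speedup(BENCHMARKS["Q4KM"], BENCHMARKS["F16"])
print(f"Q4KM vs F16 speedup: {ratio:.2f}x")
for name, tps in BENCHMARKS.items():
    print(f"{name}: {ms_per_token(tps):.1f} ms per token")
```

The ratio comes out at roughly 2.7, matching the figure quoted above.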

Performance Analysis: Model and Device Comparison

Unfortunately, we don't have data on how other LLMs perform on this particular device, so a direct comparison between different models and devices is not possible at this time.

What we can say is that four 20GB cards provide 80GB of GPU memory in total, which leaves plenty of room beyond Llama3 8B. A larger model such as Llama3 70B or Llama2 70B should fit when quantized to 4 bits, but not at F16, and it would generate tokens considerably more slowly than the 8B model. We'll keep an eye out for more data as it becomes available.
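
Since no benchmarks exist for larger models on this setup, the most we can do is estimate whether their weights would fit in memory. The sketch below does that back-of-the-envelope arithmetic. Everything in it is an assumption rather than a measurement: the nominal parameter counts (8 and 70 billion), the approximate 4.85 bits per weight for Q4KM, and the 80% headroom factor that reserves space for the KV cache and activations. It says nothing about speed.

```python
GIB = 1024 ** 3

# Assumed average bits per weight. The Q4KM figure is an approximation,
# not a value measured in this article.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}

# Four cards with 20 GB each, treated here as 80 GiB for simplicity.
TOTAL_VRAM_GIB = 4 * 20


def weight_size_gib(params_billion: float, bits_per_weight: float) -> float:
    """Estimate the size of the model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / GIB


def fits(params_billion: float, fmt: str, total_vram_gib: float,
         headroom: float = 0.8) -> bool:
    """Check whether the weights fit in a fraction of total GPU memory.

    headroom is a hypothetical safety factor for KV cache and activations.
    """
    size = weight_size_gib(params_billion, BITS_PER_WEIGHT[fmt])
    return size <= total_vram_gib * headroom


for params in (8, 70):
    for fmt in ("Q4KM", "F16"):
        size = weight_size_gib(params, BITS_PER_WEIGHT[fmt])
        verdict = "fits" if fits(params, fmt, TOTAL_VRAM_GIB) else "does not fit"
        print(f"{params}B {fmt}: ~{size:.1f} GiB of weights, {verdict}")
```

Under these assumptions, the 8B model fits in either format, while a 70B model fits only in its 4-bit form.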

Practical Recommendations: Use Cases and Workarounds

Use Cases: Where Llama3 8B on RTX 4000 Ada 20GB x4 shines

- Real-Time Applications: If you need instant response times, such as for a live chatbot, the rapid token generation speed of Llama3 8B in Q4KM mode could be a game-changer. Imagine a chatbot that can respond to your questions at lightning speed – now that's impressive.

- Content Generation: This setup is perfect for generating high volumes of content, like blog posts, articles, or even scripts. The faster token generation speeds will help you create compelling content with less waiting time.

- Interactive Storytelling: Imagine creating a text-based adventure game where the story unfolds as you make choices. The speed of this configuration lets you enjoy seamless storytelling even when dealing with lots of text.
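
To get a feel for what these use cases mean in waiting time, the following sketch converts the measured throughput into generation time for a few output lengths. The token counts per workload are hypothetical examples, and the estimate covers token generation only, not prompt processing or model loading.

```python
# Measured throughput from the benchmark table (tokens per second).
TOKENS_PER_SECOND = {"Q4KM": 56.14, "F16": 20.58}

# Hypothetical output lengths in tokens, chosen only for illustration.
WORKLOADS = {"chat reply": 150, "story passage": 400, "blog post": 1200}


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate the time needed to generate num_tokens at a steady rate."""
    return num_tokens / tokens_per_second


for workload, tokens in WORKLOADS.items():
    for config, tps in TOKENS_PER_SECOND.items():
        seconds = generation_seconds(tokens, tps)
        print(f"{workload} ({tokens} tokens) on {config}: {seconds:.1f} s")
```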

Workarounds: When performance needs a boost

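- Model Pruning: You can trim parts of your model to reduce its memory footprint, which can lead to performance gains. It's like decluttering your house - the less stuff you have, the easier it is to move around. Keep in mind that pruning can reduce output quality, so test the pruned model on your own tasks before relying on it.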

- Quantization: We already discussed the advantages of Q4KM. If you haven't already, consider quantizing your models to improve performance.

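- Hardware Optimization: If your setup allows, you could consider adding GPUs or using a more powerful device. Extra GPUs mainly add memory for larger models; for a model that already fits, a GPU with higher memory bandwidth is more likely to raise tokens per second. Just be sure to keep your power bill in check!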

How about a little analogy? Imagine you're trying to build a Lego spaceship. The LLM is your instruction manual, the tokens are the building blocks, and the GPU is your robotic arm.

- Q4KM: This is the robotic arm working with lighter, slightly simplified blocks, so it can grab them quickly and assemble the spaceship fast.

- F16: This is the same arm hauling full-weight blocks. Every detail is preserved, but each piece takes longer to move into place.

Unless you need every last detail, you want the lighter blocks, because otherwise you'll be waiting much longer to finish your spaceship!

FAQ

Q: What is quantization, and why is it so important?

A: Quantization is like simplifying a complex recipe using fewer ingredients. It involves reducing the precision of the numbers in a model (for example, from 16 bits to about 4 bits per weight) to make it smaller and run faster. For an LLM, this means trading a bit of accuracy for a large boost in speed, about 2.7 times in the benchmark above. It's a little like using a simplified map instead of a highly detailed one.
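
To illustrate the idea of reducing numeric precision, here is a deliberately simplified sketch of symmetric 4-bit quantization for one small block of weights. It is not the algorithm behind Q4KM, which is more elaborate; the function names and sample weights are made up for the example.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    largest = max(abs(v) for v in values)
    # Avoid dividing by zero when every value in the block is zero.
    scale = largest / qmax if largest > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights, for illustration only.
weights = [0.12, -0.53, 0.07, 0.91, -0.34, 0.00, 0.45, -0.88]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print("restored:   ", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each weight is stored as an integer between -7 and 7 plus one shared scale per block, which is where the memory savings come from. The rounding error is the "bit of accuracy" being traded away.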

Q: What are the limitations of using LLMs on local devices?

A: One limitation is the computational power required. Running large LLMs like Llama3 70B can require immense processing power, which might not be feasible for all devices. Another limitation is memory. These models are massive, and to run at full speed their weights need to fit in GPU memory (VRAM). At F16, every parameter takes 2 bytes, so a 70B model needs roughly 140GB for its weights alone.

Q: What are the benefits of using local LLMs compared to cloud-based solutions?

A: One key benefit is privacy. You can keep your data and processing on your own device, reducing concerns about data breaches and security risks. Another advantage is cost-effectiveness. While cloud providers can be costly, running LLMs locally can be more affordable in the long run, especially for those who need frequent access to LLMs.
