5 Tips to Maximize Llama3 8B Performance on NVIDIA A40 48GB

In the ever-evolving world of artificial intelligence, large language models (LLMs) have taken center stage. LLMs, like the popular Llama series, have become the driving force behind chatbots, AI assistants, and even creative writing tools. But making these powerful models run smoothly and efficiently requires a deep dive into the hardware and software they rely on.

This article looks at how to optimize the Llama 3 8B model on the NVIDIA A40 48GB, a data center GPU designed to handle the demands of modern AI workloads. We'll explore practical tips and strategies to squeeze every ounce of performance from this pairing and get the most out of Llama 3 8B in your applications.

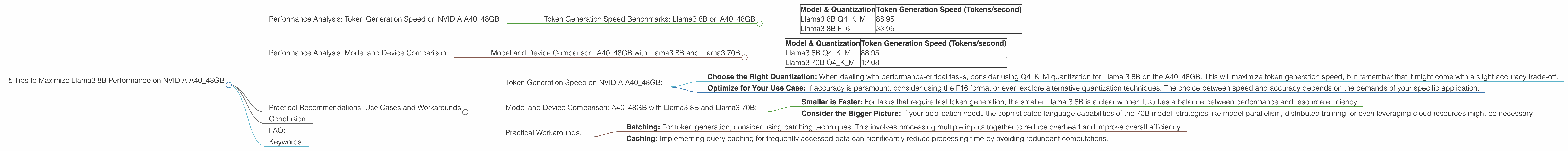

Performance Analysis: Token Generation Speed on NVIDIA A40 48GB

For a language model to be truly useful, it needs to generate text quickly and efficiently. We'll start with the token generation speed of Llama 3 8B on the A40 48GB and the impact of different quantization formats.

Token Generation Speed Benchmarks: Llama3 8B on A40 48GB

Here's a table showing the token generation speed of Llama 3 8B for two quantization formats on the NVIDIA A40 48GB:

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 88.95 |
| Llama3 8B F16 | 33.95 |

Observations:

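- Quantization Impact: Q4_K_M generates tokens about 2.6 times faster than F16 (88.95 vs 33.95 tokens/second). Single-stream token generation is largely limited by memory bandwidth: producing each token requires reading every model weight from GPU memory, so shrinking the weights from 16 bits to roughly 4 to 5 bits per weight means far less data has to move for each token.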

- Trade-off: While Q4KM leads to speed improvements, it might impact the accuracy of the model. The choice between speed and accuracy depends on the specific application needs.
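Because a single generation stream reads every weight once per token, memory bandwidth divided by the size of the weights gives a rough ceiling on tokens/second. The Python sketch below estimates that ceiling for both formats and compares it with the measured numbers. The parameter count (~8.03B), the ~4.85 bits per weight average assumed for Q4_K_M, and the A40's ~696 GB/s spec-sheet bandwidth are assumptions for illustration; the estimate ignores KV-cache reads and kernel overheads.

```python
# Rough, memory-bandwidth-bound ceiling for single-stream token generation.
# Assumptions (not from the benchmark): ~8.03B parameters for Llama 3 8B,
# ~4.85 bits per weight on average for Q4_K_M, and ~696 GB/s of A40 memory
# bandwidth. KV-cache reads and kernel overheads are ignored.

A40_BANDWIDTH_GBPS = 696.0      # GB/s, spec-sheet figure (assumed)
LLAMA3_8B_PARAMS = 8.03e9       # approximate parameter count (assumed)

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,             # assumed average; the real value varies per model
}

MEASURED_TOKENS_PER_S = {       # measured values from the benchmark table
    "F16": 33.95,
    "Q4_K_M": 88.95,
}


def weight_gigabytes(params: float, bits_per_weight: float) -> float:
    """Size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def tokens_per_s_ceiling(params: float, bits_per_weight: float,
                         bandwidth_gbps: float) -> float:
    """Each generated token reads every weight once, so bandwidth / size bounds the rate."""
    return bandwidth_gbps / weight_gigabytes(params, bits_per_weight)


for fmt, bits in BITS_PER_WEIGHT.items():
    size = weight_gigabytes(LLAMA3_8B_PARAMS, bits)
    ceiling = tokens_per_s_ceiling(LLAMA3_8B_PARAMS, bits, A40_BANDWIDTH_GBPS)
    measured = MEASURED_TOKENS_PER_S[fmt]
    print(f"{fmt:7s} weights ~{size:5.1f} GB  ceiling ~{ceiling:5.1f} tok/s  "
          f"measured {measured:5.2f} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions F16 lands at roughly 78% of its ceiling and Q4_K_M at roughly 62%. One plausible reading is that the quantized run is partly held back by dequantization and other overheads, not by bandwidth alone.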

Performance Analysis: Model and Device Comparison

So, how does Llama 3 8B on the A40 48GB stack up against other configurations? Let's compare it with its larger counterpart, Llama 3 70B, running on the same GPU.

Model and Device Comparison: A40 48GB with Llama3 8B and Llama3 70B

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 88.95 |
| Llama3 70B Q4_K_M | 12.08 |

Observations:

- Size Matters: The 8B model generates tokens roughly 7.4 times faster than the 70B model (88.95 vs 12.08 tokens/second). The 70B model has almost nine times as many parameters, and since every weight must be read for each generated token, its larger size translates directly into slower generation.

- Scaling Challenges: The larger 70B model offers greater potential for complex language tasks, and at Q4_K_M it still runs on the A40 48GB, but at about 12 tokens/second it is noticeably slower for interactive use. Larger models demand more memory and more memory traffic per token, which quickly becomes the bottleneck.
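A quick way to see both sides of this comparison is to estimate the weight size of each model at Q4_K_M and set the parameter ratio against the measured speed ratio. The sketch below assumes ~8.03B and ~70.6B parameters and ~4.85 bits per weight for Q4_K_M; these are approximations, and a real deployment also needs VRAM for the KV cache and runtime buffers.

```python
# Back-of-the-envelope comparison of Llama 3 8B and 70B at Q4_K_M on one A40.
# Assumptions: ~8.03B and ~70.6B parameters, ~4.85 bits per weight on average,
# 48 GB of VRAM. KV cache and runtime buffers are not counted.

VRAM_GB = 48.0
Q4_K_M_BITS = 4.85  # assumed average bits per weight

models = {
    "Llama3 8B": {"params": 8.03e9, "tok_s": 88.95},   # tok_s: measured
    "Llama3 70B": {"params": 70.6e9, "tok_s": 12.08},  # tok_s: measured
}


def weight_gb(params: float, bits: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params * bits / 8 / 1e9


for name, m in models.items():
    size = weight_gb(m["params"], Q4_K_M_BITS)
    print(f"{name}: weights ~{size:.1f} GB, fits in {VRAM_GB:.0f} GB: {size < VRAM_GB}")

param_ratio = models["Llama3 70B"]["params"] / models["Llama3 8B"]["params"]
speed_ratio = models["Llama3 8B"]["tok_s"] / models["Llama3 70B"]["tok_s"]
print(f"70B has ~{param_ratio:.1f}x the parameters and generates ~{speed_ratio:.1f}x slower")
```

The speed gap tracks the size gap fairly closely, which is what you would expect if generation is mostly bound by how many bytes of weights must be read per token.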

Practical Recommendations: Use Cases and Workarounds

Now that we've explored the performance characteristics of Llama 3 8B on the A40 48GB, here are some practical recommendations for getting the most out of it:

Token Generation Speed on NVIDIA A40 48GB:

- Choose the Right Quantization: For performance-critical tasks, consider Q4_K_M quantization for Llama 3 8B on the A40 48GB. It maximizes token generation speed, but it may come with a slight accuracy trade-off.

- Optimize for Your Use Case: If accuracy is paramount, consider the F16 format or a higher-precision quantization level. The right balance between speed and accuracy depends on the demands of your specific application.
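One simple way to apply this advice is to pick the most precise format whose measured speed still meets your throughput target. The sketch below encodes that rule with the two A40 measurements from the benchmark table; `choose_format` is a hypothetical helper, the bits-per-weight figure for Q4_K_M is an approximation, and you would extend the list with your own measurements of other formats.

```python
# Hypothetical helper: choose the highest-precision format that still meets a
# tokens/second target, using measured A40 numbers from the benchmark table.

from typing import Optional

MEASURED = [  # (format, approx. bits per weight, measured tokens/s on A40)
    ("F16", 16.0, 33.95),
    ("Q4_K_M", 4.85, 88.95),  # bits per weight is an assumed average
]


def choose_format(min_tokens_per_s: float) -> Optional[str]:
    """Return the most precise format whose measured speed meets the target, or None."""
    for fmt, _bits, tok_s in sorted(MEASURED, key=lambda row: row[1], reverse=True):
        if tok_s >= min_tokens_per_s:
            return fmt
    return None


print(choose_format(30))   # F16 is fast enough
print(choose_format(60))   # only Q4_K_M meets this target
print(choose_format(120))  # no measured format is fast enough
```

Ordering by precision first means you only give up accuracy when the speed requirement forces you to.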

Model and Device Comparison: A40 48GB with Llama3 8B and Llama3 70B:

- Smaller is Faster: For tasks that require fast token generation, the smaller Llama 3 8B is a clear winner. It strikes a balance between performance and resource efficiency.

- Consider the Bigger Picture: If your application needs the stronger language capabilities of the 70B model, expect much slower generation on a single A40. Splitting the model across several GPUs (model parallelism) or using cloud instances with more or faster GPUs may be necessary to reach higher speeds.

Practical Workarounds:

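- Batching: If you serve many requests, process several of them together in a batch. Because the weights are read once per step for the whole batch, batching raises total throughput (tokens/second across all requests), although it does not make an individual response faster.

- Caching: Cache the responses to frequently repeated prompts so that identical requests are answered without running the model again. This works best when generation is deterministic (for example, at temperature 0).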
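Both workarounds can be sketched in a few lines of Python. In the sketch below, `generate_batch` is a hypothetical stand-in for whatever inference backend you use (it just echoes the prompts), `functools.lru_cache` provides the response cache, and `batched` groups pending prompts into fixed-size batches. Treat it as an illustration of the pattern, not a production server.

```python
# Minimal sketches of batching and response caching.
# `generate_batch` is a hypothetical placeholder for a real inference call.

from functools import lru_cache
from typing import Iterator, List


def generate_batch(prompts: List[str]) -> List[str]:
    """Hypothetical backend call that processes several prompts together."""
    return [f"<completion for: {p}>" for p in prompts]


@lru_cache(maxsize=1024)
def generate(prompt: str) -> str:
    """Cache completions for repeated prompts so identical requests skip the GPU.
    Only appropriate when decoding is deterministic (e.g. temperature 0)."""
    return generate_batch([prompt])[0]


def batched(items: List[str], batch_size: int) -> Iterator[List[str]]:
    """Split pending requests into fixed-size batches."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def serve(prompts: List[str], batch_size: int = 8) -> List[str]:
    """Run all prompts through the backend, batch_size at a time."""
    results: List[str] = []
    for batch in batched(prompts, batch_size):
        results.extend(generate_batch(batch))
    return results


outputs = serve(["Hi", "Summarize this", "Translate that"], batch_size=2)
generate("Hi")
generate("Hi")  # second call is served from the cache
print(generate.cache_info().hits)  # 1
```

In a real deployment the batch size is a trade-off: larger batches raise aggregate throughput but need more VRAM for the KV cache and can increase per-request latency.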

Conclusion:

Navigating the world of LLMs and their hardware requirements can be challenging but rewarding. By understanding the performance trade-offs associated with different model sizes, quantization levels, and hardware configurations, you can optimize Llama 3 8B on the NVIDIA A40 48GB for your specific needs. Whether you're building engaging chatbots, developing sophisticated AI assistants, or pushing the boundaries of creative writing, this combination can deliver impressive results.

FAQ:

1. What is quantization?

Quantization is a technique used to reduce the memory footprint of neural networks. It represents the model's weights with fewer bits, which makes the model smaller and, for memory-bound workloads such as token generation, faster. For example, Q4_K_M stores most weights as 4-bit integers plus per-block scaling factors (and keeps some tensors at higher precision), averaging roughly 4.5 to 5 bits per weight, compared with 16 bits per weight for the F16 model.
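The core idea of block-wise quantization can be shown with a toy example: store each block of weights as small integers plus one scale, and multiply back to approximate floats at runtime. The sketch below uses simple symmetric 4-bit quantization with a block size of 32 chosen for illustration; it is deliberately simplified and is not the actual Q4_K_M storage layout used by llama.cpp.

```python
# Toy block-wise 4-bit quantization (NOT the real Q4_K_M layout, which uses
# super-blocks, quantized scales/mins and mixes in higher-precision tensors).

import random
from typing import List, Tuple

BLOCK = 32  # weights per block, an illustrative choice


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Symmetric 4-bit quantization: integers in [-8, 7] plus one float scale."""
    max_abs = max(abs(w) for w in weights) or 1.0  # avoid dividing by zero
    scale = max_abs / 7.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Rebuild approximate weights from the integers and the block scale."""
    return [scale * v for v in q]


random.seed(0)
w = [random.uniform(-1, 1) for _ in range(BLOCK)]
scale, q = quantize_block(w)
w_hat = dequantize_block(scale, q)

max_err = max(abs(a - b) for a, b in zip(w, w_hat))
bits_q4 = 4 * BLOCK + 16  # 4-bit codes plus one 16-bit scale per block
print(f"max error {max_err:.3f}, {bits_q4 / BLOCK:.1f} bits per weight vs 16")
```

Even in this toy version the per-block scale pushes the cost above 4 bits per weight (4.5 here), which is why real 4-bit formats average somewhat more than 4 bits.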

2. What is the A40 48GB?

The NVIDIA A40 is a data center GPU based on the Ampere architecture, with 48 GB of GDDR6 memory and roughly 700 GB/s of memory bandwidth. Its large memory capacity comfortably holds Llama 3 8B, even at F16, and is large enough for the 70B model when quantized to Q4_K_M.

3. What is the difference between token generation speed and model processing speed?

Token generation speed (tokens/second) measures how quickly the model produces output tokens once it has read the prompt. Overall processing time for a request also includes processing the input prompt, so it depends on both the prompt length and the number of tokens generated.
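As a rough model, the end-to-end time for one request is the time to process the prompt plus the time to generate the output. The sketch below uses the measured Q4_K_M generation speed (88.95 tokens/second); the prompt-processing speed is a hypothetical placeholder because the benchmark above does not report it, so substitute a value measured on your own setup.

```python
# Rough end-to-end latency: prompt processing (prefill) + token generation (decode).
# GEN_TOK_PER_S is the measured Llama3 8B Q4_K_M speed on the A40;
# ASSUMED_PROMPT_TOK_PER_S is a hypothetical placeholder, not a measurement.

def end_to_end_seconds(prompt_tokens: int, output_tokens: int,
                       prompt_tok_per_s: float, gen_tok_per_s: float) -> float:
    """Estimate total request time as prefill time plus decode time."""
    prefill = prompt_tokens / prompt_tok_per_s
    decode = output_tokens / gen_tok_per_s
    return prefill + decode


ASSUMED_PROMPT_TOK_PER_S = 2000.0  # hypothetical value
GEN_TOK_PER_S = 88.95              # measured (benchmark table)

latency = end_to_end_seconds(500, 200, ASSUMED_PROMPT_TOK_PER_S, GEN_TOK_PER_S)
print(f"~{latency:.2f} s for a 500-token prompt and a 200-token answer")
```

With these numbers generation accounts for most of the total, which is why token generation speed is usually the figure people focus on for chat-style workloads; long prompts with short answers shift the balance toward prompt processing.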

4. How accurate is the Llama 3 8B model compared to the 70B model?

The 70B model generally achieves higher accuracy on complex language tasks. This is because it has a significantly larger parameter space, allowing it to learn more complex relationships in the data. However, for specific use cases, the 8B model may be sufficient and provide a better balance between accuracy and performance.

Keywords:

Llama3 8B, NVIDIA A40 48GB, Token Generation Speed, Quantization, Q4_K_M, F16, Model Performance, LLM, Large Language Model, GPU, Deep Learning, AI, Natural Language Processing, NLP