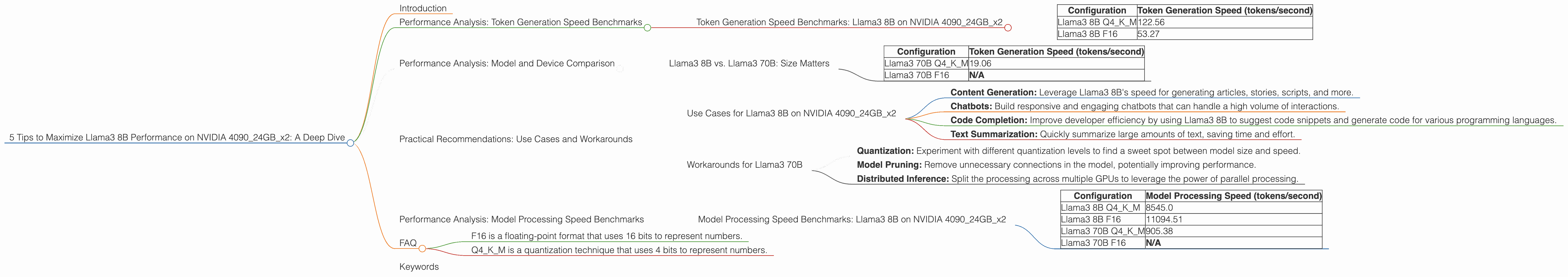

5 Tips to Maximize Llama3 8B Performance on NVIDIA 4090 24GB x2

Introduction

The world of large language models (LLMs) is buzzing with excitement, and rightfully so! These powerful models are changing everything from content creation to research, and the race to push performance further is on. In this guide, we'll take a close look at the performance of Llama3 8B, a popular and capable LLM, when running on a dual NVIDIA 4090 24GB setup. Think of this as a quest to unlock the potential of Llama3 8B, one token at a time.

Whether you're a seasoned developer looking for optimization tips or a curious geek who wants to understand the underlying technology, this article will equip you with the knowledge and insights to run Llama3 8B efficiently.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA 4090 24GB x2

Let's break down the performance of Llama3 8B running on NVIDIA 4090 24GB x2, focusing on token generation speed, a key measure of LLM performance.

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 122.56 |
| Llama3 8B F16 | 53.27 |

As you can see, Llama3 8B Q4_K_M, which uses quantization to shrink the model, generates tokens about 2.3 times faster than the F16 version. Quantization is like putting your clothes in a vacuum-sealed bag: the model occupies less memory. That matters for speed because generating each token typically requires reading the model's weights from GPU memory, so smaller weights mean less data to move per token.

Remember: These numbers are in tokens per second, that is, how many tokens the model generates each second. Higher numbers mean faster text generation. Imagine a chatbot that can respond in the blink of an eye!
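To make the numbers concrete, here is a minimal Python sketch that turns the two speeds from the table above into a speedup ratio and a wait time. The 500-token reply length is an arbitrary assumption chosen for illustration, and the calculation assumes the generation rate stays constant.

```python
# Token generation speeds from the table above (tokens/second).
GENERATION_TPS = {"Q4_K_M": 122.56, "F16": 53.27}


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


speedup = GENERATION_TPS["Q4_K_M"] / GENERATION_TPS["F16"]
print(f"Q4_K_M is {speedup:.2f}x faster than F16")  # 2.30x

# Hypothetical 500-token reply (an assumption, not part of the benchmark).
for name, tps in GENERATION_TPS.items():
    print(f"{name}: {seconds_to_generate(500, tps):.1f} s for a 500-token reply")
```

On these figures, the quantized model would finish the reply in roughly 4 seconds versus roughly 9 seconds for F16.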

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B: Size Matters

While Llama3 8B delivers impressive performance on the NVIDIA 4090 24GB x2, it's worth contrasting it with its larger sibling, Llama3 70B, to understand the trade-offs involved.

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 19.06 |
| Llama3 70B F16 | N/A |

Here's the breakdown:

- Llama3 8B is significantly faster than Llama3 70B in terms of token generation speed.

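- This is because Llama3 70B has roughly nine times as many parameters (70 billion versus 8 billion), so each generated token involves reading and computing over far more weights.

- The F16 version of Llama3 70B has no result (N/A). The benchmark data doesn't say why, but memory is the likely reason: at 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, far more than the 48 GB of combined VRAM on two 4090s.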

Think of it this way: Imagine trying to run a marathon; a smaller, more agile runner will likely finish faster than a larger, stronger runner! The same principle applies to LLMs.
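A rough memory estimate helps explain both the speed gap and the missing F16 result for the 70B model. The sketch below multiplies parameter count by bits per weight. The 4.85 bits-per-weight figure for the 4-bit format is an approximation assumed here, and the estimate ignores the KV cache and activations, so real usage will be higher.

```python
TOTAL_VRAM_GB = 2 * 24  # two NVIDIA 4090 cards with 24 GB each

# Assumed average bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


for params in (8, 70):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"Llama3 {params}B {fmt}: ~{size:.1f} GB, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, only the 70B F16 configuration exceeds the available VRAM, which is consistent with it being the one entry without a measurement.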

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 4090 24GB x2

- Content Generation: Leverage Llama3 8B's speed for generating articles, stories, scripts, and more.

- Chatbots: Build responsive and engaging chatbots that can handle a high volume of interactions.

- Code Completion: Improve developer efficiency by using Llama3 8B to suggest code snippets and generate code for various programming languages.

- Text Summarization: Quickly summarize large amounts of text, saving time and effort.

Workarounds for Llama3 70B

While Llama3 70B might be slower, it compensates with its sheer size and capability. Here's how to address its performance challenges:

- Quantization: Experiment with different quantization levels to find a sweet spot between model size and speed.

- Model Pruning: Remove unnecessary connections in the model, potentially improving performance.

- Distributed Inference: Split the processing across multiple GPUs to leverage the power of parallel processing.
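As an illustration of the distributed inference idea, one common approach is to place whole transformer layers on different GPUs in proportion to their memory. The sketch below shows only that bookkeeping step. The `split_layers` helper is hypothetical (it is not an API of any inference library), and the layer count of 80 for Llama3 70B is stated here as an assumption.

```python
def split_layers(num_layers: int, vram_gb: list[float]) -> list[int]:
    """Assign whole layers to GPUs in proportion to each GPU's VRAM."""
    total = sum(vram_gb)
    counts = [int(num_layers * v / total) for v in vram_gb]
    # Rounding down can leave a few layers unassigned; hand them out in order.
    for i in range(num_layers - sum(counts)):
        counts[i % len(counts)] += 1
    return counts


# Two identical 24 GB cards share the assumed 80 layers evenly.
print(split_layers(80, [24, 24]))  # [40, 40]
```

With matched cards the split is even; with mismatched cards the larger one takes proportionally more layers.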

Performance Analysis: Model Processing Speed Benchmarks

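While token generation speed tells us how fast the model can produce new text, model processing speed (usually called prompt processing or prefill speed) measures how quickly the model reads the input tokens before it starts generating. Because the whole prompt is known up front, its tokens can be processed in parallel, which is why these numbers are so much higher than the generation speeds.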

Model Processing Speed Benchmarks: Llama3 8B on NVIDIA 4090 24GB x2

| Configuration | Model Processing Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 8545.0 |
| Llama3 8B F16 | 11094.51 |
| Llama3 70B Q4_K_M | 905.38 |
| Llama3 70B F16 | N/A |

Important Note: The model processing speed here represents how fast the model can process the input. It doesn't directly translate to the speed of generating text.

Key findings:

- Llama3 8B F16 is notably faster than Llama3 8B Q4_K_M in terms of model processing (about 1.3 times). A likely explanation is that processing a prompt is limited by compute rather than memory traffic, and quantized weights have to be converted back to a higher precision before the math runs, while F16 weights can be used directly.

- Llama3 70B Q4_K_M is significantly slower than Llama3 8B in terms of model processing (roughly nine times slower than 8B Q4_K_M), showcasing the performance trade-off for larger models.

- The F16 version of Llama3 70B has no result here either, most likely because its weights (about 140 GB at 16 bits each) don't fit in the 48 GB of combined VRAM.

Think of it this way: Imagine a restaurant that needs to process orders. A smaller, more efficient kitchen can handle orders faster than a larger, more complex kitchen!
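Since processing speed and generation speed measure different phases of a request, a rough end-to-end estimate adds the two together. The sketch below uses the benchmark figures from this article. The request shape (1,000 input tokens, 200 output tokens) is an assumption for illustration, and the model ignores other overheads such as model loading and sampling.

```python
# (processing tokens/s, generation tokens/s) from the tables in this article.
SPEEDS = {
    "Llama3 8B Q4_K_M": (8545.0, 122.56),
    "Llama3 8B F16": (11094.51, 53.27),
    "Llama3 70B Q4_K_M": (905.38, 19.06),
}


def request_seconds(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt and then generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical request: 1,000 input tokens and 200 output tokens.
for name, (processing_tps, generation_tps) in SPEEDS.items():
    total = request_seconds(1000, 200, processing_tps, generation_tps)
    print(f"{name}: ~{total:.1f} s")
```

For a request like this, generation dominates the total, so the quantized 8B model comes out ahead overall even though F16 reads the prompt faster.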

FAQ

1. What is quantization?

Quantization is like simplifying a complex image by reducing its number of colors. By lowering the precision of the numbers that store an LLM's weights, quantization shrinks the model, which reduces memory use and can speed up token generation, usually at the cost of a small loss in accuracy.
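The idea can be shown with a toy example. The sketch below rounds a handful of made-up weights to signed 4-bit integers that share a single scale factor. It is a deliberately simplified scheme for illustration only, not the method used by any particular model format.

```python
def quantize_4bit(values):
    """Map floats to signed 4-bit integers in [-8, 7] with one shared scale."""
    scale = max(abs(v) for v in values) / 7 or 1.0  # avoid a zero scale
    quantized = [max(-8, min(7, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 4-bit integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # made-up example weights
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)

print(q)  # [1, -4, 7, 0, 2]
print([round(r, 2) for r in restored])
```

Each weight now needs only 4 bits plus a share of the scale factor, and the restored values are close to, but not exactly, the originals.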

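2. What are F16 and Q4_K_M?

- F16 is a floating-point format that uses 16 bits to represent each number.

- Q4_K_M is a quantization format that stores most weights in about 4 bits, keeping some parts of the model at higher precision, so the average is somewhat above 4 bits per weight.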

3. Why is Llama3 8B faster than Llama3 70B?

Llama3 8B is faster because it's smaller, requiring less memory and computing resources.

4. What if I need the power of Llama3 70B but want better speed?

Consider experimenting with different quantization levels, model pruning, or distributed inference.

Keywords

Llama3 8B, NVIDIA 4090 24GB x2, LLM, token generation speed, model processing speed, quantization, F16, Q4_K_M, performance, optimization, use cases, workarounds, deep learning.