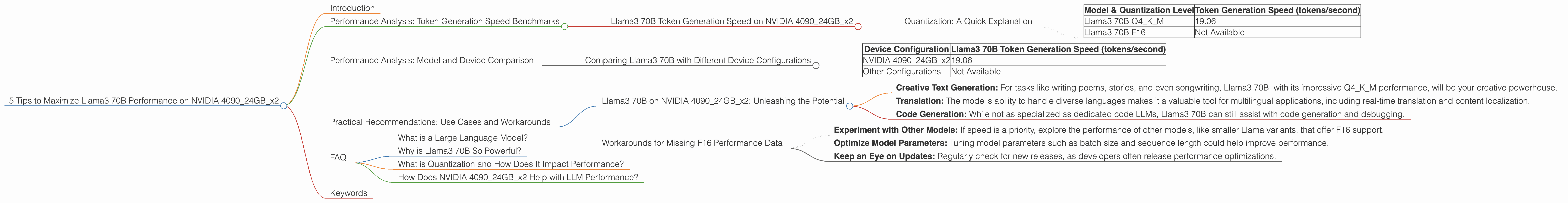

5 Tips to Maximize Llama3 70B Performance on NVIDIA 4090 24GB x2

Introduction

The world of large language models (LLMs) is buzzing with excitement. These powerful AI models are transforming industries from content creation to customer service. But harnessing the full potential of LLMs requires careful consideration of hardware resources. This article focuses on squeezing the most out of Llama3 70B on a beefy NVIDIA 4090 24GB x2 setup: two cards with 48 GB of VRAM between them. We'll look at performance metrics, share practical tips for optimization, and explore use cases that make this combination shine.

Performance Analysis: Token Generation Speed Benchmarks

Llama3 70B Token Generation Speed on NVIDIA 4090 24GB x2

Let's kick things off by analyzing the token generation speed of Llama3 70B on our dual NVIDIA 4090 24GB setup. We'll compare performance at different precision levels.

Quantization: A Quick Explanation

Think of quantization as a way to make a model "slimmer" by reducing the amount of data it needs to store and process, much like compressing a high-resolution image to fit on your phone. We'll focus on two popular formats:

- Q4_K_M: A 4-bit quantization format from llama.cpp. It's the "Goldilocks" option, shrinking the model to a fraction of its original size while keeping accuracy good enough for tasks like generating creative text or translating languages.

- F16: 16-bit half precision, which is close to the unquantized model. It's the original high-resolution image: you keep all the detail, but the file is several times larger and needs far more memory to run.

| Model & Quantization Level | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 19.06 |
| Llama3 70B F16 | Not Available |

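Note that no F16 result is available for Llama3 70B on this setup. The most likely reason is memory: at 16 bits per weight, a 70B model needs roughly 140 GB for its weights alone, far more than the 48 GB of VRAM the two cards provide.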
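To put rough numbers on the memory side of this comparison, here is a back-of-the-envelope sketch. The bits-per-weight figure for Q4_K_M (about 4.8) is an approximation assumed for illustration, and the parameter count is rounded to 70 billion, so treat the output as an estimate rather than a measurement.

```python
# Rough weight-memory estimate for a 70B-parameter model.
# The bits-per-weight values are approximations (assumptions), not measurements.

def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate storage needed for the weights, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

N_PARAMS = 70e9          # nominal "70B"; the exact count differs slightly
TOTAL_VRAM_GB = 2 * 24   # two 24 GB cards

for name, bpw in [("F16", 16.0), ("Q4_K_M", 4.8)]:
    size = weight_memory_gb(N_PARAMS, bpw)
    verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB of VRAM")
```

The estimate covers weights only. The KV cache and runtime buffers also need VRAM, so the real headroom for Q4_K_M is smaller than the difference suggests.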

Remember: Token generation speed is a crucial metric for understanding how quickly a model produces its responses. A higher speed means shorter response times and more efficient model usage. Think of it like a race: a faster model "sprints" through a response and delivers results sooner than a slower one.
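To translate tokens per second into waiting time, a small sketch like the following can help. It uses the 19.06 tokens/second figure from the table and covers only the generation phase; time spent processing the prompt is not included, and the function name is just an illustrative choice.

```python
# Convert a token generation speed into an approximate response time.

def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady speed (prompt processing excluded)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second

Q4_K_M_SPEED = 19.06  # tokens/second, from the benchmark table

for n in (100, 500, 2000):
    print(f"{n} tokens: ~{generation_seconds(n, Q4_K_M_SPEED):.1f} s")
```

At this speed a 500-token answer takes roughly 26 seconds, which is comfortable for interactive use but adds up quickly for long documents.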

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B with Different Device Configurations

It's always interesting to see how different hardware configurations stack up. Here's how our NVIDIA 4090 24GB x2 setup performs against other potential configurations for running Llama3 70B.

| Device Configuration | Llama3 70B Token Generation Speed (tokens/second) |
|---|---|
| NVIDIA 4090 24GB x2 | 19.06 |
| Other Configurations | Not Available |

Important: Numbers for other configurations are not available yet. We'll update this table with more benchmarks as they become available.

Practical Recommendations: Use Cases and Workarounds

Llama3 70B on NVIDIA 4090 24GB x2: Unleashing the Potential

Now that we've explored the performance landscape, let's delve into some practical recommendations for utilizing Llama3 70B on your NVIDIA 4090 24GB x2:

- Creative Text Generation: For tasks like writing poems, stories, and even songwriting, Llama3 70B, with its impressive Q4_K_M performance, will be your creative powerhouse.

- Translation: The model's ability to handle diverse languages makes it a valuable tool for multilingual applications, including real-time translation and content localization.

- Code Generation: While not as specialized as dedicated code LLMs, Llama3 70B can still assist with code generation and debugging.

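Important: Q4_K_M is a great starting point. F16 offers more accuracy, not more speed: its weights are several times larger, so a 70B model in F16 does not fit in 48 GB of VRAM and would have to be partly offloaded to system RAM, which is much slower. Consider F16 only when accuracy is paramount and you have the memory for it.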

Workarounds for Missing F16 Performance Data

F16 performance data is not currently available for Llama3 70B on our configuration. If you want to experiment anyway, here are some strategies:

- Experiment with Other Models: If you want to run at F16, explore smaller Llama variants, such as Llama3 8B, whose half-precision weights fit comfortably in GPU memory.

- Tune Inference Settings: Runtime settings such as batch size and context (sequence) length affect both speed and memory use, so adjusting them could help improve performance.

- Keep an Eye on Updates: Regularly check for new releases, as developers often release performance optimizations.

Remember: It's always a good idea to test different approaches and model configurations to find the best fit for your specific use case.
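To make the sequence-length point concrete, the sketch below estimates how much memory the KV cache takes at different context lengths. The architecture values (80 layers, 8 key/value heads, head dimension 128) are assumptions based on the published Llama3 70B configuration, and the cache is assumed to be stored in 16-bit values; check them against your own model file before relying on the numbers.

```python
# Estimate KV cache size as a function of context length.
# Architecture values are assumptions for Llama3 70B; verify against your model.
N_LAYERS = 80
N_KV_HEADS = 8        # grouped-query attention keeps this small
HEAD_DIM = 128
BYTES_PER_VALUE = 2   # 16-bit cache entries

def kv_cache_gib(context_tokens: int) -> float:
    """Approximate KV cache size in GiB for one sequence of context_tokens."""
    # Factor 2: one key vector and one value vector per layer, head and token.
    bytes_per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return context_tokens * bytes_per_token / 2**30

for ctx in (2048, 4096, 8192):
    print(f"context {ctx}: ~{kv_cache_gib(ctx):.2f} GiB of KV cache")
```

Under these assumptions the cache grows linearly with context length, so halving the context frees memory that can go toward keeping more of the model on the GPUs.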

FAQ

What is a Large Language Model?

A Large Language Model (LLM) is a sophisticated artificial intelligence (AI) system trained on a massive dataset of text and code. LLMs can understand and generate human-like text, answer questions, translate languages, and even create code, making them incredibly versatile tools.

Why is Llama3 70B So Powerful?

Llama3 70B is a large language model developed by Meta AI. The "70B" refers to its roughly 70 billion parameters, the learned weights that determine how the model behaves. A larger number of parameters generally means a more capable model, but also higher memory and compute requirements.

What is Quantization and How Does It Impact Performance?

Quantization is a technique used to reduce the size of an LLM by reducing the precision of its weights. Think of it like reducing the number of colors in an image to make it smaller. This reduces memory requirements and can improve inference speed.
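The idea can be illustrated with a toy example. The sketch below applies simple symmetric round-to-nearest quantization to one small block of made-up weights; it is a deliberate simplification for illustration and not the actual Q4_K_M scheme, which is more elaborate.

```python
# Toy 4-bit quantization of one block of weights (illustrative only;
# this is NOT the real Q4_K_M algorithm).

def quantize_block(weights, bits=4):
    """Map floats to small signed integers sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1                  # 7 for signed 4-bit codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes

def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]

block = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0, 0.48, -0.77]  # made-up weights
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_err = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"scale: {scale:.3f}, largest rounding error: {max_err:.3f}")
```

Each weight is now stored as a 4-bit code plus a shared scale, and the rounding error is the accuracy cost paid for the smaller size.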

How Does NVIDIA 4090 24GB x2 Help with LLM Performance?

The NVIDIA 4090 24GB x2 setup pairs two GeForce RTX 4090 graphics cards, each with 24 GB of memory, for 48 GB of VRAM in total. That combined memory and the cards' processing power are what make it possible to run a 4-bit quantized 70B model entirely on the GPUs.

Keywords

llama.cpp, Llama3, 70B, NVIDIA 4090, GPU, performance, token generation, quantization, Q4_K_M, F16, inference, speed, benchmarks, text generation, translation, code generation, AI, machine learning, natural language processing, deep learning