5 Tips to Maximize Llama3 70B Performance on NVIDIA 3090 24GB x2

Introduction

Large language models (LLMs) are evolving rapidly, and so is the need to optimize their performance on different hardware configurations. Llama3 is one of the most notable recent releases, a powerful LLM that pushes the boundaries of language comprehension and generation. For developers and enthusiasts who want to get the most out of it, understanding how it performs on specific hardware, such as a pair of NVIDIA 3090 24GB cards (NVIDIA 3090 24GB x2), is crucial. This article looks at the performance characteristics of Llama3 70B on this dual-GPU configuration and offers practical tips for maximizing your LLM experience.

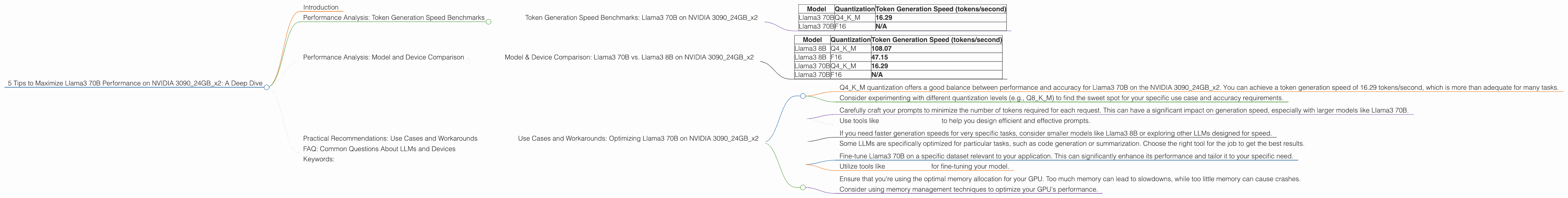

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 3090 24GB x2

Let's start with the heart of the matter – token generation speed. This is the metric that determines how quickly your LLM can generate text. In the world of LLMs, speed is king, especially when you're working with large models like Llama3 70B.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 70B | Q4_K_M | 16.29 |
| Llama3 70B | F16 | N/A |

Key Observations:

- Llama3 70B with Q4_K_M quantization on the dual NVIDIA 3090 24GB configuration achieves a token generation speed of 16.29 tokens/second. That is a strong result for a 70B-parameter model running on consumer GPUs.

- F16 (unquantized half-precision) results are not available for Llama3 70B on this hardware. The most likely reason is memory: at 2 bytes per parameter, the F16 weights alone need roughly 140 GB, far more than the 48 GB of combined VRAM. Gaps in the available benchmark data could also play a part.

What does this mean for you?

Imagine a race car. A faster car (higher token generation speed) reaches the finish line (a complete response) sooner. Llama3 70B with Q4_K_M quantization on the NVIDIA 3090 24GB x2 is a pretty fast car: at 16.29 tokens/second, a 500-token response takes about 31 seconds. It's not the absolute fastest, but it's a solid performer, and the speed matters most when you're generating long-form content.

Let's break down "quantization" for those who aren't in the know.

Think of quantization like adjusting the resolution of a photograph. A high-resolution photo has a lot of detail, but it takes up more storage space. A low-resolution photo is smaller and faster to load, but some detail is lost. Similarly, quantization in LLMs involves reducing the number of bits used to represent each weight in the model. This can reduce the model's size, making it faster and more efficient, but it might lead to a small decrease in accuracy.
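
To put numbers on the photo analogy, here is a rough sketch of how bits per weight translate into model size. The parameter count (about 70.6 billion) and the average of roughly 4.85 bits per weight for Q4_K_M are assumptions for illustration, not figures from the benchmark data, and the estimate covers weights only.

```python
# Rough weight-only size estimate: parameters * bits_per_weight / 8 bytes.
# Assumptions (not from the benchmark data): ~70.6e9 parameters for Llama3 70B,
# 16 bits per weight for F16, and ~4.85 bits per weight as an approximate
# average for Q4_K_M.
PARAMS_70B = 70.6e9
TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards, treated as 48 GB for simplicity


def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in gigabytes (1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, bits in [("F16", 16.0), ("Q4_K_M", 4.85)]:
    size = weight_size_gb(PARAMS_70B, bits)
    verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the F16 weights come to about 141 GB and the Q4_K_M weights to about 43 GB, which is why only the quantized 70B model is practical on two 24 GB cards.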

Performance Analysis: Model and Device Comparison

Model & Device Comparison: Llama3 70B vs. Llama3 8B on NVIDIA 3090 24GB x2

Let's explore how Llama3 70B stacks up against its smaller sibling, Llama3 8B, on the same NVIDIA 3090 24GB x2 configuration.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4_K_M | 108.07 |
| Llama3 8B | F16 | 47.15 |
| Llama3 70B | Q4_K_M | 16.29 |
| Llama3 70B | F16 | N/A |

Key Observations:

- As expected, Llama3 8B outperforms Llama3 70B in token generation speed. At Q4_K_M it is about 6.6 times faster (108.07 vs. 16.29 tokens/second), and even the unquantized F16 8B model, at 47.15 tokens/second, is nearly three times faster than the quantized 70B model. The difference comes largely from the smaller size of the 8B model: far fewer weights have to be read for every generated token.

- Larger models usually mean a trade-off between speed and output quality. While Llama3 70B is slower to generate tokens, it has the potential to produce more sophisticated and nuanced responses.
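
To see what these speeds mean in practice, the short sketch below converts the figures from the table into wall-clock time. The 500-token response length is a hypothetical value chosen for illustration, and the calculation ignores prompt processing time, which the table does not cover.

```python
# Token generation speeds (tokens/second) copied from the table above.
speeds = {
    ("Llama3 8B", "Q4_K_M"): 108.07,
    ("Llama3 8B", "F16"): 47.15,
    ("Llama3 70B", "Q4_K_M"): 16.29,
}


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate `tokens` tokens at a steady generation speed."""
    return tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length

for (model, quant), tps in speeds.items():
    print(f"{model} {quant}: {seconds_for(RESPONSE_TOKENS, tps):.1f} s")

# How much faster the 8B model is than the 70B model at the same quantization.
ratio = speeds[("Llama3 8B", "Q4_K_M")] / speeds[("Llama3 70B", "Q4_K_M")]
print(f"8B is about {ratio:.1f}x faster than 70B at Q4_K_M")
```

For a 500-token answer this works out to roughly 5 seconds on the 8B Q4_K_M model versus roughly 31 seconds on the 70B Q4_K_M model, which is the gap to weigh against the larger model's output quality.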

Think of it like this:

Imagine you have two bicycles. One is lightweight and nimble (Llama3 8B), great for quick errands and navigating crowded streets. The other is a heavy-duty mountain bike (Llama3 70B), designed for challenging terrain and carrying heavy loads. Each bike has its strengths depending on the task at hand.

Practical Recommendations: Use Cases and Workarounds

Use Cases and Workarounds: Optimizing Llama3 70B on NVIDIA 3090 24GB x2

Now that we have a better understanding of Llama3 70B's performance, let's explore some practical recommendations for using it effectively on your NVIDIA 3090 24GB x2 setup.

1. Leverage Q4_K_M Quantization:

- Q4_K_M quantization offers a good balance between performance and accuracy for Llama3 70B on the NVIDIA 3090 24GB x2. You can achieve a token generation speed of 16.29 tokens/second, which is more than adequate for many tasks.

- Consider experimenting with other quantization levels to find the sweet spot for your use case and accuracy requirements. On this setup that mostly means lower-bit variants: an 8-bit format such as Q8_0 needs roughly 70 GB or more for the 70B weights alone, which exceeds the 48 GB of combined VRAM.

2. Optimize Your Prompt Engineering:

- Craft your prompts to minimize the number of tokens each request has to process and generate. At 16.29 tokens/second, every token you avoid generating saves measurable time, so concise prompts and explicit length limits pay off with a large model like Llama3 70B.

- Apply prompt engineering techniques, such as clear instructions and a stated output format or length, to keep prompts efficient and effective.
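
One way to make the length limit concrete is to turn a latency target into an output-token budget. The sketch below does this with the benchmarked generation speed. The helper name and the latency targets are hypothetical, and prompt processing time is ignored because the benchmark above does not cover it.

```python
import math

GEN_SPEED_TPS = 16.29  # Llama3 70B Q4_K_M generation speed from the benchmark


def max_output_tokens(latency_budget_s: float,
                      tokens_per_second: float = GEN_SPEED_TPS) -> int:
    """Largest number of generated tokens that fits in the latency budget.

    Ignores prompt processing time, so treat the result as an upper bound.
    """
    return math.floor(latency_budget_s * tokens_per_second)


# Hypothetical latency targets, in seconds.
for budget in (5, 10, 30):
    print(f"{budget:>2} s budget -> at most {max_output_tokens(budget)} tokens")
```

The resulting number can be used as the maximum-output-tokens setting of whatever inference tool you run, or written into the prompt itself as a length instruction.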

3. Explore Alternatives:

- If you need faster generation speeds for very specific tasks, consider smaller models like Llama3 8B or exploring other LLMs designed for speed.

- Some LLMs are specifically optimized for particular tasks, such as code generation or summarization. Choose the right tool for the job to get the best results.

4. Fine-tuning for Specific Use Cases:

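- Fine-tune Llama3 70B on a dataset relevant to your application. This can significantly improve its results on that task and tailor it to your specific needs. With 48 GB of VRAM, this realistically means a parameter-efficient method applied to a quantized model rather than full fine-tuning.

- Use tooling from the Hugging Face ecosystem to fine-tune your model.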

5. GPU Memory Management:

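- Make sure the model weights and the context (KV) cache fit within the 48 GB of combined VRAM. If they don't, you'll hit out-of-memory errors, and if part of the model spills over to system RAM, generation slows down considerably.

- Keep the context length no larger than you need, and split the model evenly across both GPUs so that each card keeps some headroom.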
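
As a rough check on memory headroom, the sketch below estimates the size of the KV cache for a given context length and adds it to the weights. The architecture values (80 layers, 8 key/value heads, head dimension 128), the 39.9 GiB weight size, and the 2 GiB overhead allowance are assumptions for illustration and are not taken from the benchmark data.

```python
# Assumed Llama3 70B architecture values (not stated in this article).
N_LAYERS = 80
N_KV_HEADS = 8
HEAD_DIM = 128
BYTES_PER_VALUE = 2  # F16 cache entries

GIB = 1024 ** 3


def kv_cache_bytes(n_ctx: int) -> int:
    """Bytes needed to cache keys and values for n_ctx tokens."""
    # Factor 2: one key vector and one value vector per layer and KV head.
    return 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE * n_ctx


def fits(weights_gib: float, n_ctx: int,
         vram_gib: float = 48.0, overhead_gib: float = 2.0) -> bool:
    """True if weights + KV cache + a hypothetical overhead fit in VRAM."""
    total = weights_gib + kv_cache_bytes(n_ctx) / GIB + overhead_gib
    return total <= vram_gib


WEIGHTS_GIB = 39.9  # hypothetical size of the Q4_K_M weights

for n_ctx in (2048, 8192):
    cache_gib = kv_cache_bytes(n_ctx) / GIB
    print(f"n_ctx={n_ctx}: KV cache ~{cache_gib:.2f} GiB, "
          f"fits={fits(WEIGHTS_GIB, n_ctx)}")
```

Under these assumptions an 8192-token context adds about 2.5 GiB on top of the weights, so the model fits, but with only a few GiB to spare across the two cards.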

FAQ: Common Questions About LLMs and Devices

Q: What is a large language model (LLM)?

A: A large language model is a type of artificial intelligence (AI) that can understand and generate human-like text. LLMs are trained on massive datasets of text, allowing them to learn patterns and relationships in language. This enables them to perform tasks like writing stories, translating languages, and summarizing information.

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by representing its weights using fewer bits. This can significantly improve inference speed and memory usage, but it may also lead to a small decrease in accuracy.

Q: How do I choose the right LLM and hardware for my needs?

A: The best LLM and hardware configuration depend on your specific use case and resource constraints. Consider factors like the complexity of your tasks, the size of your data, and the performance requirements of your application. Larger models offer potential for higher accuracy but often require more powerful hardware.

Q: Will LLMs replace human writers?

A: While LLMs are impressive tools, it's unlikely they will completely replace human writers. LLMs can assist and automate certain aspects of writing, but they often lack the creativity, critical thinking, and emotional intelligence that characterize human writing.
